Kimi K2.6 is Moonshot AI’s latest open-weights model. Compared with Kimi K2.5, it is better at long-horizon coding, more reliable in agent-style workflows, stronger at tool use, and much better at handling complex tasks that need planning, decomposition, and execution across many steps.

If K2.5 already looked promising, K2.6 feels like the version where the idea becomes practical. It keeps the same broad architecture, but the gains are not cosmetic. They show up in coding benchmarks, autonomous research tasks, and in the way the model coordinates multiple tool-based actions without losing track of the goal.

What Kimi K2.6 is

Kimi K2.6 is an open-source, natively multimodal, agentic model. It accepts text, image, and video input and produces text output, and it keeps the long context window of roughly 256k tokens. Architecturally, it remains a Mixture of Experts model with 1 trillion total parameters, of which 32 billion are active per token. That means Moonshot is not reinventing the whole stack here. Instead, it is improving training, orchestration, and practical task execution on top of an already capable foundation.
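To make the sparsity concrete, here is a quick back-of-the-envelope calculation that uses only the two parameter counts quoted above. The variable names are illustrative, and the result says nothing about memory or latency, only about the share of weights used per token.

```python
# Figures quoted in the text above; the names are illustrative.
TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion total parameters
ACTIVE_PARAMS = 32_000_000_000     # 32 billion active parameters per token

# Share of the model's weights that participate in any single token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"Active share per token: {active_fraction:.1%}")  # prints 3.2%
```

In other words, each token touches only a small slice of the full model, which is why a trillion-parameter model can still be served at a cost closer to that of a much smaller dense model.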

A new model version is not better simply because it is larger. In K2.6, the improvement is more about execution quality than headline size. It is designed for coding, multimodal understanding, tool use, and agent workflows that can run for a long time without collapsing into repetition or hallucination.

Why Kimi K2.6 is better than K2.5

The clearest difference is in agentic performance. On Artificial Analysis’ GDPval-AA benchmark, which focuses on knowledge work tasks performed in an agent loop with tools, Kimi K2.6 reached an Elo score of 1520. K2.5 was at 1309. That is a jump of 211 points.
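For a rough sense of what that gap means, the sketch below applies the conventional logistic Elo formula with a 400-point scale. Whether GDPval-AA derives its scores in exactly this way is an assumption, so the result is an illustration rather than a reported figure.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard logistic Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))

# Ratings quoted in the text above.
k26_rating, k25_rating = 1520, 1309

gap = k26_rating - k25_rating
preference = elo_expected_score(k26_rating, k25_rating)
print(f"Gap: {gap} points, expected score for K2.6: {preference:.2f}")
```

Under that assumption, K2.6 would be expected to come out ahead of K2.5 in roughly three out of four head-to-head comparisons.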

There is also a meaningful improvement in hallucination behavior. On the AA-Omniscience Index setup, K2.6 reportedly cut its hallucination rate to 39 percent, down from 65 percent for K2.5, a relative reduction of 40 percent. In plain language, the new model is more willing to admit uncertainty instead of inventing an answer. For enterprise and research use, that is often more valuable than a small gain on a static benchmark.

The coding side also looks stronger. K2.6 is positioned for long-horizon engineering tasks across Python, Go, and Rust, plus front-end work, DevOps, and optimization tasks. In benchmark reporting and vendor testing, the model shows better instruction following, more stable long-context behavior, and a higher success rate when using tools. On SWE-Bench-style evaluations and Terminal-Bench-style agent tasks, it competes much more seriously with top proprietary models than K2.5 did.

There is one caveat. K2.6 uses a lot of tokens. That is partly the cost of stronger reasoning and more extensive tool-mediated work. So yes, it is better, but part of the improvement comes from spending more compute on difficult tasks. For teams comparing raw quality only, that may be acceptable. For teams optimizing latency and cost, it means routing and workload design still matter.
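One way to act on that caveat is a simple router that reserves the heavier model for work that needs it. The following is a minimal sketch: the `Task` fields, the step threshold, and both model identifiers are hypothetical placeholders, not names taken from Moonshot's documentation.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    needs_tools: bool      # does the task require tool calls?
    expected_steps: int    # rough estimate of how many steps it will take


# Hypothetical identifiers, used only as labels in this sketch.
HEAVY_MODEL = "kimi-k2.6"
LIGHT_MODEL = "small-fast-model"


def choose_model(task: Task, step_threshold: int = 5) -> str:
    """Send tool-using or multi-step work to the heavier, token-hungry model."""
    if task.needs_tools or task.expected_steps > step_threshold:
        return HEAVY_MODEL
    return LIGHT_MODEL


quick = Task("Rename this variable", needs_tools=False, expected_steps=1)
deep = Task("Migrate the build system", needs_tools=True, expected_steps=40)
print(choose_model(quick), choose_model(deep))
```

A real router would likely use measured token counts and latency budgets rather than a hand-set threshold, but the shape of the decision is the same.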

Where the upgrade really shows up

Long-horizon coding

K2.6 is aimed at workflows where the model needs to inspect a codebase, form a plan, edit files, run tools, interpret failures, and continue over many iterations. This is where many models still become brittle. They may write good code in isolation, but they struggle to sustain coherence over long sessions.

K2.6 appears to improve exactly that. Moonshot emphasizes long-running coding sessions, strong tool calling, and reliable execution over thousands of steps. That makes it more relevant for coding agents and autonomous developer assistants than a model that only shines in short benchmark prompts.
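The workflow described in this section (plan, edit, run tools, interpret failures, continue) can be sketched as a small loop. This is a generic illustration, not Moonshot's implementation: `run_agent`, `fake_model`, and `fake_tests` are hypothetical names, and the model and test runner are replaced by toy stand-ins so the example runs on its own.

```python
from typing import Callable


def run_agent(
    propose_patch: Callable[[str, list[str]], str],
    run_tests: Callable[[str], tuple[bool, str]],
    goal: str,
    max_iterations: int = 10,
) -> tuple[bool, list[str]]:
    """Edit-and-test loop: feed each failure log back in until tests pass."""
    history: list[str] = []
    for _ in range(max_iterations):
        patch = propose_patch(goal, history)   # model call (stubbed below)
        passed, log = run_tests(patch)         # tool call (stubbed below)
        if passed:
            return True, history
        history.append(log)                    # keep the failure as context
    return False, history


# Toy stand-ins so the sketch is runnable without a model or a repository.
def fake_model(goal: str, history: list[str]) -> str:
    return f"attempt-{len(history) + 1}"


def fake_tests(patch: str) -> tuple[bool, str]:
    ok = patch == "attempt-3"
    return ok, ("" if ok else f"{patch} failed")


success, failures = run_agent(fake_model, fake_tests, "fix the bug")
print(success, failures)
```

The point of the sketch is the growing `history`: coherence over a long session depends on the model using accumulated failures well, which is exactly where brittle models tend to break down.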

Better multimodal work

K2.5 added vision, but K2.6 leans further into multimodal workflows. It can work from image and video input and use those inputs in coding-driven design tasks. That means a prompt can include a screenshot, interface mockup, or product idea and the model can turn it into a more complete front-end or lightweight full-stack output. This is not just image understanding for classification. It is closer to visual specification plus implementation.
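As an illustration of what a screenshot-to-code prompt might look like, the sketch below builds a request body in the widely used OpenAI-style chat format, with the image passed as a base64 data URL. Whether a K2.6 endpoint accepts exactly this shape is an assumption, and the model identifier `kimi-k2.6` and the helper name are hypothetical. No network call is made.

```python
import base64
import json


def build_screenshot_request(
    image_bytes: bytes,
    instruction: str,
    model: str = "kimi-k2.6",  # hypothetical identifier
) -> dict:
    """Pair a PNG screenshot with a text instruction in one user message."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = "data:image/png;base64," + encoded
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": data_url}},
                    {"type": "text", "text": instruction},
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real screenshot file.
request = build_screenshot_request(
    b"placeholder image bytes",
    "Turn this mockup into a responsive HTML page.",
)
print(json.dumps(request, indent=2)[:200])
```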

Lower hallucination and more abstention

One of the more underrated improvements in K2.6 is not how often it answers, but how often it refuses to fake certainty. For search, research, and coding tasks, a model that knows when to stop and verify is usually more useful than one that answers everything confidently. K2.6’s lower hallucination rate suggests better practical reliability, especially in agent loops that depend on external tools.

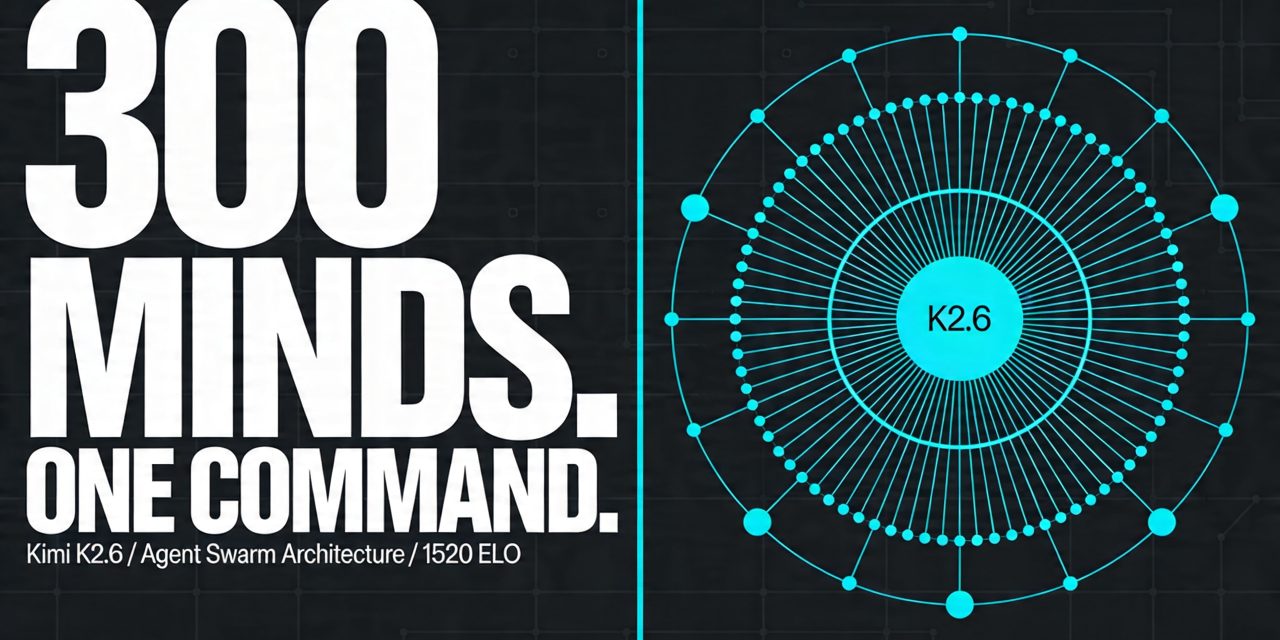

What Agent Swarm means

Agent Swarm is the most distinctive part of the K2.6 story. Instead of treating one model instance as a single worker that handles a task sequentially, Agent Swarm breaks a larger task into many smaller subtasks and distributes them across multiple specialized sub-agents. K2.6 then coordinates those agents, tracks intermediate outputs, and merges them into a final result.

Moonshot describes K2.6 as scaling to 300 sub-agents and around 4,000 coordinated steps in one autonomous run. That is a major increase over K2.5, which was presented with support for around 100 sub-agents and 1,500 steps: three times the sub-agents and roughly 2.7 times the steps. The core idea is horizontal scaling. Rather than asking one agent to do everything in order, the system can let multiple agents work in parallel on search, analysis, writing, coding, formatting, or verification.

How Agent Swarm works in practice

Imagine you ask for a full product launch package. A single AI agent might research competitors, draft the copy, create a landing page, build a slide deck, and prepare a spreadsheet one after another. That works, but it is slow and fragile.

In an Agent Swarm setup, one cluster of agents can research competitors, another can write copy, another can generate front-end code, and another can structure documentation. A coordinating layer assigns tasks, checks progress, and reroutes work if one branch fails. The result is not just faster parallelism. It is better decomposition of complex work.
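The coordinating layer described above can be sketched with Python's `asyncio`. This is a toy illustration of the pattern (parallel sub-agents, a retry when a branch fails, and a merge step), not Moonshot's Agent Swarm implementation. All function names and the stubbed workers are hypothetical.

```python
import asyncio


async def run_with_retry(name, worker, retries=1):
    """Run one sub-agent; if it fails, reassign the subtask up to `retries` times."""
    for attempt in range(retries + 1):
        try:
            return name, await worker(attempt)
        except Exception:
            if attempt == retries:
                return name, None  # give up on this branch, keep the others


async def coordinate(subtasks):
    """Launch all sub-agents in parallel and merge their outputs by name."""
    results = await asyncio.gather(
        *(run_with_retry(name, worker) for name, worker in subtasks.items())
    )
    return dict(results)


# Stub sub-agents standing in for model-backed workers.
async def research(attempt):
    await asyncio.sleep(0)
    return "competitor notes"


async def write_copy(attempt):
    if attempt == 0:
        raise RuntimeError("first attempt failed")  # simulate a failed branch
    return "landing page copy"


async def build_frontend(attempt):
    return "<html>...</html>"


merged = asyncio.run(coordinate({
    "research": research,
    "copy": write_copy,
    "frontend": build_frontend,
}))
print(merged)
```

Even in this toy form, the design choice is visible: a failure in one branch is retried in isolation and does not force the other branches to restart, which is what makes the decomposition more robust than a single sequential agent.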

This is why benchmark names such as BrowseComp Agent Swarm and DeepSearchQA matter here. They are not just testing trivia recall. They test whether the model can search, use tools, synthesize information, and complete multi-step workflows with a degree of autonomy.

Why Agent Swarm matters more than a benchmark win

Most people do not need an AI model because it scores a few points higher on a leaderboard. They need it because a real task involves too many moving parts for a single prompt. Research pipelines, software maintenance, report generation, multi-format content creation, and ongoing background agents all fit this pattern.

K2.6 is interesting because it tries to make that team structure part of the product rather than something developers have to build entirely themselves.