ZeroClaw positions itself as a personal AI assistant you run on your own infrastructure. The project does not present the assistant as a cloud service with a thin local client. It places the control plane, workspace, channels, tools, memory, and policy controls on your own devices. For people who want a single-user agent that stays available across messaging apps, terminal sessions, browser workflows, and edge hardware, that architecture is the core proposition.

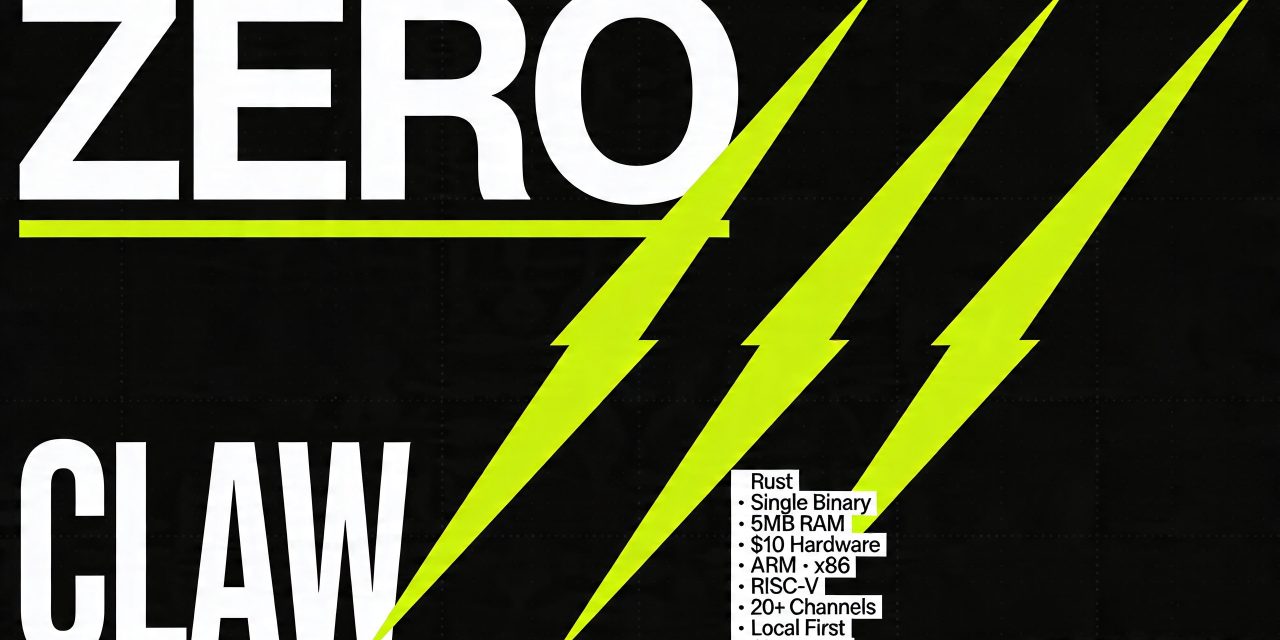

The technical profile is unusually lean. ZeroClaw is written in Rust, ships as a single binary, and claims a runtime footprint below 5 MB of RAM for common CLI and status workflows on release builds. The project states it can run on $10 hardware, supports ARM, x86, and RISC-V, and avoids heavyweight runtime dependencies. In a market where many agent stacks still depend on Python or Node.js layers, that design choice shifts the cost model. It reduces memory pressure, shortens cold starts, and widens the range of environments where a personal assistant can stay always on.

What ZeroClaw actually is

ZeroClaw is an assistant runtime with a local-first gateway, a web dashboard, tool execution, memory management, scheduled jobs, event triggers, hardware connectors, and multi-channel messaging support. The assistant can answer on channels you already use, including WhatsApp, Telegram, Slack, Discord, Signal, iMessage, Matrix, IRC, Email, Bluesky, Nostr, Mattermost, Nextcloud Talk, DingTalk, Lark, QQ, Reddit, LinkedIn, Twitter, MQTT, WeChat Work, WebSocket, and more.

That breadth changes the role of the system. ZeroClaw does not force you into one interface. It can become a single personal agent that follows you across communication surfaces and keeps the same memory, tools, and policy constraints. For a user, that means less interface switching. For an operator, that means one control plane instead of separate bots, automations, and scripts for each channel.

Why the personal agent model matters

The phrase "personal agent" is often used loosely. In ZeroClaw’s case, it refers to a single-user assistant with a persistent workspace and local context. The project explicitly says the gateway is only the control plane and the product is the assistant. That distinction is useful. It separates infrastructure from experience.

A personal agent needs four things to be credible. It needs persistent memory, access to your working channels, policy boundaries for autonomous action, and enough performance to stay always available. ZeroClaw implements all four. The workspace lives under a local directory structure. Prompt files such as IDENTITY.md, USER.md, MEMORY.md, AGENTS.md, and SOUL.md define personality, user context, long-term facts, and operating conventions. Skills can be added at the workspace level. Auth profiles are stored locally and encrypted at rest. Scheduled tasks and SOP-driven workflows let the assistant act when triggered by time, webhooks, MQTT, or peripherals.

That architecture facilitates continuous operation. The assistant can observe, wait, act with approval, or act autonomously within policy limits. Those autonomy levels are exposed as ReadOnly, Supervised, and Full. The default is Supervised.
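
The three autonomy levels can be pictured as a small policy gate in front of every action. The sketch below is illustrative only: the level names and the Supervised default come from the project description, while `Risk`, `Decision`, and `decide` are hypothetical names invented here and do not reflect ZeroClaw's actual types.

```rust
// Hypothetical sketch. Only the three level names and the Supervised default
// come from the article; Risk, Decision, and decide() are illustrative.

#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
pub enum AutonomyLevel {
    ReadOnly,
    #[default]
    Supervised,
    Full,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Risk {
    Low,
    Medium,
    High,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Decision {
    Allow,
    AskApproval,
    Deny,
}

/// Decide what to do with a proposed action under a given autonomy level.
/// Assumption: other limits (allowlists, rate limits, cost caps) are
/// enforced separately and still apply at every level, including Full.
pub fn decide(level: AutonomyLevel, mutates_state: bool, risk: Risk) -> Decision {
    match level {
        // ReadOnly may observe but never change anything.
        AutonomyLevel::ReadOnly if mutates_state => Decision::Deny,
        AutonomyLevel::ReadOnly => Decision::Allow,
        // Supervised runs low-risk actions and asks a human for the rest.
        AutonomyLevel::Supervised => match risk {
            Risk::Low => Decision::Allow,
            Risk::Medium | Risk::High => Decision::AskApproval,
        },
        // Full acts without asking.
        AutonomyLevel::Full => Decision::Allow,
    }
}
```

Under these assumptions, `decide(AutonomyLevel::default(), true, Risk::High)` yields `Decision::AskApproval`, which matches the idea that a freshly configured assistant asks before doing anything risky.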

Low-resource deployment is important

ZeroClaw’s strongest differentiator is its small-footprint deployment. The repository describes a lean runtime by default, fast cold starts, and cost-efficient deployment on low-end boards and small cloud instances. It also notes that common workflows run in a few megabytes of memory on release builds.

The broader implication is operational rather than aesthetic. If you can run an assistant on cheap hardware, you can place it closer to devices, keep it local, and scale out specialized agents without treating RAM as the main bottleneck. Source material around the project contrasts this with heavier agent frameworks that bring a Node.js or Python runtime into the stack. ZeroClaw’s static binary approach reduces that overhead.

The project has pre-built binaries for Linux on x86_64, aarch64, and armv7, for macOS on x86_64 and aarch64, and for Windows on x86_64. That matters for practical adoption. Cross-platform support is only useful if installation friction stays low. ZeroClaw’s preferred path is an onboarding flow in the terminal that configures workspace, provider, channels, and authentication step by step on macOS, Linux, and Windows through WSL2.

How ZeroClaw handles models and providers

ZeroClaw is provider-agnostic. It supports multiple LLM providers and lets you swap providers, channels, tools, memory backends, and tunnels through pluggable traits. The repository references support for 20+ LLM backends, OpenAI-compatible providers, model failover, retry logic, model routing, and authentication profile rotation between OAuth and API-key-based setups.

That flexibility addresses a common structural risk in agent deployments. The risk is not only price volatility. It is policy volatility, credential restrictions, model deprecations, and uneven tool reliability across providers. ZeroClaw’s architecture acknowledges that providers are unstable dependencies. It therefore treats provider selection as a replaceable layer rather than a product identity.
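
To make the "replaceable layer" idea concrete, here is a minimal sketch of retry and failover behind a trait. The names `Provider`, `ProviderError`, `FailoverChain`, and `StubProvider` are assumptions made for illustration and are not taken from the ZeroClaw codebase. A real provider would perform network calls, which the stub replaces with a canned reply.

```rust
// Hypothetical sketch of trait-based provider failover. None of these names
// are taken from ZeroClaw; they only illustrate the pattern.

#[derive(Debug)]
pub struct ProviderError(pub String);

pub trait Provider {
    fn name(&self) -> &str;
    fn complete(&self, prompt: &str) -> Result<String, ProviderError>;
}

/// Tries providers in order, retrying each one a fixed number of times.
pub struct FailoverChain {
    providers: Vec<Box<dyn Provider>>,
    attempts_per_provider: usize,
}

impl FailoverChain {
    pub fn new(providers: Vec<Box<dyn Provider>>, attempts_per_provider: usize) -> Self {
        Self { providers, attempts_per_provider }
    }

    /// Returns the first successful completion, or every error collected
    /// along the way if all providers fail.
    pub fn complete(&self, prompt: &str) -> Result<String, Vec<ProviderError>> {
        let mut errors = Vec::new();
        for provider in &self.providers {
            for _ in 0..self.attempts_per_provider {
                match provider.complete(prompt) {
                    Ok(text) => return Ok(text),
                    Err(e) => {
                        errors.push(ProviderError(format!("{}: {}", provider.name(), e.0)))
                    }
                }
            }
        }
        Err(errors)
    }
}

/// Stand-in provider for the example: replies with a canned string,
/// or always fails when `reply` is None.
pub struct StubProvider {
    pub name: String,
    pub reply: Option<String>,
}

impl Provider for StubProvider {
    fn name(&self) -> &str {
        &self.name
    }

    fn complete(&self, _prompt: &str) -> Result<String, ProviderError> {
        self.reply
            .clone()
            .ok_or_else(|| ProviderError("unavailable".to_string()))
    }
}
```

Because callers depend only on the trait, replacing a provider means adding another implementation to the chain and leaves the calling code unchanged.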

The repository also includes a clear warning on credential use. It notes that Anthropic updated its authentication and credential use terms on 2026-02-19 and states that Claude Code OAuth tokens intended for Claude Code and Claude.ai should not be used in other products or services. That kind of note is operationally significant. It shows that personal agent infrastructure sits inside a moving compliance perimeter. Flexibility helps, but it does not remove the need to track provider terms closely.

Channels and tools

ZeroClaw supports a long list of channels, but channels alone do not make an agent useful. The practical value comes from the tool layer. The project includes first-class tools for shell access, file I/O, browser control, git operations, web fetch and search, MCP, Jira, Notion, Google Workspace, Microsoft 365, LinkedIn, Composio, Pushover, weather access, scheduling, memory operations, and more than 70 additional tools. It also supports delegate- and swarm-style functions for multi-agent orchestration.

This matters because a personal agent becomes valuable when it can cross the boundary from answering to doing. It can inspect files, schedule recurring work, browse, fetch, summarize, update systems, and route outputs to your existing channels. For a technical user, that allows a single assistant to cover tasks that would otherwise be split across shell scripts, browser automations, SaaS connectors, and chatbots.

The platform also includes lifecycle hooks that can intercept and modify LLM calls, tool executions, and messages at multiple stages. That gives advanced users a way to shape behavior without rewriting the core runtime.
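
A hook of this kind can be sketched as a trait that sees a tool call before it runs and may rewrite or block it. The sketch covers only one stage (before tool execution), and every name in it (`Hook`, `ToolCall`, `HookOutcome`, `TruncateArgs`, `DenyTool`, `run_before_tool`) is hypothetical. The project's real hook API may look quite different.

```rust
// Hypothetical sketch of a "before tool execution" hook stage.
// These names are illustrative and are not ZeroClaw's hook API.

#[derive(Debug, Clone, PartialEq, Eq)]
pub enum HookOutcome {
    Continue,
    Block(String),
}

#[derive(Debug, Clone)]
pub struct ToolCall {
    pub tool: String,
    pub args: String,
}

pub trait Hook {
    /// May modify the call in place, or block it with a reason.
    fn before_tool(&self, call: &mut ToolCall) -> HookOutcome;
}

/// Modifying hook: caps the argument string at `max_chars` characters.
pub struct TruncateArgs {
    pub max_chars: usize,
}

impl Hook for TruncateArgs {
    fn before_tool(&self, call: &mut ToolCall) -> HookOutcome {
        // Truncate by characters so multi-byte text is never split mid-char.
        call.args = call.args.chars().take(self.max_chars).collect();
        HookOutcome::Continue
    }
}

/// Blocking hook: refuses any call to the named tool.
pub struct DenyTool {
    pub name: String,
}

impl Hook for DenyTool {
    fn before_tool(&self, call: &mut ToolCall) -> HookOutcome {
        if call.tool == self.name {
            HookOutcome::Block(format!("tool '{}' is disabled by hook", call.tool))
        } else {
            HookOutcome::Continue
        }
    }
}

/// Runs hooks in order and stops at the first one that blocks.
pub fn run_before_tool(hooks: &[Box<dyn Hook>], call: &mut ToolCall) -> HookOutcome {
    for hook in hooks {
        if let HookOutcome::Block(reason) = hook.before_tool(call) {
            return HookOutcome::Block(reason);
        }
    }
    HookOutcome::Continue
}
```

The same shape would extend to LLM calls and messages by adding further trait methods, one per stage.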

Security is central because messaging surfaces are hostile by default

ZeroClaw’s security posture starts from a realistic assumption. Inbound direct messages are untrusted input. That is an important baseline for any assistant that connects to public or semi-public channels. The default behavior is pairing-based access control. Unknown senders receive a short pairing code and the bot does not process their message until you approve the code locally. Accepting public inbound direct messages requires an explicit opt-in in the configuration.
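
The pairing flow described above can be modeled as a small state machine. This is a sketch under stated assumptions: `PairingGate`, `Inbound`, and the counter-based code generator are invented for illustration. A real implementation would draw codes from a cryptographically secure random source and would probably expire them.

```rust
use std::collections::{HashMap, HashSet};

// Hypothetical sketch of pairing-based access control. The names and the
// counter-based codes are illustrative; real codes should be random.

#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Inbound {
    /// Sender is approved: hand the message to the assistant.
    Process,
    /// Sender is unknown: reply with this code and drop the message.
    PairingRequired(String),
}

#[derive(Default)]
pub struct PairingGate {
    approved: HashSet<String>,
    pending: HashMap<String, String>, // sender -> pairing code
    next_code: u32,
}

impl PairingGate {
    pub fn new() -> Self {
        Self::default()
    }

    /// Called for every inbound direct message.
    pub fn on_message(&mut self, sender: &str) -> Inbound {
        if self.approved.contains(sender) {
            return Inbound::Process;
        }
        // Reuse the pending code so repeated messages do not mint new ones.
        if let Some(code) = self.pending.get(sender) {
            return Inbound::PairingRequired(code.clone());
        }
        // Placeholder generator: NOT secure, used only to keep the sketch small.
        let code = format!("{:06}", self.next_code);
        self.next_code += 1;
        self.pending.insert(sender.to_string(), code.clone());
        Inbound::PairingRequired(code)
    }

    /// Called locally by the operator. Returns false for unknown codes.
    pub fn approve(&mut self, code: &str) -> bool {
        let sender = self
            .pending
            .iter()
            .find(|(_, c)| c.as_str() == code)
            .map(|(s, _)| s.clone());
        match sender {
            Some(s) => {
                self.pending.remove(&s);
                self.approved.insert(s);
                true
            }
            None => false,
        }
    }
}
```

The important property is that approval happens out of band: nothing the unknown sender writes in the channel can move them from `pending` to `approved`.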

The system then layers additional controls. The repository lists workspace isolation, path traversal blocking, command allowlisting, forbidden paths such as /etc, /root, and ~/.ssh, Linux Landlock support, Bubblewrap, rate limits on actions per hour, configurable cost caps per day, interactive approval for medium- and high-risk operations, and an emergency stop capability. It also cites 129+ security tests in automated CI.
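
Two of these controls, path traversal blocking and command allowlisting, are simple enough to sketch. The code below is an assumption-laden illustration and not the project's implementation: `PathPolicy`, `normalize`, and `command_allowed` are hypothetical names. The check is purely lexical, so it does not resolve symlinks, and the forbidden list takes absolute paths with any `~` already expanded by the caller. That gap is one reason kernel-level sandboxes such as Landlock are listed alongside it.

```rust
use std::path::{Component, Path, PathBuf};

// Hypothetical sketch: lexical path checks plus a command allowlist.
// It does not resolve symlinks and is not ZeroClaw's actual code.

/// Collapse `.` and `..` components without touching the filesystem.
fn normalize(path: &Path) -> PathBuf {
    let mut out = PathBuf::new();
    for component in path.components() {
        match component {
            Component::ParentDir => {
                out.pop(); // at the root this is a no-op
            }
            Component::CurDir => {}
            other => out.push(other.as_os_str()),
        }
    }
    out
}

pub struct PathPolicy {
    workspace: PathBuf,
    forbidden: Vec<PathBuf>,
}

impl PathPolicy {
    pub fn new(workspace: &str, forbidden: &[&str]) -> Self {
        Self {
            workspace: normalize(Path::new(workspace)),
            forbidden: forbidden.iter().map(|p| normalize(Path::new(p))).collect(),
        }
    }

    /// A path is allowed only if it stays inside the workspace after
    /// normalization and does not fall under a forbidden prefix.
    pub fn is_allowed(&self, requested: &str) -> bool {
        // `join` discards the workspace prefix when `requested` is absolute,
        // so absolute paths are checked as-is.
        let resolved = normalize(&self.workspace.join(requested));
        resolved.starts_with(&self.workspace)
            && !self.forbidden.iter().any(|f| resolved.starts_with(f))
    }
}

/// Allow a command line only if its first word is on the allowlist.
/// Assumption: the command is executed directly, not through a shell,
/// so metacharacters such as `;` or `&&` have no special meaning.
pub fn command_allowed(allowlist: &[&str], command_line: &str) -> bool {
    match command_line.split_whitespace().next() {
        Some(program) => allowlist.iter().any(|allowed| *allowed == program),
        None => false,
    }
}
```

With a workspace of `/home/user/workspace`, a request for `../.ssh/id_ed25519` normalizes to `/home/user/.ssh/id_ed25519`, falls outside the workspace, and is rejected before any file is opened.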

This is where ZeroClaw takes a different line from consumer assistants. It assumes the assistant may reach real filesystems, shells, channels, and hardware. It therefore cannot rely on conversational guardrails alone. The relevant question is not whether the agent can reason about risk. The relevant question is whether the runtime can prevent damage when the model reasons badly or a prompt injection succeeds.

Hardware support extends the assistant beyond software

ZeroClaw supports hardware peripherals through connectors for ESP32, STM32 Nucleo, Arduino, and Raspberry Pi GPIO. The repository also references a peripheral trait, sensor and actuator bridges, serial bridge patterns, and industrial peripheral examples. That broadens the project’s scope. ZeroClaw is not only a messaging assistant and not only a terminal agent. It can operate as a bridge between language models, local policy, and physical devices.

That opens practical use cases in labs, workshops, and edge deployments. A personal agent can monitor a sensor feed, react to a scheduled event, report over Telegram or Slack, and require human approval before taking a higher-risk action. The core point is not robotics in the narrow sense. It is that a personal agent becomes operational when it can sense, report, and act across both digital and physical interfaces.
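
The sense, report, and approve loop can be sketched in a few lines. Everything here is hypothetical: the `Peripheral` trait below is an illustration and not the project's peripheral trait, and `SimulatedSensor` stands in for real hardware such as an ESP32 or a GPIO pin.

```rust
// Hypothetical sketch of a sensor polling step. The trait and types are
// illustrative and do not mirror ZeroClaw's peripheral API.

pub trait Peripheral {
    fn name(&self) -> &str;
    fn read(&mut self) -> Result<f64, String>;
}

#[derive(Debug, Clone, PartialEq)]
pub enum Action {
    /// Reading is normal, nothing to do.
    Idle,
    /// Send a message to the user's channel.
    Report(String),
    /// Higher-risk situation: ask a human before acting.
    RequestApproval(String),
}

/// Read the sensor once and map the value to an action.
pub fn poll(sensor: &mut dyn Peripheral, warn_at: f64, critical_at: f64) -> Action {
    match sensor.read() {
        Err(e) => Action::Report(format!("{}: read failed: {}", sensor.name(), e)),
        Ok(v) if v >= critical_at => Action::RequestApproval(format!(
            "{} at {:.1}, above critical {:.1}; approve shutdown?",
            sensor.name(),
            v,
            critical_at
        )),
        Ok(v) if v >= warn_at => {
            Action::Report(format!("{} at {:.1}, above {:.1}", sensor.name(), v, warn_at))
        }
        Ok(_) => Action::Idle,
    }
}

/// Test double that replays a fixed list of readings.
pub struct SimulatedSensor {
    pub label: String,
    pub queue: Vec<f64>,
}

impl Peripheral for SimulatedSensor {
    fn name(&self) -> &str {
        &self.label
    }

    fn read(&mut self) -> Result<f64, String> {
        if self.queue.is_empty() {
            Err("no data".to_string())
        } else {
            Ok(self.queue.remove(0))
        }
    }
}
```

In this sketch, a scheduler would call `poll` on a timer and route `Report` to a messaging channel, while `RequestApproval` would go through the same approval path used for other higher-risk operations.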

The dashboard and CLI reflect a control-first design

ZeroClaw includes a web dashboard built with React 19 and Vite. It is served directly from the gateway. The dashboard exposes system overview, health status, uptime, cost tracking, interactive chat, memory management, configuration editing, cron management, tool inspection, logs, diagnostics, integrations, and pairing management.

The CLI surface is equally broad. Commands include gateway, agent, onboard, doctor, status, service, migrate, auth, cron, channel, and skills. That suggests a product designed for operators who expect observability and maintenance from day one. The inclusion of a doctor command is especially telling. Personal agents tend to fail in practical ways before they fail conceptually. Credentials expire. Channels drift. Policies become too permissive or too strict. Diagnostics are therefore part of the product, not an accessory.

Migration and extensibility reduce lock-in

ZeroClaw includes migration support from OpenClaw, including import of workspace, memory, and configuration from ~/.openclaw/ to ~/.zeroclaw/, with automatic conversion from JSON to TOML. That lowers switching costs for users already operating in a related ecosystem.

At the architecture level, the project emphasizes swappability. Providers, channels, tools, memory, tunnels, and peripherals are all trait-based. New extensions fit into clearly defined directories, from providers and channels to observability modules, tools, memory backends, tunnels, peripherals, and skills. That matters for users who do not want to bet on a closed product roadmap. It also matters for institutions and developers who need a controllable substrate rather than a fixed assistant personality.

What makes ZeroClaw different from typical agent stacks

Many agent frameworks still optimize for demos. They optimize for the shortest path from prompt to tool call. ZeroClaw appears to optimize for continuous deployment under constraints. The differences show up in small but consequential choices. Single-binary packaging. Local-first control. Pairing by default. Workspace-scoped memory. Cost caps. Model failover. Tunnel support through Cloudflare, Tailscale, ngrok, OpenVPN, and custom commands. Native runtime as default with Docker as a stricter option when you need stronger isolation.

That does not make ZeroClaw universally superior. It makes it opinionated. The system is best read as infrastructure for people who want a personal assistant that lives in their own environment and can survive real operational friction. If your priority is a polished consumer GUI and zero terminal exposure, this is not the center of gravity. If your priority is a local, portable, always-on agent with a wide surface of channels and tools, the project aligns much better.

Limits and open questions

The project remains ambitious and broad. That creates the usual tension between capability surface and operational maturity. ZeroClaw supports many providers, channels, and tools, but each integration adds maintenance burden. The repository itself points users to troubleshooting guides, operational runbooks, network deployment guides, and security documentation before exposing anything. That is sensible. It also signals that this is infrastructure, not appliance software.

There is also a governance dimension. The repository includes repeated warnings about impersonation, unofficial domains, and unauthorized forks claiming affiliation with the project. For users evaluating personal agent infrastructure, provenance matters. If the assistant will hold credentials, memory, and message access, repository authenticity and release hygiene are not side issues.