ChatGPT Images 2.0 is not a cosmetic update. It is a serious jump in how OpenAI handles image generation, editing, and prompt understanding. OpenAI describes the new model as a major step forward in world knowledge, instruction following, dense detail, and text inside images. It also adds a new thinking mode that can use reasoning and live web search during image creation, one of the clearest differences from earlier ChatGPT image systems.

What changed most in ChatGPT Images 2.0

The biggest improvement is control. Previous versions could already generate impressive images, but they still had familiar weak spots. Complex prompts could drift. Text inside visuals often needed retries. Edits sometimes changed more than requested. Character consistency and identity-sensitive workflows were harder to trust. OpenAI positions gpt-image-2, the model behind this generation stack, as the new default for highest-quality generation and editing, especially for customer-facing work, photorealism, text-heavy visuals, and workflows where fewer retries matter.

In plain English, version 2.0 is better at doing exactly what you asked for without improvising in the wrong direction.

Better at text inside images

One of the most noticeable upgrades is text rendering. Earlier image models across the industry often stumbled on labels, captions, posters, menus, diagrams, and infographics. ChatGPT Images 2.0 is much stronger here. OpenAI’s documentation emphasizes crisp lettering, more consistent layouts, stronger contrast, and better handling of dense text. It also recommends the new model for text-heavy images and polished productivity visuals such as charts, slides, and diagrams.

That matters far beyond marketing graphics. For AI and robotics publishers, this means cleaner architecture diagrams, more readable annotated schematics, more useful explainer visuals, and fewer failed generations when a chart or interface needs real labels instead of pseudo-text. This is one of the most practical quality jumps over the previous version.

Stronger editing and more reliable preservation

Another major upgrade is editing performance. OpenAI says gpt-image-2 improves editing reliability and supports production workflows more broadly than earlier models. The prompting guide repeatedly stresses identity preservation, layout preservation, geometry preservation, and surgical edits where only one element changes while the rest stays intact.

This is important because older image workflows often broke when asked to make small changes. You could request a new jacket and get a new face. You could ask for a lighting change and lose the scene composition. With 2.0, OpenAI is clearly targeting those failure points. The model is built to better preserve faces, brand elements, layouts, and scene structure during iterative edits. That makes it much more useful for mockups, product visualization, storyboard work, and repeatable content pipelines.
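One way to picture a surgical edit is as a prompt that names a single change and spells out what must stay fixed. The snippet below is a minimal sketch of that idea, not OpenAI's recommended wording: the function name `build_edit_prompt` and the sentence template are hypothetical, chosen only to illustrate the "change one thing, preserve the rest" pattern described above.

```python
def build_edit_prompt(change: str, preserve: list[str]) -> str:
    """Compose an edit instruction: one named change plus an explicit keep-list.

    Hypothetical helper for illustration; the phrasing is an assumption,
    not wording taken from OpenAI's prompting guide.
    """
    # Normalize the requested change and drop a trailing period, if any.
    change = change.strip().rstrip(".")
    if not change:
        raise ValueError("describe the single change to make")

    # Ignore blank entries so the keep-list never renders as empty commas.
    kept = [item.strip() for item in preserve if item.strip()]

    parts = [f"Change only this: {change}."]
    if kept:
        parts.append(
            "Keep everything else unchanged, in particular: " + ", ".join(kept) + "."
        )
    else:
        parts.append("Keep everything else unchanged.")
    return " ".join(parts)


prompt = build_edit_prompt(
    "replace the jacket with a red raincoat",
    ["face", "pose", "background"],
)
print(prompt)
```

Naming the preserved elements explicitly mirrors the failure cases above: the jacket request that should not touch the face, and the lighting request that should not touch the composition.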

Higher realism with better world knowledge

OpenAI also says ChatGPT Images 2.0 has significantly enhanced world knowledge. In practice, that means the model can infer context more accurately and generate scenes that match real places, moments, or physical details with less hand-holding. The official prompting guide gives an example where the model can infer a Woodstock-related scene from a request set in Bethel, New York, in August 1969.

Compared with earlier versions, this should lead to fewer generic-looking results and more context-aware images. That translates into visuals that feel less synthetic and more grounded in the real world. Combined with better material rendering, natural lighting, and richer color handling, 2.0 appears much stronger at photorealism and believable scene construction.

The new feature that changes the workflow

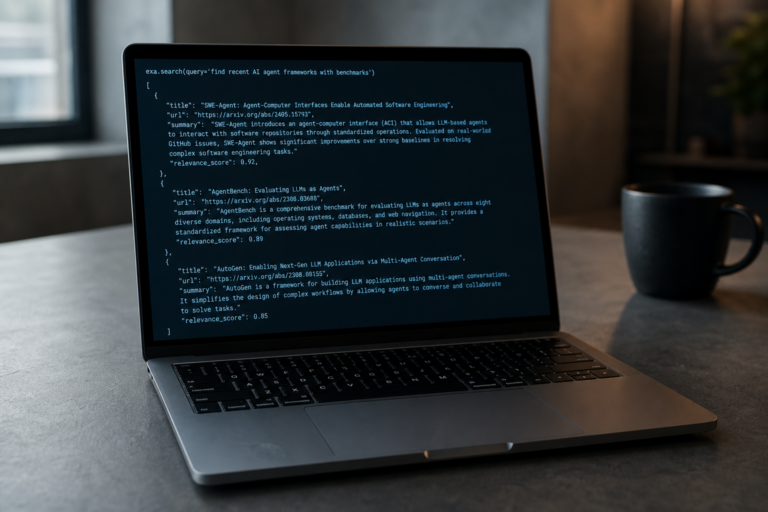

The most interesting addition is thinking mode. OpenAI says this mode adds reasoning and tool use to the image generation process. It can integrate live web search data, generate multiple images from a single prompt, and use the reasoning stack to turn a basic request into a more researched and thought-through final image. That is a meaningful shift from the earlier generate-and-hope workflow.

This suggests ChatGPT Images 2.0 is not just a better renderer. It is becoming a more agentic visual system. If you ask for a historically grounded scene, a scientific explainer, or a concept image that depends on current context, the system can do more of the interpretive work before rendering. That is a real upgrade over prior versions that relied more heavily on prompt quality alone. Calling the system agentic is partly an inference from OpenAI’s description of reasoning plus web search, but it is a fair one.

More flexible for real production use

OpenAI’s API documentation positions gpt-image-2 as the recommended default for new builds and the upgrade target for teams still on older image models. It supports flexible sizing beyond a few fixed dimensions, as long as requests stay within defined limits, and it offers quality settings that let teams balance speed and fidelity depending on the workload.
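To make the speed-versus-fidelity tradeoff concrete, here is a minimal sketch that maps a workload label to a quality setting and assembles request parameters. Several things here are assumptions: the parameter names (`model`, `prompt`, `size`, `quality`) and the values `low`, `medium`, and `high` mirror how the OpenAI Python SDK's `images.generate` call is used with earlier image models, and it is assumed rather than confirmed that gpt-image-2 accepts the same shapes. The workload labels and the `image_request` helper are hypothetical. The sizing limits are not stated here, so the sketch only checks that dimensions are positive.

```python
# Hypothetical mapping from workload to quality; the quality values are an
# assumption carried over from earlier OpenAI image models.
QUALITY_BY_WORKLOAD = {"draft": "low", "review": "medium", "final": "high"}


def image_request(prompt: str, width: int, height: int, workload: str = "draft") -> dict[str, str]:
    """Build keyword arguments for an image generation request.

    Only assembles parameters; it does not call any API.
    """
    if workload not in QUALITY_BY_WORKLOAD:
        raise ValueError(f"unknown workload: {workload!r}")
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return {
        "model": "gpt-image-2",
        "prompt": prompt,
        # Assumption: sizes are passed as a "WIDTHxHEIGHT" string.
        "size": f"{width}x{height}",
        "quality": QUALITY_BY_WORKLOAD[workload],
    }


params = image_request("Labeled diagram of a robot arm controller", 1536, 1024, "final")
print(params)

# Sending the request would look roughly like this (needs an API key and network):
#   from openai import OpenAI
#   result = OpenAI().images.generate(**params)
```

Keeping parameter assembly separate from the network call makes it easy to use a fast setting during ideation and switch to a higher one for final assets without touching the rest of the pipeline.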

That flexibility matters because not every workflow needs the same thing. A newsroom mockup, an ecommerce visual, a robotics conference slide, and a comic strip all have different demands. Earlier versions were easier to treat as one-shot generators. Version 2.0 looks more like a platform for varied image pipelines, from fast ideation to high-fidelity assets.

What it is better at in one sentence

If the previous version was good at creating impressive images, ChatGPT Images 2.0 is better at creating useful images. It follows instructions more faithfully, handles text better, edits with more precision, preserves identity and structure more reliably, and introduces a reasoning layer that can improve the final output before the image is even made.

The catch that also grew with the model

There is an unavoidable tradeoff. As realism improves, misuse risks rise too. OpenAI’s new system card says ChatGPT Images 2.0 allows heightened realism that could enable more convincing deepfakes if left unguarded, especially in sensitive imagery involving real people, places, or events. In response, OpenAI says it uses layered safeguards at the prompt, input image, and output image stages.