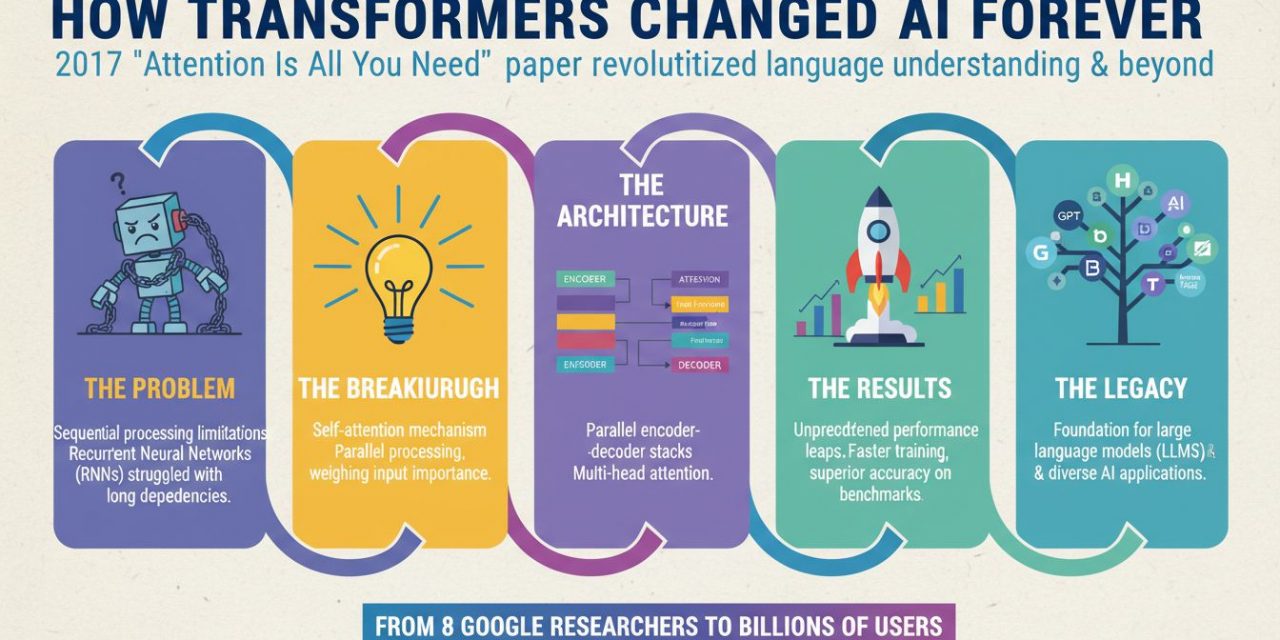

In the fast-paced world of artificial intelligence, it is rare for a single research paper to completely redefine the trajectory of an entire field. Yet, in 2017, a team of researchers at Google did exactly that. With the release of the paper titled Attention Is All You Need, they introduced the Transformer architecture, a novel neural network design that discarded the dominant methods of the time in favor of a mechanism called self-attention.

Before this paper, the field of Natural Language Processing (NLP) was dominated by Recurrent Neural Networks (RNNs), in particular Long Short-Term Memory (LSTM) networks. While effective to a degree, these models were slow to train and struggled with long sequences of text. The Transformer removed both bottlenecks and laid the foundation for the Large Language Models (LLMs) we use today.

The Minds Behind the Machine

The paper Attention Is All You Need was a collaborative effort by researchers at Google Brain and Google Research. The list of authors has since become legendary in the AI community, as many have gone on to found their own major AI startups. The authors listed on the paper are:

- Ashish Vaswani

- Noam Shazeer

- Niki Parmar

- Jakob Uszkoreit

- Llion Jones

- Aidan N. Gomez

- Łukasz Kaiser

- Illia Polosukhin

It is worth noting that the title itself was a bold declaration. At the time, the industry standard for sequence-to-sequence tasks (like translation) relied heavily on recurrence and convolutions. By stating that attention was all that was needed, the authors were proposing a radical simplification that promised better results and faster training times.

The Problem with RNNs and LSTMs

To understand the brilliance of the Transformer, you first need to understand the limitations of what came before. Traditionally, language tasks were solved using Recurrent Neural Networks (RNNs). Imagine reading a sentence one word at a time. You read the first word, process it, update your mental state, and then move to the second word. This is how RNNs work: they process data sequentially.

The Sequential Bottleneck

This sequential nature created two massive problems:

- No Parallelization: Because the network needs the output of the previous step to calculate the current step, the time steps of a sequence cannot be computed in parallel. Adding more GPUs does not make a single sentence process any faster, which made training on massive datasets incredibly slow.

- Long-Range Dependencies: In a long sentence or paragraph, the model often forgot information from the beginning by the time it reached the end. While LSTMs (Long Short-Term Memory networks) tried to solve this with gating mechanisms, they still struggled with very long sequences.
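Both problems show up even in a toy example. The sketch below is a hypothetical scalar RNN, not code from the paper: the function name `rnn_forward` and the weights `w_in` and `w_rec` are made up for illustration. The loop cannot be reordered or split across devices because every step reads the hidden state written by the previous one, and the signal from the first input shrinks at every later step.

```python
import math

def rnn_forward(inputs, w_in=0.5, w_rec=0.8):
    """Toy scalar RNN (illustrative weights, not a trained model)."""
    h = 0.0  # hidden state carried from step to step
    states = []
    for x in inputs:
        # Step t needs h from step t-1, so the steps must run in order.
        h = math.tanh(w_in * x + w_rec * h)
        states.append(h)
    return states

# Only the first input is non-zero; watch its influence fade over time.
states = rnn_forward([1.0, 0.0, 0.0, 0.0])
print(states)
```

Each printed value is smaller than the one before it, which is the "forgetting" described above in miniature.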

The authors of Attention Is All You Need asked a critical question: What if we stop processing words one by one and instead look at the entire sentence at once?

The Transformer Architecture

The Transformer architecture proposes a model that relies entirely on an attention mechanism to draw global dependencies between input and output. The architecture is split into two main parts: the Encoder and the Decoder.

In a translation task (e.g., English to German), the Encoder processes the English sentence, and the Decoder generates the German sentence. However, unlike RNNs, the Transformer sees all the words in the English sentence simultaneously. To make sense of this data without recurrence, the paper introduced several key innovations.

Self-Attention is the Core Mechanism

The heart of the paper is the Self-Attention mechanism. Conceptually, self-attention allows the model to look at other words in the input sequence as it encodes a specific word. It helps the model understand the context and relationship between words.

Consider the sentence: The animal didn’t cross the street because it was too tired.

When the model processes the word “it”, how does it know what “it” refers to? Is it the street? Or the animal? As humans, we know “it” refers to the animal because of the adjective “tired.” Self-attention allows the model to associate “it” strongly with “animal” by assigning a higher attention score to that relationship.

Query, Key, and Value (Q, K, V)

To implement this mathematically, the authors describe attention in terms of Queries, Keys, and Values. For each word, these three vectors are created by multiplying its input embedding by three distinct weight matrices that are learned during training.

Think of it like a database retrieval system:

- Query (Q): What you are looking for.

- Key (K): The label or identifier in the database.

- Value (V): The actual information content.

To calculate attention, the model takes the dot product of the Query vector of one word with the Key vectors of every word in the sequence, including the word itself. This dot product acts as a similarity measure. If the Query and Key are aligned (pointing in the same direction), the result is a high score. This score determines how much attention to pay to that specific word.

These scores are then scaled (divided by the square root of the dimension of the key vectors) and passed through a Softmax function. The scaling keeps large dot products from pushing the Softmax into regions where its gradients become extremely small. The Softmax normalizes the scores so they add up to 1. Finally, each Value vector is multiplied by its normalized score and the results are summed. The result is a weighted sum of Values that represents the word in the context of the entire sentence.
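The paper writes this whole procedure as softmax(QK^T / sqrt(d_k)) V. Here is a minimal, dependency-free sketch of it in plain Python. The helper names (`project`, `dot`, `softmax`, `self_attention`) and the tiny two-dimensional embeddings are assumptions chosen for readability, and identity matrices stand in for the learned weight matrices; real implementations use batched matrix operations on a GPU.

```python
import math

def project(x, W):
    """Multiply row vector x (length d_in) by matrix W (d_in x d_out)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X, W_q, W_k, W_v):
    """Scaled dot-product self-attention over a list of embeddings X."""
    Q = [project(x, W_q) for x in X]
    K = [project(x, W_k) for x in X]
    V = [project(x, W_v) for x in X]
    d_k = len(K[0])
    outputs, all_weights = [], []
    for q in Q:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [dot(q, k) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)  # non-negative, sums to 1
        # Weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy "words" with 2-dimensional embeddings (made-up numbers).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned weights
outputs, weights = self_attention(X, identity, identity, identity)
print(weights[0])
```

With these inputs, the first word puts equal weight on itself and the third word, and less on the second, because its Query is orthogonal to the second word's Key.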

Multi-Head Attention

The paper argues that a single attention mechanism isn’t enough. A sentence has multiple layers of meaning: grammar, semantic relationships, tone, and reference. To capture this, the authors proposed Multi-Head Attention.

Instead of calculating attention once, the Transformer runs the process several times in parallel (8 heads in the original paper). Each “head” has its own set of Q, K, and V weight matrices, which project the input into a smaller subspace. This allows the model to attend to information from different “representation subspaces” at different positions. One head might focus on the relationship between the subject and verb, while another focuses on adjectives and nouns. The outputs of all heads are concatenated and passed through a final linear projection back to the model dimension.
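To make the shapes concrete, here is a small sketch of multi-head attention in plain Python. It is an illustration under stated assumptions rather than the paper's implementation: the sizes (`d_model = 8`, two heads) are shrunk for readability where the paper uses a model dimension of 512 split across 8 heads of 64, the weights are random instead of learned, and helper names such as `random_matrix` are made up.

```python
import math
import random

def matmul(A, B):
    """Multiply an (n x m) matrix by an (m x p) matrix."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    scores = matmul(Q, K_T)
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, V)

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def multi_head_attention(X, heads, W_o):
    # Each head projects X with its own W_q, W_k, W_v and attends separately.
    head_outputs = [
        attention(matmul(X, W_q), matmul(X, W_k), matmul(X, W_v))
        for W_q, W_k, W_v in heads
    ]
    # Concatenate the heads along the feature axis, one row per position.
    concat = [sum((h[i] for h in head_outputs), []) for i in range(len(X))]
    # Final linear projection back to d_model.
    return matmul(concat, W_o)

rng = random.Random(0)
d_model, num_heads = 8, 2
d_head = d_model // num_heads
X = random_matrix(3, d_model, rng)  # 3 positions
heads = [
    tuple(random_matrix(d_model, d_head, rng) for _ in range(3))
    for _ in range(num_heads)
]
W_o = random_matrix(d_model, d_model, rng)
out = multi_head_attention(X, heads, W_o)
print(len(out), len(out[0]))
```

The output has one row per input position and `d_model` features per row, so it can be fed straight into the next layer.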

Positional Encoding

Since the Transformer processes all words simultaneously (in parallel) rather than sequentially, it inherently lacks a sense of order. To the model, “The cat eats the mouse” and “The mouse eats the cat” would look like the same bag of words without additional information.

To solve this, the authors inject information about the position of the tokens in the sequence. They add Positional Encodings to the input embeddings. Interestingly, the paper chose to use sine and cosine functions of different frequencies to generate these encodings. The authors hypothesized that this would make it easy for the model to learn to attend by relative position, giving the neural network a sense of order without requiring sequential processing.
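In the paper, even dimensions of the encoding use sin(pos / 10000^(2i/d_model)) and odd dimensions use the matching cosine. The sketch below follows that formula in plain Python; the function name `positional_encoding` and the tiny sizes are illustrative choices.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: one row of d_model values per position."""
    pe = []
    for pos in range(seq_len):
        row = []
        # i walks over the even dimension indices (the paper's 2i).
        for i in range(0, d_model, 2):
            angle = pos / (10000 ** (i / d_model))
            row.append(math.sin(angle))  # even dimension
            row.append(math.cos(angle))  # odd dimension
        pe.append(row[:d_model])  # trim in case d_model is odd
    return pe

pe = positional_encoding(seq_len=5, d_model=4)
for pos, row in enumerate(pe):
    print(pos, [round(v, 3) for v in row])
```

Each row is added element-wise to the embedding of the token at that position. Low dimensions oscillate quickly and high dimensions slowly, so every position gets a distinct pattern.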

The Architecture: Encoder and Decoder

While the attention mechanism is the engine, the chassis of the car is the Encoder-Decoder structure.

The Encoder

The Encoder consists of a stack of identical layers (6 in the original paper). Each layer has two sub-layers:

- A Multi-Head Self-Attention mechanism.

- A simple, position-wise fully connected Feed-Forward Network.

Crucially, the authors employed a residual connection around each of these two sub-layers, followed by layer normalization. This helps the information flow through the deep network without vanishing gradients, making the model easier to train.
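The paper expresses this wrapper as LayerNorm(x + Sublayer(x)). A minimal sketch of the idea follows, with two loudly stated simplifications: the learned gain and bias of layer normalization are left out, and `toy_feed_forward` is a made-up stand-in for a real sub-layer.

```python
import math

def layer_norm(x, eps=1e-6):
    """Normalize one vector to zero mean and unit variance (no learned gain/bias)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def add_and_norm(x, sublayer):
    """Residual connection around a sub-layer, followed by layer normalization."""
    residual = [a + b for a, b in zip(x, sublayer(x))]
    return layer_norm(residual)

def toy_feed_forward(x):
    """Hypothetical sub-layer: element-wise ReLU scaled by 0.5."""
    return [0.5 * max(0.0, v) for v in x]

out = add_and_norm([1.0, -2.0, 3.0, 0.5], toy_feed_forward)
print([round(v, 3) for v in out])
```

Because the input `x` is added back before normalizing, the sub-layer only has to learn a correction to its input, and gradients have a direct path through the addition.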

The Decoder

The Decoder is also a stack of identical layers, but it has a slight variation. In addition to the two sub-layers found in the encoder, the decoder inserts a third sub-layer, which performs multi-head attention over the output of the encoder stack. This is often called Cross-Attention.

In Cross-Attention, the Queries come from the previous decoder layer, but the Keys and Values come from the output of the Encoder. This allows every position in the decoder to attend over all positions in the input sequence. Essentially, when the decoder is trying to generate the next word in the translation, it can “look back” at the entire original sentence to decide what is relevant.
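A small sketch can show where each input comes from. It assumes made-up two-dimensional vectors and skips the learned Q, K, and V projections to keep the example short; the function name `attend` is also an illustrative choice.

```python
import math

def attend(queries, keys, values):
    """Scaled dot-product attention with separate query and key/value sources."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

encoder_output = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 source positions
decoder_state = [[0.5, 0.5], [2.0, 0.0]]               # 2 target positions

# Queries from the decoder; Keys and Values from the encoder.
context = attend(decoder_state, encoder_output, encoder_output)
print(len(context), len(context[0]))
```

The result has one row per decoder position, even though the encoder sequence has a different length: each target position receives its own blend of the source sentence.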

Furthermore, the self-attention mechanism in the decoder is modified to be Masked. At inference time the decoder generates words one by one, so during training, when the whole target sentence is available, it must not be allowed to “peek” at future words. The masking, combined with the fact that the decoder inputs are shifted right by one position, ensures that the prediction for position i can depend only on the known outputs at positions less than i.
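One common way to implement the mask, sketched here in plain Python, is to set the scores of future positions to negative infinity before the Softmax so that their weights come out as exactly zero. The function name `causal_softmax` and the all-zero scores are illustrative. Position i may attend to itself here because, in the paper's setup, the decoder inputs are shifted right by one position.

```python
import math

def causal_softmax(scores):
    """scores[i][j] is the raw score of query i against key j."""
    n = len(scores)
    weights = []
    for i in range(n):
        # Keep positions j <= i; block every later position.
        masked = [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        m = max(masked)  # finite, since position i itself is never masked
        exps = [math.exp(s - m) for s in masked]  # exp(-inf) evaluates to 0.0
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# Equal raw scores make the effect of the mask easy to see.
weights = causal_softmax([[0.0, 0.0, 0.0] for _ in range(3)])
for row in weights:
    print([round(w, 3) for w in row])
```

The printed matrix is lower-triangular: the first position can only see itself, the second splits its attention over two positions, and the third over three.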

Why is this Paper so important?

The impact of Attention Is All You Need is hard to overstate. It is sometimes called the “iPhone moment” of Natural Language Processing. Here is why it matters:

Computational Efficiency and Scaling

The most immediate benefit was speed. By eliminating recurrence, the Transformer allowed for massive parallelization. Training models on huge datasets became feasible because GPUs could process entire sequences at once. This efficiency paved the way for training on internet-scale text corpora, leading to the massive foundation models we see today.

Handling Long-Range Dependencies

The attention mechanism largely removed the memory problem of RNNs. In a Transformer, any two words are connected by a single step: the attention calculation. It doesn’t matter if the related words are 5 positions apart or 500 positions apart; the model can relate them directly, as long as both fit within its context window. This capability is essential for generating coherent paragraphs, summarizing long documents, and writing code.

The Birth of Pre-trained Models

The Transformer architecture enabled the strategy of pre-training on vast amounts of unlabeled text and then “fine-tuning” for specific tasks.

- BERT (Bidirectional Encoder Representations from Transformers): Uses just the Encoder stack. It revolutionized search and understanding tasks by looking at context from both left and right.

- GPT (Generative Pre-trained Transformer): Uses just the Decoder stack. It focuses on predicting the next word, leading to the generative AI boom we are experiencing now.

Conclusion

The paper Attention Is All You Need did more than just propose a new algorithm; it fundamentally shifted the paradigm of how machines process information. By showing that recurrence was unnecessary and that attention mechanisms alone could handle the complexities of language, Vaswani and his co-authors unlocked the potential for AI to understand and generate human language with unprecedented fluency.

Today, every time you use ChatGPT, translate a webpage, or use an AI coding assistant, you are relying on the mechanisms described in this 2017 paper. The Transformer has proven to be a robust, scalable, and flexible architecture, extending even beyond text into image processing (Vision Transformers) and biology (AlphaFold). It turns out, attention really was all we needed.