Claude Opus 4.7 is not a cosmetic refresh of Opus 4.6. Anthropic positions it as a direct upgrade, but the practical change is more specific. The model is stronger where organizations actually deploy large models in production: software engineering, long running agent workflows, document analysis, finance tasks, multimodal inspection, and multi session work that depends on persistent notes. At the same time, the upgrade introduces migration friction. Prompt behavior changes. Token usage can rise. Some API behaviors break.

That makes the central question less about headline benchmarks and more about operational fit. If you already use Claude Opus 4.6, where does Claude Opus 4.7 deliver a material gain, and where do you need to retune prompts, budgets, and workflows before you switch?

What Claude Opus 4.7 is in Anthropic’s lineup

Anthropic describes Opus 4.7 as its most capable generally available model. It sits below the restricted Claude Mythos Preview model, which Anthropic keeps limited because of cybersecurity risk concerns, but above Opus 4.6 in most areas that matter for enterprise use.

Opus 4.7 is available across Claude products and the API, as well as Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Pricing stays unchanged from Opus 4.6 at $5 per million input tokens and $25 per million output tokens. On paper, that suggests a smooth upgrade path. In practice, the same price per token does not guarantee the same bill, because Opus 4.7 uses a new tokenizer and can consume more tokens for the same input.

Where Claude Opus 4.7 is clearly better than Opus 4.6

1. Advanced software engineering

The largest and most consistent message in Anthropic’s material is coding. Opus 4.7 improves most on difficult engineering tasks, especially tasks that run over many steps and require the model to plan, execute, validate, and continue without losing coherence.

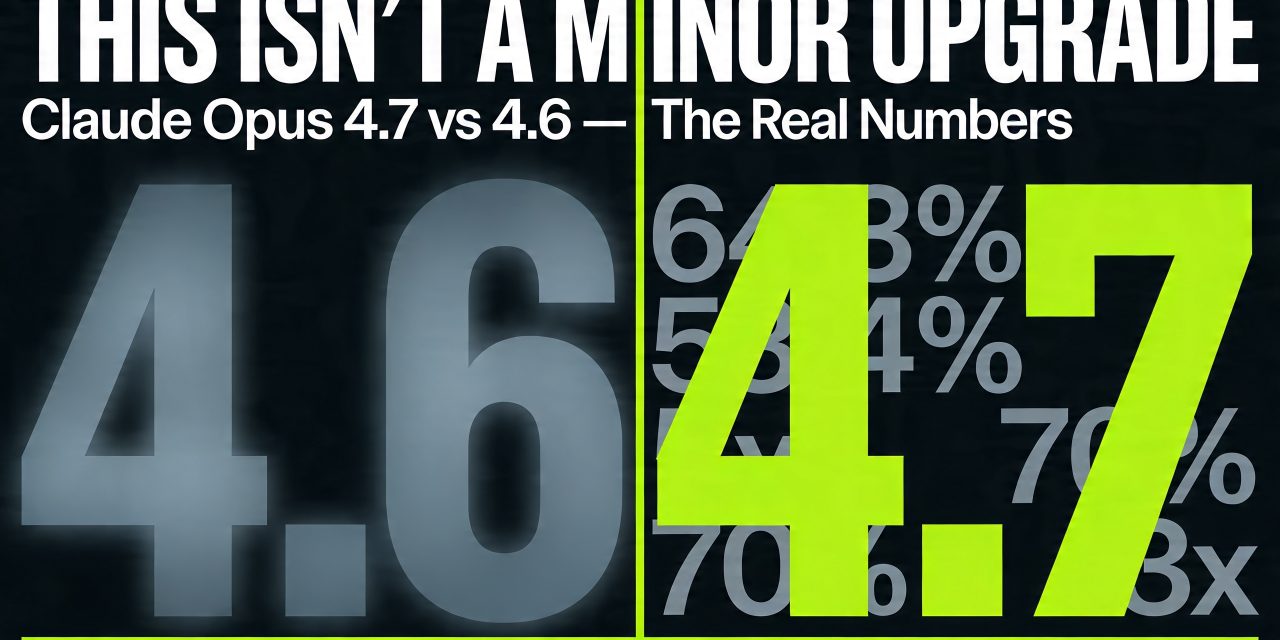

Anthropic reports that on SWE-bench Pro, Opus 4.7 resolves 64.3% of tasks versus 53.4% for Opus 4.6. That is a gain of 10.9 percentage points in a benchmark category that directly tracks agentic software work. On a 93 task coding benchmark cited by Cognition, Opus 4.7 lifted resolution by 13% over Opus 4.6, including four tasks that neither Opus 4.6 nor Sonnet 4.6 solved.

Other partner data points align with that pattern. CursorBench moved from 58% to 70%. On Rakuten-SWE-Bench, Opus 4.7 resolved 3x more production tasks than Opus 4.6. Factory Droids reported a 10% to 15% lift in task success. Databricks and Qodo both highlighted better precision and stronger issue detection. Across these reports, the common thread is not only better code generation, but better follow through.
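When comparing these figures, it helps to separate absolute gains (percentage points) from relative gains (percent over the old score), since vendor summaries mix the two. The sketch below uses only the SWE-bench Pro and CursorBench numbers quoted above; the `gains` helper is illustrative and not part of any published tooling.

```python
# Scores quoted in this article: (Opus 4.6, Opus 4.7), in percent.
scores = {
    "SWE-bench Pro": (53.4, 64.3),
    "CursorBench": (58.0, 70.0),
}


def gains(old: float, new: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    return new - old, (new - old) / old * 100.0


for name, (old, new) in scores.items():
    points, relative = gains(old, new)
    print(f"{name}: +{points:.1f} points, +{relative:.1f}% relative")
# SWE-bench Pro: +10.9 points, +20.4% relative
# CursorBench: +12.0 points, +20.7% relative
```

Read this way, the two benchmarks tell a similar story: roughly a one fifth relative improvement over Opus 4.6.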

That distinction matters. Older frontier models often perform well in short bursts and then degrade when tasks become multi step, tool heavy, or validation dependent. Anthropic claims Opus 4.7 “devises ways to verify its own outputs before reporting back.” Several partners describe exactly that behavior: the model catches logical faults during planning, validates more often, and stops less frequently halfway through the job.

2. Long horizon agent workflows

Anthropic’s more strategic claim is that Opus 4.7 is stronger at long horizon autonomy. This is the category that matters if you use agents for debugging, research, orchestration, workflow execution, or cross tool tasks that can run for hours.

Cognition said Opus 4.7 works coherently for hours in Devin and pushes through hard problems that older models abandoned. Notion reported 14% better performance on complex multi step workflows, with a third of the tool errors, and called it the first model to pass its implicit need tests. Ramp highlighted stronger role fidelity, coordination, and instruction following in agent team workflows.

Anthropic’s own research agent benchmark also points in that direction. Opus 4.7 tied for the top overall score across six modules at 0.715 and delivered what Anthropic calls the most consistent long context performance of any model it tested.

This is one of the most consequential differences from Opus 4.6. Many users do not need a model that answers a single question better. They need a model that can keep state, maintain intent, recover from tool failures, and finish the task. Opus 4.7 appears more optimized for that mode of work.

3. Knowledge work in finance, legal, and document analysis

The most important non coding improvement may be in economically valuable knowledge work. Anthropic says Opus 4.7 is state of the art on GDPval-AA, a third party evaluation focused on work in finance, legal, and other professional domains. VentureBeat reports an Elo score of 1753 for Opus 4.7, ahead of GPT-5.4 at 1674 and Gemini 3.1 Pro at 1314.

That benchmark matters because it focuses less on model theatrics and more on output that would be valuable in actual professional settings.

The finance signals are also strong. On Anthropic’s General Finance module, Opus 4.7 scored 0.813 versus 0.767 for Opus 4.6. Anthropic also says it is state of the art on its Finance Agent evaluation. Early testers describe more rigorous analyses, more professional presentations, and stronger discipline around disclosure and data handling.

Legal and document reasoning also improved. Harvey reports 90.9% on BigLaw Bench at high effort, with better reasoning calibration on review tables and better handling of ambiguous document editing tasks. Databricks says Opus 4.7 produces 21% fewer errors than Opus 4.6 on OfficeQA Pro when working from source information.

These gains map directly to the kind of model use many knowledge workers already prioritize: reviewing contracts, comparing clauses, redlining documents, editing slide decks, extracting figures, and creating structured analyses from source materials.

4. Vision and high resolution image understanding

Opus 4.7 is Anthropic’s first Claude model with high resolution image support. The maximum image resolution increases to 2576 pixels on the long edge, or about 3.75 megapixels. The prior limit was 1568 pixels on the long edge, or about 1.15 megapixels. That is more than a threefold increase in pixel count.
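The threefold figure refers to total pixels, not edge length. A quick check of the arithmetic, using only the limits quoted above (the variable names are illustrative):

```python
# Image limits quoted in this article.
OLD_LONG_EDGE_PX, NEW_LONG_EDGE_PX = 1568, 2576
OLD_MEGAPIXELS, NEW_MEGAPIXELS = 1.15, 3.75

edge_ratio = NEW_LONG_EDGE_PX / OLD_LONG_EDGE_PX   # growth along the long edge
pixel_ratio = NEW_MEGAPIXELS / OLD_MEGAPIXELS      # growth in total pixel count

print(f"long edge: {edge_ratio:.2f}x, total pixels: {pixel_ratio:.2f}x")
# long edge: 1.64x, total pixels: 3.26x
```

In other words, the long edge grows by about two thirds while the total pixel budget grows by a bit more than three times.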

This is not a marginal enhancement. It changes what the model can reliably inspect. Anthropic says the gains show up in screenshot reading, technical diagrams, chart analysis, computer use, and document workflows that depend on fine visual detail. The company also highlights improved low level perception such as pointing, measuring, counting, and image localization.

XBOW’s benchmark is the most dramatic example. It reports 98.5% on a visual acuity benchmark for Opus 4.7 versus 54.5% for Opus 4.6. VentureBeat also cites a visual reasoning gain from 84.7% to 91.0% with tools on arXiv Reasoning.

If you use Claude for dense screenshots, forms, engineering diagrams, chemical structures, slide layout checking, or artifact understanding, this is one of the strongest reasons to upgrade.

5. Better instruction following

This is both an improvement and a migration risk. Anthropic states clearly that Opus 4.7 is substantially better at following instructions. That sounds straightforward. It is not.

With Opus 4.6 and earlier models, many prompts relied on the model to generalize, fill in gaps, infer implied requests, or ignore inconsistent wording. Opus 4.7 behaves more literally. Anthropic says the model will not silently generalize one instruction from one item to another, and will not infer requests you did not make. It also tends to calibrate response length more closely to the perceived complexity of the task.

That means older prompt libraries can produce unexpected results. The problem is not lower intelligence. The problem is stricter compliance. Prompts that depended on loose interpretation now need explicit structure. If your workflow was built around “the model knows what I mean,” Opus 4.7 may expose every ambiguity you left in the prompt.

For production teams, this is probably the single biggest migration gotcha.

6. Better file based memory across long work

Anthropic also points to stronger memory behavior in long, multi session work. More precisely, Opus 4.7 is better at writing and using file system based memory. If your agent keeps notes, a scratchpad, or a structured memory file across turns, the new model should use that memory more effectively.

This matters for practical agent deployment. Many long running systems depend less on raw context window size and more on whether the model can maintain a useful external memory trail. Anthropic says Opus 4.7 remembers important notes across long, multi session work and uses them to continue with less up front context. That can reduce prompt overhead and improve continuity across sessions.

Where Claude Opus 4.7 changes behavior rather than just improving scores

The model is more direct and less validation forward

Anthropic notes a more direct and more opinionated tone, with less validation forward phrasing and fewer emoji than Opus 4.6. That is not a core capability change, but it does affect user experience and product behavior. Teams that tuned around the warmer style of older Claude models may notice the difference immediately.

Tool use shifts with effort settings

Opus 4.7 tends to make fewer tool calls by default and reason more internally. Raising effort increases tool usage. That can be a strength if your problem is over eager tool invocation. It can also change orchestration patterns if your current harness expects a certain tool calling cadence.

Progress updates appear more regularly

Anthropic says the model gives more regular progress updates throughout long agent traces. If you previously added custom scaffolding to force status messages, you may be able to simplify prompts and re-baseline the flow.

The cost and migration implications are real

New tokenizer means token counts can rise

Anthropic’s biggest operational warning concerns tokenization. Opus 4.7 uses a new tokenizer that can map the same input to roughly 1.0x to 1.35x as many tokens depending on content type. Token counting results will differ from Opus 4.6, and input cost can shift even if list pricing stays the same.

Output token usage can also rise, especially at higher effort levels and in later turns of agentic workflows, because the model thinks more and often verifies more.

So the upgrade path is not simply “same price, better model.” For some workloads, the bill will move. Anthropic recommends measuring the difference on real traffic, adjusting max token headroom, tuning effort, using task budgets, and prompting for more concise outputs where appropriate.
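A rough way to bound the input side of that shift is to scale your measured Opus 4.6 input tokens by the 1.0x to 1.35x range and apply the unchanged list prices. The sketch below is a back of the envelope estimate, not a billing tool: the workload numbers are hypothetical, `estimate_cost_usd` is an illustrative helper, and output tokens are held constant even though they may also rise.

```python
# List prices quoted in this article (USD per million tokens).
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def estimate_cost_usd(input_tokens: float, output_tokens: float,
                      tokenizer_multiplier: float = 1.0) -> float:
    """Estimate spend after scaling input tokens by a tokenizer multiplier."""
    scaled_input = input_tokens * tokenizer_multiplier
    return (scaled_input * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


# Hypothetical monthly workload measured on Opus 4.6 (assumed numbers).
baseline_input, baseline_output = 200_000_000, 20_000_000

low = estimate_cost_usd(baseline_input, baseline_output, 1.0)
high = estimate_cost_usd(baseline_input, baseline_output, 1.35)
print(f"${low:,.0f} to ${high:,.0f} per month")
# $1,500 to $1,850 per month
```

For this assumed workload the worst case is about 23% more spend from input tokens alone, which is why measuring on real traffic matters more than reading the price sheet.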

New effort tuning and task budgets

Anthropic adds a new xhigh effort level between high and max. The company recommends starting with high or xhigh effort for coding and agentic use cases. It also introduces task budgets in public beta on the API, so developers can provide an advisory token target across a full agent loop.

Task budgets are not a hard cap. They act as a token awareness mechanism that helps the model prioritize and finish gracefully as the budget is consumed. For open ended tasks where quality matters more than speed, Anthropic says not to set a task budget.

Some API behaviors break

There are hard migration issues on the Messages API. Extended thinking budgets are removed. Adaptive thinking becomes the only supported thinking-on mode, and it is off by default unless you enable it explicitly. Non-default values for temperature, top_p, or top_k now return a 400 error. Thinking content is omitted by default unless you opt back in.

For teams with mature production stacks, these are not footnotes. They require code changes, testing, and in some cases product level adjustments.
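One low risk way to find affected call sites is a pre-flight check that scans request payloads before they are sent. The sketch below is a plain Python lint over a dictionary, not a call into any SDK. It assumes requests are built as dicts, that thinking budgets appear as a `budget_tokens` field under `thinking` (the shape used with earlier models), and it conservatively flags any explicitly set sampling parameter rather than trying to know each default. The `migration_warnings` helper is hypothetical.

```python
from typing import Any

# Sampling parameters the article says now reject non-default values.
SAMPLING_KEYS = ("temperature", "top_p", "top_k")


def migration_warnings(request: dict[str, Any]) -> list[str]:
    """Return human-readable warnings for settings that may break on Opus 4.7."""
    warnings = []
    for key in SAMPLING_KEYS:
        if key in request:
            warnings.append(
                f"'{key}' is set explicitly; non-default values may return a 400"
            )
    thinking = request.get("thinking")
    # Assumed payload shape: {"thinking": {"type": ..., "budget_tokens": N}}.
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        warnings.append("'thinking.budget_tokens' is set; thinking budgets are removed")
    return warnings


# Hypothetical legacy request carried over from an Opus 4.6 integration.
legacy_request = {
    "max_tokens": 1024,
    "temperature": 0.2,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
}

for warning in migration_warnings(legacy_request):
    print("WARN:", warning)
```

Running a check like this in CI or against logged requests gives a list of code paths to fix before any traffic is switched over.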

Is Opus 4.7 better at everything?

No. The improvement pattern is broad, but not universal. VentureBeat notes that competitors still lead in some categories such as agentic search and multilingual Q&A, and its summary argues that Opus 4.7 is best understood as a specialized model optimized for reliability and long horizon autonomy rather than an across the board winner.

Anthropic’s own material also frames Opus 4.7 as less broadly capable than Claude Mythos Preview. Safety results are mixed but broadly similar to Opus 4.6. Anthropic reports low rates of concerning behavior overall, with improvements in honesty and resistance to malicious prompt injection, but modest weakness on some other measures.

There is also at least one practical caution in benchmark interpretation. A better score does not always justify a full migration if your existing prompts, tools, and agent harnesses are already stable. The more deeply integrated your current system is, the more the prompt literalism and tokenization changes matter.

Who should upgrade first

Strong candidates for immediate testing include teams doing:

- complex coding and debugging

- long running agent workflows

- finance analysis and professional document work

- slide, spreadsheet, and contract review tasks

- vision heavy workflows with dense screenshots or diagrams

- multi session agent systems that rely on external notes or scratchpads

Teams that should migrate more carefully include those with:

- fragile legacy prompts that rely on inference rather than explicit instructions

- tight token budgets and highly optimized cost assumptions

- API integrations that use removed sampling or thinking settings

- orchestration layers tuned to the tool use habits of Opus 4.6