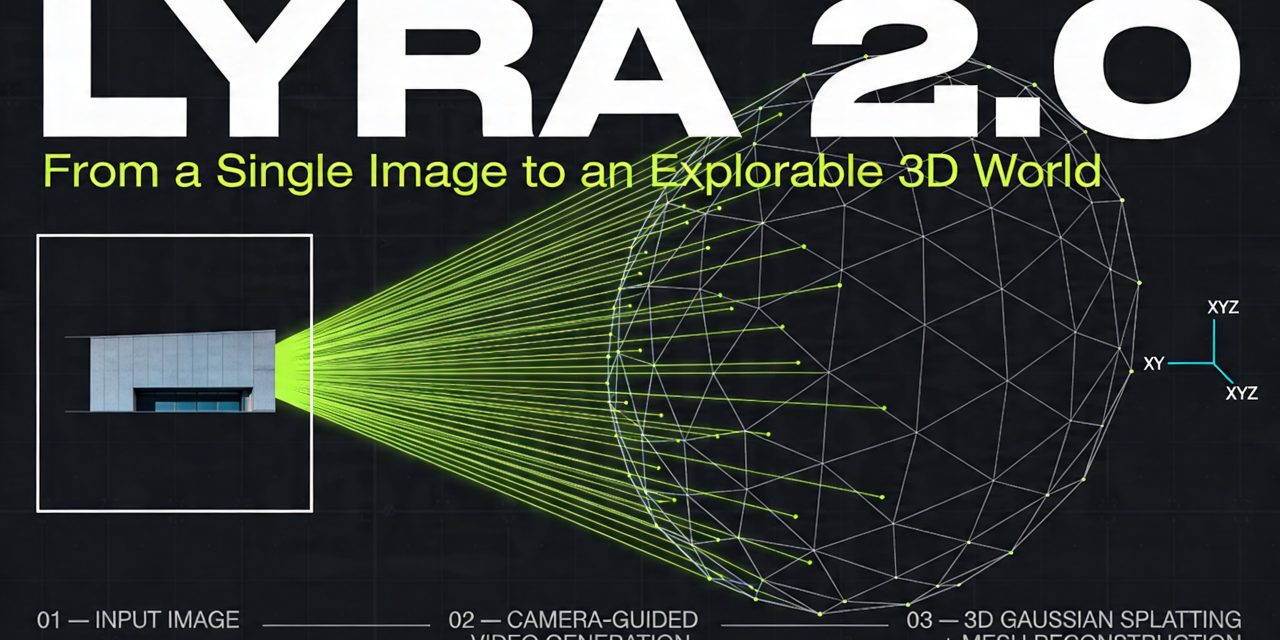

Lyra 2.0 is Nvidia’s generative reconstruction system for building large, explorable 3D environments from a single image. The core idea is straightforward. Instead of trying to generate a full 3D world directly, the system first generates a long, camera-controlled video that behaves like a walkthrough. It then lifts that video into explicit 3D representations, such as 3D Gaussian Splatting and meshes, for real-time rendering and simulation.

That framing matters because Lyra 2.0 sits at the intersection of video generation, 3D reconstruction and simulation. Nvidia positions it as a way to create coherent virtual spaces for immersive rendering and embodied AI. In the paper, the researchers also show export to Isaac Sim. A second report describes generated scenes with an extent of roughly 90 meters from a single photo.

The technical contribution is narrower and more important than the headline. Lyra 2.0 tries to solve two persistent weaknesses in long-horizon scene generation. The first is spatial forgetting. The second is temporal drifting. The system’s architecture revolves around those two failure modes.

What Lyra 2.0 is

Lyra 2.0 is a framework for camera-controlled long-video generation with 3D persistence. It starts from one input image and a user-defined camera trajectory. The model generates video segments autoregressively, meaning it extends the sequence chunk by chunk. As it does so, it stores geometry estimates for generated frames, retrieves relevant historical views when needed, and uses those views to keep the scene coherent when the camera revisits earlier regions or moves into new ones.

After video generation, Lyra 2.0 reconstructs the sequence into explicit 3D outputs. Nvidia uses a feed-forward 3D Gaussian Splatting pipeline fine-tuned on generated sequences so that it better tolerates the small inconsistencies that generative models still produce. It also extracts a surface mesh through a hierarchical sparse-grid approach.

In practice, that means Lyra 2.0 is not just a video model and not just a 3D model. It is a pipeline with three linked stages:

- Generate a long, camera-guided video from one image

- Maintain consistency with geometry-aware memory and drift mitigation

- Reconstruct the output into 3D Gaussians and meshes

Why long-horizon 3D generation breaks down

Most camera controlled video models can maintain local consistency between nearby frames. Problems emerge when exploration becomes long, nonlinear and revisits earlier places. That is the problem Lyra 2.0 tries to solve.

Spatial forgetting

Video models work with a limited temporal context window. Once an earlier region falls outside that window, the model loses direct access to it. If the camera later returns to that location, the model often reconstructs it from scratch. The result is a mismatch in geometry, layout or texture. A hallway changes shape. A facade shifts. Objects move or disappear.

Earlier approaches tackled this either by keeping old frames in memory or by accumulating a global 3D representation and rendering it back into the model as guidance. Nvidia argues both routes have limits. Pure frame memory lacks robust geometric grounding under large viewpoint changes. Global 3D memory creates a different problem: errors in generated frames contaminate the reconstructed geometry, and that corrupted geometry then feeds new errors back into future generation.

Temporal drifting

Autoregressive generation accumulates small mistakes. Minor blur, color shifts or geometric distortions compound across segments. Over time, the scene drifts away from the original image and from its own earlier state. A long exploration can end with strong degradation even if the first frames look convincing.

Nvidia identifies a training mismatch as the root cause. During training, the model sees clean history frames. During inference, it conditions on its own imperfect outputs. That difference makes the model brittle once generation runs for hundreds of frames.

How Lyra 2.0 works

Lyra 2.0 uses a retrieve-generate-update loop. The user provides a camera trajectory and, optionally, a text prompt for outpainting. The system then retrieves relevant history frames, generates the next segment, and updates memory with the newly generated content.
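The loop can be sketched as a short driver function. This is a minimal, hypothetical sketch: `explore`, `retrieve`, `generate` and `estimate_geometry` are illustrative names rather than Nvidia's API, the optional text prompt is omitted, and frames are stand-in strings so that only the control flow is shown.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SceneState:
    frames: List[str] = field(default_factory=list)  # generated frames (stand-ins)
    memory: List[str] = field(default_factory=list)  # per-frame geometry entries (stand-ins)


def explore(
    input_image: str,
    trajectory_chunks: List[List[str]],
    retrieve: Callable[[SceneState, List[str]], List[str]],
    generate: Callable[[List[str], List[str], List[str]], List[str]],
    estimate_geometry: Callable[[str], str],
) -> SceneState:
    """Hypothetical retrieve-generate-update loop over camera-trajectory chunks."""
    state = SceneState(frames=[input_image], memory=[estimate_geometry(input_image)])
    for cameras in trajectory_chunks:
        history = retrieve(state, cameras)                     # 1. retrieve relevant history
        new_frames = generate(state.frames, history, cameras)  # 2. generate the next segment
        state.frames.extend(new_frames)                        # 3. update with new content
        state.memory.extend(estimate_geometry(f) for f in new_frames)
    return state


# Toy usage with stub components
state = explore(
    "input.png",
    [["cam0", "cam1"], ["cam2"]],
    retrieve=lambda s, cams: s.frames[-1:],
    generate=lambda frames, hist, cams: [f"frame@{c}" for c in cams],
    estimate_geometry=lambda f: f"depth({f})",
)
print(state.frames)  # ['input.png', 'frame@cam0', 'frame@cam1', 'frame@cam2']
```

Keeping retrieval, generation and geometry estimation as separate callables mirrors the separation the article describes: geometry only decides what gets passed to the generator.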

The paper describes two central mechanisms inside that loop, which Nvidia calls anti-forgetting and anti-drifting.

1. Anti-forgetting through per-frame 3D memory

Lyra 2.0 stores geometry for each generated frame in a growing 3D cache. Each cache entry includes the full-resolution depth map, the camera parameters and a downsampled point cloud created by unprojecting depth into world coordinates.

The design choice is critical. Lyra 2.0 does not fuse all history into one global point cloud; it keeps geometry per frame. Nvidia’s argument is that depth estimates on generated frames degrade over long runs. If you merge them all into one global structure, misalignments accumulate and corrupt the memory. Per-frame storage contains that damage.
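A per-frame cache entry and the depth unprojection it relies on might look like the sketch below. It assumes a pinhole camera with intrinsics `K`, a 4×4 camera-to-world pose, and a pixel stride of 8 as one reading of the paper's subsampling factor of 8; `FrameEntry`, `FrameCache` and `unproject` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List

import numpy as np


@dataclass
class FrameEntry:
    depth: np.ndarray         # (H, W) full-resolution depth map
    K: np.ndarray             # (3, 3) pinhole intrinsics (assumed camera model)
    cam_to_world: np.ndarray  # (4, 4) camera-to-world pose
    points: np.ndarray        # (N, 3) downsampled world-space point cloud


def unproject(depth: np.ndarray, K: np.ndarray, cam_to_world: np.ndarray, stride: int = 8) -> np.ndarray:
    """Lift every `stride`-th pixel into world coordinates (stride 8 is an assumed reading)."""
    H, W = depth.shape
    v, u = np.mgrid[0:H:stride, 0:W:stride]
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).reshape(-1, 3).astype(float)
    cam = (pix @ np.linalg.inv(K).T) * depth[v, u].reshape(-1, 1)  # camera-space points
    homog = np.concatenate([cam, np.ones((len(cam), 1))], axis=1)
    return (homog @ cam_to_world.T)[:, :3]                        # world-space points


class FrameCache:
    """Growing per-frame cache: entries are appended, never fused into one global cloud."""

    def __init__(self) -> None:
        self.entries: List[FrameEntry] = []

    def add(self, depth, K, cam_to_world, stride: int = 8) -> None:
        points = unproject(depth, K, cam_to_world, stride)
        self.entries.append(FrameEntry(depth, K, cam_to_world, points))
```

Because each entry keeps its own points, a bad depth map in one frame only affects that frame's entry; nothing downstream averages it into earlier geometry.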

This geometry cache is used only for information routing. That phrase captures the main architectural distinction. Lyra 2.0 does not use noisy 3D geometry to directly render future RGB content. It uses geometry to answer two narrower questions:

- Which historical frames are most relevant for the next target view?

- How do pixels and structures in those frames correspond geometrically to the next view?

That separation matters because it reduces error amplification. Geometry guides retrieval and alignment. The video model still synthesizes appearance using its learned generative prior.

2. Geometry-aware retrieval

At each autoregressive step, Lyra 2.0 retrieves the history frames whose 3D content is most visible from the target camera viewpoint. It projects the stored downsampled point clouds into the target image plane, computes visibility with occlusion handling, and assigns each historical frame a visibility score.

During inference, the system greedily selects frames that cover the most not-yet-covered target pixels. The goal is spatial coverage, not just similarity. That helps when the camera revisits a place hundreds of frames later: even if those frames are far outside the temporal context window, retrieval can still bring them back through 3D overlap.

In the implementation details, Nvidia sets the number of retrieved spatial memory frames to 5. The point cloud subsampling factor is 8, and the visibility threshold is 0.1 in normalized depth units.
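A minimal sketch of coverage-driven greedy selection follows. The single z-buffer built from all projected history points, the relative-depth reading of the 0.1 threshold, the shared intrinsics `K`, and the names `project` and `retrieve` are assumptions for illustration; the paper's exact visibility computation may differ.

```python
import numpy as np


def project(points, K, world_to_cam, H, W):
    """Project world points into a target view; return flat pixel indices and depths."""
    homog = np.concatenate([points, np.ones((len(points), 1))], axis=1)
    cam = (homog @ world_to_cam.T)[:, :3]
    cam = cam[cam[:, 2] > 1e-6]                      # keep points in front of the camera
    uvw = cam @ K.T
    u = np.rint(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.rint(uvw[:, 1] / uvw[:, 2]).astype(int)
    inside = (u >= 0) & (u < W) & (v >= 0) & (v < H)
    return v[inside] * W + u[inside], cam[inside, 2]


def retrieve(clouds, K, world_to_cam, H, W, k=5, thresh=0.1):
    """Greedily pick up to k history frames that cover the most new target pixels."""
    projected = [project(p, K, world_to_cam, H, W) for p in clouds]
    zbuf = np.full(H * W, np.inf)
    for idx, z in projected:
        np.minimum.at(zbuf, idx, z)                  # nearest surface per target pixel
    coverage = []
    for idx, z in projected:
        # Occlusion test (assumed form): visible if within 10% of the nearest depth
        visible = (z - zbuf[idx]) / zbuf[idx] < thresh
        coverage.append(set(idx[visible].tolist()))
    selected, covered = [], set()
    for _ in range(min(k, len(coverage))):
        gains = [-1 if i in selected else len(c - covered) for i, c in enumerate(coverage)]
        best = int(np.argmax(gains))
        if gains[best] <= 0:
            break                                    # nothing new left to cover
        selected.append(best)
        covered |= coverage[best]
    return selected
```

Scoring by newly covered pixels, rather than raw overlap, is what stops the selector from spending its five slots on near-duplicate views of the same region.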

3. Dense correspondences without warped RGB artifacts

Retrieval alone is not enough. The model also needs to know how old views align with the new target view. Lyra 2.0 therefore establishes dense correspondences via canonical coordinate warping.

Instead of warping RGB history images into the new viewpoint, the system warps canonical coordinate maps and depth. Nvidia gives a practical reason for that choice. Warped RGB carries disocclusion holes, stretching and depth-boundary artifacts. If the generator conditions on those images, it tends to copy the artifacts. Canonical coordinates keep geometric correspondence but remove appearance content. That lets the diffusion model synthesize pixels itself rather than inheriting hard rendering defects.

The video model therefore sees three complementary signals at once:

- Retrieved history frames as spatial memory slots

- Warped canonical coordinate and depth maps for correspondence

- Compressed temporal history from recent video generation
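To make the canonical-coordinate idea concrete, the sketch below forward-warps a history frame's coordinate map and depth rather than its colours. It is a minimal sketch under stated assumptions: world-space XYZ stands in for the paper's canonical coordinates, both views share one pinhole intrinsic matrix `K`, and `warp_coordinates` is a hypothetical name. Target pixels that receive no point come back flagged invalid instead of being filled with stretched RGB.

```python
import numpy as np


def warp_coordinates(depth, K, src_to_world, world_to_tgt, H, W):
    """Forward-warp a history frame's coordinate map and depth into a target view."""
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).reshape(-1, 3).astype(float)
    cam = (pix @ np.linalg.inv(K).T) * depth.reshape(-1, 1)
    ones = np.ones((len(cam), 1))
    # Assumption: world-space XYZ is used as the "canonical" coordinate
    world = (np.concatenate([cam, ones], axis=1) @ src_to_world.T)[:, :3]
    tgt = (np.concatenate([world, ones], axis=1) @ world_to_tgt.T)[:, :3]

    front = tgt[:, 2] > 1e-6
    world, tgt = world[front], tgt[front]
    uvw = tgt @ K.T
    tu = np.rint(uvw[:, 0] / uvw[:, 2]).astype(int)
    tv = np.rint(uvw[:, 1] / uvw[:, 2]).astype(int)
    ok = (tu >= 0) & (tu < W) & (tv >= 0) & (tv < H)
    flat, z, xyz = tv[ok] * W + tu[ok], tgt[ok, 2], world[ok]

    zbuf = np.full(H * W, np.inf)
    np.minimum.at(zbuf, flat, z)          # z-buffer: nearest point per target pixel
    win = z <= zbuf[flat]                 # points that survive the depth test
    coords = np.zeros((H * W, 3))
    coords[flat[win]] = xyz[win]          # coordinates only; no RGB is carried over
    valid = np.isfinite(zbuf)             # False marks disocclusion holes
    zmap = np.where(valid, zbuf, 0.0)
    return coords.reshape(H, W, 3), zmap.reshape(H, W), valid.reshape(H, W)
```

Holes stay explicit in the `valid` mask, so a generator conditioned on this output has nothing to copy in those regions and must synthesize them.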

4. Anti-drifting through context compression and self-augmentation

To reduce temporal drift, Lyra 2.0 combines a long-history mechanism with a training scheme that exposes the model to its own errors.

For history compression, Nvidia adopts FramePack. The idea is to compress older frames more aggressively than recent ones so the model can attend to a longer temporal horizon within a fixed token budget. The initial input frame remains in the context at full resolution as an anchor. That anchor helps keep appearance tied to the original scene.
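Why compression keeps the context bounded can be shown with a toy token budget. The halving-per-frame schedule, the `full_tokens` value, dropping frames once they reach zero tokens, and the function names are assumptions for illustration, not FramePack's or Nvidia's actual settings.

```python
from typing import List


def history_budget(num_history: int, full_tokens: int) -> List[int]:
    """Tokens per history frame, most recent first; each older frame gets half as many."""
    budget = [full_tokens >> age for age in range(num_history)]  # assumed geometric halving
    return [b for b in budget if b > 0]                          # frames at 0 tokens are dropped


def context_tokens(num_history: int, full_tokens: int) -> int:
    """Anchor frame kept at full resolution plus the compressed history."""
    return full_tokens + sum(history_budget(num_history, full_tokens))


for n in (3, 10, 100, 1000):
    print(n, context_tokens(n, full_tokens=1024))
```

This prints 2816, 3070, 3071 and 3071: under a geometric schedule, the total never exceeds three full frames' worth of tokens no matter how long the history grows, while the anchor frame stays uncompressed.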

FramePack extends context, but it does not close the train-inference gap. Lyra 2.0 addresses that with self-augmentation training. With probability 0.7, the system corrupts history latents during training, performs one denoising step, and then feeds the partially degraded result back as conditioning context. The target remains the clean latent for the next chunk. That forces the model to learn recovery rather than simple continuation.

In effect, Lyra 2.0 trains on the kind of imperfect context it will actually face at inference time. Nvidia reports that removing self-augmentation improves per-frame subjective quality but hurts long-range consistency, style consistency and camera controllability. That tradeoff is one of the more useful findings in the paper. It suggests that long-horizon stability requires training for robustness, not just for local image quality.
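A minimal sketch of the corruption step is below. It assumes a rectified-flow parameterization (x_t = (1 - t)·x + t·ε, with the network predicting the velocity ε - x) and a uniform noise level up to a hypothetical `t_max`; only the 0.7 probability, the single denoising step and the clean target come from the description above. The function names are illustrative.

```python
from typing import Callable

import numpy as np


def self_augment_history(
    history: np.ndarray,
    velocity_model: Callable[[np.ndarray, float], np.ndarray],
    rng: np.random.Generator,
    p: float = 0.7,
    t_max: float = 0.5,   # hypothetical upper bound on the corruption noise level
) -> np.ndarray:
    """Return history latents to condition on, degraded with probability p."""
    if rng.random() >= p:
        return history                                  # clean history (probability 1 - p)
    t = rng.uniform(0.0, t_max)                         # assumed noise-level distribution
    noise = rng.standard_normal(history.shape)
    noisy = (1.0 - t) * history + t * noise             # flow-matching interpolation
    v = velocity_model(noisy, t)                        # model predicts noise - clean
    return noisy - t * v                                # one-step estimate of the clean latent


def make_training_example(history, target, velocity_model, rng):
    """Condition on possibly degraded history; the regression target stays clean."""
    return self_augment_history(history, velocity_model, rng), target
```

A perfect velocity model would make the one-step estimate equal the clean history; an imperfect one leaves exactly the kind of residual error the model will meet when conditioning on its own outputs.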

The generative backbone under the hood

Lyra 2.0 builds on the Wan 2.1 14B DiT video diffusion backbone. Videos are encoded by a causal VAE into a latent space with 8× spatial and 4× temporal downsampling and a latent channel dimension of 16. Training and inference run at 832 × 480 resolution.
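The downsampling factors translate into concrete tensor sizes. The sketch assumes Wan-style causal temporal compression, where the first frame is encoded on its own so that T' = 1 + (T - 1) / 4; the function name and the 81-frame example are illustrative, not taken from the paper.

```python
def latent_shape(num_frames: int, height: int = 480, width: int = 832,
                 channels: int = 16, spatial: int = 8, temporal: int = 4):
    """(C, T', H', W') latent shape for a causal video VAE."""
    # Assumption: the first frame is encoded separately and the remaining
    # frames are compressed `temporal`x, so T' = 1 + (T - 1) // temporal.
    if height % spatial or width % spatial:
        raise ValueError("resolution must be divisible by the spatial factor")
    return (channels, 1 + (num_frames - 1) // temporal, height // spatial, width // spatial)


print(latent_shape(81))  # (16, 21, 60, 104)
pixels_per_frame = 3 * 480 * 832                   # 1,198,080 RGB values
latents_per_frame = 16 * (480 // 8) * (832 // 8)   # 99,840 latent values
print(pixels_per_frame / latents_per_frame)        # 12.0
```

Per frame, the diffusion model therefore works with 12× fewer values than raw RGB, on top of the 4× temporal reduction.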

Generation uses a DiT-based latent video diffusion setup with flow matching. The model generates fixed-length segments autoregressively. Camera control enters through two modules:

- Depth-warped conditioning, where the most recent frame is forward-warped to target viewpoints using estimated depth and then concatenated with the denoising latent

- Plücker ray injection, where 6D ray coordinates provide per pixel camera information and are injected into transformer attention

Nvidia says the warped depth mechanism already gives strong camera control in Wan 2.1. The Plücker ray signal acts as a backup when viewpoint changes are so large that warped pixels no longer land on the target view.
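Plücker coordinates describe each pixel's viewing ray as a unit direction d and a moment o × d, where o is the camera center, so the encoding does not depend on where along the ray a point lies. The sketch below assumes a pinhole camera and this common (d, o × d) convention; the exact normalization and channel order used in Lyra 2.0 are not specified here, and `plucker_map` is a hypothetical helper.

```python
import numpy as np


def plucker_map(K: np.ndarray, cam_to_world: np.ndarray, H: int, W: int) -> np.ndarray:
    """Per-pixel 6D Plücker ray coordinates (d, o x d) for an assumed pinhole camera."""
    v, u = np.mgrid[0:H, 0:W]
    pix = np.stack([u + 0.5, v + 0.5, np.ones((H, W))], axis=-1)  # pixel centers
    d = pix @ np.linalg.inv(K).T @ cam_to_world[:3, :3].T         # world-space ray directions
    d = d / np.linalg.norm(d, axis=-1, keepdims=True)             # unit directions
    o = cam_to_world[:3, 3]                                       # camera center
    m = np.cross(o, d)                                            # moment, same for every point on the ray
    return np.concatenate([d, m], axis=-1)                        # (H, W, 6)
```

Unlike warped pixels, this signal exists for every target pixel regardless of how far the camera moves, which is consistent with its role as a fallback for large viewpoint changes.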

How Lyra 2.0 turns video into 3D

Generated videos rarely have perfect multi-view consistency. Traditional reconstruction pipelines can fail on these imperfections and create floaters or noisy structures. Lyra 2.0 addresses that with a feed-forward 3D Gaussian Splatting model based on Depth Anything v3.

Nvidia modifies the Gaussian prediction head to reduce the number of output Gaussians by a factor of 4, using a downsampling factor of 2 along each spatial axis. That makes the representation more compact for real-time rendering and streaming. The company also fine-tunes the reconstruction model on generated scenes: for the fine-tuning set, Nvidia autoregressively generates 3,000 one-minute videos.
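The factor of 4 follows from downsampling by 2 along both image axes. The sketch assumes the head emits one Gaussian per (downsampled) pixel at the 832 × 480 working resolution; that per-pixel layout is an assumption, not a documented detail of the modified head.

```python
def gaussians_per_frame(height: int, width: int, downsample: int = 1) -> int:
    """Gaussians per input frame, assuming a pixel-aligned prediction head."""
    return (height // downsample) * (width // downsample)


full = gaussians_per_frame(480, 832)                   # 399,360
compact = gaussians_per_frame(480, 832, downsample=2)  # 99,840
print(full // compact)                                 # 4
```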

After the 3D Gaussian representation is built, the pipeline extracts a mesh through a hierarchical sparse grid approach based on OpenVDB and related tooling. Fine cells cover areas near generation viewpoints, while coarse cells model distant background. Marching cubes then produces a surface mesh for downstream use.
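The level-of-detail rule can be sketched as a sparse set of occupied cells whose size grows with distance from the nearest generation viewpoint. This stands in for OpenVDB, which the sketch does not use; the doubling-per-distance-band schedule, the `base` and `near` values and the function names are all hypothetical. Marching cubes would then run over the occupied cells at each level.

```python
import numpy as np


def lod_levels(points, cam_centers, near=5.0, max_level=4):
    """Level 0 within `near` of a viewpoint; +1 per doubling of distance (assumed schedule)."""
    dists = np.linalg.norm(points[:, None, :] - cam_centers[None, :, :], axis=-1).min(axis=1)
    levels = np.ceil(np.log2(np.maximum(dists / near, 1.0)))
    return np.clip(levels, 0, max_level).astype(int)


def sparse_cells(points, cam_centers, base=0.02, near=5.0, max_level=4):
    """Occupied cells as (level, i, j, k) keys; cell size doubles with each level."""
    levels = lod_levels(points, cam_centers, near, max_level)
    sizes = base * 2.0 ** levels
    ijk = np.floor(points / sizes[:, None]).astype(int)
    return {(int(l), *map(int, c)) for l, c in zip(levels, ijk)}
```

Storing only occupied cells keeps memory proportional to surface area rather than scene volume, which matters for scenes tens of meters across.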

What the system can do in practice

Lyra 2.0 supports interactive scene exploration through a GUI. Users can inspect accumulated point clouds, define trajectories that revisit old regions or push into unseen space, and expand the scene progressively. That interaction model is a key part of the system. The scene is not generated in one shot. It grows through iterative exploration.

The outputs can be exported as 3D Gaussian Splatting models and meshes. Nvidia demonstrates import into Isaac Sim for robot navigation and interaction tasks. The practical implication is clear. If generated scenes become coherent enough, synthetic world generation could supplement or reduce real-world 3D capture for embodied AI training.

The current scope remains narrower than the use case suggests. Lyra 2.0 focuses on static scenes. It does not explicitly model dynamic environments.

How fast is Lyra 2.0

Nvidia reports that each autoregressive step covers 80 frames. On a single NVIDIA GB200 GPU, the full model takes about 194 seconds per step, including depth estimation, spatial memory retrieval and denoising. A distilled version reduces that to about 15 seconds per step.

The speedup comes from Distribution Matching Distillation. The student model generates in 4 denoising steps instead of 35 and removes the need for separate classifier-free guidance passes. Nvidia states that this cuts per-step generation time by roughly 13 times while maintaining comparable visual quality, though camera controllability drops moderately.
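The reported figures can be checked with a few lines of arithmetic. The only assumption is that classifier-free guidance costs two network passes per denoising step in the teacher.

```python
frames_per_step = 80
full_s, distilled_s = 194.0, 15.0   # reported seconds per autoregressive step on one GB200

print(round(full_s / distilled_s, 1))   # 12.9, the "roughly 13 times"
print(full_s / frames_per_step)         # 2.425 s per generated frame (full model)
print(distilled_s / frames_per_step)    # 0.1875 s per generated frame (distilled)

# Assumption: classifier-free guidance doubles the passes per denoising step
teacher_passes = 35 * 2
student_passes = 4 * 1
print(teacher_passes / student_passes)  # 17.5
```

The pass count alone would predict a larger speedup than the measured 12.9×. One plausible reason, not confirmed by the source, is that depth estimation and memory retrieval, which are included in the 194-second figure, do not shrink with fewer denoising steps.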

How Lyra 2.0 performs against other methods

Nvidia evaluates Lyra 2.0 against six long-video generation baselines: GEN3C, Yume 1.5, CaM, VMem, SPMem and HY WorldPlay. Testing uses DL3DV Evaluation for in-domain performance and Tanks and Temples for out-of-domain generalization.

The paper reports that Lyra 2.0 achieves the best results on both datasets across nearly all metrics. Those include standard image and video measures such as SSIM, LPIPS and FID, but also longer-horizon metrics such as subjective quality, style consistency, camera controllability and reprojection error.

The comparison also clarifies the design tradeoffs in competing approaches:

- GEN3C achieves strong camera controllability and reprojection error through explicit depth-warped conditioning, but loses image quality

- CaM and SPMem score well on quality but fall short on camera controllability

- SPMem suffers from stronger drift due to global point cloud conditioning

- VMem struggles across most long-horizon metrics

- Yume 1.5 and HY WorldPlay lack explicit trajectory conditioning and fail to follow specified viewpoints reliably

That pattern supports Nvidia’s main claim. Lyra 2.0 tries to combine visual fidelity with accurate camera control by separating geometry routing from pixel synthesis and by training against drift.

What matters most about Lyra 2.0

The most consequential idea in Lyra 2.0 is not that it generates 3D from one image. Several systems now move in that direction. The sharper contribution is the decoupling of geometric memory from appearance generation.

Instead of trusting reconstructed geometry as a direct rendering source, Lyra 2.0 treats geometry as a retrieval and alignment layer. That limits the damage from imperfect depth. At the same time, self augmentation acknowledges a basic fact about autoregressive generation. If a model will consume its own outputs at inference time, training on only clean history is an avoidable mistake.

Together, those decisions make Lyra 2.0 less a standalone model than a systems-level answer to long-horizon consistency.

Limits and open questions

The paper also makes clear where Lyra 2.0 still falls short. The system focuses on static environments. Photometric instability in the training data can also carry over into generated scenes and then into reconstruction artifacts. Nvidia notes that exposure variation in the DL3DV dataset can affect consistency.

There is also a practical boundary around compute. Training uses a batch size of 64 across 64 NVIDIA GB200 GPUs for 7,000 iterations. That makes Lyra 2.0 a research scale system rather than a lightweight deployment recipe.
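The reported training configuration works out as follows, assuming 64 is the global batch size split evenly across the GPUs with no gradient accumulation.

```python
global_batch, num_gpus, iterations = 64, 64, 7_000

per_gpu_batch = global_batch // num_gpus   # 1 training sequence per GPU per iteration
samples_seen = global_batch * iterations   # 448,000 sequences over the whole run
print(per_gpu_batch, samples_seen)         # 1 448000
```

One sequence per GPU reflects how memory-heavy a 14B video backbone at 832 × 480 is, which reinforces the point that this is research-scale compute.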