GPT Rosalind is OpenAI’s new domain-specific reasoning model for biology, drug discovery, and translational medicine. OpenAI positions it as a research model for scientific workflows rather than a general chatbot for broad public use. GPT Rosalind is built to synthesize evidence, generate biological hypotheses, plan experiments, and use scientific tools across multi-step workflows. Access is restricted to qualified enterprise research organizations, starting in the United States, under a trusted access program.

What GPT Rosalind is

OpenAI describes GPT Rosalind as a frontier reasoning model built for research across biology, drug discovery, and translational medicine. It is the first release in a broader life sciences model series. The core claim is that it handles scientific reasoning tasks more effectively than mainline general models in workflows that combine literature, databases, tools, sequence analysis, and experimental design.

OpenAI frames the model around a structural problem in biopharma R&D. Drug development typically takes 10 to 15 years from target discovery to regulatory approval in the United States. Early-stage gains matter disproportionately because they shape target selection, biological hypotheses, and downstream experiments. OpenAI argues that current scientific workflows remain fragmented and difficult to scale: researchers move between literature, public databases, lab tools, sequence resources, and experimental outputs. GPT Rosalind is meant to act as an intelligence layer across that stack.

The name is deliberate. OpenAI says the model is named after Rosalind Franklin, whose work helped reveal the structure of DNA and laid foundations for molecular biology.

How GPT Rosalind differs from a general LLM

The difference is not only fine-tuning; it is also workflow design. OpenAI says GPT Rosalind is optimized for long-horizon, tool-heavy research tasks. That includes:

- Evidence synthesis across scientific literature and databases

- Hypothesis generation in biology and chemistry

- Experimental planning and follow-up design

- Sequence-to-function reasoning in genomics and RNA work

- Tool use across public multi-omics and scientific resources

That framing matters because much of the current public debate around AI in health still centers on consumer chatbots. The use case here is different. A Nature Health study of public health queries in Microsoft Copilot analyzed 617,827 health-related conversations and showed how generalist systems are used for symptom checks, emotional well-being, paperwork, and research support. The largest category, Health Information and Education, accounted for 40.8% of conversations. Nearly one in five involved personal symptom assessment or discussion of a condition. On mobile, symptom questions reached 15.9% of conversations, versus 6.9% on desktop.

That study underlines the breadth of public health use, but also its safety complexity. GPT Rosalind sits elsewhere in the stack. It is not positioned as a broad public health assistant; it is built for governed scientific research.

What OpenAI says the model can do

OpenAI makes four capability claims.

Deeper reasoning across biology and chemistry

The model is optimized for work involving molecules, proteins, genes, pathways, and disease-relevant biology. OpenAI highlights chemistry, protein engineering, genomics, and biochemistry as core domains.

Better support for multi step scientific workflows

OpenAI says GPT Rosalind performs better when a task chains literature review, sequence interpretation, tool selection, database access, experimental planning, and data analysis together, rather than treating them as isolated prompts.

Stronger tool use

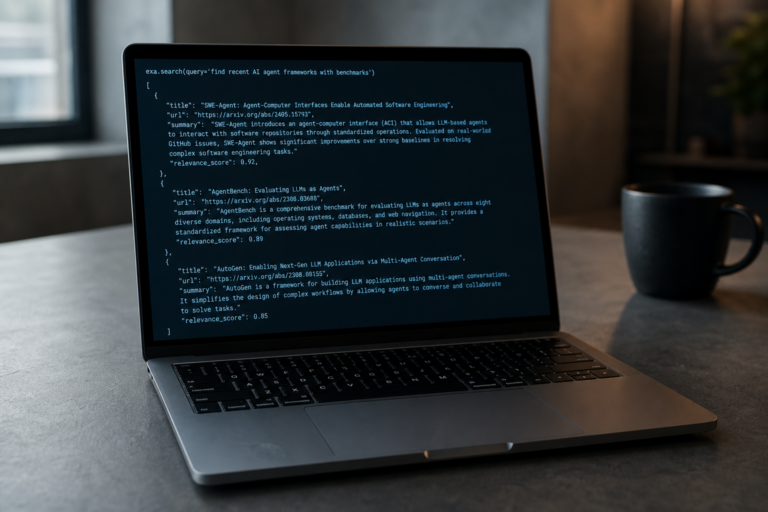

The company pairs the model with a new Life Sciences research plugin for Codex. The plugin is available on GitHub and connects models to more than 50 public scientific tools and data sources. OpenAI describes it as an orchestration layer for research tasks such as protein structure lookup, sequence search, literature review, and public dataset discovery.

Higher performance on selected scientific benchmarks

OpenAI reports that GPT Rosalind achieved the leading published performance on BixBench, a benchmark focused on real-world bioinformatics and data analysis. The company also says the model outperformed GPT-5.4 on 6 of 11 tasks in LABBench2, with the largest gain on CloningQA, a task centered on end-to-end reagent design for molecular cloning protocols.

What the evaluation data shows

The strongest performance signal in OpenAI’s release does not come from a public leaderboard alone. It comes from an industry evaluation with Dyno Therapeutics. OpenAI says GPT Rosalind was tested on RNA sequence-to-function prediction and generation using unpublished, uncontaminated sequences. That point is critical because contamination from public training data remains a persistent problem in model evaluation: if test items appeared in the training data, a model can score well by recall rather than by reasoning.

In that setup, OpenAI reports that best-of-ten model submissions ranked above the 95th percentile of human experts on the prediction task and around the 84th percentile on the sequence generation task when evaluated in Codex. If those figures hold up under broader external validation, they suggest that GPT Rosalind is not only competent at literature retrieval or summarization, but also competitive on narrow, expert-level sequence tasks.
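To make the "best of ten" framing concrete, here is a minimal sketch of one common way such a comparison can be computed: score only the highest of k model submissions and report the share of human expert scores it exceeds. This is an illustrative assumption, not OpenAI's method; the release does not specify its scoring or percentile convention, and the function names `percentile_rank` and `best_of_k_percentile`, the tie-handling rule, and the scores in the usage example are all hypothetical.

```python
from bisect import bisect_left


def percentile_rank(score: float, reference: list[float]) -> float:
    """Return the percentage of reference scores strictly below `score`.

    Hypothetical convention: ties do not count as "beaten". Other
    conventions (e.g. counting half of ties) give slightly different numbers.
    """
    if not reference:
        raise ValueError("reference scores must not be empty")
    ordered = sorted(reference)
    below = bisect_left(ordered, score)  # index of the first element >= score
    return 100.0 * below / len(ordered)


def best_of_k_percentile(
    model_scores: list[float], human_scores: list[float], k: int = 10
) -> float:
    """Rank only the best of the first k model submissions against the human pool."""
    if len(model_scores) < k:
        raise ValueError(f"expected at least {k} model submissions")
    best = max(model_scores[:k])
    return percentile_rank(best, human_scores)


if __name__ == "__main__":
    # Made-up scores for illustration only; not data from the Dyno Therapeutics evaluation.
    human = list(range(1, 21))  # 20 hypothetical expert scores: 1..20
    model = [3, 7, 12, 19.5, 5, 8, 11, 2, 14, 6]  # 10 hypothetical submissions
    print(best_of_k_percentile(model, human))  # 95.0
```

Because only the maximum of k attempts is ranked, a best-of-k percentile is never lower than the percentile of any single attempt. It measures what the model can reach when a researcher can choose among several candidates, which is worth keeping in mind when reading the 95th and 84th percentile figures.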

The plugin may matter as much as the model

Scientific work often fails to scale not because reasoning is absent, but because context is distributed across too many systems. OpenAI’s Life Sciences research plugin addresses that by bundling modular skills for:

- Human genetics

- Functional genomics

- Protein structure

- Biochemistry

- Clinical evidence

- Public study discovery

For many research teams, that may be the practical entry point. OpenAI says all users can use the plugin package with mainline models, while eligible enterprise users can combine it with GPT Rosalind for deeper biological reasoning. That creates a two-tier structure: the plugin broadens access to life sciences workflows, while the specialized model remains gated.

Who GPT Rosalind is for

GPT Rosalind is for qualified enterprise life sciences organizations operating in controlled research settings. OpenAI explicitly mentions pharmaceutical, biotechnology, research, and life sciences technology organizations. Named collaborators include Amgen, Moderna, Thermo Fisher Scientific, Oracle Health and Life Sciences, NVIDIA, Allen Institute, Benchling, Novo Nordisk, and UCSF School of Pharmacy.

This is for teams that already manage regulated data, structured scientific workflows, and formal governance. It is not meant for consumers seeking health advice, and it is not a general-release model for hobby biology or open experimentation.

OpenAI’s access model also excludes large parts of the market for now. The research preview is available to qualified customers through a trusted access program and starts with Enterprise customers in the U.S. Organizations must pass qualification and safety review. They must demonstrate legitimate scientific research with public benefit, maintain governance and misuse prevention controls, and restrict access to approved users in secure environments.

Why OpenAI is restricting access

The life sciences category has a dual-use problem. Models that help legitimate research can also lower barriers to harmful biological misuse. OpenAI’s deployment model reflects that tension. The company says access decisions follow three principles: beneficial use, strong governance and safety oversight, and controlled access with enterprise-grade security.

For users, that translates into several operational conditions:

- Restricted access to approved users

- Use inside secure, managed environments

- Compliance with preview terms and OpenAI policies

- Potential onboarding and ongoing review requirements

OpenAI also says use during the preview will not consume existing credits or tokens, subject to abuse guardrails. That removes some short-term budget friction for qualified organizations, but it does not change the core reality: access remains scarce by design.

How industry partners are positioning it

OpenAI’s launch leans heavily on ecosystem validation. Amgen’s Sean Bruich says the collaboration could help accelerate medicine delivery. NVIDIA’s Kimberly Powell links domain reasoning with accelerated computing to compress R&D timelines. Moderna CEO Stéphane Bancel points to reasoning across complex biological evidence and translation into experimental workflows. The Allen Institute highlights more consistent and repeatable agentic workflows for tasks like finding and aligning data.

These statements are predictable in tone, but they still indicate where early demand sits. Large organizations want AI that reduces friction in evidence gathering, protocol planning, sequence analysis, and cross tool orchestration. They do not primarily want another general chatbot interface.

OpenAI also points to prior work with Ginkgo Bioworks, where AI models helped achieve a 40% reduction in protein production costs. That figure does not refer specifically to GPT Rosalind, but it supports the broader commercial argument that domain models can produce measurable lab and process gains.

What this means for the AI market

General systems attract broad real-world usage quickly, often in contexts that are personal, ambiguous, and safety-sensitive. Specialized systems, by contrast, can be tuned for narrower tasks, evaluated on domain benchmarks, paired with purpose-built tools, and deployed under stronger access controls.

The tradeoff is clear. Narrower deployment reduces reach and immediate adoption. In exchange, it may increase trust among institutions that care less about open access and more about auditability, security, and reproducibility.