The 2026 AI Index Report from Stanford offers one of the clearest snapshots of the current AI landscape. What makes it useful is not just the volume of data, but the way it connects technical progress with economics, infrastructure, education, policy, and public opinion. If you want to understand where AI stands in 2026, this report is one of the most valuable reference points available.

The big takeaway is straightforward. AI is improving fast, adoption is widening, and the conversation has moved beyond model rankings alone. The real questions now are about who builds AI, who controls the infrastructure, who benefits from it, and whether governance can keep pace.

AI performance is still accelerating

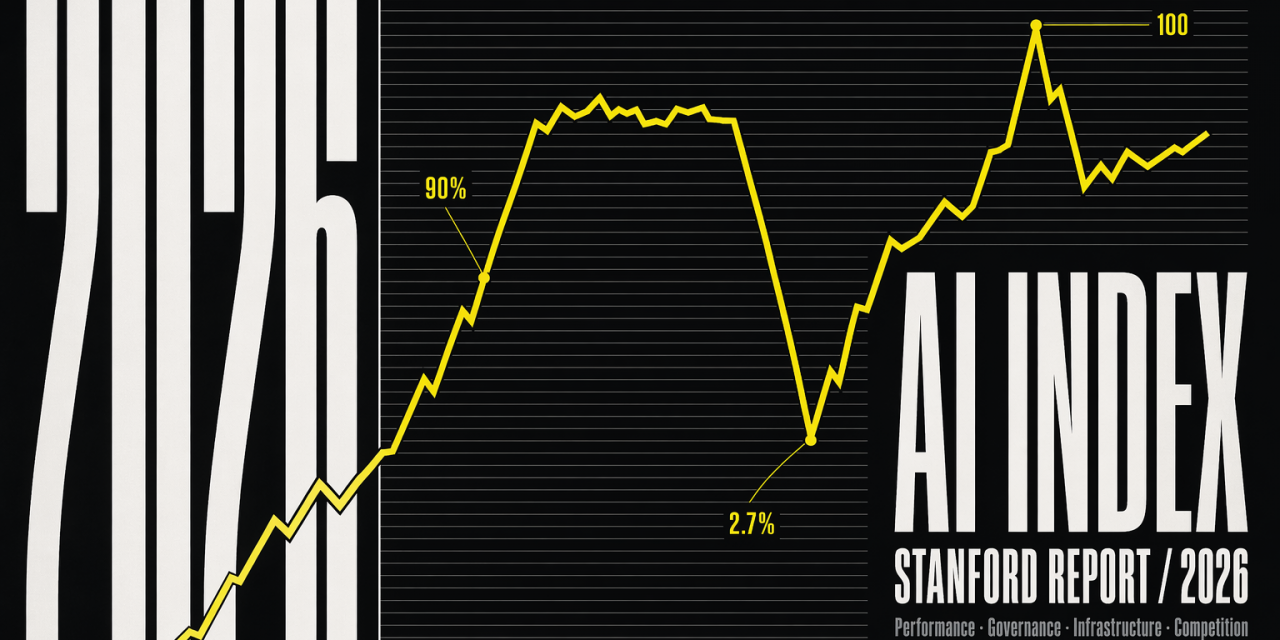

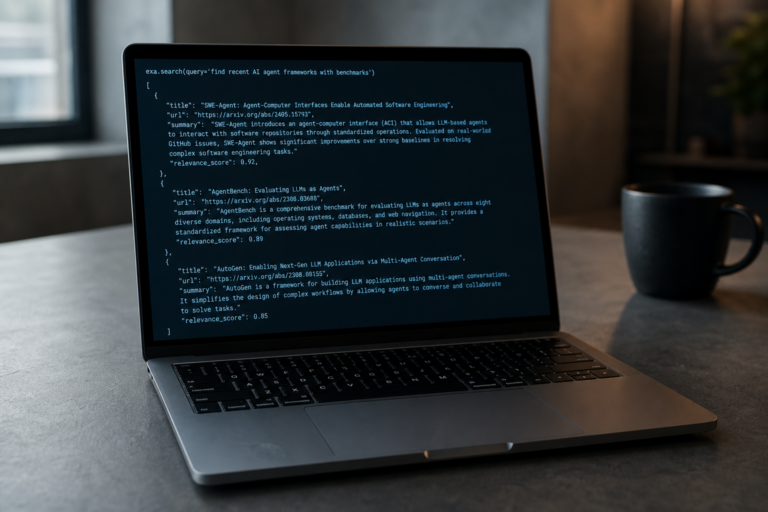

A central message in the 2026 AI Index Report is that frontier AI capability is not flattening out. In 2025, industry produced more than 90% of notable frontier models. Several of those models now reach or exceed human baselines on demanding tasks such as PhD-level science questions, multimodal reasoning, and competition mathematics.

Some benchmark gains are especially striking. On SWE-bench Verified, a key coding benchmark, performance jumped from around 60% to near 100% in a year. That kind of movement matters because it signals that progress is not limited to impressive demos. It is increasingly visible in structured evaluations that test practical capability.

At the same time, the report avoids a simplistic success narrative. AI can achieve a gold-medal-level result at the International Mathematical Olympiad and still fail at something as basic as reliably reading an analog clock. Stanford frames this as the jagged frontier of AI. In other words, top models can be exceptionally strong in some domains and unexpectedly weak in others.

The U.S. and China race is tighter than many expected

Another major finding is that the performance gap between leading U.S. and Chinese models has largely closed. According to the report, the lead has changed hands multiple times since early 2025. By March 2026, Anthropic’s top model reportedly led by just 2.7%.

That does not mean both countries are identical in every dimension. The U.S. still leads in the number of top-tier AI models and in higher-impact patents. China, however, leads in publication volume, citations, patent output, and industrial robot installations. South Korea also stands out with the highest AI patents per capita.

This matters for anyone still viewing AI leadership as a one-country story. The competitive landscape is now more distributed and more dynamic. It is also more sensitive to industrial policy, supply chains, and talent flows than a narrow benchmark comparison would suggest.

AI infrastructure has become a strategic issue

The report highlights a less glamorous but critical layer of the AI stack: infrastructure. The United States hosts 5,427 data centers, far more than any other country. It also consumes more energy than any other country. Yet much of the global AI hardware chain depends on a single manufacturing bottleneck. Almost every leading AI chip is fabricated by TSMC in Taiwan.

That concentration makes AI infrastructure a geopolitical and economic concern, not just a technical one. It also explains why governments are increasingly talking about compute capacity, semiconductor resilience, and AI sovereignty. Model performance depends on hardware access, energy, and supply stability. Without those foundations, AI leadership becomes fragile.

Adoption is spreading faster than formal systems can adapt

The 2026 AI Index Report shows that adoption is moving at historic speed. Generative AI reached 53% population adoption within three years, which is faster than the PC or the internet. Adoption rates differ across countries, but the broader point is clear. AI is no longer an early-adopter tool.

In organizations, adoption reached 88%. Among university students, 4 in 5 now use generative AI. In U.S. high schools and colleges, more than 80% of students use AI for school-related tasks.

What is lagging is the institutional response. Only half of middle and high schools have AI policies in place, and just 6% of teachers say those policies are clear. That gap between usage and guidance is one of the report’s most important signals. People are integrating AI into daily work and learning faster than schools, employers, and public institutions are adapting their rules and practices.

Responsible AI is not keeping pace

This is where the report becomes more cautionary. Most frontier model developers publish results for capability benchmarks, but reporting on responsible AI benchmarks remains inconsistent. At the same time, documented AI incidents rose sharply to 362, up from 233 in 2024.
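To put the incident figures in proportion, here is a minimal sketch of the arithmetic. It uses only the two counts quoted above. The variable and function names are illustrative and do not come from the report.

```python
# Incident counts as quoted above from the report.
incidents_2024 = 233
incidents_latest = 362


def relative_increase(old: float, new: float) -> float:
    """Return the change from old to new as a fraction of old."""
    return (new - old) / old


increase = relative_increase(incidents_2024, incidents_latest)
print(f"Documented incidents rose by about {increase:.0%}")
# prints "Documented incidents rose by about 55%"
```

A rise of roughly 55% describes documented incidents only. It says nothing about incidents that were never reported.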

The tension is not just about disclosure. Recent research cited in the report suggests that improving one dimension of responsible AI, such as safety, can reduce another, such as accuracy. That does not mean safety work is futile. It means trade-offs are real, and measurement remains incomplete.

This is also where policy becomes central. The EU AI Act, for example, reflects a risk-based approach with different obligations for prohibited, high-risk, transparency-related, and minimal-risk systems. Its rollout timeline through 2026 and 2027 shows how regulation is trying to catch up with deployment realities, especially for general-purpose AI and high-risk use cases. Whether that framework becomes a model for others will depend not only on enforcement, but also on whether it remains workable in a market defined by fast-moving general-purpose models.

Investment is strong, but talent patterns are shifting

The United States remains the investment leader. According to the report, private AI investment reached $285.9 billion in the U.S. in 2025, compared with $12.4 billion in China. The report also notes that official Chinese figures may not capture the full picture because of government-backed funding structures. The U.S. also led in newly funded AI companies, with 1,953 in 2025.
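The size of that investment gap is easier to see as a ratio. The sketch below uses only the two private investment figures quoted above, and the variable names are illustrative. Because the report cautions that Chinese figures may be undercounted, treat the result as a comparison of reported private investment, not of total spending.

```python
# Private AI investment in 2025, in billions of U.S. dollars, as quoted above.
us_investment_bn = 285.9
china_investment_bn = 12.4

# How many times larger the reported U.S. figure is than the reported Chinese figure.
ratio = us_investment_bn / china_investment_bn
print(f"Reported U.S. private AI investment was about {ratio:.1f}x China's")
# prints "Reported U.S. private AI investment was about 23.1x China's"
```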

But there is a catch. America’s ability to attract global AI talent appears to be weakening. The number of AI researchers and developers moving to the U.S. has dropped sharply since 2017. That should not be treated as a footnote. Talent mobility is a long-term determinant of innovation capacity, especially in a field where breakthroughs often cluster around a relatively small global pool of highly skilled researchers and engineers.

AI is reshaping work, skills, and value creation

Stanford’s findings on AI adoption and economic value line up with broader signals from the labor market. The report estimates the value of generative AI tools to U.S. consumers at $172 billion annually by early 2026. That is a remarkable figure, especially since many users access these tools for little or no direct cost.

Other industry research points in a similar direction. PwC’s 2025 AI Jobs Barometer found that industries more exposed to AI saw faster revenue growth per employee, quicker wage growth, and significantly faster skill change. Workers with AI skills also earned a sizable wage premium.

Taken together, the message is nuanced. AI is not simply replacing work in a uniform way. It is changing the value of certain skills, increasing the importance of AI literacy, and pushing more roles toward augmentation rather than pure automation. That does not remove disruption. It does mean the labor story is more complex than straightforward job-loss headlines suggest.

Public trust remains fragmented

One of the most revealing sections of the Stanford report is public opinion. Experts and the public do not see AI’s future in the same way. For workplace impact, 73% of experts expect AI to be positive, compared with only 23% of the public. Similar gaps appear in views on the economy and healthcare.

Trust in institutions to regulate AI is also uneven. Among surveyed countries, the United States showed the lowest trust in its own government’s ability to regulate AI. Globally, the EU is trusted more than the U.S. or China to regulate AI effectively.

That matters because AI adoption at scale is not just a question of capability or investment. It also depends on whether institutions can establish legitimacy, enforce standards, and explain trade-offs in ways people find credible.

Why the 2026 AI Index Report matters

The strength of the 2026 AI Index Report is that it refuses to reduce AI to a single storyline. AI is becoming more capable and more widely used. But it is also exposing weak points in governance, education, infrastructure, and public trust.

If there is one practical lesson in the report, it is this: the next phase of AI will be shaped less by whether models improve and more by whether societies can build the surrounding systems that make those improvements useful, reliable, and broadly beneficial. That includes better benchmarks for safety, clearer education policies, stronger compute resilience, and governance that is specific enough to matter without becoming obsolete on arrival.

Model performance still grabs the headlines. In 2026, the harder question is whether the institutions around AI can keep up.