Google just did something Nvidia hasn’t: it split its AI accelerator into two purpose-built chips. The eighth generation of Google’s Tensor Processing Unit arrives as a pair, TPU 8t for training and TPU 8i for inference, each tuned for a fundamentally different half of the modern AI workload. The company previewed both at a private gathering in Las Vegas and then formalised the announcement at Google Cloud Next ’26. Shipping is planned for later this year.

The split signals that Google believes the one-chip-a-year cadence the rest of the industry follows is no longer enough. With reasoning models, agentic workflows and reinforcement learning now dominating frontier workloads, Google is betting that specialisation beats general-purpose silicon, and that vertical integration of the stack beats renting capacity from Nvidia.

Why split training and inference into two chips

For years, a single TPU generation handled both pre-training and serving. That worked while workloads were relatively uniform. It stopped working once frontier labs started deploying trillion-parameter Mixture-of-Experts models and production agents that reason over long context windows in continuous feedback loops.

Amin Vahdat, Google’s SVP and chief technologist for AI and infrastructure, said the decision to split the roadmap was made back in 2024, a year before the rest of the industry pivoted hard toward reasoning and agents. The reasoning is simple: pre-training is a bandwidth and throughput problem, whereas agentic serving is a latency and memory problem. Forcing both onto the same silicon means neither runs at peak efficiency.

Google’s answer is two chips that share a software stack but diverge sharply at the hardware level.

TPU 8t, the training powerhouse

TPU 8t is designed for massive-scale pre-training and embedding-heavy workloads. It keeps the 3D torus network topology Google has refined across previous generations, but pushes it to 9,600 chips in a single superpod, up from 9,216 on the seventh-generation Ironwood.

The headline numbers, as Google presents them:

- 2.8x the FP4 ExaFlops per pod compared to Ironwood, rising from 42.5 to 121 ExaFlops.

- 2x scale-up bandwidth on the inter-chip interconnect, now 19.2 Tb/s per chip.

- Up to 4x scale-out bandwidth over the data centre network, reaching 400 Gb/s per chip.

- Two petabytes of shared high-bandwidth memory across a single superpod.
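As a rough sanity check on the list above, the per-pod figures can be turned into per-chip ones with a few lines of Python. This is a back-of-the-envelope sketch: the inputs are the numbers quoted in this article, the variable names are illustrative, and the derived per-chip values are arithmetic on rounded figures rather than published specifications.

```python
# Figures quoted in this article for a single superpod.
IRONWOOD_POD_EXAFLOPS = 42.5
TPU8T_POD_EXAFLOPS = 121.0
IRONWOOD_POD_CHIPS = 9_216
TPU8T_POD_CHIPS = 9_600
TPU8T_POD_HBM_PB = 2.0

# Pod-level speedup and how much of it comes from simply adding chips.
pod_speedup = TPU8T_POD_EXAFLOPS / IRONWOOD_POD_EXAFLOPS
chip_growth = TPU8T_POD_CHIPS / IRONWOOD_POD_CHIPS
per_chip_speedup = pod_speedup / chip_growth

# Derived per-chip values (1 ExaFlop = 1,000 PetaFlops; 1 PB = 1,000,000 GB).
per_chip_petaflops = TPU8T_POD_EXAFLOPS * 1_000 / TPU8T_POD_CHIPS
hbm_per_chip_gb = TPU8T_POD_HBM_PB * 1_000_000 / TPU8T_POD_CHIPS

print(f"pod speedup:        {pod_speedup:.2f}x")
print(f"chip count growth:  {chip_growth:.3f}x")
print(f"per-chip speedup:   {per_chip_speedup:.2f}x")
print(f"per-chip compute:   {per_chip_petaflops:.1f} PetaFlops")
print(f"per-chip HBM:       {hbm_per_chip_gb:.0f} GB (from a rounded 2 PB)")
```

Almost all of the pod-level gain comes from faster chips rather than a bigger pod: the chip count grows by only about 4%.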

The architectural advances behind those numbers are where the engineering story gets interesting.

SparseCore, native FP4 and balanced vector scaling

TPU 8t keeps the SparseCore accelerator that offloads irregular memory access patterns, which are typical of embedding lookups. The Matrix Multiply Unit handles the dense matrix math, while SparseCore takes care of data-dependent all-gather operations and other collectives. This stops the “zero-op” bottlenecks that slow down general-purpose GPUs on embedding-heavy workloads.

Google also introduced native 4-bit floating point. Dropping precision from FP8 to FP4 doubles throughput on the Matrix Multiply Unit while keeping accuracy acceptable for large models, and it halves the bits moved per parameter. Less data movement means less energy burned shuffling bytes across the chip. Combined with better Vector Processing Unit scaling, which overlaps quantisation, softmax and layernorm operations with matrix multiplies, the chip spends less time waiting and more time computing.
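To make the precision trade-off concrete, here is a minimal sketch of block-scaled 4-bit floating point in plain Python. It assumes the E2M1 value grid (one sign bit, two exponent bits, one mantissa bit) with one shared scale per block; the article does not say which FP4 encoding or scaling scheme TPU 8t actually implements, and the function names are hypothetical.

```python
# Assumption: E2M1 magnitudes. The real TPU 8t FP4 format is not specified here.
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block_fp4(values):
    """Scale a block so its largest magnitude maps to 6.0, then round each
    element to the nearest representable magnitude. Returns (scale, values)."""
    peak = max(abs(v) for v in values)
    scale = peak / E2M1_MAGNITUDES[-1] if peak > 0 else 1.0
    quantized = []
    for v in values:
        target = abs(v) / scale
        nearest = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        quantized.append(nearest if v >= 0 else -nearest)
    return scale, quantized


def dequantize_block(scale, quantized):
    """Map quantized values back to the original range."""
    return [q * scale for q in quantized]


def weight_bytes(num_params, bits_per_param):
    """Bytes needed to store or move num_params values at a given width."""
    return num_params * bits_per_param / 8


weights = [0.12, -0.48, 0.03, 0.30]          # illustrative values
scale, q = quantize_block_fp4(weights)
restored = dequantize_block(scale, q)
print(q)                                     # values on the E2M1 grid
print([round(r, 3) for r in restored])       # approximation of the inputs

# Halving the width halves the bytes moved for the same parameter count.
print(weight_bytes(10**12, 8) / weight_bytes(10**12, 4))
```

The small weight (0.03) is the one that suffers most from rounding, which is why real FP4 schemes lean on per-block scaling to keep accuracy acceptable.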

Virgo Network and the million-chip cluster

To feed a pod that large, Google built a new scale-out fabric called Virgo Network. It uses high-radix switches and a flat, two-layer non-blocking topology, which reduces latency by cutting network tiers. A single Virgo fabric can link more than 134,000 TPU 8t chips with up to 47 petabits per second of non-blocking bisection bandwidth, delivering over 1.6 million petaflops (roughly 1,700 ExaFlops at the per-superpod rate above) with near-linear scaling.

Combined with JAX and Pathways, Google says TPU 8t clusters can scale beyond one million chips in a single logical training job. That is the number that matters most for labs training frontier models. No other commercial provider is publicly claiming anything close.

TPUDirect RDMA and TPUDirect Storage

A common bottleneck on large training clusters is not the silicon but getting data to it. TPU 8t introduces TPUDirect RDMA, direct memory access between the TPU’s high-bandwidth memory and the Network Interface Card that bypasses the host CPU and DRAM entirely. A parallel feature, TPUDirect Storage, routes data straight from managed storage like 10T Lustre into the TPU, effectively doubling bandwidth for massive data transfers.

Google claims this delivers 10x faster storage access compared to Ironwood. For long training runs where wall-clock hours translate directly into dollars, collapsing the data path is one of the biggest efficiency wins in the whole system.

Goodput and the reliability story

At million-chip scale, hardware failures are not edge cases; they are constant. TPU 8t targets over 97% goodput, meaning the share of cluster time spent on useful computation, through real-time telemetry, automatic detection and rerouting around faulty inter-chip interconnect links, and Optical Circuit Switching that reconfigures hardware around failures without human intervention. Every percentage point of goodput at frontier scale saves days of training time.
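The relationship between goodput and wall-clock time is simple enough to sketch. The example below assumes goodput is the fraction of elapsed time spent on useful computation, so elapsed time is ideal time divided by goodput. The 90-day run length is a hypothetical figure chosen for illustration, not one from Google.

```python
def wall_clock_days(ideal_days, goodput):
    """Elapsed days needed to finish `ideal_days` of useful compute
    when only a `goodput` fraction of elapsed time is productive."""
    if not 0 < goodput <= 1:
        raise ValueError("goodput must be in (0, 1]")
    return ideal_days / goodput


IDEAL_DAYS = 90.0  # hypothetical training run, measured in failure-free days

for goodput in (0.90, 0.95, 0.97):
    elapsed = wall_clock_days(IDEAL_DAYS, goodput)
    lost = elapsed - IDEAL_DAYS
    print(f"goodput {goodput:.0%}: {elapsed:6.2f} days elapsed, {lost:5.2f} lost")

saved = wall_clock_days(IDEAL_DAYS, 0.90) - wall_clock_days(IDEAL_DAYS, 0.97)
print(f"moving from 90% to 97% goodput saves about {saved:.1f} days")
```

For a run of this length each point of goodput is worth roughly a day, and the saving grows in proportion to the length of the run.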

TPU 8i, the reasoning engine

If TPU 8t is an evolutionary step, TPU 8i is the more architecturally adventurous chip. It targets the world of real-time agent serving, where millions of agents may be running concurrently, each producing tokens in a latency-sensitive chain of thought.

The generational jumps are dramatic:

- 9.8x the FP8 ExaFlops per pod, rising from 1.2 to 11.6.

- 6.8x the HBM capacity per pod, from 49.2 TB to 331.8 TB.

- Pod size grows 4.5x, from 256 chips to 1,152 chips.

- 384 MB of on-chip SRAM per chip, triple the Ironwood figure.

- 288 GB of HBM per chip at 8,601 GB/s.
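The pod-level and per-chip figures in this list can be cross-checked against each other. The sketch below uses only the numbers quoted above, with decimal units assumed (1 TB = 1,000 GB); the dictionary layout and names are illustrative.

```python
# Figures quoted in the list above.
IRONWOOD_POD = {"chips": 256, "hbm_tb": 49.2, "fp8_exaflops": 1.2}
TPU8I_POD = {"chips": 1_152, "hbm_tb": 331.8, "fp8_exaflops": 11.6}
TPU8I_HBM_PER_CHIP_GB = 288
TPU8I_SRAM_PER_CHIP_MB = 384

# Pod HBM rebuilt from the per-chip figure.
rebuilt_pod_hbm_tb = TPU8I_POD["chips"] * TPU8I_HBM_PER_CHIP_GB / 1_000

# Implied Ironwood HBM per chip, and total on-chip SRAM across a TPU 8i pod.
ironwood_hbm_per_chip_gb = IRONWOOD_POD["hbm_tb"] * 1_000 / IRONWOOD_POD["chips"]
pod_sram_gb = TPU8I_POD["chips"] * TPU8I_SRAM_PER_CHIP_MB / 1_000

# Generational ratios recomputed from the quoted endpoints.
ratios = {key: TPU8I_POD[key] / IRONWOOD_POD[key] for key in IRONWOOD_POD}

print(f"rebuilt pod HBM:          {rebuilt_pod_hbm_tb:.1f} TB")
print(f"implied Ironwood HBM:     {ironwood_hbm_per_chip_gb:.0f} GB per chip")
print(f"aggregate pod SRAM:       {pod_sram_gb:.0f} GB")
for key, value in ratios.items():
    print(f"ratio for {key}: {value:.2f}x")
```

Recomputed from the rounded endpoints, the compute and HBM ratios land slightly under the headline 9.8x and 6.8x, which is what one would expect if the multipliers were derived from unrounded figures.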

Breaking the memory wall

During autoregressive decoding, processors spend a lot of their time waiting on memory rather than computing. Large on-chip SRAM lets TPU 8i host a bigger KV cache entirely on silicon, which matters enormously for long-context reasoning. Nvidia is heading in the same direction with its upcoming Groq 3 LPU silicon, which also leans heavily on SRAM, and Cerebras has built its whole pitch around this approach. Google sized the 8i’s SRAM specifically for the KV cache footprint of reasoning models at production scale.
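A quick way to see why SRAM sizing matters is to estimate a KV cache footprint. The sketch below uses the standard accounting of one key and one value tensor per layer. The model shape (64 layers, 8 KV heads, head dimension 128, 1-byte values) is entirely hypothetical, and the 384 MB figure is treated as mebibytes for simplicity. Real deployments shard the cache across many chips, so this is a per-chip illustration only.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value):
    """Bytes of KV cache for one sequence: a key and a value vector
    per token, per KV head, per layer."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


def hbm_read_seconds(num_bytes, bandwidth_bytes_per_s):
    """Lower bound on the time to stream num_bytes once from HBM."""
    return num_bytes / bandwidth_bytes_per_s


SRAM_BYTES = 384 * 1024**2        # article's 384 MB per chip (assumed MiB)
HBM_BYTES_PER_S = 8_601e9         # article's 8,601 GB/s per chip

# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM, BYTES_PER_VALUE = 64, 8, 128, 1

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 1, BYTES_PER_VALUE)
tokens_in_sram = SRAM_BYTES // per_token

context = 32_768
context_bytes = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context, BYTES_PER_VALUE)
step_seconds = hbm_read_seconds(context_bytes, HBM_BYTES_PER_S)

print(f"KV cache per token:       {per_token / 1024:.0f} KiB")
print(f"tokens resident in SRAM:  {tokens_in_sram}")
print(f"32k-token cache:          {context_bytes / 1024**3:.1f} GiB")
print(f"HBM read per decode step: {step_seconds * 1e3:.2f} ms (lower bound)")
```

Every decode step has to touch the whole cache, so whatever portion stays in SRAM avoids that HBM round trip on every generated token.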

The Collectives Acceleration Engine

Each TPU 8i chip includes two Tensor Cores and one Collectives Acceleration Engine on a chiplet die, replacing the four SparseCores found on Ironwood. The CAE aggregates results across cores with near-zero latency, specifically accelerating the reduction and synchronisation steps that dominate autoregressive decoding and chain-of-thought processing. Google claims it reduces on-chip collective latency fivefold. For agent workloads that spend most of their time waiting on small collective operations, that translates directly into higher throughput per watt.
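For readers unfamiliar with collectives, the sketch below shows what an all-reduce does: every participant starts with a partial result and ends with the combined total. It uses recursive doubling, a generic textbook algorithm, purely to illustrate the operation. It is not a description of how the CAE is implemented, which the article does not detail.

```python
def allreduce_recursive_doubling(partials):
    """Sum-all-reduce over a power-of-two number of participants.

    In each round, participant i exchanges its running sum with
    participant i XOR step, so everyone holds the full total after
    log2(n) rounds. Returns (final_values, rounds)."""
    n = len(partials)
    if n == 0 or n & (n - 1):
        raise ValueError("participant count must be a power of two")
    values = list(partials)
    rounds = 0
    step = 1
    while step < n:
        values = [values[i] + values[i ^ step] for i in range(n)]
        step *= 2
        rounds += 1
    return values, rounds


# Four hypothetical cores, each holding a partial sum.
final, rounds = allreduce_recursive_doubling([1, 2, 3, 4])
print(final, rounds)  # every core ends with the same total
```

Each round is a synchronisation point, so in a decode loop that runs many small reductions per token, the per-round latency is what a dedicated engine would be attacking.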

Boardfly topology

The 3D torus is excellent for neighbour-to-neighbour communication in dense training, but it imposes a latency tax on the all-to-all patterns that dominate Mixture-of-Experts routing and reasoning. In a 1,024-chip torus, the worst-case network diameter is 16 hops. Google’s new Boardfly topology, inspired by Dragonfly principles, flattens this dramatically.

Boardfly builds up hierarchically. Four chips form a board, the basic building block, with internal inter-chip interconnect links. Eight boards are fully connected via copper cabling into a group. Thirty-six groups are linked through Optical Circuit Switches into a full pod. The result: maximum network diameter drops from 16 hops to just seven, a 56% reduction. That matters because tail latency in a distributed sampling job is set by the longest path, not the average.
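The hop counts above can be reproduced with a little arithmetic. The sketch assumes the 1,024-chip torus is arranged as 8 x 8 x 16, a layout that matches the quoted 16-hop diameter but is not stated in the article. The seven-hop Boardfly figure is taken as given, since the exact wiring is not described in enough detail to derive it.

```python
from math import prod


def torus_diameter(dims):
    """Worst-case hop count in a torus with wraparound links.

    On a ring of n nodes the farthest node is n // 2 hops away,
    and the distances along each dimension add up."""
    return sum(n // 2 for n in dims)


# Assumption: 8 x 8 x 16 arrangement for the 1,024-chip torus.
TORUS_DIMS = (8, 8, 16)
torus_chips = prod(TORUS_DIMS)
torus_hops = torus_diameter(TORUS_DIMS)

# Boardfly hierarchy as described: chips per board, boards per group, groups per pod.
CHIPS_PER_BOARD, BOARDS_PER_GROUP, GROUPS_PER_POD = 4, 8, 36
boardfly_chips = CHIPS_PER_BOARD * BOARDS_PER_GROUP * GROUPS_PER_POD

BOARDFLY_HOPS = 7  # quoted figure, not derived here
reduction = (torus_hops - BOARDFLY_HOPS) / torus_hops

print(f"torus:    {torus_chips} chips, diameter {torus_hops} hops")
print(f"Boardfly: {boardfly_chips} chips, diameter {BOARDFLY_HOPS} hops")
print(f"diameter reduction: {reduction:.0%}")
```

The hierarchy multiplies out to the 1,152-chip pod size quoted earlier, so the Boardfly pod is slightly larger than the torus it is being compared with while still having less than half the diameter.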

Axion, the host that finally keeps up

Both TPU 8t and TPU 8i are paired with Google’s custom Arm-based Axion CPU hosts. This solves a chronic bottleneck in previous generations: data preparation latency on the host side would stall the TPUs even when the silicon itself was ready for work. TPU 8i doubles the physical CPU hosts per server compared to Ironwood and uses a non-uniform memory access (NUMA) architecture for isolation. For the first time, the host and the accelerator are designed together, which lets Google optimise system-level energy efficiency in ways that are genuinely out of reach for anyone using off-the-shelf x86 hosts alongside third-party accelerators.

Why Google doesn’t pay the Nvidia tax

The commercial subtext of this launch is impossible to miss. OpenAI, Anthropic, xAI and Meta all train their frontier models predominantly on Nvidia silicon, which means they pay Nvidia’s data-centre gross margin on every H200 and Blackwell they buy or rent. That margin, informally called the “Nvidia tax,” has been flagged by analysts as a structural cost disadvantage for anyone renting rather than designing their own accelerators.

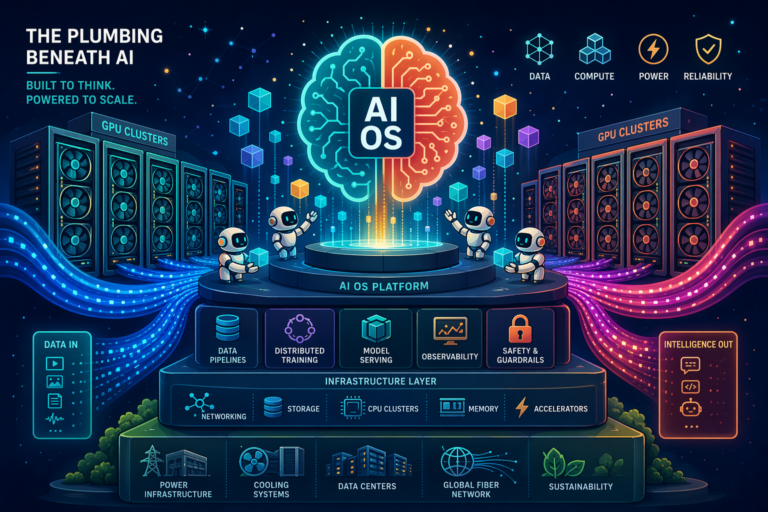

Google pays fabrication, packaging and engineering costs on its TPUs. It does not pay that margin. When Vahdat pointed at Google’s six-layer AI stack diagram during the fireside, the argument was clear: designing each layer in isolation forces every layer down to the lowest common denominator. Designing them together, from energy at the foundation through data centre, hardware, software, models and services, lets the whole stack converge on better cost-per-token economics.

Google claims concrete gains against Ironwood:

- TPU 8t delivers up to 2.7x better performance-per-dollar for large-scale training.

- TPU 8i delivers up to 80% better performance-per-dollar, particularly at low-latency targets for large MoE models.

- Both chips deliver up to 2x better performance-per-watt.
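Performance-per-dollar multipliers are easier to reason about once inverted into cost for a fixed amount of work. The sketch below does that inversion for the figures in the list above. Because Google states them as "up to" values, the results are best-case floors relative to Ironwood, not expected averages.

```python
def relative_cost(gain):
    """Cost (or energy) for the same work, relative to the baseline,
    given a performance-per-dollar (or per-watt) gain multiplier."""
    if gain <= 0:
        raise ValueError("gain must be positive")
    return 1 / gain


# Multipliers quoted above; "80% better" is a 1.8x gain.
CLAIMS = {
    "TPU 8t training cost": 2.7,
    "TPU 8i serving cost": 1.8,
    "energy per unit of work": 2.0,
}

for label, gain in CLAIMS.items():
    cost = relative_cost(gain)
    print(f"{label}: {cost:.0%} of Ironwood ({1 - cost:.0%} lower)")
```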

Notably, Google did not publish direct comparisons against Nvidia’s Blackwell or the forthcoming Groq 3 LPU. That is partly strategic and partly because the workloads are genuinely different. Independent benchmarks from early cloud customers and third-party evaluators over the coming quarters will be the real test.

How this compares to Nvidia’s latest silicon

Nvidia still sets the pace for raw accelerator supply and for the breadth of its software ecosystem. CUDA and PyTorch remain the default path for most AI teams, and the H200 and Blackwell generations dominate frontier training outside Google. Nvidia’s upcoming Groq 3 LPU, built on technology from the 20-billion-dollar Groq acquisition, leans heavily on SRAM to serve low-latency inference, which mirrors the direction TPU 8i is taking.

The comparison tilts in two places. The first is vertical integration: Nvidia designs world-class silicon but does not own the surrounding stack end-to-end the way Google does. The second is the scale of a single training cluster. A million-chip logical cluster is not something anyone else is publicly claiming to offer, and for labs training models above the trillion-parameter mark, that ceiling matters more than any individual chip spec.

On software, Google has closed part of the gap. Native PyTorch support for TPUs is now in preview, complete with Eager Mode. JAX, Keras, MaxText, vLLM and SGLang all run natively. Pallas gives teams a Python-based custom kernel language for squeezing performance out of the CAE and SparseCore. The portability tax of moving from CUDA to XLA is lower than it used to be, but it is not zero, and it is still worth factoring into any multi-year commitment.

Who is actually buying

Adoption is ramping. Citadel Securities runs quantitative research software on TPUs. All 17 US Department of Energy national laboratories use AI co-scientist software built on them. Anthropic has committed to multiple gigawatts of Google TPU capacity, a striking move given that Anthropic also trains on Nvidia. DA Davidson analysts estimated in September that the TPU business, combined with Google DeepMind, would be worth roughly 900 billion dollars as a standalone entity.

The bigger signal

The frontier compute race used to be a question of who could buy the most H100s. It is now a question of who controls the stack. TPU 8t and TPU 8i are Google’s clearest statement yet that the answer, for the moment, is a shortlist of two: Google and Nvidia. Everyone else is either a customer or a distant third.