When enterprises talk about their AI ambitions, the conversation usually drifts toward models, GPUs or applications. What rarely gets the spotlight is the messy plumbing underneath: the data itself. Petabytes of it, scattered across storage silos, databases and pipelines that were never designed for the kind of real-time reasoning that modern AI agents demand. That gap is exactly where Vast Data has planted its flag, and it’s the reason the company just closed a Series F round that values it at $30 billion.

What is Vast Data?

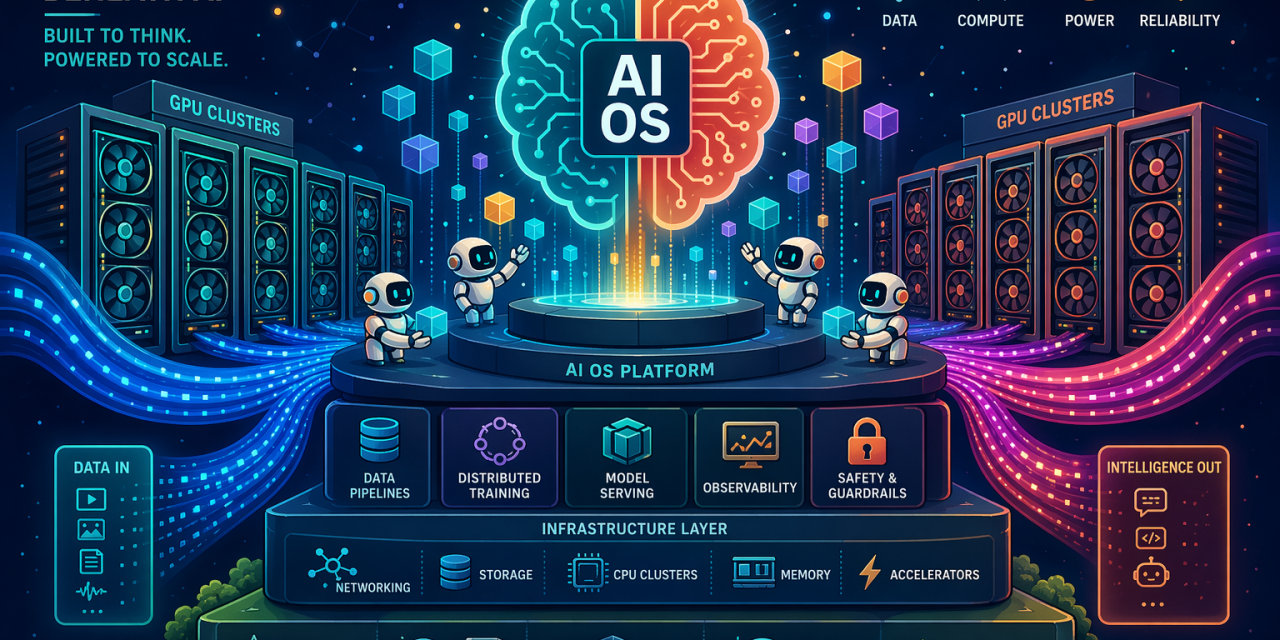

Vast Data positions itself as an AI operating system company. That framing matters, because Vast started life looking like a storage vendor and spent years being compared to the likes of Pure Storage, Dell or HPE. Today the company describes its product as a unified software stack that brings together data, compute and agentic execution on one platform, so organisations can build, train and run AI models while powering the applications and agents that depend on them.

The technical foundation is an architecture Vast calls DASE, short for Disaggregated Shared Everything. In simple terms, DASE separates layers that are tightly coupled in traditional systems, notably compute and storage, and reconnects them over a fast, low-latency network. Every server in the cluster can reach the same central pool of data with low latency, which, Vast argues, means organisations no longer have to choose between scale and simplicity, or between performance and cost. On top of that foundation, Vast has layered a growing family of services: a database, a DataEngine, a DataSpace, an InsightEngine, an AgentEngine and, more recently, a PolicyEngine and a TuningEngine.

Put together, the platform is designed to let AI agents reason about and act on a company’s real-time context, rather than waiting for data to be copied, transformed and shipped between systems before it becomes useful.

Who sits behind the company

Vast Data was founded in 2016 by Renen Hallak, Jeff Denworth, Shachar Fienblit and Alon Horev. Hallak serves as chief executive officer, Fienblit as chief research and development officer and Horev as chief technology officer, while Denworth is the public face of much of the technical strategy. The leadership team has stayed largely intact since the earliest days, which Hallak credits as one of the reasons the company has been able to make consistent architectural bets over a decade.

Hallak often traces the origin of Vast’s thinking to advances in neural networks at Google DeepMind around 2014. The question the founders kept returning to was simple: what happens if you give these systems faster access to far more information? Could that bring machine reasoning closer to, or eventually beyond, the capabilities of the human brain? That question became the north star of the company’s architecture.

The broader leadership bench includes president Michael Wing, chief financial officer Amy Shapero (who joined from Shopify), chief marketing officer Marianne Budnik and chief revenue officer Rick Scurfield. Shapero’s arrival is widely read as a signal that Vast is preparing for a possible IPO, although Hallak has said the company has not yet hired bankers and is focused on being ready rather than on setting a date.

The problem Vast is trying to solve

Enterprises generate enormous amounts of data, and that data can be a goldmine for AI models that need rich context. The catch is that the same volume clogs the pipes. Legacy infrastructures were designed for predictable, siloed workloads, not for training frontier models on exabyte-scale datasets or for agents that need to read, reason and act on live information in milliseconds.

Vast’s core argument is that the layers of the modern AI stack, namely applications, models and infrastructure, no longer operate as independent domains. They behave as one system, tied together through data. If the data layer is slow, fragmented or untrusted, everything on top suffers. That’s why Vast has progressively absorbed functions that used to sit in separate products: structured and unstructured storage, database services, compute orchestration, retrieval pipelines, agent deployment and now policy enforcement and model tuning.

The company also frames trust as a first-class infrastructure problem. Models need to be trained on data that is verified. Agents need guardrails around which tools they can call and which memories they can retain. Every action needs to be auditable. With its PolicyEngine, Vast sits between agents and the tools, memory stores and retrieval systems they touch, mediating each interaction according to rules set by the organisation. The companion TuningEngine closes the loop by collecting agent behaviour, running it through an ETL pipeline and feeding it into tuners, including low-rank adaptation, supervised fine-tuning and reinforcement learning, so that improved models can be redeployed through the AgentEngine.
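The mediation idea can be illustrated with a minimal Python sketch of a policy layer that sits between an agent and its tools. Every name here (`Policy`, `PolicyMediator`, the example agent and tools) is hypothetical and is not Vast's API; the sketch only assumes the two behaviours described above, an organisation-defined allow-list and an audit record for every attempted action.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class Policy:
    """Hypothetical organisation-defined rules: which tools each agent may call."""
    allowed_tools: Dict[str, Set[str]]

    def permits(self, agent: str, tool: str) -> bool:
        return tool in self.allowed_tools.get(agent, set())


@dataclass
class PolicyMediator:
    """Illustrative mediator: checks each tool call against the policy and logs it."""
    policy: Policy
    tools: Dict[str, Callable[..., Any]]
    audit_log: List[dict] = field(default_factory=list)

    def call(self, agent: str, tool: str, *args: Any) -> Any:
        allowed = tool in self.tools and self.policy.permits(agent, tool)
        # Record the attempt whether or not it is allowed, so every action is auditable.
        self.audit_log.append({"agent": agent, "tool": tool, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"{agent} may not call {tool}")
        return self.tools[tool](*args)


# Hypothetical agent and tools, for illustration only.
mediator = PolicyMediator(
    policy=Policy(allowed_tools={"support-agent": {"search_docs"}}),
    tools={
        "search_docs": lambda query: f"results for {query}",
        "delete_record": lambda record_id: f"deleted {record_id}",
    },
)

print(mediator.call("support-agent", "search_docs", "refund policy"))
try:
    mediator.call("support-agent", "delete_record", 42)
except PermissionError as err:
    print("blocked:", err)
```

The agent never holds a direct reference to a tool; it can only go through `call`, which is what makes the allow-list enforceable and the audit log complete in this toy version.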

What Vast has achieved so far

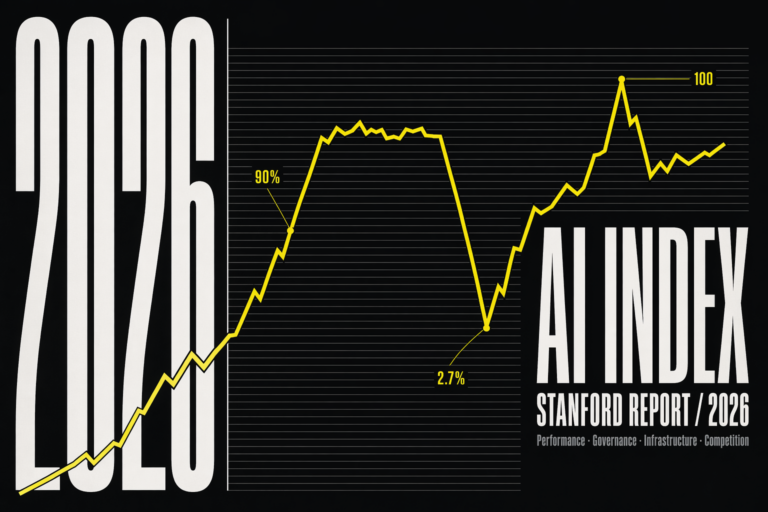

The headline number is the one the industry is talking about: a Series F round that brings in roughly $1 billion in primary and secondary capital at a $30 billion valuation, more than three times the $9.1 billion valuation set during the Series E. The round was led by Drive Capital with Access Industries as co-lead, joined by existing backers including Fidelity, NEA and Nvidia, plus several new strategic investors.

The financial milestones behind that valuation are substantial. Vast has surpassed $4 billion in cumulative software bookings since shipping its first product in 2019. It exited its last fiscal year with more than $500 million in committed annual recurring revenue, a positive operating margin and positive free cash flow. Hallak has publicly shared a Rule of X score of 228 percent. The Rule of X is a combined measure of growth and profitability, and a score that high is extremely rare in infrastructure software.
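For readers unfamiliar with the metric, the Rule of X as popularised by Bessemer Venture Partners weights growth more heavily than the older Rule of 40: the score is the growth rate times a multiplier, plus the free cash flow margin. The sketch below assumes that definition. The multiplier of 2 and the input figures are hypothetical illustrations, not numbers Vast has disclosed, and the article does not say which inputs produced the 228 percent score.

```python
def rule_of_x(growth_pct: float, fcf_margin_pct: float, multiplier: float = 2.0) -> float:
    """Growth-weighted score: (growth x multiplier) + free cash flow margin, in percentage points."""
    return growth_pct * multiplier + fcf_margin_pct


def rule_of_40(growth_pct: float, fcf_margin_pct: float) -> float:
    """Older, unweighted variant: growth and margin count equally."""
    return growth_pct + fcf_margin_pct


# Hypothetical inputs for illustration only; not Vast's disclosed figures.
growth, margin = 100.0, 10.0
print(rule_of_x(growth, margin))   # 210.0
print(rule_of_40(growth, margin))  # 110.0
```

Because growth is multiplied before the margin is added, a fast-growing company with a modest cash margin scores far higher under the Rule of X than under the Rule of 40.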

Customer momentum is arguably more telling than the dollar figures. Vast’s platform underpins AI environments spanning millions of GPUs globally. The customer roster includes frontier model labs such as Mistral, GPU cloud providers like CoreWeave and Crusoe, hyperscalers including Microsoft and Google Cloud, enterprises such as JPMorgan Chase and Lowe’s, and public sector users including the US Air Force. More than a quarter of the Fortune 100 are customers. Crusoe alone has grown its partnership with Vast more than tenfold in a single year.

On the technology side, Vast has expanded from a single storage product into a full AI operating system in roughly seven years, building every layer in-house. Hallak is explicit that the company has avoided acquiring outside intellectual property because every component is built on the DASE architecture and has to be designed from scratch to fit. The only exception so far has been a small acqui-hire in Iceland. Recent releases include the CNode-X, an Nvidia-certified system that runs Vast’s storage services on GPU clusters; integrations with Nvidia software such as cuVS for vector search, NIM microservices for retrieval-augmented generation pipelines and Sirius for accelerated SQL; and a redesigned Polaris control plane that unifies management across on-premises clusters, public clouds and sovereign neoclouds.

Where the company is heading next

Vast has reportedly tripled year over year on a consistent basis, and Hallak has been clear that the ambition for the year ahead is to triple again. The company expects the demand curve to hold as enterprises move AI from experiments into production.

Several strategic directions stand out:

- Deeper enterprise penetration. Vast began its commercial life serving a narrow niche of research-heavy organisations, including groups working on autonomous systems, medical imaging and quantitative trading. The company then rode the generative AI wave with model builders and GPU clouds. The next leg is mainstream enterprise adoption, often through partnerships with hyperscalers that resell Vast’s services.

- Geographic expansion. New countries are being added to the footprint, which aligns with the rise of sovereign neoclouds and regional AI factories that prefer to keep data under local jurisdiction.

- Moving up the stack. The addition of PolicyEngine, TuningEngine and AgentEngine shows Vast climbing from pure infrastructure toward the software layers that govern how AI systems behave. Security for AI is an area Hallak has openly flagged as a possible future acquisition target, if the right cultural, technical and financial fit appears.

- Accelerated data computing with Nvidia. More than two dozen joint engineering projects are underway, with the shared thesis that accelerated computing has not yet reached the data layer and that the next generation of AI workloads will need it there.

- IPO readiness. The company says it will be ready to go public later this year, though whether it pulls the trigger is a separate decision.

Why this matters beyond Vast

The story of Vast Data is useful as a lens on a broader shift. The AI stack is being redrawn around data rather than around compute alone. GPUs get the headlines and the capital expenditure, but the bottleneck for most organisations is no longer raw processing power. It is whether the right data can reach the right model or agent at the right moment, with the right permissions, and whether every step of that journey can be trusted and audited.

If Vast is correct that applications, models and infrastructure are collapsing into a single system mediated by data, then the companies that own the data layer will influence how AI is actually built and deployed inside enterprises. That is a more consequential position than being a storage vendor, and it explains both the valuation and the speed at which the rest of the industry is trying to respond. The interesting question for the next few years is not whether Vast can keep growing, but whether the category it is defining, the AI operating system, becomes the standard shape of enterprise infrastructure, or whether hyperscalers and model providers build competing versions of their own.