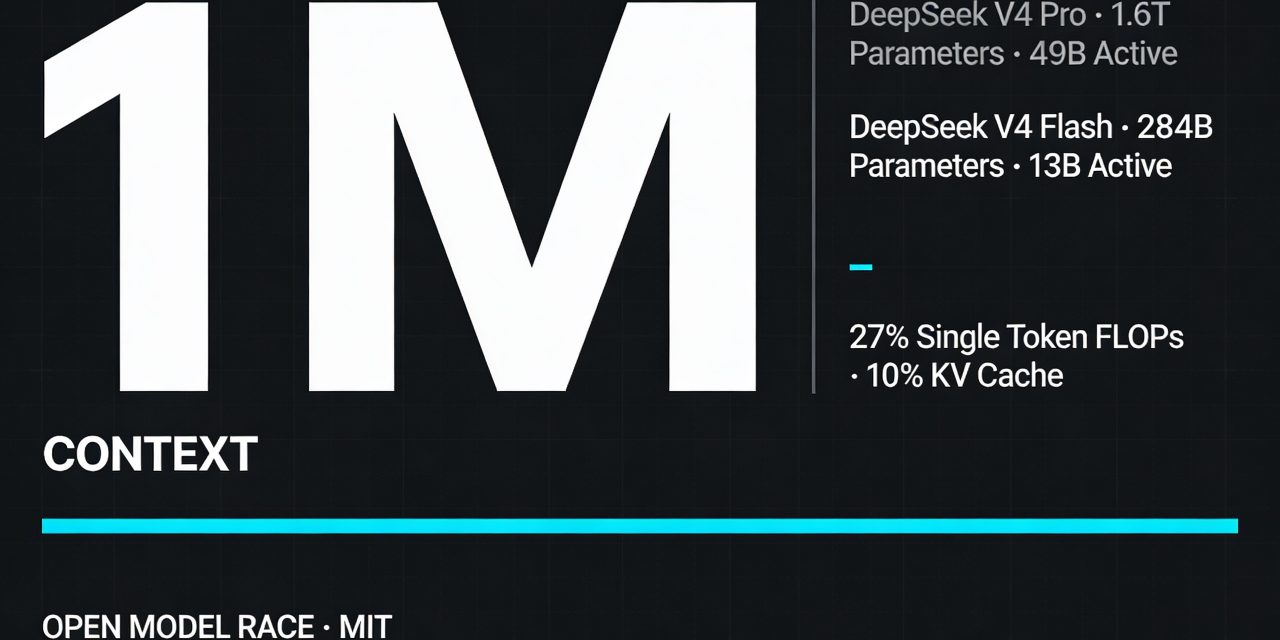

DeepSeek V4 enters the market with a clear proposition: push open models closer to frontier performance while cutting the cost of long context inference. The preview release includes two Mixture of Experts models, DeepSeek V4 Pro and DeepSeek V4 Flash. Both support a 1 million token context window. That single design choice shapes the whole release, from architecture to pricing to deployment strategy.

The numbers define the split. DeepSeek V4 Pro has 1.6 trillion total parameters with 49 billion active parameters. DeepSeek V4 Flash has 284 billion total parameters with 13 billion active parameters. DeepSeek positions Pro as the top tier option for reasoning, coding and harder agentic tasks. Flash targets speed, lower cost and broader deployment. Both are open weights under the MIT License.
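To make the split concrete, the short sketch below computes what share of each model's parameters is active per token, using only the counts quoted above. The function and dictionary names are illustrative, not part of any DeepSeek tooling.

```python
# Parameter counts as stated in the article: (total, active), in billions.
MODELS = {
    "DeepSeek V4 Pro": (1600, 49),
    "DeepSeek V4 Flash": (284, 13),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of a Mixture of Experts model's parameters used per token."""
    return active_b / total_b


for name, (total_b, active_b) in MODELS.items():
    share = active_fraction(total_b, active_b)
    print(f"{name}: {share:.1%} of parameters active per token")
```

Both models activate only a few percent of their weights for any given token, which is why total parameter count alone says little about per token compute.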

What DeepSeek V4 changes

Most model launches talk about benchmark gains. DeepSeek V4 pushes a different axis into the foreground. It treats long context efficiency as the key systems problem. That matters because agentic workloads carry far more than a simple prompt and response. They accumulate tool outputs, retrieved files, code, logs, memory and reasoning traces. As sequence lengths expand, attention cost and KV cache size turn into hard bottlenecks.

DeepSeek claims the new architecture cuts those bottlenecks sharply. In a 1M token context setting, DeepSeek V4 Pro uses only 27% of the single token inference FLOPs and 10% of the KV cache relative to DeepSeek V3.2. DeepSeek V4 Flash goes further, reaching 10% of the single token FLOPs and 7% of the KV cache compared with V3.2. NVIDIA frames the same shift as a 73% reduction in per token inference FLOPs and a 90% reduction in KV cache memory burden versus V3.2. Those are the Pro figures restated as reductions rather than as remaining cost: 27% of the FLOPs is a 73% cut, and 10% of the KV cache is a 90% cut. DeepSeek designed V4 to make million token inference economically workable.
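The two framings are simple arithmetic on the same ratios. As a minimal sketch, assuming only the relative costs quoted above, the following converts "uses X% of" into a percentage reduction and an improvement factor. The helper names are illustrative.

```python
# Cost relative to DeepSeek V3.2 at 1M token context, as quoted in the article.
RELATIVE_TO_V32 = {
    "DeepSeek V4 Pro": {"flops": 0.27, "kv_cache": 0.10},
    "DeepSeek V4 Flash": {"flops": 0.10, "kv_cache": 0.07},
}


def reduction(relative_cost: float) -> float:
    """Fraction saved when the new cost is `relative_cost` of the old one."""
    return 1.0 - relative_cost


def improvement_factor(relative_cost: float) -> float:
    """How many times cheaper the new cost is than the old one."""
    return 1.0 / relative_cost


for model, costs in RELATIVE_TO_V32.items():
    for metric, rel in costs.items():
        print(
            f"{model} {metric}: {reduction(rel):.0%} reduction, "
            f"{improvement_factor(rel):.1f}x less than V3.2"
        )
```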

The architecture behind the efficiency claims

DeepSeek ties those gains to a set of architectural changes. The most important is a hybrid attention architecture that combines Compressed Sparse Attention and Heavily Compressed Attention.

Compressed Sparse Attention reduces memory pressure in two steps. It compresses KV entries and then sparsifies the attention matrices. Heavily Compressed Attention pushes compression further by consolidating KV entries across token groups into a single compressed representation. The goal is simple: preserve useful long range signal while reducing the amount of memory and compute the model burns on context handling.
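The general idea of compressing first and sparsifying second can be shown with a toy example. This is not DeepSeek's implementation: mean pooling over fixed groups and dot product top-k selection are stand-ins chosen for illustration, and every name below is hypothetical.

```python
from typing import List

Vector = List[float]


def compress_groups(entries: List[Vector], group_size: int) -> List[Vector]:
    """Replace each run of `group_size` entries with their element-wise mean.

    Assumption: mean pooling stands in for whatever learned compression
    the real model uses.
    """
    compressed = []
    for start in range(0, len(entries), group_size):
        group = entries[start:start + group_size]
        dim = len(group[0])
        compressed.append(
            [sum(vec[i] for vec in group) / len(group) for i in range(dim)]
        )
    return compressed


def top_k_indices(query: Vector, keys: List[Vector], k: int) -> List[int]:
    """Keep only the k keys with the highest dot product against the query."""
    scores = [sum(q * x for q, x in zip(query, key)) for key in keys]
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


keys = [[float(t), 1.0] for t in range(8)]        # 8 toy key vectors
compressed = compress_groups(keys, group_size=4)  # 8 cache entries become 2
selected = top_k_indices([1.0, 0.0], compressed, k=1)
print(len(keys), "->", len(compressed), "entries; attending to", selected)
```

Compression shrinks the cache by the group size, and selection then limits how many of the remaining entries each query touches. The two savings multiply, which is the intuition behind stacking them.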

DeepSeek also adds Manifold Constrained Hyper Connections to strengthen signal propagation across layers, while keeping model expressivity intact. Training uses the Muon optimizer, which DeepSeek says improves convergence speed and stability. On the data side, the company reports pretraining on more than 32 trillion tokens, followed by a two stage post training pipeline. First, domain specific experts are cultivated through supervised fine tuning and reinforcement learning with GRPO. Then DeepSeek consolidates those skills into a unified model through on policy distillation.

That stack matters because DeepSeek is not just shipping larger parameter counts. It is trying to align three layers at once: model architecture, post training and inference economics.

Pro and Flash serve different operating points

DeepSeek V4 Pro is the flagship. DeepSeek says it rivals top closed models on reasoning and leads current open models across math, STEM and coding. It also describes Pro Max, the highest reasoning effort mode, as the strongest open source model in its lineup and positions it near the top closed systems on coding, reasoning and agentic work.

DeepSeek V4 Flash plays a different role. DeepSeek says Flash approaches Pro on reasoning when given a larger thinking budget and performs on par with Pro for simpler agent tasks. The tradeoff is visible. Flash carries fewer active parameters, lower cost and faster responses. It sits slightly behind Pro on pure knowledge tasks and the hardest agent workflows, but its efficiency profile makes it the more practical default for many production setups.

This split reflects a broader market pattern. Large model vendors increasingly expose multiple reasoning budgets and latency tiers rather than a single monolithic model experience. DeepSeek now does the same along two axes: model tier (Pro versus Flash) and reasoning mode.

Reasoning modes are part of the product

DeepSeek V4 supports multiple reasoning effort modes. One mode targets fast, intuitive answers for routine tasks. Another slows down for more deliberate analysis on complex problem solving and planning. A third pushes reasoning further through a dedicated system prompt and expanded thinking summary. The practical point is not branding. It is cost control. Reasoning tokens improve difficult task performance, but they also change latency and token consumption. DeepSeek treats that tradeoff as a first class product setting.

That also explains one deployment recommendation from the Hugging Face release. For Think Max, DeepSeek recommends a context window of at least 384K tokens. The model can stretch to 1M tokens, but not every reasoning mode or inference setup will exploit that full range in the same way.

Benchmarks point to a narrow but real gap with frontier closed models

DeepSeek presents V4 as highly competitive with top closed systems, but the company also acknowledges a measurable gap. In its own summary, DeepSeek V4 Pro Max surpasses GPT 5.2 and Gemini 3.0 Pro on standard reasoning benchmarks when given a larger reasoning token budget. At the same time, it still trails GPT 5.4 and Gemini 3.1 Pro. Simon Willison reads that as a model family that sits roughly 3 to 6 months behind the frontier.

That gap matters, but so does the price attached to it. Open models rarely win by matching the absolute best proprietary system on every benchmark. They win when the performance discount is smaller than the cost discount. DeepSeek is making exactly that bet.

The benchmark snippets disclosed on Hugging Face reinforce the point. DeepSeek V4 Pro posts 90.1 on GPQA Diamond, 92.6 on GSM8K, 37.7 on HLE, 87.5 on MMLU Pro, 55.4 on SWE Bench Pro, 80.6 on SWE Bench Verified and 67.9 on TerminalBench 2. Those numbers do not settle the full comparison on their own, but they place the model in serious territory for technical and coding heavy workloads.

Pricing is the real competitive signal

The strongest immediate signal in the DeepSeek V4 release is not parameter count. It is pricing. According to Simon Willison’s summary of DeepSeek’s pricing page, DeepSeek V4 Flash costs $0.14 per million input tokens and $0.28 per million output tokens. DeepSeek V4 Pro costs $1.74 per million input tokens and $3.48 per million output tokens.
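A quick way to read those prices is to cost out a single request. The sketch below uses the list prices quoted above. The token counts are hypothetical, and the calculation ignores any discounts or billing details not mentioned in the article.

```python
# USD per million tokens, as quoted in the article.
PRICES_USD_PER_MILLION = {
    "deepseek-v4-flash": {"input": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"input": 1.74, "output": 3.48},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Flat list-price cost in USD for one request."""
    price = PRICES_USD_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical long context request: 200K tokens in, 4K tokens out.
for model in PRICES_USD_PER_MILLION:
    print(f"{model}: ${request_cost(model, 200_000, 4_000):.4f}")
```

At these list prices Pro costs roughly 12 times as much as Flash for the same token counts, since both the input and the output price differ by the same ratio.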

That puts Flash among the cheapest small model options and Pro among the cheapest frontier class options in the market. The release therefore pressures two segments at once. It undercuts low cost utility models on price while also squeezing premium reasoning models on cost per useful output.

This is where DeepSeek’s efficiency work connects directly to business logic. Lower FLOPs and smaller KV cache requirements do not just improve engineering elegance. They open room for lower API prices and broader deployment margins. DeepSeek appears to treat inference economics as a product feature, not as a backend optimization hidden from users.

Why 1M context matters for agents

DeepSeek frames V4 as an agent ready model family. The company says it integrates with tools such as Claude Code, OpenClaw and OpenCode, and that the model already drives in house agentic coding workflows. NVIDIA extends that view and points to long context coding, document analysis, retrieval and orchestration as the natural use cases.

The relevance is straightforward. An agent rarely works from a single prompt. It operates over a rolling state. A million token context window makes it easier to keep large codebases, long documents, tool traces and planning state in one session. That does not eliminate retrieval, memory systems or tool design. It reduces the frequency of context eviction and lowers the overhead of stitching fragmented state back together.
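The effect on context eviction can be illustrated with a toy rolling state. This is a sketch under stated assumptions: the item sizes are invented, the oldest-first eviction policy is just one simple choice, and the class names are hypothetical rather than taken from any agent framework.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List


@dataclass
class ContextItem:
    label: str
    tokens: int


class RollingContext:
    """Keeps items in arrival order and evicts the oldest when over budget."""

    def __init__(self, budget_tokens: int) -> None:
        self.budget_tokens = budget_tokens
        self.items: Deque[ContextItem] = deque()
        self.used_tokens = 0
        self.evictions = 0

    def add(self, item: ContextItem) -> None:
        self.items.append(item)
        self.used_tokens += item.tokens
        # Drop the oldest items until the session fits, keeping the newest one.
        while self.used_tokens > self.budget_tokens and len(self.items) > 1:
            dropped = self.items.popleft()
            self.used_tokens -= dropped.tokens
            self.evictions += 1


def count_evictions(budget_tokens: int, trace: List[ContextItem]) -> int:
    context = RollingContext(budget_tokens)
    for item in trace:
        context.add(item)
    return context.evictions


# Hypothetical agent session; the token counts are made up for illustration.
TRACE = [
    ContextItem("repo snapshot", 400_000),
    ContextItem("design doc", 150_000),
    ContextItem("tool logs", 200_000),
    ContextItem("test output", 100_000),
]

for budget in (128_000, 1_000_000):
    print(f"budget {budget:,}: {count_evictions(budget, TRACE)} evictions")
```

With the smaller budget every earlier item is pushed out as new material arrives, while the 1M budget holds the whole session. Anything evicted would have to be retrieved or summarized again later, which is the stitching overhead described above.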

Still, long context should not be confused with guaranteed long horizon reasoning. A model can ingest far more material than it can consistently prioritize. DeepSeek’s architectural compression aims to improve that balance, but practical performance will still depend on prompt structure, tool use and task decomposition.

Deployment paths show the model is built for infrastructure scale

The release lands with immediate API support and local deployment options. DeepSeek says users can keep the base URL and switch the model name to deepseek-v4-pro or deepseek-v4-flash. The API supports both the OpenAI ChatCompletions and Anthropic API formats. That reduces switching cost for teams already standardized on those schemas.
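In practice the switch amounts to changing one field in an otherwise unchanged request. The sketch below builds an OpenAI ChatCompletions style request with the standard library and does not send it. The base URL and endpoint path are assumptions carried over from DeepSeek's existing API, since the article only says the base URL stays the same, and the helper name and API key placeholder are illustrative.

```python
import json
import urllib.request

# Assumption: DeepSeek's existing base URL and the OpenAI-style endpoint path.
BASE_URL = "https://api.deepseek.com"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a ChatCompletions-style POST request."""
    payload = {
        "model": model,  # the only field that changes when moving to V4
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request(
    "deepseek-v4-flash", "Summarize this log file.", api_key="YOUR_API_KEY"
)
print(request.full_url)
print(json.loads(request.data)["model"])
# Sending is omitted on purpose; urllib.request.urlopen(request) would make the call.
```

The Anthropic format is not shown here. Teams on that schema would make the equivalent model name change in their existing client configuration.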

For self hosting, the model cards provide encoding scripts, output parsing guidance and inference documentation. DeepSeek does not ship a Jinja format chat template in this release. Instead, it supplies a dedicated encoding folder with Python scripts and test cases for OpenAI compatible message formatting.

NVIDIA’s response adds another layer. The company positions Blackwell as a natural target for V4 scale inference and reports more than 150 tokens per second per user for DeepSeek V4 Pro on NVIDIA GB200 NVL72 in out of the box tests. It also highlights support through NIM, SGLang and vLLM, including recipes for low latency, balanced throughput, long context workloads and prefill decode disaggregation up to 100 plus GPUs.

That framing matters because it reveals where the economic battle is moving. As open models converge toward high capability, infrastructure strategy becomes a larger differentiator. Model quality still matters. Deployment efficiency increasingly matters just as much.

Open weights keep DeepSeek relevant beyond its own API

The MIT License is not a side note. It is central to the DeepSeek V4 proposition. Open weights let developers download, modify and run the model locally or on their own clusters. That creates a second competitive lane beyond hosted API pricing. It enables internal deployment, fine tuning, private coding workflows and experimentation with quantization.

Willison notes that DeepSeek V4 Pro is 865GB on Hugging Face and Flash is 160GB. Those sizes put real hardware limits on local use today, especially for Pro. But they also define the next optimization frontier. If quantized community builds arrive quickly, Flash in particular could become attractive for serious local deployment on high memory workstations and prosumer setups.
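Those download sizes can be related back to the parameter counts with some rough arithmetic. The sketch below assumes 1 GB means 10^9 bytes and that the whole download is model weights; both are simplifications, and the names are illustrative.

```python
# (download size in GB, total parameters in billions), as quoted in the article.
CHECKPOINTS = {
    "DeepSeek V4 Pro": (865, 1600),
    "DeepSeek V4 Flash": (160, 284),
}


def bits_per_parameter(size_gb: float, params_billions: float) -> float:
    """Average storage per parameter, assuming 1 GB = 1e9 bytes."""
    total_bits = size_gb * 1e9 * 8
    total_params = params_billions * 1e9
    return total_bits / total_params


for name, (size_gb, params_b) in CHECKPOINTS.items():
    print(f"{name}: about {bits_per_parameter(size_gb, params_b):.1f} bits per parameter")
```

Both models come out somewhat above 4 bits per parameter on average. That would be consistent with weights already stored largely in low precision formats, although file sizes alone do not establish the exact format, and it suggests further quantization may have less headroom than the raw parameter counts imply.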

The geopolitical layer is present but not decisive for the product analysis

Coverage around DeepSeek still pulls in a second narrative: chip sovereignty and Chinese AI competition. CNBC highlights that Huawei said its latest Ascend based AI cluster can support V4. It remains unclear how extensively domestic chips were used for training relative to NVIDIA hardware. That uncertainty matters for industrial policy and supply chain analysis. It matters less for the immediate technical reading of the release.

The more relevant market point is competitive pressure. DeepSeek no longer operates as a surprising outlier. It now sits in an increasingly crowded field of Chinese open model competitors, while also competing directly with US closed model vendors on cost performance. That changes the framing. The issue is no longer whether low cost open models can be good. The issue is how far they can compress the gap to top proprietary systems while preserving deployment flexibility.

What to watch next

Three questions will determine how significant DeepSeek V4 becomes.

- First, whether independent evaluations confirm the long context and agentic gains outside DeepSeek’s own benchmarks.

- Second, whether Flash develops into the practical default through quantization and local deployment recipes.

- Third, whether pricing remains stable once demand, infrastructure cost and competitive responses settle.

There is also a platform transition to track. DeepSeek says deepseek-chat and deepseek-reasoner will be fully retired after July 24, 2026 at 15:59 UTC. At the moment they route to deepseek-v4-flash in non thinking and thinking modes, respectively. That signals a consolidation around the V4 family as the default surface for both chat and reasoning use cases.
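Teams with the legacy names hard coded may want a small guard around the cutoff. The sketch below is a hypothetical helper, not part of any DeepSeek SDK. It assumes deepseek-chat maps to the non thinking mode and deepseek-reasoner to the thinking mode, and it does not show how a mode is selected in an actual API call.

```python
from datetime import datetime, timezone
from typing import Optional, Tuple

# Retirement time as stated in the article.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Assumed mapping: legacy name -> (current target model, reasoning mode label).
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", "non-thinking"),
    "deepseek-reasoner": ("deepseek-v4-flash", "thinking"),
}


def resolve_model(name: str, now: datetime) -> Tuple[str, Optional[str]]:
    """Return the model a name resolves to, failing loudly after retirement."""
    if name not in LEGACY_ROUTES:
        return name, None  # not a legacy alias; pass through unchanged
    if now > RETIREMENT:
        target, mode = LEGACY_ROUTES[name]
        raise ValueError(f"{name} is retired; use {target} ({mode}) instead")
    return LEGACY_ROUTES[name]


print(resolve_model("deepseek-chat", datetime(2026, 1, 1, tzinfo=timezone.utc)))
```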