Introduction

The landscape of artificial intelligence is shifting rapidly from simple chatbots to autonomous agents capable of complex reasoning and execution. In early 2026, a new contender emerged from the competitive Chinese AI sector that challenges the notion that “bigger is always better.” That contender is Step 3.5 Flash.

Developed by the Shanghai-based AI lab StepFun, Step 3.5 Flash positions itself as a high-performance, open-source foundation model designed not just to read and write, but to think and act. While the industry has been obsessed with trillion-parameter behemoths, StepFun has taken a different approach, focusing on “intelligence density” and architectural efficiency. The result is a model that claims to rival top-tier proprietary systems in reasoning depth while maintaining the agility required for real-time interaction.

But what exactly is under the hood of this model? Who are the minds behind it, and does it truly live up to the hype of delivering frontier capabilities on consumer hardware? In this deep dive, we explore the architecture, capabilities, and potential drawbacks of Step 3.5 Flash.

What is Step 3.5 Flash?

Step 3.5 Flash is a large language model (LLM) built on a sparse Mixture of Experts (MoE) architecture. Unlike dense models that activate every parameter for every token, an MoE model acts like a team of specialists, with a router activating only the most relevant “experts” for each token it processes.

The specifications of Step 3.5 Flash are particularly intriguing for developers and hardware enthusiasts:

- Total Parameters: 196 Billion.

- Active Parameters: 11 Billion per token.

- Context Window: 256,000 tokens.

- Throughput: 100–300 tokens per second (peaking at 350 tok/s).

This specific configuration, activating only 11B parameters out of a massive 196B pool, is what StepFun calls “intelligence density.” It allows the model to draw on a vast reservoir of knowledge and reasoning patterns without the per-token compute cost usually associated with models of this size, although all 196B weights still have to be held in memory. Essentially, it runs with the speed of a lightweight model but thinks with the depth of a heavyweight.
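To put the two headline numbers side by side, here is a small arithmetic sketch. It uses only the parameter counts quoted above; the function name is ours, chosen for illustration.

```python
# Parameter counts quoted in the article.
TOTAL_PARAMS = 196e9
ACTIVE_PARAMS = 11e9


def active_fraction(active: float, total: float) -> float:
    """Share of the model's weights that take part in one token's forward pass."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active per token: {fraction:.1%} of all weights")
print(f"Roughly 1 in {round(1 / fraction)} parameters is used per token")
```

In other words, each token touches only a small slice of the network, which is where the speed comes from.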

Who is Behind It?

Step 3.5 Flash is the flagship product of StepFun, an artificial intelligence startup based in Shanghai. In the intensely competitive “War of a Hundred Models” in China, StepFun has carved out a niche by focusing on the intersection of advanced reasoning and practical efficiency.

While competitors like Moonshot AI (with their Kimi K2.5 model) and DeepSeek (with V3.2) have pushed total parameter counts toward the trillion mark, StepFun aims to democratize intelligence. Their philosophy centers on making elite-level AI accessible not just via cloud APIs, but also in local environments. By releasing Step 3.5 Flash under the Apache 2.0 license, they have signaled a strong commitment to the open-source community, inviting developers to build upon their architecture rather than keeping it locked behind a paywall.

What Makes It Different? The Architecture

To understand why Step 3.5 Flash stands out, we have to look at the unique engineering choices made during its development. It is not simply a shrunk-down version of a larger model. It is a purpose-built engine for speed and reasoning.

1. Multi-Token Prediction (MTP-3)

One of the most significant bottlenecks in LLMs is the serial nature of text generation: the model predicts one token at a time. Step 3.5 Flash uses a 3-way Multi-Token Prediction mechanism that lets it predict four tokens in a single forward pass. This is a major factor in how it achieves generation speeds of up to 350 tokens per second, so even long reasoning chains arrive with very little waiting.
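A back-of-the-envelope model shows why predicting several tokens per pass pays off. This sketch assumes the extra predictions behave like speculative drafts that are kept only while every earlier draft was also accepted, and that each draft is accepted independently with the same probability. Neither assumption comes from StepFun's documentation, and the acceptance probabilities below are hypothetical.

```python
def expected_tokens_per_pass(extra_tokens: int, accept_prob: float) -> float:
    """Expected tokens emitted per forward pass.

    One token is always produced. The i-th extra token only counts if it and
    all earlier extra tokens are accepted (independent acceptance is an
    assumption made for this sketch).
    """
    if not 0.0 <= accept_prob <= 1.0:
        raise ValueError("accept_prob must be between 0 and 1")
    return sum(accept_prob ** i for i in range(extra_tokens + 1))


# Three extra tokens on top of the usual one gives the "four per pass" ceiling.
for p in (0.0, 0.5, 0.8, 1.0):
    print(f"accept_prob={p:.1f}: {expected_tokens_per_pass(3, p):.2f} tokens per pass")
```

Under these assumptions the gain ranges from none (every draft rejected) to a full 4x (every draft accepted), so real-world speed depends heavily on how predictable the text is.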

2. Hybrid Sliding Window Attention

Handling long contexts (like reading a whole book or a massive codebase) usually requires immense computational power. Step 3.5 Flash addresses this with a 3:1 Sliding Window Attention (SWA) ratio. It integrates three SWA layers for every full-attention layer. This hybrid approach significantly reduces the memory overhead (VRAM usage) while ensuring the model doesn’t forget information across its 256K context window. It allows the model to maintain consistent performance whether it is processing a short query or analyzing a massive dataset.
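The memory saving can be illustrated with a toy count of cached positions. A sliding-window layer only needs keys and values for the most recent window of tokens, while a full-attention layer keeps them for the whole context. The layer count, the window size, and the exact ordering of layers within each group of four are hypothetical values picked for this sketch, not published specifications.

```python
def layer_pattern(num_layers: int, swa_per_full: int = 3) -> list[str]:
    """Repeat `swa_per_full` sliding-window layers followed by one full-attention layer."""
    period = swa_per_full + 1
    return ["full" if (i + 1) % period == 0 else "swa" for i in range(num_layers)]


def cached_tokens(pattern: list[str], context_len: int, window: int) -> int:
    """Total key/value positions kept across all layers for one sequence."""
    total = 0
    for kind in pattern:
        # Full attention keeps the whole context; SWA keeps at most one window.
        total += context_len if kind == "full" else min(window, context_len)
    return total


NUM_LAYERS = 8      # hypothetical, chosen to keep the output short
WINDOW = 4_096      # hypothetical window size
CONTEXT = 256_000   # context length quoted in the article

pattern = layer_pattern(NUM_LAYERS)
hybrid = cached_tokens(pattern, CONTEXT, WINDOW)
all_full = cached_tokens(["full"] * NUM_LAYERS, CONTEXT, WINDOW)

print(pattern)
print(f"Hybrid cache is {hybrid / all_full:.1%} of an all-full-attention cache")
```

With a window much smaller than the context, the cache is dominated by the one-in-four full-attention layers, so the total approaches a quarter of the all-full-attention figure.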

3. Sparse MoE Routing

Unlike traditional dense models, Step 3.5 Flash uses a fine-grained routing strategy. By selectively activating only 11B parameters, it decouples global model capacity from inference cost. This is crucial for local deployment, as it lowers the compute barrier for running a model with 196B parameters’ worth of knowledge.
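For readers who have not seen expert routing before, here is a minimal sketch of one common scheme: score every expert, keep the top k, and renormalize their weights. The article does not describe StepFun's actual routing function, so the softmax-then-top-k choice, the expert count, and the toy experts are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k):
    """Return [(expert_index, weight)] for the top_k experts, weights summing to 1."""
    probs = softmax(router_logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    kept = sum(probs[i] for i in ranked)
    return [(i, probs[i] / kept) for i in ranked]


def moe_layer(x, router_logits, experts, top_k=2):
    """Run only the selected experts and mix their outputs by routing weight."""
    return sum(weight * experts[i](x) for i, weight in route_token(router_logits, top_k))


# Hypothetical toy experts: expert k simply multiplies its input by k + 1.
experts = [lambda x, k=k: x * k for k in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]  # made-up router scores

chosen = route_token(logits, top_k=2)
print("Selected experts:", [index for index, _ in chosen])
print("Layer output:", moe_layer(10.0, logits, experts))
```

Only two of the eight toy experts run for this token; the other six cost nothing at inference time, which is the decoupling of capacity from cost described above.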

What Does It Do Better?

StepFun has optimized Step 3.5 Flash for specific high-value tasks, moving beyond casual conversation into the realm of professional agentic workflows.

Agentic Capabilities and Think-and-Act

While chatbots are built for reading, agents must reason and act. Step 3.5 Flash is engineered for what StepFun describes as a Think-and-Act synergy. It doesn’t just execute commands. It orchestrates tools.

In demonstrated scenarios, such as an open-world stock investment task, the model acted as a central controller. It orchestrated over 80 different tools to aggregate market data, executed raw Python code to calculate financial metrics, and then triggered cloud storage protocols to archive the results. This ability to pivot seamlessly between reasoning, coding, and API calls is a significant step forward for open-source models.

Coding and Development

For developers, Step 3.5 Flash offers a compelling alternative to closed-source giants. It achieves a score of 74.4% on the SWE-bench Verified benchmark, a rigorous test of a model’s ability to solve real-world software engineering problems. It is compatible with industry-standard environments like Claude Code and Codex, allowing it to handle repository-level tasks, map out dependencies, and navigate deep structural codebases.

Use cases highlighted by the team include generating a Tactical Weather Intelligence Dashboard using WebGL 2.0 and creating a Three.js Procedural Ocean Engine. These aren’t just snippets of code. They are complex, multi-file engineering objectives that require maintaining a mental model of the entire project.

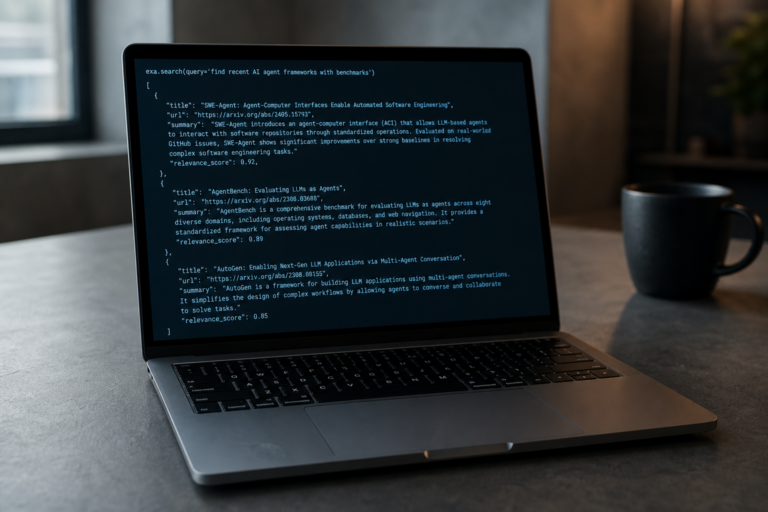

Deep Research

The model excels at long-form content creation and research. In tests using the Scale AI Research Rubrics, Step 3.5 Flash demonstrated the ability to synthesize comprehensive research reports of approximately 10,000 words. By utilizing a ReAct architecture (Reasoning + Acting), it can plan a research workflow, search the web, reflect on the findings, and write expert-grade guides, rivaling the quality of proprietary Deep Research tools from major US tech firms.
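The ReAct pattern itself is simple enough to sketch in a few lines: the model alternates between a thought, a tool call, and an observation until it decides it is done. In the sketch below the model is replaced by a scripted stand-in, and the two tools, their names, and their outputs are hypothetical placeholders rather than anything StepFun ships.

```python
from typing import Callable


# Hypothetical tools; a real agent would call a search API, a code runner, etc.
def search(query: str) -> str:
    return f"3 sources found for '{query}'"


def write_report(notes: str) -> str:
    return f"REPORT based on: {notes}"


TOOLS: dict[str, Callable[[str], str]] = {"search": search, "write_report": write_report}


def scripted_policy(history: list[str]) -> tuple[str, str, str]:
    """Stand-in for the model: returns (thought, tool_name, tool_input)."""
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return ("I need sources first.", "search", "sliding window attention")
    return ("I have enough to write.", "write_report", observations[-1])


def react(task: str, policy, max_steps: int = 5) -> list[str]:
    """Alternate Thought -> Action -> Observation until the report is written."""
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        thought, tool, arg = policy(history)
        history.append(f"Thought: {thought}")
        history.append(f"Action: {tool}({arg!r})")
        history.append(f"Observation: {TOOLS[tool](arg)}")
        if tool == "write_report":
            break
    return history


trace = react("Summarise sliding window attention", scripted_policy)
print("\n".join(trace))
```

Swapping the scripted policy for a real model call, and the toy tools for real ones, gives the basic shape of the research and tool-orchestration workflows described in this section.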

Local Deployment on Consumer Hardware

Perhaps the most exciting feature for privacy-focused users is the ability to run Step 3.5 Flash locally. Because it activates only 11B parameters per token, the per-token compute is within reach of high-end consumer hardware that has enough memory to hold the weights, such as the Mac Studio with an M4 Max chip or NVIDIA DGX Spark systems. This brings elite-level intelligence to local environments, ensuring data privacy without sacrificing the performance typically reserved for cloud-based supercomputers.

Criticism and Challenges

Despite the impressive specifications and benchmark scores, Step 3.5 Flash is not without its challenges and criticisms. As with any technology in the rapidly evolving AI space, potential users should be aware of the nuances.

The Active vs. Total Parameter Confusion

While the marketing highlights the efficiency of the 11B active parameters, the model still consists of 196B total parameters. For local deployment, this presents a storage and memory bandwidth challenge. Even if the compute requirement is low (11B), the memory required to load the full 196B weights (even if quantized) is substantial. This limits the consumer hardware claim to the very top tier of enthusiast equipment, like the M4 Max with 128 GB or more of unified memory, leaving standard gaming PCs out of the equation.
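The scale of the problem is easy to estimate. The sketch below multiplies the 196B parameter count by a few common weight precisions. It counts the weights only, ignoring the KV cache, activations, and any per-block quantization metadata, so real requirements will be somewhat higher.

```python
TOTAL_PARAMS = 196e9  # total parameter count quoted in the article


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


for label, bits in (("16-bit", 16), ("8-bit", 8), ("4-bit", 4)):
    print(f"{label}: {weight_memory_gb(TOTAL_PARAMS, bits):.0f} GB")
```

Only the 4-bit figure, at 98 GB, fits inside a 128 GB machine with room to spare, which is why the local-deployment story depends on both quantization and top-tier memory configurations.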

Software Integration Teething Issues

As a relatively new architecture, software support is playing catch-up. The documentation notes that support for its signature Multi-Token Prediction (MTP-3) is not yet fully integrated into popular inference engines like vLLM. While the team is actively working on pull requests to integrate these features, early adopters may face more friction when deploying the model with standard, off-the-shelf tooling than they would with more established architectures like Llama.

The Chinese Model Context

For Western enterprises, the origin of the model can sometimes be a point of friction regarding data governance and regulatory compliance. The model is open-source (Apache 2.0) and can be run locally, which largely addresses data privacy concerns since no data leaves the premises. Even so, organizations with strict procurement policies regarding software origin may still hesitate. For individual developers and researchers, however, the technical merits often outweigh these geopolitical considerations.

Benchmark vs. Real World

While Step 3.5 Flash performs exceptionally well on benchmarks like SWE-bench and AIME 2025, it is worth noting that it trails behind the absolute pinnacle of proprietary models (like the GPT-5 series or full-sized Gemini 3 variants) in certain pure reasoning tasks. It is positioned as a Flash model, optimizing for the balance of speed and intelligence rather than raw, unconstrained cognitive power. Users requiring the absolute maximum reasoning depth regardless of latency or cost might still look elsewhere.

Conclusion

Step 3.5 Flash represents a mature phase in the open-source AI revolution. It moves the conversation away from raw parameter counts and towards architectural efficiency and practical utility. By combining the speed of a small model with the knowledge base of a large one, StepFun has created a versatile tool that is equally at home powering a real-time coding agent as it is generating deep research reports.

For developers, the ability to run a model of this caliber locally on an M4 Max is a liberating prospect. It offers a glimpse into a future where high-level intelligence is decentralized, private, and incredibly fast. While it demands high-end hardware and is still maturing in terms of software ecosystem support, Step 3.5 Flash is a compelling proof of concept that intelligence density is the metric to watch in 2026.