Periodic Labs has entered the conversation around artificial intelligence with an unusually ambitious goal: building an AI scientist, a system that can form hypotheses, run experiments through autonomous laboratories and learn from results grounded in the physical world.

That vision places Periodic Labs at the intersection of several fast-moving fields: generative AI, robotics, autonomous labs, materials science and industrial R&D. It also puts the company in the middle of a deeper debate about what scientific discovery really is. Can science be accelerated the way software development has been accelerated? Can machine intelligence move beyond pattern recognition on internet data and become an active participant in experimentation? And if it can, what do we gain and what might we lose?

These are not abstract questions. They go to the heart of how future breakthroughs in semiconductors, superconductors, energy systems and advanced materials may be discovered.

What Periodic Labs is trying to build

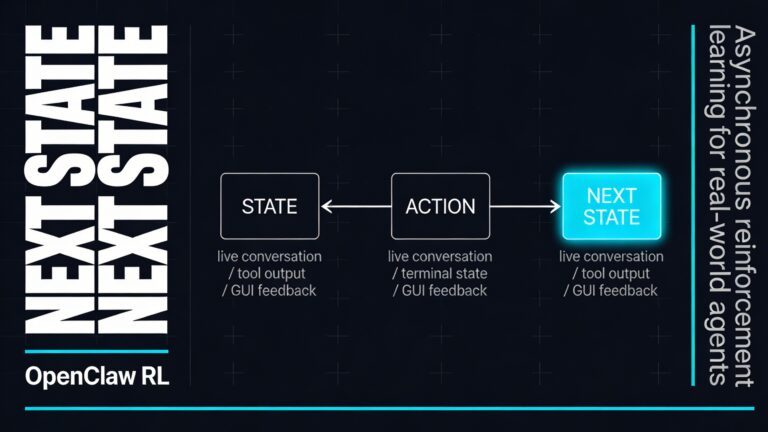

Periodic Labs presents science as a loop of conjecture, experiment and learning. That framing matters. It suggests that intelligence alone is not enough. A model can generate plausible ideas, but knowledge only becomes useful when those ideas are tested against reality. In other words, discovery requires action, feedback and iteration.

This is where the company’s central idea comes in. Periodic Labs is not only building AI models for science. It is also investing in autonomous laboratories that allow those models to run experiments and gather new data. That is a major shift from the current generation of frontier AI systems, which have largely been trained on the internet and other finite digital corpora.

From Periodic Labs’ perspective, the web has already been mined heavily. Better training techniques can still extract more value from existing data, but there is a limit to what can be learned from text alone. Scientific progress eventually depends on testing new ideas in the world. Autonomous labs therefore become more than a nice add-on. They become the mechanism for generating fresh, high-quality data that does not already exist online.

This also includes something traditional science often underreports: negative results. Failed experiments are rarely published, yet they are invaluable for learning. An AI scientist that has access to both successful and unsuccessful outcomes may be able to navigate research spaces faster than systems trained only on polished scientific literature.

Why the physical sciences come first

Periodic Labs is starting in the physical sciences, and that choice is strategic. Physics, chemistry and materials science offer a relatively structured environment for machine-driven discovery. Experiments often produce measurable signals. Simulations can approximate many systems before real-world testing begins. Results can be checked against nature in a fairly direct way.

That makes these disciplines more machine-compatible than areas where outcomes are ambiguous, slow to emerge or highly context-dependent. AI has already shown strong performance in domains with verifiable answers such as mathematics and code generation. In the physical sciences, nature itself becomes the verifier.

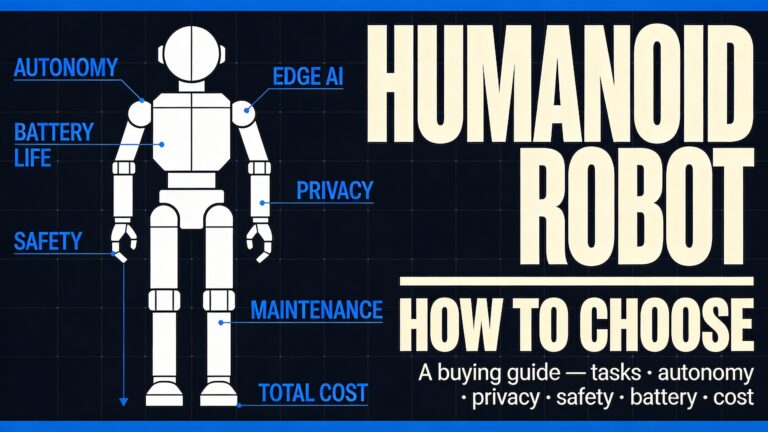

There is another reason this matters. Many of the bottlenecks in modern technology are physical. Better batteries, more efficient chips, improved catalysts, heat-resistant materials and next-generation superconductors all depend on our ability to design and manipulate matter. If AI can help researchers move through this design space more efficiently, the downstream consequences could be enormous.

Periodic Labs points to high-temperature superconductors as one of its ambitions. That is a compelling example because the payoff would be transformative. More practical superconducting materials could reduce energy loss in grids, improve transportation systems and unlock entirely new engineering possibilities. But the broader play is even larger. Automating parts of materials discovery could affect semiconductors, fusion research and aerospace engineering.

From AI model to scientific agent

Much of the AI industry still treats models as prediction engines. You prompt them, they respond. In science, that approach has obvious uses, from literature review to data analysis. But Periodic Labs is aiming for something more agentic. Its systems are meant to operate with tools, interact with instruments and support faster cycles of experiment and interpretation.

That idea mirrors a larger trend in artificial intelligence: the move from static language models toward AI agents that can plan, act and adapt. In a scientific setting, such agents could propose candidate materials, design experiments, analyze measurement data and suggest the next step in a sequence.

The company has also signaled that it is already applying this approach in industry. One example involves helping a semiconductor manufacturer struggling with chip heat dissipation. In practice, that means training custom agents to help engineers make sense of experimental data and iterate more quickly. This is important because it shows how the AI scientist concept may first succeed not in dramatic moonshots but in focused industrial workflows where data complexity slows decision-making.

Why autonomous labs matter so much

The concept of autonomous laboratories has been around for some time, but AI gives it new momentum. In a traditional lab, scientific progress depends heavily on people moving between instruments, notebooks, software tools and interpretation. In an autonomous or semi-autonomous lab, much of that loop can be integrated. Instruments collect data, software analyzes it, models suggest follow-up experiments and the cycle continues with less friction.

For Periodic Labs, autonomous labs are not simply about automation for efficiency’s sake. They are the source of original data and the bridge between digital intelligence and physical reality. Each experiment can generate large volumes of structured and unstructured information. Over time, that creates a feedback-rich environment for training scientific systems.

There is a powerful flywheel here. Better AI can propose better experiments. Better experiments generate better data. Better data improves the AI. If this loop works at scale, it could become one of the most important architectures for scientific discovery in the coming decade.
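To make the loop concrete, here is a minimal sketch of a propose, measure and learn cycle in Python. It is purely illustrative and is not Periodic Labs’ system: `run_experiment`, `propose` and `closed_loop` are hypothetical names, the "instrument" is a made-up formula with a hidden optimum, and the proposal rule is a simple stand-in for whatever model a real platform would use.

```python
import random


def run_experiment(temperature_c: float) -> float:
    """Hypothetical stand-in for a lab instrument.

    Returns a score from a made-up response curve whose hidden
    optimum sits at 650 C. A real lab would return measurements.
    """
    return -((temperature_c - 650.0) ** 2) / 1000.0


def propose(history, rng, low=300.0, high=900.0):
    """Conjecture step: pick the next condition to test.

    With no data yet, explore at random. Otherwise sample near the
    best result so far and clamp the value to the allowed range.
    """
    if not history:
        return rng.uniform(low, high)
    best_x, _ = max(history, key=lambda record: record[1])
    candidate = best_x + rng.gauss(0.0, 40.0)
    return min(high, max(low, candidate))


def closed_loop(n_rounds=30, seed=0):
    """Run the cycle and return every (condition, score) pair."""
    rng = random.Random(seed)
    history = []  # every outcome is kept, including poor ones
    for _ in range(n_rounds):
        x = propose(history, rng)      # conjecture
        y = run_experiment(x)          # experiment
        history.append((x, y))         # record for the next round
    return history


history = closed_loop()
best_x, best_y = max(history, key=lambda record: record[1])
print(f"best temperature: {best_x:.1f} C, score: {best_y:.3f}")
```

The sketch keeps the full history, failed trials included, even though this simple proposal rule only looks at the best result. A more capable system would presumably learn from the whole record, which is where captured negative results would start to pay off.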

The team behind the company

Periodic Labs has attracted attention partly because of the experience of its founding team. According to the company, the team includes people involved in ChatGPT, DeepMind’s GNoME, OpenAI’s Operator, the neural attention mechanism, MatterGen and autonomous physics labs, alongside researchers connected to major materials discoveries.

That matters for two reasons. First, it suggests the company understands both modern AI system design and the realities of scientific experimentation. Second, it reflects a broader migration of talent from major AI labs into startup environments focused on domain-specific applications. The next phase of AI may not be defined only by larger general models. It may also be shaped by highly specialized teams building deep vertical systems for science, robotics and advanced manufacturing.

The company’s investor list reinforces that sense of momentum. Backing from major venture firms and well-known technology figures indicates confidence that scientific AI could become a commercially and strategically important category.

The promise of the AI scientist

The phrase “AI scientist” is provocative because it implies more than assistance. It implies partial autonomy in discovery. If such systems mature, the benefits could be substantial.

Faster iteration

Many scientific fields are bottlenecked by the speed of trial and error. A system that can analyze data continuously and propose the next experiment without long delays may compress research cycles dramatically.

Better use of negative results

Human research culture often rewards positive findings and clear narratives. Machine-driven systems can learn just as effectively from failure, provided the data is captured properly.

More efficient search in vast design spaces

Materials science involves exploring huge numbers of possible structures and compositions. AI can help prioritize where to look, reducing wasted effort.

Closer integration between simulation and experiment

One of the most promising workflows combines computational modeling with real-world validation. AI agents can move between these layers more fluidly than traditional fragmented processes.

Industrial relevance

Unlike some AI science narratives that stay theoretical, Periodic Labs is linking its work to concrete industrial problems. That increases the chance that early value will be measurable.

The limits and criticisms are real

Still, the rise of AI scientists should not be treated as an uncomplicated triumph. Critics have raised serious concerns about what gets lost when science is framed mainly as an optimization problem.

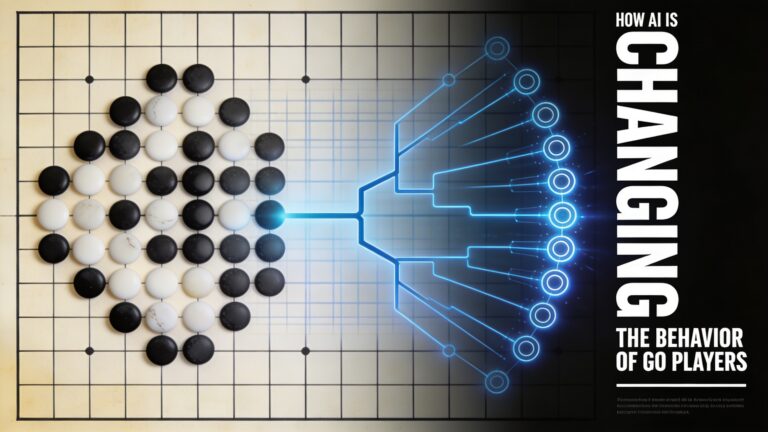

One criticism is that AI excels most in what philosopher Thomas Kuhn described as normal science, the kind of puzzle-solving that works within an established paradigm. Systems can fill in missing pieces, classify patterns and optimize known objectives. But major scientific revolutions do not always emerge from smoother puzzle-solving. They often come from reframing the puzzle altogether.

That kind of shift requires imagination, doubt, intuition and sometimes resistance to prevailing assumptions. It is not obvious that autonomous systems, especially those optimized for measurable performance, are well suited to that role.

Another concern is that science is not just a production line for useful outputs. It is also a cultural and public practice of understanding the world. If scientific work becomes increasingly machine-generated, privately controlled and evaluated mainly through market value, the meaning of knowledge itself could narrow. Efficiency may improve while public interpretability and intellectual openness decline.

There is also the question of visibility. Laboratory work often contains tacit knowledge, improvisation and judgment that are difficult to formalize. Even highly automated environments still rely on human expertise, maintenance, interpretation and creative problem solving. The story of fully automated science can therefore become misleading if it hides the people doing invisible but essential work.

The sovereignty question

A less-discussed but highly important issue is knowledge sovereignty. As research increasingly depends on advanced AI infrastructure, ownership and control become strategic concerns. If scientific capability shifts from public institutions toward private platforms, societies may become dependent on companies for the production and interpretation of knowledge.

This matters in universities, national labs and industry alike. Data pipelines, models, cloud infrastructure and lab automation systems are not neutral. They embody governance choices, economic incentives and political pressures. A future in which scientific discovery is deeply mediated by proprietary AI systems raises difficult questions about openness, accountability and resilience.

For that reason, the rise of companies like Periodic Labs should be watched not only as a technology story but also as a governance story. The AI scientist may become an extraordinary research instrument, but who builds it, who controls it and who benefits from it will shape its long-term impact.

What to watch next

Periodic Labs is still early, so the most important question is not whether the vision sounds impressive. It is whether the company can demonstrate repeatable scientific and industrial outcomes.

There are a few signs worth tracking.

- Published discoveries or validated breakthroughs that show the system can contribute more than workflow automation.

- Evidence of successful autonomous experimentation where AI meaningfully improves the design and interpretation of lab work.

- Industrial case studies showing measurable acceleration in areas such as semiconductors, materials optimization or thermal management.

- Integration of simulation, lab robotics and foundation models into a coherent platform rather than isolated tools.

- Scientific transparency around how results are validated, reproduced and shared.

If the company can deliver across these dimensions, it may help define a new category in AI-driven R&D. If it cannot, it may still contribute useful tools while reminding the industry that the hardest part of science is not generating text or predictions. It is confronting reality.