If your prompts felt rock solid on Claude Opus 4.6 and now produce slightly off results on 4.7, you are not imagining it. The model did not get worse. It got more literal. Where 4.6 happily inferred your intent and filled in the blanks, 4.7 reads your instruction as a contract and sticks to it. That single behavioral shift changes how you should write almost every prompt.

This guide walks through what actually changed, why it matters, and how to adapt your prompting across the four contexts most people work in: the chat interface, Claude Code, the API, and long-running co-work projects.

What changed in Claude Opus 4.7

Anthropic tuned 4.7 for predictability. The model interprets prompts more literally, scopes its responses tighter, and volunteers less unsolicited information. It is more direct, less validation-heavy, and uses fewer emoji by default. On benchmarks it is genuinely stronger, scoring 87.6% on SWE-bench Verified compared with 80.8% on 4.6, and the new xhigh effort level at 100k tokens already beats 4.6 max at 200k tokens.

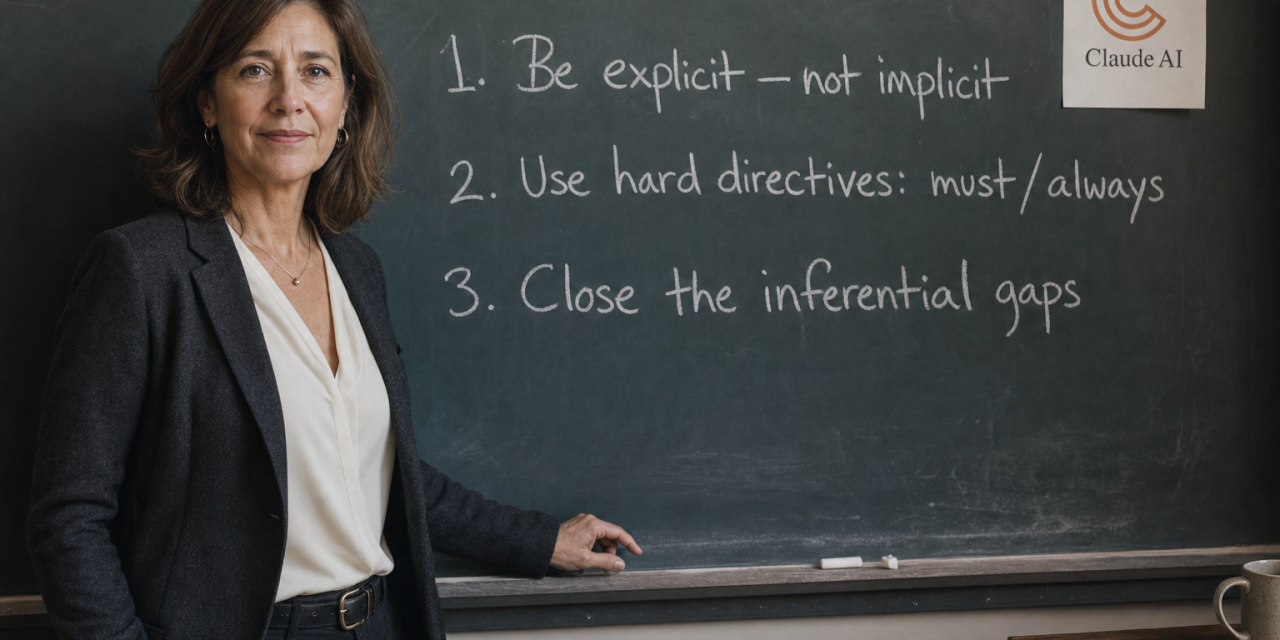

A few behaviors are worth flagging before you touch a prompt:

- Literal instruction following. Soft language like “consider,” “you might,” or “feel free to” is now interpreted as optional. Directives like “you must” or “always” are interpreted as required.

- Length calibrated to perceived complexity. Without explicit guidance, 4.7 produces shorter outputs on tasks it judges simple.

- Stricter scope. If you list three tasks, it does three tasks. It will not generalize to a fourth that seems related.

- Adaptive thinking, off by default. Extended thinking with fixed token budgets is gone. Setting a sampling parameter such as temperature, top_p, or top_k to a non-default value now returns a 400 error.

The fix across every surface is the same idea expressed four different ways: close the inferential gaps that 4.6 used to close for you.
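One way to find those gaps in existing prompts is to scan for the soft phrasing listed above. The sketch below is a hypothetical helper, not an Anthropic tool: the name `find_soft_language` and the phrase list are assumptions for illustration, seeded with the three examples from this guide.

```python
import re

# Phrases this guide names as reading as optional; extend for your own prompts.
SOFT_PHRASES = ("consider", "you might", "feel free to")


def find_soft_language(prompt: str) -> list[tuple[int, str]]:
    """Return (line_number, phrase) pairs for each soft phrase found."""
    hits = []
    for line_no, line in enumerate(prompt.splitlines(), start=1):
        for phrase in SOFT_PHRASES:
            # Word boundaries keep "consider" from matching "considerable".
            if re.search(rf"\b{re.escape(phrase)}\b", line, flags=re.IGNORECASE):
                hits.append((line_no, phrase))
    return hits


if __name__ == "__main__":
    draft = "Consider adding tests.\nYou must update the changelog."
    for line_no, phrase in find_soft_language(draft):
        print(f"line {line_no}: '{phrase}' reads as optional")
```

A flagged phrase is not automatically wrong. It is a prompt to decide whether the instruction is truly optional or should be rewritten as a directive.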

Prompting 4.7 in chat

The chat interface is where the difference shows up first because casual prompts used to work fine. Now they often produce minimal edits when you wanted a real rewrite.

State scope, not just the task. “Improve this proposal” will get you a few word swaps. Instead, describe the outcome: “Rewrite this proposal from scratch, tighten the argument, cut anything that does not support the main recommendation, and make the opening hook stronger in the first sentence.”

Use negative constraints. 4.7 takes them seriously. Lines like “do not change the tone,” “do not add new sections,” or “do not explain what you changed” actually hold.

Ask the model to ask first. For complex work where you are not sure what scope to specify, try: “Before you start, ask me any clarifying questions you need to produce the best output. Do not assume.” 4.6 often skipped this step. 4.7 follows it reliably.

Invite the observations you want. 4.6 flagged issues proactively by default; 4.7 does not. Bring that behavior back on demand: “After completing the task, tell me anything you noticed that I should be aware of but did not ask about.”

Prompting 4.7 in Claude Code

Coding is where literal instruction following is both the biggest superpower and the easiest place to trip up. The model will not touch adjacent files unless you say so, and it will not infer test coverage you did not request.

Make CLAUDE.md do real work. Anything you used to repeat mid-session belongs in your standing instruction file: preferred language version, file structure conventions, when to run tests versus just write them, whether to refactor adjacent code, and how to handle uncertainty. 4.7 will follow those defaults from the start as long as they are written down.

Describe the completed outcome. “Add authentication” is underspecified. A useful prompt names the endpoint, the verification mechanism, the error path, the test coverage, and the scope fence. That last piece, “do not touch any other endpoints,” is exactly what 4.6 used to assume and 4.7 needs to be told.
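The five parts named above can be kept in a small structure so that none of them gets dropped. This is a sketch only: `TaskSpec`, its field names, and the example endpoint and values are hypothetical, chosen to show the shape of a fully specified request.

```python
from dataclasses import dataclass


@dataclass
class TaskSpec:
    """Hypothetical container for the five parts of a fully specified task."""
    endpoint: str
    verification: str
    error_path: str
    test_coverage: str
    scope_fence: str

    def to_prompt(self) -> str:
        # One directive per line, so each requirement is stated explicitly.
        return "\n".join([
            f"Add authentication to {self.endpoint}.",
            f"Verification: {self.verification}.",
            f"On failure: {self.error_path}.",
            f"Tests: {self.test_coverage}.",
            f"Scope: {self.scope_fence}.",
        ])


# Illustrative values only; substitute your own endpoint and conventions.
spec = TaskSpec(
    endpoint="POST /orders",
    verification="validate the bearer token on every request",
    error_path="return 401 with a JSON error body",
    test_coverage="cover the valid, expired, and missing token cases",
    scope_fence="do not touch any other endpoints",
)
print(spec.to_prompt())
```

Because every field is required, leaving out the scope fence fails at construction time instead of silently producing an underspecified prompt.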

Use effort levels intentionally. Anthropic recommends starting at xhigh for coding and agentic work, with high as the minimum for most intelligence-sensitive tasks. Low effort suits short, scoped fixes where speed matters more than reasoning. Medium is fine for standard generation. For long refactors, xhigh gives the model room to think across subagents and tool calls. Pair high or xhigh with a generous max output budget, around 64k tokens, so the model is not forced to wrap up early.
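The guidance above amounts to a small lookup table. The sketch below encodes it as data. The task categories, the `pick_effort` helper, the dictionary keys, and the fallback to high for unknown task kinds are all assumptions for illustration; they are not API field names, and the request fields that actually carry these values should be taken from the current API or Claude Code documentation.

```python
# Hypothetical task categories mapped to the effort levels described above.
EFFORT_BY_TASK = {
    "scoped_fix": "low",               # short fixes where speed matters most
    "standard_generation": "medium",
    "intelligence_sensitive": "high",  # recommended minimum for this work
    "coding": "xhigh",                 # recommended starting point
    "agentic": "xhigh",
    "long_refactor": "xhigh",
}

# Roughly the 64k output budget suggested for high and xhigh effort.
GENEROUS_OUTPUT_TOKENS = 64_000


def pick_effort(task_kind: str) -> dict:
    """Return the effort level, plus an output budget for high and xhigh."""
    effort = EFFORT_BY_TASK.get(task_kind, "high")  # default is an assumption
    settings = {"effort": effort}
    if effort in ("high", "xhigh"):
        settings["max_output_tokens"] = GENEROUS_OUTPUT_TOKENS
    return settings
```

Keeping the mapping in one place makes the choice of effort level a reviewed decision instead of a per-request habit.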

Separate planning from execution. For larger refactors, run a planning pass with Opus 4.7 and route execution to a cheaper model. The benefit is not just cost. A separate planning pass lets you catch a literal interpretation that misses the bigger picture before any code gets written.

Prompting 4.7 via the API

If you ship 4.7 inside a product, the literal-instruction behavior is what you want. The work is rewriting system prompts to specify everything you used to leave for the model to figure out.

Audit your system prompts. Look for undefined output formats, vague scope language like “be helpful and thorough,” and missing fallback behavior for requests outside the intended scope. Replace each with a concrete instruction. “Always answer the direct question first, then offer relevant context only if it materially changes the answer” is far more useful than “be thorough.”

Define what to volunteer. If your product needs proactive follow-ups, write the rule into the system prompt: “After answering the user’s question, check whether a closely related fact would change how they interpret the answer. If yes, add it as a brief follow-up note. If no, add nothing.”

Specify response length concretely. “Be thorough” does almost nothing. “Responses should be three to five sentences unless the question genuinely requires more or less” is enforceable.
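Put together, the rules from this section might be assembled into a system prompt like the sketch below. The wording of the first three rules comes from the examples above. The constant names, the wording of the fallback rule, and the `build_system_prompt` helper are hypothetical.

```python
# Each rule is a concrete, checkable instruction rather than "be thorough".
ANSWER_FIRST = (
    "Always answer the direct question first, then offer relevant context "
    "only if it materially changes the answer."
)
FOLLOW_UP = (
    "After answering the user's question, check whether a closely related "
    "fact would change how they interpret the answer. If yes, add it as a "
    "brief follow-up note. If no, add nothing."
)
LENGTH = (
    "Responses should be three to five sentences unless the question "
    "genuinely requires more or less."
)
# Assumed wording for out-of-scope requests; adapt to your product.
FALLBACK = (
    "If a request falls outside this product's scope, say so in one "
    "sentence and do not attempt an answer."
)


def build_system_prompt(product_scope: str) -> str:
    """Join a scope statement and the standing rules into one system prompt."""
    rules = [ANSWER_FIRST, FOLLOW_UP, LENGTH, FALLBACK]
    numbered = [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    return "\n".join([f"Scope: {product_scope}", *numbered])
```

Numbering the rules makes it easier to trace a surprising response back to the specific instruction that produced it.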

Handle the API breaking changes. Remove temperature, top_p, and top_k from your requests. Drop fixed thinking budgets and let adaptive thinking handle reasoning, controlled through the effort parameter. If you display reasoning progress in your UI, opt in explicitly or your users will see a long silent pause before output begins.
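As a migration aid, a request payload can be cleaned before it is sent. The sketch below is plain dictionary handling and calls no SDK. `strip_legacy_params` is a hypothetical helper, the model identifier is a placeholder, and the `thinking` / `budget_tokens` shape is an assumption about how earlier requests configured fixed budgets. The fields that enable adaptive thinking and set effort are deliberately left out; take those from the current API reference.

```python
import copy

SAMPLING_KEYS = ("temperature", "top_p", "top_k")


def strip_legacy_params(payload: dict) -> tuple[dict, list[str]]:
    """Return a cleaned copy of payload and the names of removed fields."""
    cleaned = copy.deepcopy(payload)  # leave the caller's dict untouched
    removed = []
    for key in SAMPLING_KEYS:
        if key in cleaned:
            del cleaned[key]
            removed.append(key)
    # Assumed legacy shape: a thinking block carrying a fixed token budget.
    thinking = cleaned.get("thinking")
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        del cleaned["thinking"]
        removed.append("thinking")
    return cleaned, removed


legacy_request = {
    "model": "your-opus-4.7-model-id",  # placeholder, not a real identifier
    "max_tokens": 1024,
    "temperature": 0.2,
    "top_p": 0.9,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [{"role": "user", "content": "Summarize this ticket."}],
}
cleaned, removed = strip_legacy_params(legacy_request)
print(removed)
```

Returning the list of removed fields lets you log what changed during migration, which helps when a downstream behavior shifts and you need to know which setting used to be in play.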

Prompting 4.7 in long-running projects

Co-work projects expose a different facet of the change. 4.6 would happily reconstruct context from earlier conversations. 4.7 operates on what is literally in front of it.

Treat each session like a brief. A short reference to last week’s discussion will not carry the model. Pin a project document with the current goal, decisions already made, decisions still open, and today’s specific task. Two minutes of upkeep saves a lot of drift.

Separate thinking from executing. 4.7 responds well to explicit mode shifts. Tell it: “Right now I want you to think with me. Push back, propose alternatives, and do not produce final output yet.” Then later: “Now execute. Take everything we discussed and produce the deliverable. No more questions.”

Precision beats verbosity

The temptation is to write longer prompts for everything. That misses the point. A short, precise prompt outperforms a long, vague one. What you are doing when you write more for 4.7 is closing inferential gaps, not adding padding. Where an instruction is required, replace “consider” with “you must.” Specify scope completely. Define length and format upfront. Test prompts at high effort before moving to xhigh, since the cost difference matters more than people expect.