If your trusted prompts suddenly feel sluggish, mechanical or weirdly off-target, you are not imagining it. GPT-5.5 rewards a different writing style than GPT-5, GPT-5.2 or GPT-5.4. The instructions that used to squeeze quality out of older models can now actively hold this one back. The fix is not to write more, but to write differently.

Below is a practical guide to prompting GPT-5.5, built around the patterns OpenAI recommends and the lessons developers are already learning the hard way.

Start fresh: do not port your old prompt stack

The first piece of advice is the one most people skip. Treat GPT-5.5 as a new model family, not a drop-in replacement for the previous generation. Older prompts tend to over-specify the process because earlier models needed more steering to stay on track. With GPT-5.5, that same hand-holding adds noise, narrows the search space and produces overly mechanical answers.

Begin with the smallest prompt that still preserves what your product needs to deliver. Then tune from there: reasoning effort, verbosity, tool descriptions and output format. If you migrate an old prompt line by line, you are essentially asking a faster, smarter model to wear shoes two sizes too small.

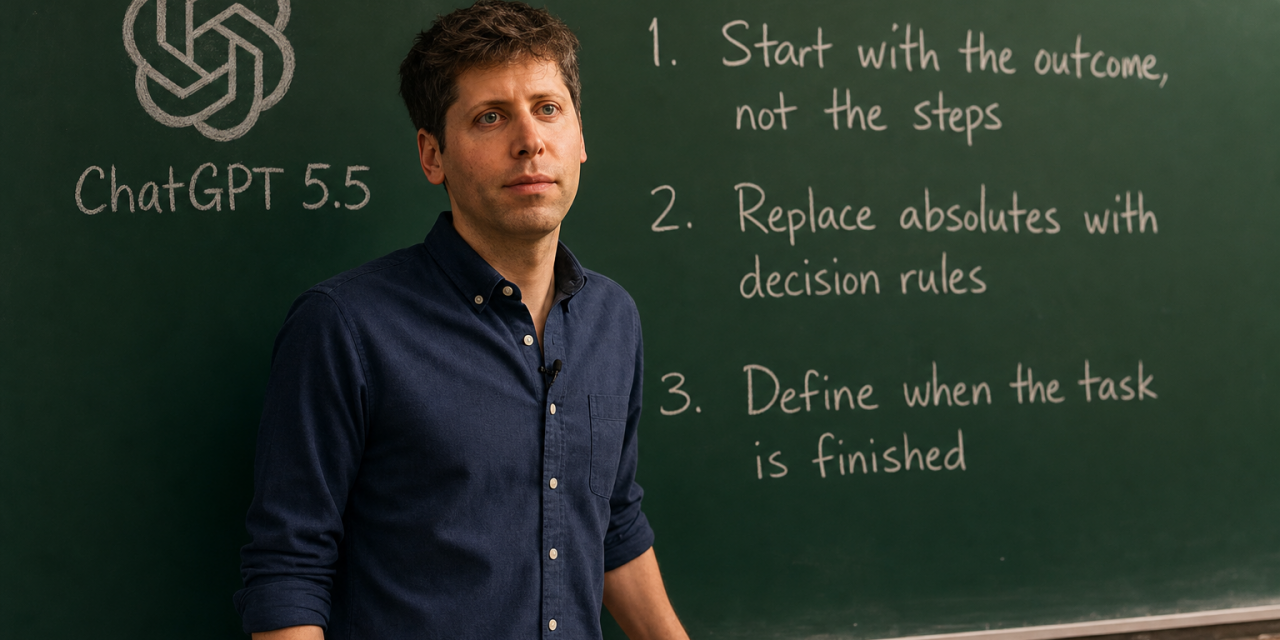

Write outcome-first prompts

The single biggest shift is moving from process-heavy instructions to outcome-first prompts. Tell the model what good looks like, what constraints matter, what evidence is available and what the final answer should contain. Then let it choose the path.

A clean outcome-first prompt looks something like this:

Resolve the customer’s issue end to end. Success means the eligibility decision is made from the available policy and account data, any allowed action is completed before responding, and the final answer includes completed_actions, customer_message and blockers. If evidence is missing, ask for the smallest missing field.

Notice what is missing. There is no numbered list of steps, no reminder to think carefully, no nudge to search first and answer second. The destination is clear, so the model picks an efficient route.
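Because the prompt names the fields the final answer should contain, you can check that contract in code. The sketch below assumes you ask the model to return a JSON object; the JSON encoding and the helper name `check_contract` are illustrative assumptions, not something the guidance prescribes.

```python
import json

# Field names come from the example prompt; returning them as JSON is an assumption.
REQUIRED_FIELDS = ("completed_actions", "customer_message", "blockers")


def check_contract(raw_reply: str) -> list[str]:
    """Return a list of problems with the reply; an empty list means it passes."""
    try:
        reply = json.loads(raw_reply)
    except json.JSONDecodeError:
        return ["reply is not valid JSON"]
    if not isinstance(reply, dict):
        return ["reply is not a JSON object"]
    # Report every missing field, in the order the prompt lists them.
    return [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in reply]
```

A check like this keeps the prompt short: the contract is verified after the fact instead of being restated as extra instructions.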

Ease up on absolutes like always, never and must

Older prompts are often packed with capitalised commands: ALWAYS check the database, NEVER ask clarifying questions, you MUST cite a source. GPT-5.5 follows these literally, which is exactly the problem. Reserve hard rules for true invariants such as safety constraints, required output fields or actions that should never happen.

For everything else, prefer decision rules. Instead of NEVER ask clarifying questions, try:

Ask a clarifying question only when missing information would materially change the answer or cause a high-risk mistake.

That single rephrasing turns a blunt prohibition into useful judgement. The model now knows when to ask and when to proceed, instead of obeying a binary rule.
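To find candidates for this kind of rewrite in an existing prompt, a small lint pass can help. This is a minimal sketch: the word list and the function name `find_absolutes` are assumptions, and a hit is only a prompt to review the line, since some absolutes are legitimate invariants.

```python
import re

# Words that often signal a hard rule; the list is illustrative, not exhaustive.
ABSOLUTES = re.compile(r"\b(always|never|must)\b", re.IGNORECASE)


def find_absolutes(prompt: str) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs for prompt lines that contain an absolute."""
    hits = []
    for number, line in enumerate(prompt.splitlines(), start=1):
        if ABSOLUTES.search(line):
            hits.append((number, line.strip()))
    return hits
```

For each hit, decide whether it is a true invariant or a decision rule in disguise.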

Define when the task is finished

One subtle failure mode of capable reasoning models is the loop that never quite ends. The model keeps digging, keeps refining, keeps adding caveats and burns tokens without improving the answer. Explicit stopping conditions solve this.

A well-shaped stopping rule might read:

Resolve the user query in the fewest useful tool loops, but do not let loop minimisation outrank correctness, fallback evidence, calculations or required citation tags. After each result, ask whether the core request can be answered now with useful evidence. If yes, answer.

You are giving the model permission to stop, plus a checkpoint that protects quality. That balance matters more than either instruction alone.
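If you orchestrate tool calls yourself, the same checkpoint can be mirrored in application code. The sketch below is an assumption about how such a loop might look, not how GPT-5.5 works internally: `steps` stands in for your tool calls, `can_answer` is a hypothetical predicate you supply, and `max_loops` is a hard cap added as a safety net.

```python
from typing import Callable, Iterable


def run_until_answerable(
    steps: Iterable[Callable[[], str]],
    can_answer: Callable[[list[str]], bool],
    max_loops: int = 5,
) -> tuple[list[str], bool]:
    """Run tool steps one at a time, stopping as soon as the evidence suffices.

    Returns the evidence gathered and whether the checkpoint was satisfied.
    """
    evidence: list[str] = []
    for loop_count, step in enumerate(steps, start=1):
        if loop_count > max_loops:
            break  # hard cap reached without a satisfying answer
        evidence.append(step())
        # Checkpoint after each result: can the core request be answered now?
        if can_answer(evidence):
            return evidence, True
    return evidence, False
```

The second return value matters: hitting the cap is not the same as being done, so the caller can fall back or escalate instead of answering with thin evidence.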

Add a short preamble for long-running tasks

In streaming or agentic applications, perceived latency matters as much as actual latency. GPT-5.5 may spend real time reasoning, planning or preparing tool calls before any visible text appears. To users, silence often reads as a crash.

Ask the model to emit a one- or two-sentence preamble before any tool calls in a multi-step task: a brief acknowledgement of the request and the first concrete step. This is the same pattern that makes Codex feel responsive even when it is doing heavy work in the background. The task itself does not change, but the experience does.
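Whether the model actually follows this instruction is easy to test in an eval. The sketch below assumes you have flattened a streamed response into a list of events shaped like `{"type": "text", "content": ...}` or `{"type": "tool_call", ...}`; that event shape is hypothetical and will differ from whatever your SDK emits.

```python
def preamble_comes_first(events: list[dict]) -> bool:
    """Return True if non-empty visible text appears before the first tool call."""
    for event in events:
        if event["type"] == "tool_call":
            return False  # a tool call arrived while the user still saw nothing
        if event["type"] == "text" and event["content"].strip():
            return True
    return False  # the stream contained no visible text at all
```

Running this over a sample of multi-step traces gives you a simple pass rate for the preamble behaviour.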

Set guardrails for creative drafting

GPT-5.5 is confident, and that confidence cuts both ways. For creative or generative work such as slides, launch copy, leadership blurbs, summaries or narrative framing, you need to tell the model which parts must be grounded in facts and which parts may be written freely.

A useful guardrail looks like this:

Use retrieved or provided facts for concrete product, customer, metric, roadmap, date and capability claims, and cite those claims. Do not invent specific names, first-party data, metrics, roadmap status or customer outcomes to make the draft sound stronger. If citable support is thin, write a useful generic draft with placeholders or clearly labelled assumptions rather than unsupported specifics.

This separates style from substance. The model can still be persuasive and well written without smuggling in fabricated details.
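A crude automated check can back up the guardrail for one class of claim: numbers. The sketch below flags figures in a draft that do not appear in any provided fact. It is a heuristic under stated assumptions (exact string matching on digits, a hypothetical helper named `unsupported_numbers`) and says nothing about invented names or outcomes.

```python
import re

# Matches integers, decimals and percentages such as 40, 3.5 or 12%.
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


def unsupported_numbers(draft: str, facts: list[str]) -> list[str]:
    """Return numbers in the draft that do not occur in any of the provided facts."""
    supported: set[str] = set()
    for fact in facts:
        supported.update(NUMBER.findall(fact))
    return [number for number in NUMBER.findall(draft) if number not in supported]
```

Placeholders such as `[X]%` pass the check, which matches the guardrail's preference for placeholders over unsupported specifics.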

Separate personality from collaboration style

For customer-facing assistants, support flows or coaching products, it helps to define two things explicitly and keep both short.

- Personality shapes how the assistant sounds: tone, warmth, formality, humour, level of polish.

- Collaboration style shapes how it works: when it asks questions, when it makes assumptions, how proactive it is, how it handles risk.

Neither should replace clear goals, success criteria or stopping conditions. Personality is for the experience. Collaboration style is for the behaviour. Goals are for the work.
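One way to keep the three concerns from bleeding into each other is to store them as separate fields and join them only when rendering the prompt. The class name, field names and labels below are illustrative assumptions, not a required format.

```python
from dataclasses import dataclass


@dataclass
class AssistantPrompt:
    goal: str           # what the work must achieve
    personality: str    # how the assistant sounds
    collaboration: str  # how the assistant works with the user

    def render(self) -> str:
        """Join the three parts under separate labels so none replaces another."""
        return "\n\n".join(
            [
                f"Goal: {self.goal}",
                f"Personality: {self.personality}",
                f"Collaboration style: {self.collaboration}",
            ]
        )
```

Keeping the fields separate also makes it easy to change the tone for a new audience without touching the goal or the working style.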

Treat reasoning effort as a last-mile knob

It is tempting to crank reasoning effort up whenever quality dips, but higher is not always better. Stronger prompts, clear output contracts and lightweight verification loops usually recover more performance than turning the dial.

Default to none or low for execution-heavy work like field extraction, structured transforms or routine triage. Reach for medium when the task involves real synthesis, ambiguity or multi-document reasoning. Save high and xhigh for genuinely hard, long-horizon work where evals show a clear benefit.
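These defaults can be written down as a small lookup so the choice is explicit rather than ad hoc. The sketch below is one possible encoding: the task-shape labels and the function name `pick_effort` are hypothetical, picking `low` rather than `none` for execution work is an arbitrary choice within the stated range, and how the value is passed to the API is deliberately left out.

```python
# Task-shape labels are hypothetical; the effort names come from the text above.
EFFORT_BY_SHAPE = {
    "execution": "low",        # field extraction, structured transforms, routine triage
    "synthesis": "medium",     # real synthesis, ambiguity, multi-document reasoning
    "long_horizon": "medium",  # raised to "high" only when evals justify it
}


def pick_effort(shape: str, evals_favour_high: bool = False) -> str:
    """Return a default reasoning effort for a task shape."""
    if shape not in EFFORT_BY_SHAPE:
        raise ValueError(f"unknown task shape: {shape}")
    if shape == "long_horizon" and evals_favour_high:
        return "high"
    return EFFORT_BY_SHAPE[shape]
```

The `evals_favour_high` flag makes the rule concrete: higher effort is something a measurement unlocks, not a default.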

A simple checklist before you ship a prompt

- Does it describe the outcome rather than the process?

- Are absolutes reserved for true invariants only?

- Is there an explicit stopping condition?

- For creative work, is it clear what must be grounded versus what can be written freely?

- For long tasks, does the model emit a short preamble?

- Is reasoning effort matched to the task shape, not to a hunch?