Both Anthropic and OpenAI shipped official prompting guides within weeks of each other. Read them side by side and something strange happens. The two sets of advice contradict each other on the surface, yet point to the same underlying conclusion: the prompts that carried you through 2025 are quietly sabotaging your output in 2026.

Claude Opus 4.7 became more literal. ChatGPT 5.5 became more autonomous. The two models moved in opposite directions, but both now punish the exact same thing: prompts written without clear thinking behind them.

What actually changed under the hood

Opus 4.6 used to fill in your blanks. You could write “improve this proposal” and get a meaningful rewrite because the model inferred what you probably meant. Opus 4.7 reads that same instruction as a contract. It will swap a few words, tighten a sentence, and stop. Not because it got dumber. Because Anthropic tuned it to stop compensating for sloppy thinking.

GPT-5.5 went the other way. OpenAI’s guide is unusually direct about it: do not carry over instructions from older prompt stacks. Legacy prompts over-specify the process because earlier models needed hand-holding. GPT-5.5 does not. All that extra detail you carefully tuned for GPT-5.2 now creates noise and drags output toward something mechanical and flat.

One developer on Reddit summarised it after reading hundreds of community complaints. The frustration tracked almost perfectly with prompt specificity. Precise prompts got better results on 4.7. Vague prompts got worse. The model did not regress. The prompts did. The same pattern shows up in GPT-5.5 threads, just inverted: people pasting in their old elaborate scaffolding and wondering why the output suddenly feels lifeless.

Two philosophies, one bottleneck

OpenAI’s framework for GPT-5.5 is outcome-first prompting. You describe what good looks like, define success criteria, set the constraints that actually matter, and then get out of the way. The model picks the path. Telling it how to walk is now counterproductive.

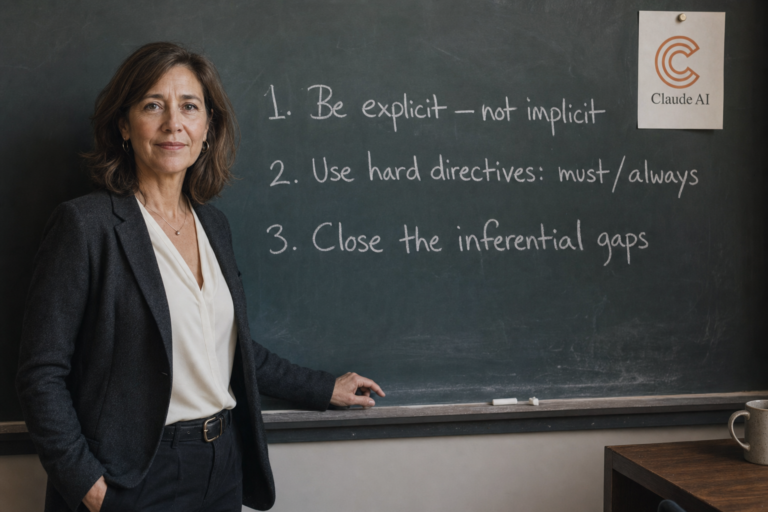

Anthropic’s framework for Opus 4.7 is the inverse. Be surgically specific about what you want, because the model will not fill in your blanks anymore. Soft language like “consider” or “you might” is now interpreted as optional. Directives like “you must” or “always” are treated as binding.

Two architectures. Two philosophies. One identical conclusion: the person writing the prompt is now the bottleneck, not the model.

How to prompt Claude Opus 4.7

The mental shift is closing inferential gaps. Anything 4.6 used to assume, 4.7 needs you to spell out.

State scope, not just the task

Replace “improve this proposal” with something the model can actually execute against: “Rewrite the proposal from scratch. Tighten the central argument, cut anything that does not support the main recommendation, and make the opening hook land in the first sentence.” The destination is now unambiguous.

Use negative constraints: they hold

Lines like “do not change the tone”, “do not add new sections”, or “do not touch adjacent files” are no longer suggestions. 4.7 takes them as hard rules. This is especially valuable in Claude Code, where the model will not refactor neighbouring functions unless you explicitly invite it to.

Ask the model to ask first

For complex work where you are not sure what scope to specify, try: “Before you start, ask me any clarifying questions you need to produce the best output. Do not assume.” 4.6 often skipped this step. 4.7 follows it reliably.

Invite the observations you want

The proactive flagging that 4.6 did by default is gone. If you want it back, request it explicitly: “After completing the task, tell me anything you noticed that I should be aware of but did not ask about.”
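The four habits above (explicit scope, negative constraints, ask-first, invited observations) can be kept together in one small template. The following is a minimal sketch, not anything taken from Anthropic's guide: the `ScopedPrompt` class and its field names are hypothetical, and it only assembles a text prompt without calling any API.

```python
from dataclasses import dataclass, field


@dataclass
class ScopedPrompt:
    """Hypothetical container for instructions aimed at a literal-minded model."""

    task: str                                           # the task with scope spelled out
    must_not: list[str] = field(default_factory=list)   # negative constraints
    ask_first: bool = False                             # request clarifying questions up front
    report_observations: bool = False                   # request unprompted findings afterwards

    def render(self) -> str:
        """Join the enabled sections into one prompt, separated by blank lines."""
        parts = [self.task.strip()]
        if self.must_not:
            rules = "\n".join(f"- Do not {rule}." for rule in self.must_not)
            parts.append("Hard constraints:\n" + rules)
        if self.ask_first:
            parts.append(
                "Before you start, ask me any clarifying questions you need "
                "to produce the best output. Do not assume."
            )
        if self.report_observations:
            parts.append(
                "After completing the task, tell me anything you noticed that "
                "I should be aware of but did not ask about."
            )
        return "\n\n".join(parts)


prompt = ScopedPrompt(
    task=(
        "Rewrite the proposal from scratch. Tighten the central argument, "
        "cut anything that does not support the main recommendation, and "
        "make the opening hook land in the first sentence."
    ),
    must_not=["change the tone", "add new sections"],
    ask_first=True,
    report_observations=True,
)
print(prompt.render())
```

Keeping each habit as a separate field makes it obvious which ones a given prompt has left out.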

Use effort levels intentionally

Anthropic recommends starting at xhigh for coding and agentic work, treating high as the minimum for most reasoning-heavy tasks, and using medium for standard generation and low for short, scoped fixes where speed matters more than depth. Pair high or xhigh with a generous output budget so the model does not wrap up early.
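As a quick reference, the recommendation can be written down as a lookup table. This is only a sketch: the task labels are hypothetical names chosen for illustration, they are not parameters of any real API, and falling back to medium for unknown task kinds is an assumption rather than something the guide states.

```python
# Hypothetical task labels mapped to the effort levels listed in the text.
RECOMMENDED_EFFORT = {
    "coding": "xhigh",
    "agentic": "xhigh",
    "reasoning": "high",
    "generation": "medium",
    "scoped_fix": "low",
}


def starting_effort(task_kind: str) -> str:
    """Return the suggested starting effort; unknown kinds fall back to 'medium' (assumption)."""
    return RECOMMENDED_EFFORT.get(task_kind, "medium")


def needs_generous_output_budget(effort: str) -> bool:
    """High and xhigh should be paired with a generous output budget."""
    return effort in {"high", "xhigh"}


for kind in ("coding", "generation", "scoped_fix"):
    effort = starting_effort(kind)
    print(kind, effort, needs_generous_output_budget(effort))
```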

How to prompt ChatGPT 5.5

The mental shift here is the opposite. Stop telling the model how to think. Tell it what done looks like.

Start fresh: do not port your old prompt stack

Treat 5.5 as a new model family. Begin with the smallest prompt that still preserves what your output needs to deliver, then tune from there. If you migrate an old prompt line by line, you are asking a faster, smarter model to wear shoes two sizes too small.

Write outcome-first

A clean 5.5 prompt names the destination, the constraints, the available evidence, and the required shape of the final answer. It does not include a numbered list of steps, a reminder to think carefully, or a nudge to search before answering. The model picks an efficient route once the destination is clear.

Ease up on absolutes

Older prompts are packed with capitalised commands: “ALWAYS check the database.” “NEVER ask clarifying questions.” 5.5 follows these literally, which is the problem. Reserve hard rules for true invariants like safety, required output fields, or actions that must never happen. For everything else, use decision rules. A rule such as “Ask a clarifying question only when missing information would materially change the answer” turns a blunt prohibition into useful judgement.
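One way to audit an old prompt before porting it is a quick heuristic scan for the patterns this section and the previous one warn about: numbered process steps, reminders to think carefully, and capitalised absolutes. The sketch below is an assumption of what such a check might look like. The pattern list and the function name `flag_legacy_patterns` are hypothetical, and a regex scan will produce false positives, so treat the counts as prompts for review rather than verdicts.

```python
import re

# Hypothetical heuristics; extend or trim these for your own prompt stack.
LEGACY_PATTERNS = {
    "numbered process steps": re.compile(r"^\s*\d+[.)]\s", re.MULTILINE),
    "reminder to think carefully": re.compile(
        r"think (carefully|step by step)", re.IGNORECASE
    ),
    "capitalised absolute": re.compile(r"\b(ALWAYS|NEVER)\b"),
}


def flag_legacy_patterns(prompt: str) -> dict[str, int]:
    """Count matches per pattern; an empty dict means nothing was flagged."""
    counts: dict[str, int] = {}
    for label, pattern in LEGACY_PATTERNS.items():
        hits = len(pattern.findall(prompt))
        if hits:
            counts[label] = hits
    return counts


old_prompt = """1. Think carefully about the request.
2. ALWAYS check the database.
3. NEVER ask clarifying questions.
"""
print(flag_legacy_patterns(old_prompt))
```

A flagged absolute is not automatically wrong. The question for each one is whether it guards a true invariant or should become a decision rule.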

Define when the task is finished

Capable reasoning models can loop indefinitely, refining and adding caveats without improving the answer. Give the model permission to stop, with a checkpoint that protects quality: “After each tool result, ask whether the core request can be answered now with useful evidence. If yes, answer.”
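The checkpoint is written as a prompt instruction, but its logic is easier to see as a loop. The sketch below models it outside the model, which is an assumption made for illustration and not something the guide prescribes: `run_until_answerable`, the `can_answer` callback, and the `max_steps` budget are all hypothetical names.

```python
from typing import Callable, Iterable


def run_until_answerable(
    tool_results: Iterable[str],
    can_answer: Callable[[list[str]], bool],
    max_steps: int = 10,
) -> tuple[list[str], str]:
    """Collect evidence until can_answer reports the core request is covered.

    Returns the evidence gathered and the reason the loop stopped.
    """
    evidence: list[str] = []
    for step, result in enumerate(tool_results, start=1):
        evidence.append(result)
        # The checkpoint: after each tool result, can we answer now?
        if can_answer(evidence):
            return evidence, "answerable"
        # Hypothetical safety net so the loop cannot run unbounded.
        if step >= max_steps:
            return evidence, "step budget exhausted"
    return evidence, "no more tool results"


results = ["schema for orders table", "row count: 1204", "sample rows", "index list"]
evidence, reason = run_until_answerable(
    results, lambda ev: any("row count" in item for item in ev)
)
print(len(evidence), reason)  # 2 answerable
```

The loop stops at the second result because the question is already answerable. The remaining two tool calls would only have added tokens.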

Treat reasoning effort as a last-mile knob

Cranking effort up whenever quality dips is the lazy fix. Stronger prompts, clear output contracts, and lightweight verification loops usually recover more performance than turning the dial. Default to low for execution-heavy work, medium for genuine synthesis, high or xhigh only when evals show a clear benefit.
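The "only when evals show a clear benefit" rule can be made concrete. The following is a minimal sketch under stated assumptions: the task labels, the `min_gain` threshold of 0.02, and the shape of `eval_scores` (effort level mapped to an eval score between 0 and 1) are all hypothetical, and nothing here calls a real API.

```python
from typing import Optional

EFFORT_LADDER = ["low", "medium", "high", "xhigh"]
# Defaults from the text: low for execution-heavy work, medium for synthesis.
DEFAULT_EFFORT = {"execution": "low", "synthesis": "medium"}


def choose_effort(
    task_kind: str,
    eval_scores: Optional[dict[str, float]] = None,
    min_gain: float = 0.02,
) -> str:
    """Start at the default and climb one level at a time while evals show a clear gain."""
    current = DEFAULT_EFFORT.get(task_kind, "low")
    if not eval_scores:
        return current  # no eval evidence, so no escalation
    idx = EFFORT_LADDER.index(current)
    while idx + 1 < len(EFFORT_LADDER):
        nxt = EFFORT_LADDER[idx + 1]
        if current not in eval_scores or nxt not in eval_scores:
            break  # cannot compare levels that were never measured
        if eval_scores[nxt] - eval_scores[current] < min_gain:
            break  # the gain is too small to justify the extra effort
        current, idx = nxt, idx + 1
    return current


print(choose_effort("synthesis"))
print(choose_effort("execution", {"low": 0.70, "medium": 0.78, "high": 0.785}))
```

In the second call the score jumps from low to medium but barely moves from medium to high, so the function settles on medium.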

The real economics of laziness

Anthropic raised rate limits across all subscriber tiers when 4.7 launched, partly because the new tokenizer uses up to 35% more tokens on the same input. The model is more expensive to run lazily and cheaper to run precisely. GPT-5.5 produces roughly 72% fewer output tokens than Opus 4.7 on equivalent coding tasks, which compounds violently in agentic loops where every narration token is a billable token.
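To see how these two percentages play out, here is a small worked calculation. Only the 35% and 72% figures come from the text above. The token counts and the loop length are hypothetical round numbers chosen for illustration, and no prices are assumed.

```python
TOKENIZER_INFLATION = 0.35  # "up to 35% more tokens": the upper bound quoted above
OUTPUT_REDUCTION = 0.72     # "roughly 72% fewer output tokens": figure quoted above


def inflated_input_tokens(old_tokens: int) -> int:
    """Worst-case token count for the same input under the new tokenizer."""
    return round(old_tokens * (1 + TOKENIZER_INFLATION))


def reduced_output_tokens(baseline_tokens: int) -> int:
    """Output tokens after applying the quoted reduction to a baseline."""
    return round(baseline_tokens * (1 - OUTPUT_REDUCTION))


steps = 50               # hypothetical agentic loop length
output_per_step = 1_000  # hypothetical baseline output tokens per step
baseline_total = steps * output_per_step

print(inflated_input_tokens(10_000))                          # 13500
print(baseline_total, reduced_output_tokens(baseline_total))  # 50000 14000
```

Over a 50-step loop the gap is 36,000 output tokens, which is why verbosity matters more in agentic work than in single-turn use.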

Boris Cherny, the engineer who built Claude Code, posted on launch day that even he needed a few days to adjust his own prompting habits. If the person who built the product needs an adjustment period, the rest of us should not expect to wing it.

The skill is the thinking, not the prompt

The models are converging in capability. The gap between good output and bad output is no longer about which one you pick. It is about the two minutes of structured thinking you do before you type anything. Define the outcome. Decide what must be invariant. Decide what the model is allowed to choose. Decide when it should stop.

That thinking system is the skill. The prompt is just what it produces. The cheapest upgrade you can give yourself in 2026 is not a better model; it is the discipline to think clearly for 120 seconds before the cursor starts moving.