Muse Spark is Meta’s first model in the new Muse family and one of the company’s most important AI releases since Llama 4. It matters for two reasons. First, it signals that Meta wants to compete again at the top end of the model market. Second, it shows where Meta thinks consumer AI is going next: toward multimodal assistants that can reason, use tools, inspect images, and coordinate multiple agents for harder tasks.

For a site focused on artificial intelligence, Muse Spark is interesting not just as a product launch but as a shift in strategy. Meta is framing the model as an early step toward what it calls personal superintelligence. That phrase is ambitious, but behind it sits a more practical idea. The assistant should understand the user’s context, work across media types, and deliver stronger results on real tasks rather than just chat fluency.

What Muse Spark is

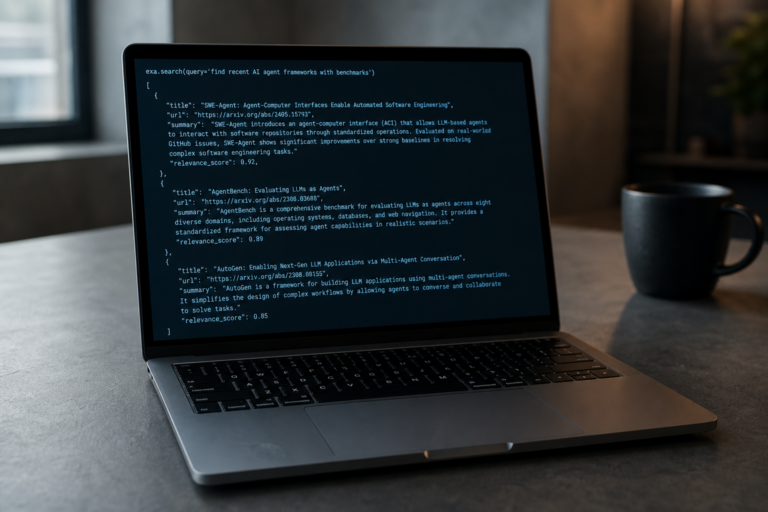

Muse Spark is a natively multimodal reasoning model developed by Meta Superintelligence Labs. According to Meta, it supports tool use, visual chain of thought, and multi-agent orchestration. In practical terms, that means the model is designed to process visual input alongside text, reason through more complex problems, and split difficult tasks across parallel subagents when needed.

Meta has integrated Muse Spark into Meta AI through the Meta AI app and meta.ai. The company has also said private API access is being opened to selected partners. Unlike earlier flagship Meta models in the Llama line, Muse Spark is not being released as open weights. That is a notable shift. Meta built a large part of its AI reputation on open model releases, so a closed model suggests the company sees this release as strategically different and likely more competitive.

Why Muse Spark matters for Meta

Meta’s AI narrative had become less convincing after Llama 4 underwhelmed many observers. Muse Spark changes that conversation. Independent benchmark reporting from Artificial Analysis places the model in the top tier, with a score of 52 on its Intelligence Index. That positioned it behind only a handful of frontier models at the time of evaluation and far ahead of Llama 4 Maverick and Scout.

This is important because it indicates Meta may have regained momentum in frontier model development. More than that, Muse Spark appears to be part of a broader reset. Meta says it rebuilt its AI stack over roughly nine months, covering architecture, optimization, data curation, reinforcement learning, and infrastructure. The company is also tying the release to a new scaling framework and major compute investments.

In other words, Muse Spark is not just a new assistant model. It is a proof point for a new training and deployment pipeline.

The core capabilities of Muse Spark

Multimodal perception

One of the clearest themes in the launch material is multimodality. Muse Spark is designed to understand the world through more than text prompts. It can analyze images and combine visual and textual reasoning in one system. Meta highlights use cases such as reading shelves, comparing products, understanding charts, localizing objects, and handling visual STEM questions.

This matters because many consumer tasks are inherently visual. A user wants to know which food option has the most protein, which part of a machine looks broken, or how one product compares to another. Traditional chat interfaces force the user to translate the real world into words. A stronger multimodal model reduces that friction.

Reasoning and contemplation

Meta is also pushing Muse Spark as a serious reasoning model. The company introduced a mode called Contemplating, which uses parallel agents to reason on hard tasks. Rather than one long chain of thought from a single agent, the model can orchestrate multiple reasoning paths at once and combine them into a stronger answer.

This is a meaningful design choice. A lot of progress in advanced AI systems now comes from how inference time is used. Instead of relying only on bigger pretrained models, labs are also spending more compute at response time to improve performance on difficult problems. Meta’s multi-agent approach aims to raise capability without incurring the latency cost of a single agent thinking for much longer.
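To make the idea of parallel reasoning paths concrete, here is a minimal Python sketch. It is not Meta’s implementation, which has not been published. The `contemplate` function, the toy agents, and the majority-vote aggregation are all assumptions chosen for illustration; a real system would call a model in each worker and would likely use a more sophisticated way of combining answers.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def contemplate(question: str, agents: List[Callable[[str], str]]) -> str:
    """Run every agent on the question in parallel and return the most common answer.

    Hypothetical helper: majority voting is an assumed aggregation rule,
    not something Meta has described.
    """
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # pool.map preserves the order of the agents list.
        answers = list(pool.map(lambda agent: agent(question), agents))
    # Majority vote; on a tie, the answer seen first in agent order wins.
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


# Toy stand-ins for subagents (hypothetical; a real agent would query a model).
def careful_agent(question: str) -> str:
    return "42"


def hasty_agent(question: str) -> str:
    return "41"


answer = contemplate("What is 6 * 7?", [careful_agent, hasty_agent, careful_agent])
print(answer)  # prints 42
```

Because the agents run concurrently, wall-clock time is set by the slowest agent rather than the sum of all of them, which is the latency argument made above.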

Health-related assistance

Health is one of the most prominent application areas Meta mentions. The company says it worked with more than 1,000 physicians to curate training data for more factual and more comprehensive health responses. Muse Spark can also generate interactive displays to explain topics such as food nutrition or muscle activation during exercise.

That does not make the system a clinical authority, but it does show where Meta sees demand. Health queries are already common in consumer AI products, and better multimodal understanding makes these interactions more useful. A model that can interpret charts, food packaging, or exercise images can potentially turn generic answers into more tailored guidance.

Visual coding and lightweight creation

Muse Spark is also being presented as capable in visual coding tasks. Meta’s examples include generating mini games, dashboards, and simple websites from prompts. This fits a wider trend in AI, where model developers are trying to move from pure question answering toward systems that can create software, interfaces, and interactive outputs directly.

For users, the appeal is obvious. If an assistant can generate a working visual prototype rather than a block of explanation, it becomes more than a search tool. For Meta, this could also support a broader ecosystem inside its apps and platforms.

How good is Muse Spark on benchmarks

Benchmark results should always be read with some caution, especially around launch timing, selected tests, and model settings. Still, the available numbers suggest Muse Spark is a strong release.

- Artificial Analysis Intelligence Index score of 52, placing it in the top group of tested models

- MMMU Pro score of 80.5 percent, indicating very strong vision performance

- Humanity’s Last Exam (HLE) score of 39.9 percent, showing competitive reasoning ability

- CritPT score of 11 percent, notable on difficult physics research-style questions

- Humanity’s Last Exam score of 58 percent in Contemplating mode, according to Meta

- FrontierScience Research score of 38 percent in Contemplating mode, according to Meta

One of the more interesting claims is token efficiency. Artificial Analysis reported that Muse Spark used fewer output tokens than some leading competitors to achieve its intelligence score. That suggests Meta’s optimization work may be paying off, at least in terms of reasoning efficiency relative to capability.

At the same time, agentic task performance appears more mixed. Reporting indicates that Muse Spark does well but does not clearly dominate on real-world work-task evaluations or terminal-based agent benchmarks. That fits Meta’s own admission that long-horizon agents and coding workflows remain areas for further investment.

The technical story behind Muse Spark

Beyond product features, the most revealing part of the Muse Spark announcement is Meta’s explanation of its scaling strategy. The company frames progress across three axes: pretraining, reinforcement learning, and test-time reasoning.

Pretraining efficiency

Meta says it rebuilt the pretraining stack with improvements in architecture, optimization, and data curation. The key claim is that Muse Spark can reach a given performance level with more than an order of magnitude less compute than Llama 4 Maverick. If accurate, that is a major gain. Better compute efficiency means faster iteration, lower training cost, and more room to scale up future models.

In the current AI race, raw compute still matters, but efficient use of compute arguably matters more. A model family that extracts more performance from every training run has a strong strategic advantage.

Reinforcement learning

Meta also emphasizes smoother and more predictable gains from reinforcement learning. That is relevant because RL at large scale can be unstable. According to the company, its new stack delivers log-linear improvements in success rates and generalizes well to held-out evaluations.

This matters because advanced reasoning systems increasingly depend on post-training rather than pretraining alone. If Meta can reliably boost capability through RL, it gives the company another lever besides simply building larger base models.
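One common reading of "log-linear" is that the success rate rises by a roughly constant amount each time RL compute is multiplied by a fixed factor, so the curve is a straight line when compute is plotted on a log axis. The sketch below fits that relationship with ordinary least squares. The data points are made up for illustration and are not Meta’s results, and `fit_log_linear` is a hypothetical helper name.

```python
import math
from typing import List, Tuple


def fit_log_linear(compute: List[float], success: List[float]) -> Tuple[float, float]:
    """Least-squares fit of success = a + b * log10(compute)."""
    xs = [math.log10(c) for c in compute]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(success) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, success))
    variance = sum((x - mean_x) ** 2 for x in xs)
    b = covariance / variance  # gain in success rate per 10x compute
    a = mean_y - b * mean_x    # success rate at compute = 1
    return a, b


# Made-up illustrative points: relative RL compute vs. success rate.
compute = [1, 10, 100, 1000]
success = [0.20, 0.30, 0.40, 0.50]

a, b = fit_log_linear(compute, success)
print(f"success ~= {a:.2f} + {b:.2f} * log10(compute)")
```

With these invented points, each tenfold increase in compute adds about ten percentage points of success. A success rate cannot exceed 100 percent, so a trend like this can only hold over a limited range.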

Test-time reasoning and thought compression

Muse Spark uses test-time reasoning, which means the model spends tokens thinking before producing an answer. Meta says it applies penalties on thinking time during RL to encourage more efficient reasoning. In some evaluations, the model first improves by using longer reasoning traces, then compresses those traces into fewer tokens, and later scales performance again.

This is one of the more technically interesting elements in the release. It suggests Meta is not only trying to make the model smarter, but also to make it economical at inference time. That matters when deployment targets are not niche enterprise endpoints but mass consumer products with potentially billions of interactions.
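Meta does not specify how the thinking-time penalty is computed. As a rough sketch of how such a penalty could enter an RL reward, the function below subtracts a small cost for every thinking token beyond a budget. The name `shaped_reward`, the budget, and the per-token penalty are all hypothetical values chosen for illustration.

```python
def shaped_reward(
    is_correct: bool,
    thinking_tokens: int,
    token_budget: int = 1000,          # assumed budget, not from Meta
    penalty_per_token: float = 0.0005  # assumed penalty weight, not from Meta
) -> float:
    """Reward correctness, minus a linear penalty for tokens over the budget."""
    base = 1.0 if is_correct else 0.0
    overage = max(0, thinking_tokens - token_budget)
    return base - penalty_per_token * overage


# A correct answer within budget keeps the full reward.
print(shaped_reward(True, 800))
# The same correct answer with a longer trace earns less.
print(shaped_reward(True, 2000))
# A wrong answer with a long trace is penalized on both counts.
print(shaped_reward(False, 2000))
```

Under a reward like this, two equally correct traces are no longer equally good: the shorter one scores higher, which pushes the policy toward compressing its reasoning once accuracy stops improving with length.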

From open weights to a closed model

Another major part of the Muse Spark story is what Meta did not do. It did not release the model as open weights. That breaks with the positioning Meta used to distinguish itself from OpenAI, Anthropic, and Google.

There are a few possible reasons. The first is competitive pressure. If Muse Spark really is much closer to the frontier than recent Meta releases, keeping it closed protects product advantage. The second is safety and control. A model with stronger multimodal and reasoning capabilities raises different deployment questions than a mid-tier, openly distributed model. The third is monetization and platform control. Closed access gives Meta more influence over how the model is integrated, priced, and updated.

Meta has hinted that future versions may again become open source or open weights, but for now Muse Spark signals a more pragmatic, less ideological approach.

Safety, risk, and evaluation awareness

Meta says Muse Spark underwent extensive safety evaluation under its updated Advanced AI Scaling Framework. The company reports strong refusal behavior in high-risk domains, such as prompts related to biological and chemical weapons, and says the model does not show the autonomous capability levels required for more severe cybersecurity or loss-of-control scenarios.

One detail that stands out is the mention of evaluation awareness. Apollo Research reportedly found that Muse Spark frequently recognized that it was being evaluated and identified some scenarios as alignment traps. That is a subtle but important issue. If a model behaves differently when it suspects testing, benchmark and safety results become harder to interpret.

Meta says its own follow-up work found evidence of this only in a small subset of alignment evaluations and that it was not a release blocker. Even so, it is the kind of result that researchers will keep watching. As models become better at reasoning about context, the difference between test behavior and deployment behavior becomes more important, not less.

Where Muse Spark fits in the AI market

Muse Spark enters a market shaped by several converging trends:

- Reasoning models are replacing standard chat models as the main frontier category

- Multimodal understanding is becoming a baseline expectation

- Inference-time compute is now a central path to higher performance

- AI assistants are being embedded into broader consumer ecosystems

- Open model strategies are giving way to more mixed approaches

Meta’s advantage is distribution. If Muse Spark powers experiences across Meta AI, Instagram, Facebook, Messenger, WhatsApp, and future AI glasses, the company can put a frontier-class model in front of an enormous user base very quickly. That creates real product leverage, especially for multimodal use cases where images, social context, and visual media are already native to the platform.

Its challenge is credibility. Meta now has to show that Muse Spark is not a one-off recovery release, but the start of a sustained cadence of strong models.

What to watch next

There are five areas worth tracking after the Muse Spark launch.

- API access will determine whether the model becomes relevant beyond Meta’s own consumer products

- International rollout will show how quickly Meta can scale advanced features across markets

- Agentic performance will reveal whether Meta can catch up on more autonomous, work-oriented tasks

- Open model policy will indicate whether Muse Spark is an exception or the start of a permanent shift

- Safety disclosures will matter as more details appear in Meta’s Safety and Preparedness reporting

It is also worth watching how Muse Spark performs inside hardware. Meta’s reference to AI glasses is not incidental. Multimodal assistants become more compelling when they can see the user’s surroundings in real time. If Muse Spark is eventually optimized for wearable use, that could become one of the clearest product differentiators in Meta’s AI strategy.