MiMo-V2-Pro LLM from Xiaomi

MiMo-V2-Pro is Xiaomi’s flagship large language model for agent workflows. It first drew wide attention when an anonymous model called Hunter Alpha appeared on OpenRouter, climbed the usage charts, and sparked speculation that it might be a new DeepSeek release. Xiaomi later confirmed that Hunter Alpha was an early internal test build of MiMo-V2-Pro. That reveal mattered because it showed Xiaomi is no longer treating AI as a side project. It is building serious model infrastructure aimed at coding, tool use, planning, and workflow execution.

If you want the short version, MiMo-V2-Pro is a proprietary, text-based reasoning model built to act less like a chatbot and more like the brain inside an agent system. Xiaomi positions it for tasks such as software engineering, browser actions, document workflows, research chains, and long multi-step jobs that need planning and tool calling. That focus is what makes it more interesting than a standard general-purpose assistant.

MiMo-V2-Pro at a glance

- Developer: Xiaomi MiMo Team

- Release: March 2026

- Model type: proprietary, reasoning-oriented LLM

- Input and output: text in, text out

- Context window: up to 1 million tokens

- Architecture: over 1T total parameters with 42B active at inference, according to Xiaomi

- Main use cases: agent systems, coding, tool use, long-context reasoning

- Positioning: strong performance in agent benchmarks with pricing below premium frontier rivals

What MiMo-V2-Pro is

MiMo-V2-Pro is the top model in Xiaomi’s MiMo V2 lineup. It is the base model for text-heavy agent tasks. That distinction matters, because Xiaomi also released MiMo-V2-Omni for text, vision, and voice. If you are looking at MiMo-V2-Pro specifically, you are looking at the model Xiaomi wants you to use when the job is complex, long-running, and action-oriented.

In plain terms, this is a model designed to do more than answer questions. It is supposed to take an instruction, break it into steps, use the right tools, keep track of the overall goal, recover from small failures, and finish the task with less manual babysitting. Xiaomi describes it as a foundation model for real-world agentic workloads, and that description fits the launch materials much better than calling it just another chat model.

Third-party tracking also supports that positioning. Artificial Analysis lists MiMo-V2-Pro as a proprietary reasoning model with a 1 million token context window. Xiaomi’s own benchmark claims place it in the upper tier of current models for coding agents, general agents, and tool use. Exact leaderboard positions can shift depending on the evaluation version and date, but the broader takeaway is consistent. MiMo-V2-Pro is being treated as a serious high-end model, not a lightweight experiment.

Who is behind MiMo-V2-Pro

The company behind MiMo-V2-Pro is Xiaomi, best known globally for smartphones, consumer electronics, and an increasingly broad software and device ecosystem. What makes this launch notable is that Xiaomi is not only integrating AI features into products. It is also building its own foundation models and trying to compete at the model layer.

The model is developed by the Xiaomi MiMo Team. Reports around the launch identified Luo Fuli as the person leading the MiMo large model effort. She was previously associated with DeepSeek, which helps explain why some observers immediately took MiMo-V2-Pro seriously. Still, the more important point is not one person. It is that Xiaomi now appears willing to fund the talent, compute, and engineering needed to build agent-grade models in-house.

Xiaomi also has an advantage that pure model startups do not always have. It already owns a large product environment where AI can be deployed. That means MiMo-V2-Pro is not being designed in a vacuum. Xiaomi can test model behavior in browsers, productivity tools, search flows, document handling, coding environments, and other software scenarios where tool use and long context matter more than flashy demo prompts.

How MiMo-V2-Pro works

Built as an agent model, not just a chat model

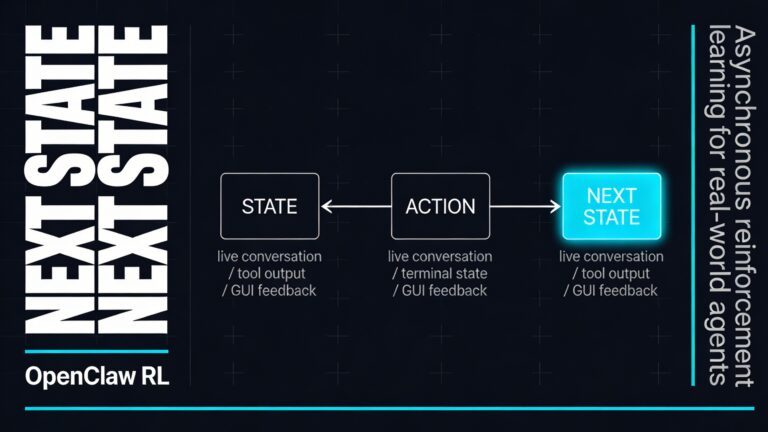

The central idea behind MiMo-V2-Pro is simple. A useful model should be able to complete tasks, not just produce polished answers. Xiaomi says the model was optimized specifically for agent scenarios, which means it was trained and tuned for environments where the model has to plan, call tools, reason across several steps, and stay coherent while working inside a larger system.

That is why Xiaomi keeps linking MiMo-V2-Pro to frameworks such as OpenClaw, OpenCode, KiloCode, Blackbox, and Cline. In those setups, the model is not sitting alone in a chat box. It is part of a software loop. It may read instructions, inspect files, write code, revise outputs, use a browser, or trigger tools. The better the base model is at deciding what to do next, the better the full agent behaves.
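
The software loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Xiaomi's implementation or the API of any framework named here: `fake_model`, the `TOOLS` table, and the action dictionary format are hypothetical stand-ins for a real model call and real tools.

```python
from typing import Callable

# Hypothetical tools; a real framework would expose file, shell, or browser tools.
def read_file(path: str) -> str:
    files = {"app.py": "print('hello')"}
    return files.get(path, "")

def word_count(text: str) -> str:
    return str(len(text.split()))

TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "word_count": word_count,
}

def fake_model(history: list[tuple[str, str]]) -> dict:
    """Stand-in for a model call: chooses the next action from what it has seen."""
    observations = [text for kind, text in history if kind == "observation"]
    if len(observations) == 0:
        return {"tool": "read_file", "arg": "app.py"}
    if len(observations) == 1:
        return {"tool": "word_count", "arg": observations[0]}
    return {"final": f"app.py has {observations[1]} word(s)"}

def run_agent(task: str, model=fake_model, max_steps: int = 5) -> str:
    """Ask the model for an action, run the tool, feed the result back, repeat."""
    history = [("task", task)]
    for _ in range(max_steps):
        action = model(history)
        if "final" in action:
            return action["final"]
        result = TOOLS[action["tool"]](action["arg"])
        history.append(("observation", result))
    return "stopped: step limit reached"

print(run_agent("count the words in app.py"))
```

The step limit is the part worth noticing: the quality of the loop depends on the model choosing a sensible next action before that budget runs out.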

A large but efficient architecture

Xiaomi says MiMo-V2-Pro has more than 1 trillion total parameters, with 42 billion active at inference. That points to a sparse design where only part of the model is used for each step, which helps keep runtime efficient even when the full model is very large. In practice, that kind of setup tries to give you the benefits of scale without the full cost of running every parameter on every token.
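
To put those two figures side by side, here is a small back-of-the-envelope calculation. It treats "more than 1 trillion" as exactly 1 trillion, which is an assumption; since the real total is larger, the result is an upper bound on the active share.

```python
TOTAL_PARAMS = 1.0e12   # assumption: lower bound for "more than 1 trillion"
ACTIVE_PARAMS = 42e9    # 42 billion active at inference, per Xiaomi

def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters used for a single token."""
    return active / total

frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"active share per token: at most {frac:.1%}")
```

Under that assumption, roughly one parameter in twenty-four is involved in producing any given token.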

The model inherits Xiaomi’s Hybrid Attention approach from the earlier MiMo-V2-Flash, with the hybrid ratio increased from 5:1 to 7:1. You do not need to know the math to understand the goal. Xiaomi is trying to support a much larger model and a much longer context window while keeping inference practical. MiMo-V2-Pro also includes a lightweight Multi-Token Prediction layer, which is meant to speed up token generation.
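
One way to picture what a higher hybrid ratio buys is to lay out a layer schedule. The sketch below assumes the ratio counts cheaper local-attention layers per full-attention layer, which the text above does not spell out, and it uses an arbitrary depth of 48 layers that is not a published figure.

```python
def layer_schedule(num_layers: int, ratio: int) -> list[str]:
    """Assumed layout: every (ratio + 1)-th layer is 'global', the rest 'local'."""
    return [
        "global" if (i + 1) % (ratio + 1) == 0 else "local"
        for i in range(num_layers)
    ]

def global_share(num_layers: int, ratio: int) -> float:
    """Fraction of layers that pay for full attention over the whole context."""
    schedule = layer_schedule(num_layers, ratio)
    return schedule.count("global") / num_layers

# 48 is an illustrative depth only
for ratio in (5, 7):
    print(f"{ratio}:1 -> {global_share(48, ratio):.3f} of layers are global")
```

If the assumption holds, moving from the lower to the higher ratio means fewer layers attend over the full context, which is where most of the long-context cost sits.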

That mix matters because agent systems punish slow or unstable models. If a model must think across long prompts, inspect files, and generate a lot of intermediate text, small efficiency gains add up quickly.

Long context and tool use

One of the headline features of MiMo-V2-Pro is its 1 million token context window. That is enough for very large documents, long conversations, codebases, multi-file instructions, or chained research material in a single request. For you, that means the model can keep more of the task in working memory instead of constantly forgetting earlier instructions or needing aggressive summarization.
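
A quick budget check shows what "a single request" means in practice. This is a rough sketch: the four-characters-per-token figure is a common rule of thumb rather than a property of MiMo-V2-Pro's tokenizer, and the output reserve is an arbitrary placeholder.

```python
CONTEXT_WINDOW = 1_000_000  # tokens, per the figure quoted above
CHARS_PER_TOKEN = 4         # assumption: rough heuristic, real tokenizers vary

def estimate_tokens(text: str) -> int:
    """Ceiling of len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)

def fits_in_context(documents, instructions, reserve_for_output=32_000):
    """Return (fits, remaining_tokens) after documents, instructions, and output reserve."""
    used = estimate_tokens(instructions) + sum(estimate_tokens(d) for d in documents)
    remaining = CONTEXT_WINDOW - reserve_for_output - used
    return remaining >= 0, remaining

# Five synthetic documents of 400,000 characters each
docs = ["a" * 400_000] * 5
print(fits_in_context(docs, "Summarize the differences between these files."))
```

For real workloads, a count from the provider's tokenizer should replace the heuristic before relying on the result.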

Long context alone is not enough, though. Many models can accept large prompts and still fail once the task becomes messy. Xiaomi says MiMo-V2-Pro was tuned for stronger tool invocation and multi-step reasoning, which is exactly what long context is supposed to support. The model is meant not only to read a lot, but also to act on what it reads.

A public demo showed this idea clearly. In one example, the system was asked to build a website that regularly updates stock listing data. The model used a crawler, generated a static page, ran the workflow, noticed mismatches, and corrected missing data. That is not full autonomy in the science fiction sense, but it is a good example of how an agent model differs from a pure text generator. It can move through a chain of steps and adjust its own path.
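
The "notice mismatches, then correct them" part of that demo follows a check-and-repair pattern that is easy to sketch. Everything below is hypothetical and illustrative: the record fields, the `crawl` output, and the `refetch_field` fallback are invented for the example and do not come from the demo itself.

```python
EXPECTED_FIELDS = ("ticker", "name", "price")

def crawl() -> list[dict]:
    """Hypothetical crawler output; the second record is missing its price."""
    return [
        {"ticker": "AAA", "name": "Alpha Corp", "price": 10.5},
        {"ticker": "BBB", "name": "Beta Ltd"},
    ]

def find_gaps(records: list[dict]) -> list[tuple[str, str]]:
    """List (ticker, field) pairs where an expected field is absent."""
    return [(r["ticker"], f) for r in records for f in EXPECTED_FIELDS if f not in r]

def refetch_field(ticker: str, field: str):
    """Hypothetical second lookup used to fill a gap; returns None if unknown."""
    fallback = {("BBB", "price"): 20.0}
    return fallback.get((ticker, field))

def build_with_repair(max_rounds: int = 3):
    """Crawl once, then alternate between validating and patching the data."""
    records = crawl()
    for _ in range(max_rounds):
        gaps = find_gaps(records)
        if not gaps:
            break
        for ticker, field in gaps:
            value = refetch_field(ticker, field)
            if value is not None:
                next(r for r in records if r["ticker"] == ticker)[field] = value
    return records, find_gaps(records)

records, remaining_gaps = build_with_repair()
print(records, remaining_gaps)
```

In an agent setting the model decides when to validate and how to repair, but the shape of the loop is the same: act, check the result, and patch what is missing before finishing.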

Trained for multi-step execution

Xiaomi says MiMo-V2-Pro went through supervised fine-tuning and reinforcement learning across complex and diverse agent scaffolds. That is an important detail. A general LLM can look smart in chat and still perform badly in real software environments because it chooses the wrong tool, forgets the task structure, or makes brittle decisions after step three or four.

By training on agent-shaped tasks, Xiaomi is trying to improve exactly those weak points. The goal is to make MiMo-V2-Pro more reliable when it has to do system design, task planning, code generation, debugging, and workflow control. This is also why Xiaomi keeps emphasizing real user experience over benchmark wins alone.

The main advantages of MiMo-V2-Pro

It is built for real workflows

The biggest advantage of MiMo-V2-Pro is its focus. Many models are marketed as universal assistants. Xiaomi is taking a narrower and more practical route. MiMo-V2-Pro is made for agent systems, which means it is optimized for environments where the model needs to finish work rather than simply sound helpful.

That makes it especially relevant for coding agents, research pipelines, browser automation, structured document tasks, and internal productivity tools.

It looks strong for coding and software engineering

Xiaomi’s internal evaluations say MiMo-V2-Pro feels close to Claude Opus 4.6 for advanced coding work, with stronger system design and task planning than many cheaper models. That kind of claim always needs real-world testing, but the overall signal is credible because early usage around Hunter Alpha reportedly skewed heavily toward coding tools. In other words, developers were not just trying it once. They were actually using it in software workflows.

Xiaomi also claims the model performs very well in agent-oriented coding benchmarks and can generate polished front-end applications in one shot inside agent frameworks. Even if you discount the marketing spin, the launch evidence suggests coding is one of MiMo-V2-Pro’s clearest strengths.

The 1 million token context is genuinely useful

A huge context window is easy to dismiss as benchmark bait, but for agent workflows it is practical. You can feed in large documentation sets, long conversations, specification files, or a big chunk of a codebase and still leave room for instructions and output. That reduces the need for prompt chopping and helps the model preserve the bigger picture.

For long-horizon tasks, that is a real advantage. It means fewer context resets, fewer summarization errors, and better continuity across steps.

It aims for a strong price-to-performance balance

Launch pricing is one of the more interesting parts of Xiaomi’s push. Xiaomi listed MiMo-V2-Pro at $1 per million input tokens and $3 per million output tokens for contexts up to 256K. For the full 1 million token range, pricing was listed at $2 per million input tokens and $6 per million output tokens. Xiaomi also framed that as far cheaper than Claude Opus 4.6 for similar categories of use.
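
The listed rates translate into per-request costs as follows. This is a sketch under two assumptions that the launch figures do not settle: that "256K" means 256,000 tokens, and that the tier is chosen by prompt length and then applied to the whole request. Actual billing rules may differ.

```python
TIER_LIMIT = 256_000  # assumption: "256K" read as 256,000 prompt tokens

# USD per million tokens, from the launch figures quoted above
PRICES = {
    "standard": {"input": 1.0, "output": 3.0},
    "long": {"input": 2.0, "output": 6.0},
}

def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, assuming the tier depends on prompt length."""
    tier = "standard" if input_tokens <= TIER_LIMIT else "long"
    price = PRICES[tier]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000

def workflow_cost(steps: list[tuple[int, int]]) -> float:
    """Total cost of an agent run given (input_tokens, output_tokens) per step."""
    return sum(request_cost(i, o) for i, o in steps)

print(f"{request_cost(100_000, 10_000):.2f}")   # short-context request
print(f"{request_cost(800_000, 20_000):.2f}")   # long-context request
print(f"{workflow_cost([(100_000, 10_000)] * 20):.2f}")  # 20-step agent run
```

Summing over steps matters because an agent resends much of its context on every call, so the per-run cost is usually many times the per-request cost.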

That matters because agent systems can burn through tokens very quickly. A model can be brilliant and still be hard to deploy if every workflow becomes too expensive. MiMo-V2-Pro’s pitch is that you can get high-end agent behavior without premium frontier model pricing.

It is already being positioned as infrastructure

Another advantage is that Xiaomi is not presenting MiMo-V2-Pro as a research demo. It is being tied to developer frameworks, APIs, document workflows, and productivity scenarios from day one. That usually leads to faster practical iteration because the model gets tested where failures are easy to spot. Not every company has the product surface area to do that.

Where MiMo-V2-Pro fits best

MiMo-V2-Pro makes the most sense when your workload is text-heavy, tool-driven, and multi-step. Good examples include code generation, debugging, long document analysis, research agents, browser-based task execution, and structured workflow orchestration.

It is less suitable if your main need is native image, video, or audio understanding. For that, Xiaomi is clearly steering people toward MiMo-V2-Omni. It is also worth remembering that MiMo-V2-Pro is proprietary, so if open weights are a requirement for your stack, this is not the model for that job.

Why MiMo-V2-Pro matters

MiMo-V2-Pro matters because it shows Xiaomi wants to compete where advanced AI is becoming most useful, not only in chat, but in systems that actually do work. The model combines large-scale architecture, long context, agent-specific training, strong coding focus, and more manageable pricing than many top-tier rivals. That is a meaningful combination.

The real test will be stability over time. Agent models succeed or fail in messy, long-running tasks, not in clean benchmark screenshots. But if MiMo-V2-Pro keeps its promise in real deployments, Xiaomi will have something more valuable than a headline model. It will have a practical engine for developer tools, productivity software, and next-generation AI agents.