What is K2 Think V2

K2 Think V2 represents a fundamental shift in how advanced AI reasoning systems are built. Developed by the Institute of Foundation Models at the Mohamed bin Zayed University of Artificial Intelligence, this model challenges the assumption that bigger always means better in artificial intelligence.

At its core, K2 Think V2 is a reasoning-focused language model with 70 billion parameters. That may sound large, but it is compact next to today’s flagship models from OpenAI and Google, which are widely believed to exceed a trillion parameters, although neither company publishes official counts. What makes K2 Think V2 remarkable is not its size but what it achieves at that size.

The model excels at mathematical reasoning, long-context consistency, and complex task execution. It’s designed specifically for problems that require step-by-step logic and extended chain-of-thought reasoning. Unlike many models that struggle to maintain coherence over long contexts, K2 Think V2 stays reliable even when processing extensive information.

K2 Think V2 is built entirely on K2-V2, a 70-billion-parameter foundation model that serves as the UAE’s first fully sovereign base model. This means the entire system, from the foundation up through the reasoning layer, is developed domestically without reliance on foreign base models or proprietary systems.

Who developed K2 Think V2

The Institute of Foundation Models at MBZUAI led the development of K2 Think V2, working in partnership with G42 and Cerebras. The project was spearheaded by Hector Liu, Head of Technology at IFM, with strategic direction from Richard Morton, Managing Director of the Institute.

MBZUAI itself is a graduate-level research university focused exclusively on artificial intelligence, based in Abu Dhabi. The Institute of Foundation Models operates as what Morton describes as an AI factory, producing sovereign models that can underpin future AI systems across government, industry, and society.

The development approach reflects a deliberate strategy by the UAE to establish complete control over its AI infrastructure. Rather than depending on models trained elsewhere, the country is building what Morton calls a token factory and agent factory system. The token factory refers to the data center layer producing tokens at scale, while the agent factory builds AI agents that solve real-world problems inside organizations.

This infrastructure includes Stargate UAE, a 1-gigawatt AI data center cluster under construction in Abu Dhabi. The facility forms part of the wider UAE-US AI Campus, a 5-gigawatt, 10-square-mile infrastructure zone being developed with partners including OpenAI, Oracle, Nvidia, SoftBank, and Cisco.

The team behind K2 Think V2 conducted rigorous experiments throughout development to ensure that expanding the model’s capabilities never compromised its reliability. This methodical approach resulted in what Liu describes as industry-leading low hallucination rates.

What makes K2 Think V2 different

K2 Think V2 distinguishes itself through three core characteristics: efficiency, openness, and sovereignty. Each of these represents a deliberate design choice that sets it apart from competing models.

Efficiency through design

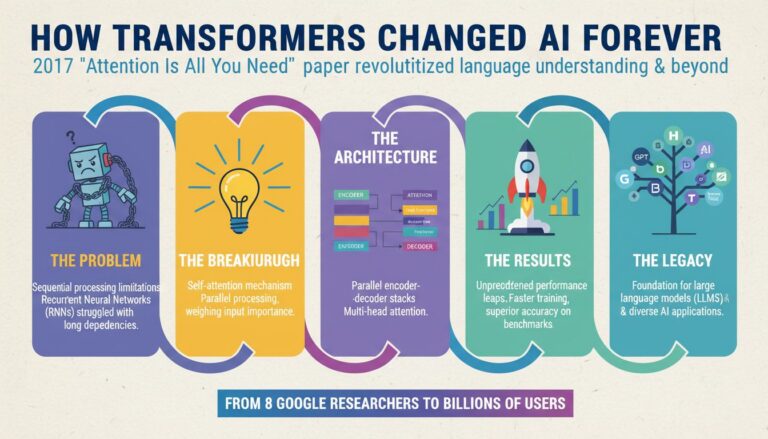

Most AI labs have pursued performance gains by scaling parameters and building ever-larger models. K2 Think V2 takes a different path: rather than chasing extreme parameter counts, it builds on the Instruct version of the K2-V2 base model and is tuned specifically for thinking and reasoning.

This approach delivers what MBZUAI calls “the world’s most parameter efficient advanced reasoning model.” At 70 billion parameters, K2 Think V2 achieves reasoning performance competitive with proprietary systems built on hundreds of billions of parameters. The model can do more with less, which translates directly into more cost-effective inference without sacrificing reasoning quality.

The efficiency gains show up clearly in benchmark results. Compared with previous iterations, K2 Think V2 posted substantial improvements on the American Invitational Mathematics Examination (AIME), the Harvard-MIT Mathematics Tournament (HMMT), the Diamond subset of the Graduate-Level Google-Proof Q&A benchmark (GPQA Diamond), and the Instruction-Following Benchmark (IFBench).

Recent results from Artificial Analysis show the K2 family gaining 4 points on the Intelligence Index, driven by a sharply lower hallucination rate (down from 89% to 52%) and stronger long-context reasoning (up from 33% to 53%). These improvements extend the core strengths of K2-V2 into more reliable, scalable intelligence.
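To put those two figures in perspective, it helps to separate the change in percentage points from the relative change. The short sketch below does only that arithmetic on the numbers quoted above; the function name `summarize_change` is made up for illustration and is not part of any K2 or Artificial Analysis tooling.

```python
def summarize_change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = after - before
    relative_change = point_change / before * 100
    return point_change, relative_change


# Figures quoted in the article, in percent.
hallucination = summarize_change(89, 52)
long_context = summarize_change(33, 53)

print(f"Hallucination rate: {hallucination[0]:+.0f} points "
      f"({hallucination[1]:+.1f}% relative)")
print(f"Long-context reasoning: {long_context[0]:+.0f} points "
      f"({long_context[1]:+.1f}% relative)")
# Hallucination rate: -37 points (-41.6% relative)
# Long-context reasoning: +20 points (+60.6% relative)
```

In other words, the reported hallucination rate fell by 37 points, a cut of roughly two fifths, while the long-context score rose by 20 points, or about 60% in relative terms.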

End-to-end openness

While many models claim to be “open,” K2 Think V2 takes openness further than most competitors. The model is open end-to-end, offering transparency through every part of the stack. Users get access to weights, training data, code, intermediate checkpoints, and evaluation tooling.

This level of transparency matters because it allows organizations to know exactly what they’re getting when they deploy, audit, and build on K2 Think V2. The model’s reasoning outputs can be traced back to concrete training choices rather than black-box effects.

Popular open models like DeepSeek, Alibaba’s Qwen, and OpenAI’s GPT-OSS are actually open-weight rather than open-source. This means only their trained parameters are publicly available. K2 Think V2 goes further by releasing the full training pipeline, data composition, and fine-tuning recipes.

Liu describes the main benefit as “independence and credibility.” Organizations aren’t forced to trust claims about how a model was trained. They can verify everything themselves.

Full sovereignty

K2 Think V2 is what IFM calls the first “fully sovereign” reasoning model. This claim rests on complete end-to-end transparency with no proprietary sources or external pipelines.

Sovereignty here means more than just domestic development. It means IFM can continue to independently evolve and refresh the model as new architectures, data, and reinforcement learning methods are developed. There’s no dependency on external providers who might change terms, restrict access, or discontinue support.

For developers and organizations, this enables practical sovereignty: the ability to deploy the model on their own infrastructure, fine-tune it with domain-specific data, and apply their own governance and compliance standards. The model enables sovereignty by design, but how it’s applied depends on the user’s needs.

This makes K2 Think V2 particularly well-suited for regulated environments like research institutions, government agencies, or enterprises in healthcare and banking. These sectors need strong reasoning performance but also require full transparency, evaluation credibility, and control over deployment and adaptation.

What K2 Think V2 can do

K2 Think V2 excels at tasks that require extended reasoning, and at mathematics in particular: it shows substantial improvements over previous iterations on challenging mathematical benchmarks.

The system handles problems that require long chain-of-thought reasoning and step-by-step logic without losing coherence over extended contexts. This capability makes it valuable for complex analytical tasks where maintaining consistency across many reasoning steps is critical.

In practical terms, K2 Think V2 can tackle competition-level mathematical problems and graduate-level questions, follow complex multi-step instructions, and maintain logical consistency across lengthy documents or conversations. The model’s reduced hallucination rate means it is less likely to confidently assert incorrect information, a crucial characteristic for applications where accuracy matters.

The training methodology focused on establishing what Liu calls a “foundation of truth by carefully curating our training datasets and strictly validating them for correctness.” This careful approach to data quality shows up in the model’s reliability.

Organizations can deploy K2 Think V2 for applications requiring transparent reasoning processes. Because the model’s outputs can be traced back to specific training choices, it’s possible to audit and verify how the system arrived at particular conclusions. This traceability is valuable in regulated industries where decisions need to be explainable.

The model’s efficiency also means it can run on less powerful hardware than competing systems with similar capabilities. This opens up deployment options that might not be feasible with larger models requiring massive computational resources.
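A rough way to see why parameter count matters for hardware is to estimate the memory needed just to hold the weights. The sketch below is a back-of-the-envelope calculation, not a measured figure: it assumes common bytes-per-parameter values for each numeric precision, ignores the KV cache, activations, and runtime overhead, and compares the 70-billion-parameter model with a hypothetical 700-billion-parameter model. Whether K2 Think V2 is distributed in any of these precisions is not stated here; the names `BYTES_PER_PARAM` and `weight_memory_gb` are illustrative.

```python
# Assumed storage cost per parameter for common precisions (bytes).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


K2_PARAMS = 70e9       # parameter count given in the article
LARGER_PARAMS = 700e9  # hypothetical model ten times larger, for comparison

for precision in BYTES_PER_PARAM:
    small = weight_memory_gb(K2_PARAMS, precision)
    large = weight_memory_gb(LARGER_PARAMS, precision)
    print(f"{precision}: 70B ~ {small:,.0f} GB, 700B ~ {large:,.0f} GB")
# fp32: 70B ~ 280 GB, 700B ~ 2,800 GB
# bf16: 70B ~ 140 GB, 700B ~ 1,400 GB
# int8: 70B ~ 70 GB, 700B ~ 700 GB
# int4: 70B ~ 35 GB, 700B ~ 350 GB
```

Under these assumptions, the weights of a 70-billion-parameter model at 16-bit precision take about 140 GB, which fits on a small number of accelerators, while a model ten times larger needs about ten times the memory before any inference overhead is counted.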

Accessing K2 Think V2

K2 Think V2 is available through multiple channels designed to make the model accessible to different types of users. The model can be accessed via a web application or downloaded directly for local deployment.

For developers and researchers, the complete model is available on Hugging Face, including weights, training data, code, intermediate checkpoints, and evaluation tooling. This full release supports the model’s commitment to end-to-end openness.

The model is released under what MBZUAI calls a “360-open” approach, which means publicizing not just the final weights but the entire development process. This includes data composition, training methods, checkpoints, and fine-tuning recipes.

Organizations can deploy K2 Think V2 on their own infrastructure, giving them complete control over data handling, security, and compliance. This deployment flexibility is particularly valuable for enterprises with strict data governance requirements or organizations operating in jurisdictions with specific regulatory constraints.

Because the model is fully open-source, there are no licensing fees for the model itself. Organizations only need to cover their own infrastructure and deployment costs. This contrasts with proprietary models that typically charge based on usage or require ongoing subscription fees.

The absence of licensing costs makes K2 Think V2 particularly attractive for research institutions, government agencies, and organizations in developing regions that might not have budgets for expensive proprietary AI systems. The model’s efficiency also means lower ongoing operational costs compared to larger systems.

The broader context

K2 Think V2 arrives at a moment when the AI industry is grappling with questions about scale, cost, and control. As deployment costs rise, many developers and enterprises are seeking alternatives to major model providers.

The model represents a bet that efficiency and transparency can compete with raw scale. By showing that a 70-billion-parameter model can be competitive on reasoning with systems roughly ten times larger, K2 Think V2 challenges assumptions about what is necessary to achieve advanced AI capabilities.

For the UAE, K2 Think V2 is part of a broader strategy to establish the country as a regional and global AI service hub. MBZUAI has already developed national language models for other countries, including Nanda for India and SHERKALA for Kazakhstan, offered as open resources.

The model also reflects growing interest in AI sovereignty among nations and organizations that want to avoid dependence on a small number of large technology companies. By providing a fully transparent, independently deployable alternative, K2 Think V2 offers a path for organizations to maintain control over their AI infrastructure.

With its combination of efficiency, openness, and sovereignty, K2 Think V2 positions itself as both a technical achievement and a philosophical statement about how advanced AI systems should be built and shared. Whether this approach gains wider adoption will depend on two things: how well the model performs in real-world deployments, and whether its advantages in transparency and control prove compelling enough to compete with the marketing power and ecosystem advantages of larger proprietary systems.