AWS Graviton ARM chips started as Amazon’s effort to cut cloud costs and improve efficiency. They now sit much closer to the center of the AI infrastructure stack. That shift became clearer when Meta signed an agreement with AWS to deploy tens of millions of Graviton cores, with room to expand. The deal does not replace GPUs. It shows something else. AI infrastructure is becoming more heterogeneous, and the CPU is regaining strategic weight.

That matters beyond Amazon and Meta. It points to a broader change in how hyperscalers design systems for inference, orchestration, and persistent AI workloads. In that environment, ARM-based server CPUs such as Graviton are no longer just an alternative to x86 for general cloud compute. They are becoming part of the control layer for AI systems that need scale, efficiency, and lower total cost of ownership.

What AWS Graviton is

AWS Graviton is Amazon’s custom CPU family for cloud workloads. Amazon designed the processors to improve price-performance and energy efficiency across Amazon EC2 and adjacent infrastructure. According to Amazon, more than 90,000 customers use Graviton. The pitch has been consistent across generations. Applications run faster, infrastructure costs fall, and energy use drops.

Amazon positions Graviton as a broad-purpose compute layer. The company highlights workloads where latency and throughput matter, including real-time gaming, analytics, and large databases. Uber is one of the cited users. It relies on Graviton to match riders with drivers in milliseconds. That example captures the original Graviton thesis well. The CPU is not marketed as a niche product. It is built for mainstream cloud workloads where scale amplifies every performance and efficiency gain.

From Graviton to Graviton5

The Graviton line has advanced in short cycles. Each generation tightened the cost and efficiency case.

- Graviton in 2018 marked Amazon’s first custom cloud processor and tested whether the energy-efficient chip design associated with smartphones could support serious cloud workloads.

- Graviton2 in 2019 delivered up to 40 percent better price-performance than comparable processors, according to Amazon.

- Graviton3 in 2021 used 60 percent less energy for the same performance as comparable Amazon EC2 instances, again according to Amazon.

- Graviton4 in 2023 raised the per-chip core count to 96 and, by Amazon’s account, offered up to three times the vCPUs on its largest instances. It targeted heavier workloads such as large databases and analytics.

- Graviton5 in 2025 doubled core count to 192 and delivered up to 25 percent better performance than Graviton4.

That trajectory shows a clear pattern. Amazon did not build Graviton as a symbolic in-house chip effort. It kept pushing the architecture into more demanding enterprise and AI-adjacent workloads. By the fifth generation, Graviton had become a high-core-count server CPU that Amazon describes as its most powerful and efficient CPU so far.
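The generation-by-generation percentages above are easier to compare once they are converted into relative cost, energy, or run time for a fixed amount of work. The following is a minimal sketch under stated assumptions: it reads "X percent better price-performance" as X percent more work per dollar and "X percent better performance" as X percent more work per unit of time, which Amazon's wording as quoted here does not spell out. The function names are illustrative and not part of any AWS tool, and every input is an "up to" figure.

```python
def relative_cost(price_performance_gain: float) -> float:
    """Cost of the same work relative to the baseline.

    Assumption: the gain means (1 + gain) times more work per dollar.
    """
    return 1.0 / (1.0 + price_performance_gain)


def relative_energy(energy_savings: float) -> float:
    """Energy for the same work relative to the baseline."""
    return 1.0 - energy_savings


def relative_runtime(performance_gain: float) -> float:
    """Run time of the same work relative to the baseline.

    Assumption: the gain means (1 + gain) times more work per unit of time.
    """
    return 1.0 / (1.0 + performance_gain)


# Figures quoted in the list above (all "up to", all per Amazon).
graviton2_cost = relative_cost(0.40)        # vs. comparable processors
graviton3_energy = relative_energy(0.60)    # vs. comparable EC2 instances
graviton5_runtime = relative_runtime(0.25)  # vs. Graviton4

print(f"Graviton2 cost for the same work:     {graviton2_cost:.1%}")
print(f"Graviton3 energy for the same work:   {graviton3_energy:.1%}")
print(f"Graviton5 run time for the same work: {graviton5_runtime:.1%}")
```

Under these assumptions, a 40 percent price-performance gain corresponds to roughly 29 percent lower cost for the same work, not 40 percent, which is worth keeping in mind when comparing vendor claims.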

Why Graviton matters for AI

Most AI infrastructure coverage still centers on accelerators. That emphasis is justified for model training and large parts of inference. Nvidia continues to dominate training. AMD is gaining relevance at scale. Hyperscalers are building their own accelerators. Yet that focus can obscure a structural point. AI systems do not run on accelerators alone.

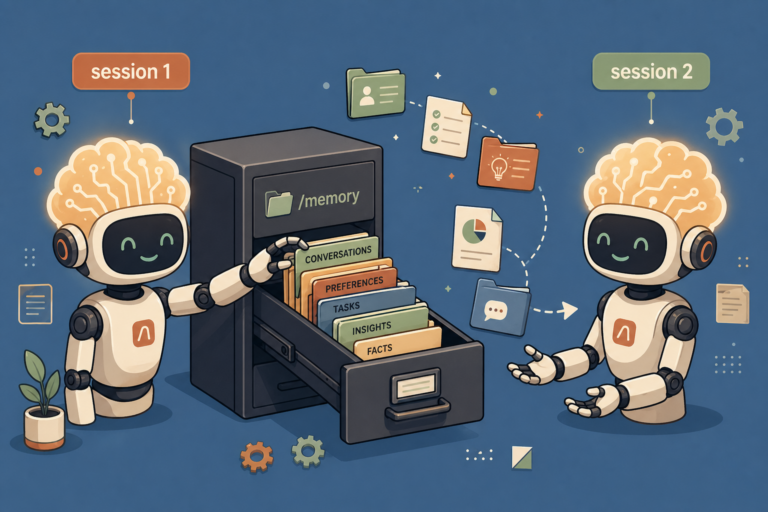

As AI workloads move from one-shot prompts to more persistent, stateful, multi-step tasks, the CPU becomes more important. Network World described the CPU role in this setting as the control plane. It handles orchestration, memory management, scheduling, and coordination across accelerators. In agentic systems, where workloads are less linear and more stateful, that role expands.

This is the context in which AWS and Meta frame Graviton5. AWS says the chip can support billions of interactions and coordinate complex, multi-stage agentic tasks. That language reflects a shift in AI system design. The question is no longer only how fast a GPU can process tokens. It is also how efficiently the broader system can manage long-running workflows, tool use, task decomposition, retrieval, memory, and service coordination.

That is where general-purpose CPUs regain strategic significance. Not every part of an AI workload requires an accelerator. But many parts must run continuously, reliably, and at low cost. A CPU layer with strong price-performance and better energy efficiency can therefore influence the economics of AI inference as much as raw accelerator performance does.

The Meta agreement signals a new role for Graviton

Meta’s agreement with AWS is the clearest recent indicator that Graviton has moved into a larger AI role. The deployment starts with tens of millions of Graviton5 cores. One chip contains 192 cores. The scale alone makes Meta one of the largest Graviton customers in the world, according to Amazon.
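The core and chip figures can be related with simple division. Because the agreement is described only as "tens of millions" of cores, the totals below (10 million and 50 million) are hypothetical inputs chosen for illustration, not figures from Amazon or Meta. Only the 192 cores per chip comes from the text.

```python
CORES_PER_CHIP = 192  # Graviton5 core count stated above


def chips_needed(total_cores: int, cores_per_chip: int = CORES_PER_CHIP) -> int:
    """Smallest number of whole chips that provides at least total_cores."""
    if total_cores < 0 or cores_per_chip <= 0:
        raise ValueError("total_cores must be >= 0 and cores_per_chip > 0")
    # Integer ceiling division avoids floating-point rounding.
    return (total_cores + cores_per_chip - 1) // cores_per_chip


# Hypothetical totals spanning the "tens of millions" range.
for cores in (10_000_000, 50_000_000):
    print(f"{cores:>12,} cores -> {chips_needed(cores):>8,} chips")
```

At 192 cores per chip, every 10 million cores corresponds to roughly 52,000 chips, which gives a sense of the physical scale behind the headline number.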

The strategic meaning lies in how Meta is using hardware overall. Meta is not backing one chip architecture. It is assembling a multi-architecture stack across AWS, Nvidia, AMD, Arm, and its own silicon. It recently announced four new generations of its MTIA training and inference accelerator. It also signed a major deal with AMD for 6 gigawatts of CPUs and AI accelerators and entered a multi-year partnership with Nvidia for millions of Blackwell and Rubin GPUs, alongside Nvidia Spectrum-X Ethernet switches.

In that broader pattern, Graviton5 acts as a complement, not a substitute. Analysts quoted by Network World make that point directly. Meta is not moving off GPUs. It is building around them. The company appears to be optimizing for heterogeneity, supply resilience, and workload-specific placement. That is a practical response to the current market. Different workloads require different compute profiles. Supply constraints remain real. No single chip architecture serves every layer efficiently.

Why ARM server CPUs are gaining ground

Graviton is part of a wider move toward ARM-based custom CPUs in AI infrastructure. Counterpoint Research projects that Arm-based CPUs will account for at least 90 percent of host CPU deployments in custom AI ASIC servers by 2029, up from around 25 percent in 2025. The projection does not say x86 disappears. It says the center of gravity inside custom AI server designs shifts sharply toward Arm.
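The two endpoints of that projection imply a steep adoption curve. The sketch below computes the average pace needed to move from roughly 25 percent in 2025 to 90 percent in 2029, both as percentage points per year and as a compound annual rate. It assumes a smooth path between the endpoints, which the projection as quoted here does not specify, and it treats "around 25" and "at least 90" as exact values.

```python
START_YEAR, START_SHARE = 2025, 0.25  # "around 25 percent"
END_YEAR, END_SHARE = 2029, 0.90      # "at least 90 percent"

years = END_YEAR - START_YEAR

# Linear view: percentage points of share gained per year.
points_per_year = (END_SHARE - START_SHARE) / years * 100

# Compound view: constant yearly growth rate of the share itself.
compound_rate = (END_SHARE / START_SHARE) ** (1 / years) - 1

print(f"Span: {years} years")
print(f"Average gain: {points_per_year:.2f} percentage points per year")
print(f"Compound annual growth in share: {compound_rate:.1%}")
```

Either way of reading it, the share would have to grow by about 16 percentage points a year, or by close to 38 percent a year on a compound basis, to reach the projected level.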

The reasons are mostly economic and architectural. ARM-based custom CPUs are seen as more cost- and power-efficient for data-intensive AI workloads. AI server software stacks also have less dependence on long-standing x86 backward compatibility than traditional enterprise environments do. That weakens one of x86’s historical advantages.

AWS is not alone here. Google uses its Axion Arm CPU with its next-generation TPU infrastructure. Microsoft pairs Azure Cobalt with Maia accelerators. Meta is also set to deploy Arm CPUs in parallel with its other infrastructure bets. The pattern is broad. Hyperscalers are designing vertically integrated systems where the host CPU is no longer an interchangeable commodity.

This does not create a simple ARM-versus-x86 story. Many AI servers will continue to use off-the-shelf Xeon and EPYC processors. Traditional suppliers remain relevant, and x86 will still command a significant share. The real shift is from general-purpose standardization toward semi-customized infrastructure. In that shift, Arm gives hyperscalers more room to optimize around power, cost, and workload behavior.

What makes Graviton strategically different

Amazon’s advantage with Graviton lies in integration. The CPU is not a standalone product sold into the open market in the same way as a typical server processor. It sits inside AWS and works alongside Amazon’s broader infrastructure choices. That includes EC2 design, smart routing, custom AI chips, and the AWS Nitro System.

Network World notes that Graviton5 is built on Nitro to support performance, availability, and security. That matters because modern AI infrastructure depends less on isolated component specifications and more on system-level behavior. A CPU that fits tightly into the cloud provider’s virtualization, networking, and security stack can deliver value that exceeds a narrow benchmark comparison.

This also helps explain why Graviton has scaled steadily from traditional cloud applications into AI-supporting roles. Amazon controls the environment in which the chip runs. It can tune services, instances, and system software around it. That gives Graviton a practical edge in cloud deployment, even if external comparisons often focus on instruction sets or raw processor lineage.

The economics of inference favor efficient CPUs

As AI inference becomes persistent, the economic center shifts. One analyst cited by Network World argues that the market moves away from peak FLOPS and toward sustained efficiency and total cost of ownership. That is a useful framework for understanding Graviton’s relevance.

Persistent inference means the system stays active over long periods, often serving continuous sessions, background tasks, or agentic workflows. In that setting, the non-accelerator share of the workload becomes expensive if it runs on inefficient infrastructure. Every scheduling task, memory operation, request routing decision, and service-level control function compounds at scale.

For a company operating at hyperscale, even small per-workload gains add up quickly. That is the core business logic behind Graviton. Amazon built it to improve price-performance in cloud computing. AI now magnifies the same value proposition. If a CPU can run supporting layers of inference more cheaply and with less energy, it changes margin structure and capacity planning.

Why developers and infrastructure teams should pay attention

The rise of AWS Graviton ARM chips is not only a hyperscaler story. It changes how developers and enterprise infrastructure teams think about deployment.

First, workload placement becomes more granular. The relevant question is less which cloud wins in general and more where each part of an application runs most efficiently. Stateless and stateful tasks may land on different compute layers. Prefill and decode may map differently. Orchestration may sit on CPUs while model execution remains on accelerators.
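One way to picture this kind of granular placement is a small routing table that maps each stage of a request to a compute pool. The sketch below is purely illustrative: the stage names, the pool labels (`cpu-arm64`, `accelerator-prefill`, `accelerator-decode`), and the mapping itself are assumptions made for the example, not a description of how AWS, Meta, or any scheduler actually assigns work.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    ORCHESTRATION = "orchestration"
    RETRIEVAL = "retrieval"
    PREFILL = "prefill"
    DECODE = "decode"


# Hypothetical placement policy: control-plane work on CPUs,
# model execution on accelerators, with prefill and decode kept separate.
PLACEMENT = {
    Stage.ORCHESTRATION: "cpu-arm64",
    Stage.RETRIEVAL: "cpu-arm64",
    Stage.PREFILL: "accelerator-prefill",
    Stage.DECODE: "accelerator-decode",
}


@dataclass(frozen=True)
class Task:
    name: str
    stage: Stage


def place(task: Task) -> str:
    """Return the pool label for a task according to the policy above."""
    return PLACEMENT[task.stage]


def plan(tasks: list[Task]) -> dict[str, list[str]]:
    """Group task names by the pool they would be placed on."""
    pools: dict[str, list[str]] = {}
    for task in tasks:
        pools.setdefault(place(task), []).append(task.name)
    return pools


request = [
    Task("split-user-goal", Stage.ORCHESTRATION),
    Task("fetch-documents", Stage.RETRIEVAL),
    Task("encode-prompt", Stage.PREFILL),
    Task("generate-tokens", Stage.DECODE),
]

for pool, names in plan(request).items():
    print(f"{pool}: {', '.join(names)}")
```

In practice a policy like this would be driven by measured cost and latency rather than a fixed table, but the shape of the decision is the same: each part of the application is placed separately.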

Second, software stacks need to accommodate heterogeneity. The old assumption that one processor family underpins the whole server estate weakens in AI environments. Enterprises that want to control cost and latency will need better observability into workload behavior and better portability across architectures.
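One small, concrete piece of portability is knowing which architecture a process is actually running on, for example to select the right native binary or to tag metrics by architecture. The following is a minimal sketch using Python's standard `platform.machine()`. The alias table and the `normalize_arch` and `current_arch` helpers are illustrative assumptions; operating systems report different strings for the same architecture, and real fleets may see values not listed here.

```python
import platform

# Common spellings reported by different operating systems.
# Linux on Arm servers typically reports "aarch64"; macOS reports "arm64".
_ARCH_ALIASES = {
    "aarch64": "arm64",
    "arm64": "arm64",
    "x86_64": "x86_64",
    "amd64": "x86_64",
}


def normalize_arch(machine: str) -> str:
    """Map a raw machine string to 'arm64', 'x86_64', or 'unknown'."""
    return _ARCH_ALIASES.get(machine.strip().lower(), "unknown")


def current_arch() -> str:
    """Normalized architecture of the running interpreter's host."""
    return normalize_arch(platform.machine())


print(f"raw: {platform.machine()!r}, normalized: {current_arch()}")
```

Tagging logs and metrics with a normalized value like this is one inexpensive way to get the per-architecture observability that mixed fleets require.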

Third, procurement logic changes. If cloud providers and large platform companies increasingly rely on custom ARM CPUs, infrastructure choices become more dependent on provider-specific integration. That can improve efficiency. It can also deepen ecosystem lock-in. For buyers, the upside is performance per dollar. The tradeoff is that architecture decisions become more entangled with platform choices.

What Graviton does not mean

It is useful to define the limits of the Graviton story. Graviton does not mean CPUs are replacing GPUs for frontier model training. It does not mean x86 is irrelevant. It does not mean every enterprise should rewrite its stack around ARM tomorrow.

What it does mean is narrower and more important. The host CPU inside AI systems is becoming strategic again. Efficiency at the orchestration layer now matters more because AI workloads are becoming more continuous, more stateful, and more operationally complex. In that environment, ARM-based custom CPUs have a stronger case than they did in earlier server transitions.

Graviton also shows that custom silicon strategy is no longer confined to accelerators. The CPU layer itself has become an area of competitive differentiation for hyperscalers. Amazon, Google, and Microsoft all treat it that way. Meta’s latest deal suggests major AI platform builders do too.

The larger infrastructure shift

AWS Graviton ARM chips matter because they sit at the intersection of two shifts. Cloud providers want tighter control over infrastructure economics. AI systems require more heterogeneous compute. ARM-based custom CPUs fit both trends.

Amazon built Graviton to lower cost and power use across the cloud. The AI boom has expanded the chip’s relevance. Meta’s deployment of tens of millions of Graviton cores shows that the CPU has moved from background infrastructure to a deliberate part of AI system architecture. Counterpoint’s forecast suggests this is not an isolated case but part of a broader transition toward ARM inside custom AI servers.

The result is not a winner-takes-all market. It is a layered stack. GPUs remain central for training and heavy inference. Custom accelerators gain ground. Efficient CPUs such as Graviton handle orchestration, control, memory-intensive support tasks, and persistent service logic. That division of labor is likely to define AI infrastructure more than any simple architecture battle.

The bottom line

Graviton started as Amazon’s cloud efficiency play. It is turning into something larger. The current AI cycle rewards not only raw compute but efficient system design across every layer. In that equation, Amazon’s ARM CPUs have become strategically relevant. The important shift is not that CPUs suddenly matter again. It is that AI has made the right CPU matter far more than many assumed.