Understanding artificial general intelligence

Artificial general intelligence represents one of the most ambitious goals in computer science and cognitive research. Unlike the narrow AI systems we interact with daily, from recommendation algorithms to voice assistants, AGI refers to machines that possess the ability to understand, learn, and apply knowledge across a wide range of tasks at a level comparable to human intelligence.

The concept has captivated researchers, philosophers, and technologists for decades. While Alan Turing pondered machine intelligence in 1950, and the term “artificial general intelligence” emerged in discussions during the 1990s, we’re now witnessing unprecedented progress in AI capabilities that brings the question of AGI from theoretical speculation into practical consideration.

But what exactly constitutes AGI? How close are we to achieving it? And what fundamental components must come together before we can claim to have built a truly general intelligence? These questions define one of the most fascinating frontiers in modern science.

The essential components of general intelligence

Creating AGI requires more than simply scaling up existing AI systems. Researchers have identified several critical cognitive abilities that any general intelligence must possess, drawing from both human psychology and computational theory.

Fluid reasoning and adaptability

At the core of general intelligence lies fluid reasoning, the ability to solve novel problems without relying on previously learned knowledge. The Cattell-Horn-Carroll theory of cognitive abilities, which has guided psychometric research for decades, identifies fluid reasoning as a fundamental component of human intelligence. An AGI system must demonstrate this same flexibility, adapting to entirely new situations without extensive retraining.

Current AI models excel at pattern recognition within their training domains but struggle when confronted with genuinely novel scenarios. The gap between narrow task performance and true adaptability remains one of the most significant challenges in AGI development.

Comprehensive knowledge integration

General intelligence requires the ability to acquire, store, and flexibly apply knowledge across diverse domains. This encompasses not just factual information but also procedural knowledge, spatial reasoning, and understanding of abstract concepts. Human intelligence seamlessly integrates information from multiple sources and modalities—visual, auditory, linguistic, and experiential.

Modern large language models demonstrate impressive knowledge breadth, yet they lack the deep understanding and flexible application that characterizes human cognition. They can retrieve and recombine information but often fail at tasks requiring genuine comprehension or reasoning about causality.

Long-term planning and goal-directed behavior

The capacity for long-horizon planning distinguishes general intelligence from reactive systems. Humans routinely set complex goals, break them into subgoals, and execute multi-step plans while adapting to changing circumstances. This requires maintaining coherent objectives over extended periods and reasoning about future states.

Current AI systems struggle with planning beyond relatively short time horizons. While reinforcement learning has produced impressive results in constrained environments like games, scaling these approaches to open-ended, real-world planning remains an unsolved challenge.

Perceptual and sensorimotor intelligence

Intelligence isn’t purely abstract—it’s grounded in perception and physical interaction with the world. Visual processing, spatial reasoning, and the ability to manipulate objects represent crucial components of general intelligence. The development of robotics and embodied AI addresses this dimension, though significant gaps remain between human and machine capabilities in physical reasoning.

Social and emotional intelligence

Human intelligence is fundamentally social. We understand others’ mental states, communicate through complex language, and navigate intricate social dynamics. Theory of mind, the ability to attribute mental states to others, represents a sophisticated cognitive capability that current AI systems lack. While models can simulate conversational behavior, they don’t possess genuine understanding of human psychology or emotions.

Current state of progress toward AGI

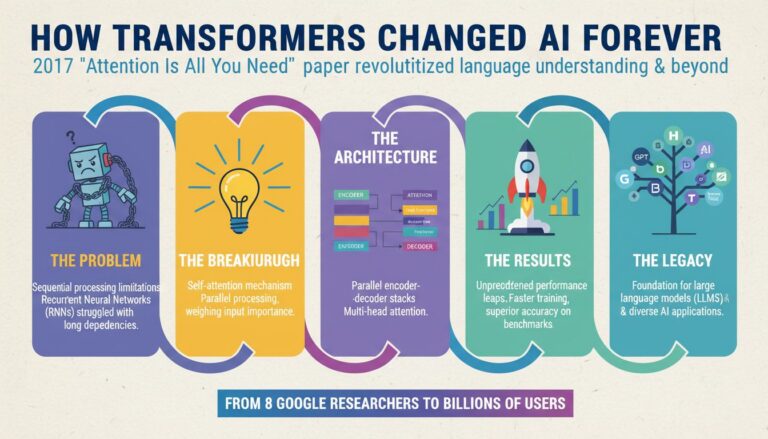

The AI field has witnessed remarkable advances in recent years, particularly with the emergence of large-scale neural networks and transformer architectures. Models like GPT-5 and Claude demonstrate capabilities that would have seemed impossible just a decade ago, performing well on tasks ranging from creative writing to mathematical reasoning.

However, these systems remain fundamentally narrow in important ways. They excel at pattern matching and statistical inference within their training distributions but lack several key attributes of general intelligence. Current AI systems don’t truly understand causality, struggle with systematic generalization, and cannot learn continuously from experience in the way humans do.

Benchmark performance tells a mixed story. On standardized tests of knowledge and reasoning, frontier AI models now approach or exceed human performance in many domains. Yet they fail spectacularly on tasks that humans find trivial, revealing fundamental differences in how they process information. The gap between impressive benchmark scores and genuine understanding remains substantial.

Why we haven’t achieved AGI yet

Several fundamental obstacles stand between current AI capabilities and true general intelligence. Understanding these challenges helps clarify what remains to be solved.

The learning efficiency problem

Human children learn remarkably efficiently from limited data, quickly grasping new concepts and generalizing them to novel situations. Current AI systems require massive datasets and enormous computational resources to achieve competence in narrow domains. This inefficiency suggests we’re missing key insights about how learning should work.

The human brain operates on roughly 20 watts of power, while training large AI models consumes megawatts. This dramatic difference in efficiency points to fundamental architectural differences that we haven’t yet bridged.
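To give a sense of scale, the gap can be worked out with simple arithmetic. The sketch below uses the 20-watt figure from the text and assumes a training draw of exactly 1 megawatt, the low end of "megawatts". That second number is an illustrative assumption, not a measurement of any particular system.

```python
# Illustrative arithmetic only: the 1 MW figure is an assumption, not a measurement.
BRAIN_POWER_WATTS = 20.0            # "roughly 20 watts", from the text
TRAINING_POWER_WATTS = 1_000_000.0  # assumed: 1 megawatt, the low end of "megawatts"

# How many times more power the assumed training run draws than a brain.
ratio = TRAINING_POWER_WATTS / BRAIN_POWER_WATTS
print(f"Training draws about {ratio:,.0f} times the brain's power")
```

Even under this conservative assumption the difference is more than four orders of magnitude. The comparison is between a brain in operation and a model in training, so it indicates a rough gap rather than a like-for-like measurement.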

The symbol grounding problem

Language models manipulate symbols (words and tokens) but lack grounded understanding of what those symbols represent in the physical world. This disconnect between symbolic processing and embodied experience may limit their ability to achieve genuine comprehension. Humans learn language in the context of physical interaction and sensory experience, creating rich semantic representations that current AI systems lack.

Catastrophic forgetting and continual learning

Neural networks suffer from catastrophic forgetting: when learning new information, they tend to overwrite previously learned knowledge. Humans, by contrast, continuously integrate new experiences while retaining old knowledge. Solving continual learning remains a critical challenge for developing systems that can accumulate knowledge over time as humans do.
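The overwriting effect can be seen in a toy setting. The sketch below is a deliberately minimal illustration, not a model of any real network: a single shared weight is trained by gradient descent on one task and then on a second, conflicting task. The task definitions, learning rate, and function names are all assumptions chosen for the example.

```python
def mse(w, data):
    """Mean squared error of the one-weight model y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train(w, data, lr=0.05, epochs=200):
    """Plain full-batch gradient descent on the squared error."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


# Two hypothetical tasks that compete for the same weight.
xs = [-2.0, -1.0, 1.0, 2.0]
task_a = [(x, 2.0 * x) for x in xs]    # task A: y = 2x
task_b = [(x, -3.0 * x) for x in xs]   # task B: y = -3x

w = train(0.0, task_a)
loss_a_before = mse(w, task_a)         # near zero: task A has been learned

w = train(w, task_b)                   # sequential training, no replay of task A
loss_a_after = mse(w, task_a)          # large: task A has been overwritten

print(f"Task A loss before learning B: {loss_a_before:.6f}")
print(f"Task A loss after learning B:  {loss_a_after:.2f}")
```

Because both tasks share the only parameter, fitting the second one necessarily destroys the first. Real networks have far more capacity, but the same interference appears whenever new gradients move weights that old knowledge depends on.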

Reasoning and common sense

Despite impressive performance on many tasks, AI systems lack robust common sense reasoning. They struggle with basic physical intuitions, social understanding, and causal reasoning that humans acquire naturally. This brittleness reveals that current approaches, while powerful, don’t capture essential aspects of intelligence.

Will we ever achieve AGI?

The question of whether AGI is achievable, and when, generates intense debate among researchers. Perspectives range from confident predictions of AGI within decades to skepticism about whether current approaches can ever yield genuine general intelligence.

The optimistic view

Many researchers believe AGI is achievable through continued scaling and refinement of current approaches. They point to the rapid progress in recent years and argue that as models grow larger and training methods improve, emergent capabilities will eventually yield general intelligence. Some predict AGI could arrive within the next 10-20 years.

This perspective emphasizes that we may not need fundamentally new insights, just better engineering, more compute, and clever combinations of existing techniques. The success of large language models in acquiring broad capabilities through scale lends some support to this view.

The skeptical perspective

Critics argue that current AI systems, no matter how large or sophisticated, lack essential ingredients of intelligence. They contend that statistical pattern matching, however impressive, cannot yield genuine understanding or reasoning. This view suggests we need fundamental breakthroughs in our understanding of intelligence before AGI becomes possible.

Skeptics point to persistent failures in reasoning, the brittleness of current systems, and the lack of genuine understanding as evidence that we’re not on a direct path to AGI. They argue that scaling alone won’t solve these fundamental limitations.

The middle ground

A balanced perspective acknowledges both the remarkable progress and the significant remaining challenges. AGI may be achievable, but likely requires innovations beyond simply scaling current approaches. We may need new architectures that better capture causal reasoning, more efficient learning algorithms, and ways to ground symbolic processing in embodied experience.

The timeline remains highly uncertain. While rapid progress continues, predicting when, or if, we’ll achieve AGI involves substantial speculation. The path forward likely involves both incremental improvements and potential paradigm shifts in how we approach artificial intelligence.

What’s needed to reach AGI

Achieving artificial general intelligence will likely require advances across multiple fronts, combining insights from neuroscience, cognitive science, computer science, and philosophy.

Architectural innovations

We may need new neural network architectures that better capture the structure of intelligence. This could include systems that explicitly represent and reason about causality, architectures that support continual learning without catastrophic forgetting, and designs that integrate symbolic reasoning with neural processing.

Embodied and multimodal learning

Grounding intelligence in physical experience may prove essential. Robots that learn through interaction with the world, integrating vision, touch, and motor control, could develop richer representations than purely language-based systems. Multimodal learning that combines different sensory modalities may be crucial for achieving genuine understanding.

Efficient learning algorithms

Developing learning methods that approach human efficiency remains critical. This includes few-shot learning, meta-learning, and approaches that can extract maximum insight from limited data. Understanding how humans learn so efficiently could inspire new algorithmic approaches.
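As a rough illustration of what few-shot learning means in practice, the sketch below classifies a new point from only three labeled examples per class by comparing it with each class average (a nearest-prototype rule). The labels and 2D feature values are made up for the example. Real few-shot systems typically learn their feature representations instead of using hand-picked coordinates.

```python
import math


def centroid(points):
    """Average a list of equal-length feature tuples into one prototype."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def classify(query, support):
    """Return the label whose prototype is closest to the query point."""
    prototypes = {label: centroid(pts) for label, pts in support.items()}
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Hypothetical support set: three examples per class, hand-made features.
support = {
    "cat": [(1.0, 1.0), (1.2, 0.8), (0.9, 1.1)],
    "dog": [(4.0, 4.0), (4.2, 3.9), (3.8, 4.1)],
}

prediction = classify((1.1, 0.9), support)
print(prediction)
```

The rule needs no gradient updates at all, which is why it works with so few examples. The hard part, and the open research problem, is obtaining features in which such simple comparisons are meaningful.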

Integration of multiple cognitive systems

Human intelligence involves multiple interacting systems: perception, memory, reasoning, planning, and motor control. AGI may require similar integration, combining specialized modules into a coherent whole rather than relying on a single monolithic system.

Theoretical understanding

We may need deeper theoretical insights into what intelligence actually is and how it emerges from computational processes. Better understanding of consciousness, reasoning, and learning could guide the development of more capable systems.

The path forward

Artificial general intelligence represents both an extraordinary scientific challenge and a potentially transformative technology. While we’ve made remarkable progress in narrow AI capabilities, the gap between current systems and true general intelligence remains substantial.

The components needed for AGI (fluid reasoning, comprehensive knowledge, long-term planning, perceptual intelligence, and social understanding) each present significant technical challenges. Current approaches have limitations that may require fundamental innovations to overcome.

Whether we achieve AGI in the coming decades or whether it remains a more distant goal, the pursuit itself drives valuable research and technological development. The question isn’t just whether AGI is possible, but how we can responsibly develop increasingly capable AI systems that benefit humanity.

As we continue pushing the boundaries of artificial intelligence, maintaining realistic assessments of both progress and limitations will be crucial. The journey toward AGI, regardless of its ultimate destination, promises to deepen our understanding of intelligence itself, both artificial and human.