Alpamayo 1.5 is not just another driving model

Alpamayo 1.5 is NVIDIA’s latest open vision-language-action (VLA) model for autonomous driving. Its role is easy to describe but hard to execute well: it takes in rich driving context, reasons about what is happening, and outputs both a planned trajectory and a human-readable explanation of why that decision makes sense.

That combination matters because autonomous driving still struggles most in unusual, ambiguous, and low-frequency situations. A child stepping off a curb while another car cuts across a lane. Temporary road markings near construction. An emergency vehicle approaching from an awkward angle. These are the moments where a system needs more than object detection and motion prediction. It needs something closer to structured driving judgment.

Alpamayo 1.5 is designed for that problem. It is part of a broader open platform that also includes the AlpaSim simulation framework and large-scale physical AI datasets. Together, these components aim to give researchers and developers a full workflow for building, adapting, and evaluating reasoning-based autonomous vehicle systems in closed-loop settings.

What Alpamayo 1.5 actually is

At the model level, Alpamayo 1.5 is a reasoning VLA model of roughly 10B parameters: an 8.2B-parameter Cosmos Reason backbone combined with a 2.3B-parameter action expert. In practice, that means it is built to connect perception and language-grounded reasoning with the action side of driving, especially trajectory planning.

The model processes several forms of input, including:

- Multi-camera video input from the vehicle

- Ego-motion history describing the vehicle’s recent movement

- Navigation guidance such as route intent

- Text prompts that can steer planning or query the scene

Its outputs are also more expressive than those of a typical end-to-end driving model. Alpamayo 1.5 can generate:

- Driving trajectories for vehicle motion

- Reasoning traces that explain the likely cause and logic behind the choice

- Answers to user questions about the scene for analysis or labeling workflows

This makes the model useful not only for planning, but also for debugging, interpretability, dataset curation, and model distillation.
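To make that input/output contract concrete, here is a minimal sketch of the inputs and outputs listed above as Python dataclasses. Every class, field, and function name here is a hypothetical illustration; none of it is taken from the Alpamayo 1.5 code or API.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical containers; names are illustrative, not the real Alpamayo 1.5 API.

@dataclass
class DrivingContext:
    camera_frames: List[List[bytes]]        # one list of encoded frames per camera
    ego_history: List[Tuple[float, float]]  # recent (x, y) ego positions in metres
    route_intent: Optional[str] = None      # navigation guidance, e.g. a turn instruction
    prompt: Optional[str] = None            # free-text instruction or scene question


@dataclass
class DrivingOutput:
    trajectory: List[Tuple[float, float]]   # planned future (x, y) waypoints
    reasoning: str                          # explanation of the decision
    answer: Optional[str] = None            # set only when the prompt was a question


def summarize(ctx: DrivingContext, out: DrivingOutput) -> str:
    """One-line summary of a single model call, handy for logs."""
    return (f"{len(ctx.camera_frames)} cameras, "
            f"{len(ctx.ego_history)} history steps -> "
            f"{len(out.trajectory)} waypoints")


ctx = DrivingContext(camera_frames=[[b""], [b""]],
                     ego_history=[(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)],
                     route_intent="turn left in 200 meters")
out = DrivingOutput(trajectory=[(3.0, 0.0), (4.0, 0.1), (5.0, 0.3), (6.0, 0.6)],
                    reasoning="Slowing slightly to prepare for the upcoming left turn.")
print(summarize(ctx, out))
```

Keeping the trajectory and the reasoning in one output record is what makes the debugging and curation uses possible: both can be logged and compared per clip.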

Why reasoning matters in autonomous driving

Much of the progress in autonomous driving has come from increasingly capable end-to-end systems. These models map sensor input directly to control-relevant outputs and often perform very well in typical scenarios. But when something unusual happens, good average performance is not enough.

Reasoning-based autonomous driving tries to address that gap. The idea is not simply to classify more objects or predict more trajectories. It is to represent causal structure more explicitly. Why is the car slowing down? Because a cyclist may merge into the lane. Why should it wait before turning? Because an oncoming vehicle may still have the right of way even if the path appears briefly open.

That kind of reasoning has several practical benefits:

- Interpretability. Engineers can inspect why the model acted a certain way instead of treating planning as a black box.

- Safety analysis. Teams can review whether the reasoning aligns with safe driving principles in edge cases.

- Model improvement. Reasoning traces can reveal whether an error started in perception, scene understanding, or action selection.

- Regulatory and validation value. Systems that explain their actions are easier to audit and compare.

That does not mean reasoning expressed in language automatically solves safety. Explanations can still be wrong, incomplete, or loosely aligned with actual behavior. But as a research direction, it is more informative than systems that only output a path and nothing else.

What is new in Alpamayo 1.5

Alpamayo 1.5 expands the original platform in several ways that matter for real development work.

Text-guided trajectory planning

One of the clearest upgrades is text-guided planning. Developers can condition the model with natural-language instructions such as "turn left in 200 meters." That adds controllability to trajectory generation and makes it easier to test how route intent and scene understanding interact.

This may sound simple, but it is useful for more than navigation. It also provides a way to probe model behavior under alternative instructions. If the same traffic scene is paired with different route commands, teams can examine whether the planned motion remains safe and context-aware.
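The probing idea can be sketched as a small harness: hold one scene fixed, swap the route command, and check a simple safety property of each resulting plan. The `toy_planner` below is a hypothetical stand-in for the model (it just drifts left or right by command), and minimum clearance to a single obstacle is an assumed, deliberately simple safety check.

```python
import math
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float]


def toy_planner(command: str, steps: int = 5) -> List[Point]:
    """Hypothetical stand-in for the model: advances 2 m per step and
    drifts laterally according to the route command."""
    lateral = {"turn left": 0.5, "turn right": -0.5}.get(command, 0.0)
    return [(2.0 * (i + 1), lateral * (i + 1)) for i in range(steps)]


def min_clearance(traj: List[Point], obstacle: Point) -> float:
    """Smallest Euclidean distance between any waypoint and the obstacle."""
    return min(math.dist(p, obstacle) for p in traj)


def probe_commands(planner: Callable[[str], List[Point]],
                   commands: List[str],
                   obstacle: Point,
                   safe_margin: float) -> Dict[str, bool]:
    """Pair one scene with several commands and report, per command,
    whether the plan keeps at least safe_margin metres from the obstacle."""
    return {c: min_clearance(planner(c), obstacle) >= safe_margin
            for c in commands}


# A parked vehicle ahead and to the left of the ego lane.
results = probe_commands(toy_planner,
                         ["turn left", "go straight", "turn right"],
                         obstacle=(6.0, 2.0), safe_margin=1.0)
print(results)
```

Here the "turn left" plan passes within 0.5 m of the obstacle and is flagged, while the other two commands keep a safe margin. With a real model, the interesting cases are exactly those where following the instruction conflicts with the scene.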

Flexible multi-camera support

Autonomous vehicle platforms do not all use the same camera count or layout. A model tied to one fixed sensor rig is harder to reuse. Alpamayo 1.5 adds support for variable numbers of cameras, which lowers the friction of adapting it to different vehicle configurations and research setups.
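One generic way a model can accept a variable number of cameras is to pad per-camera features to a fixed maximum and carry a mask so padded slots are ignored. This is a common technique shown for illustration only. The source does not say how Alpamayo 1.5 implements variable camera support, and the feature vectors and function names below are hypothetical.

```python
from typing import List, Tuple

Feature = List[float]


def pad_cameras(per_camera: List[Feature],
                max_cameras: int) -> Tuple[List[Feature], List[bool]]:
    """Pad a variable-length list of per-camera feature vectors to a fixed
    count; the mask is True for real cameras and False for padding."""
    if not per_camera:
        raise ValueError("need at least one camera")
    if len(per_camera) > max_cameras:
        raise ValueError(f"{len(per_camera)} cameras exceeds max of {max_cameras}")
    dim = len(per_camera[0])
    if any(len(f) != dim for f in per_camera):
        raise ValueError("all camera features must share one dimension")
    n_pad = max_cameras - len(per_camera)
    padded = per_camera + [[0.0] * dim for _ in range(n_pad)]
    mask = [True] * len(per_camera) + [False] * n_pad
    return padded, mask


def masked_mean(features: List[Feature], mask: List[bool]) -> Feature:
    """Average only the real cameras, so padding never changes the result."""
    real = [f for f, keep in zip(features, mask) if keep]
    return [sum(vals) / len(real) for vals in zip(*real)]


# A three-camera rig padded to a seven-slot layout.
feats = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
padded, mask = pad_cameras(feats, max_cameras=7)
print(mask, masked_mean(padded, mask))
```

The mask is the important part: any pooling or attention step that respects it gives the same answer for a three-camera rig whether or not empty slots are present.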

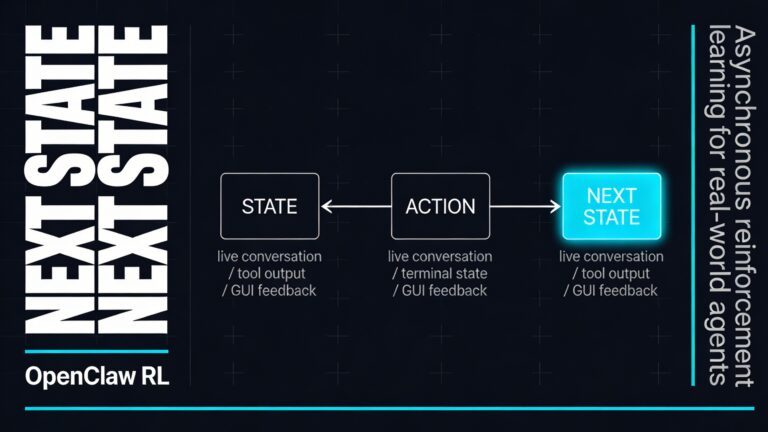

RL post-training and adaptation tools

NVIDIA positions Alpamayo 1.5 as a steerable reasoning engine, not just a frozen demo model. The release includes, or is accompanied by, scripts for supervised fine-tuning and reinforcement learning post-training. Teams can therefore adapt the model with their own data and even customize reward functions to improve trajectory quality, reasoning quality, or the alignment between the two outputs.
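As an illustration of what a customized reward might combine, here is a minimal sketch with three terms: trajectory accuracy (negative average displacement error against a reference), a reasoning-quality score, and a reasoning/action consistency score. The term definitions, weights, and function names are assumptions for illustration, not the reward functions shipped with the release. The two scores are assumed to come from some external grader in [0, 1].

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def average_displacement_error(pred: List[Point], ref: List[Point]) -> float:
    """Mean Euclidean distance between matched waypoints."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("trajectories must be non-empty and equal length")
    return sum(math.dist(p, r) for p, r in zip(pred, ref)) / len(pred)


def composite_reward(pred: List[Point], ref: List[Point],
                     reasoning_score: float, consistency_score: float,
                     w_traj: float = 1.0, w_reason: float = 0.5,
                     w_consist: float = 0.5) -> float:
    """Hypothetical RL reward (higher is better).

    - trajectory term: negative ADE against a reference trajectory
    - reasoning_score: explanation quality in [0, 1] from an assumed grader
    - consistency_score: agreement between explanation and trajectory in [0, 1]
    The default weights are arbitrary placeholders to tune per program.
    """
    return (-w_traj * average_displacement_error(pred, ref)
            + w_reason * reasoning_score
            + w_consist * consistency_score)


r = composite_reward([(1.0, 0.0), (2.0, 0.0)], [(1.0, 0.0), (2.0, 1.0)],
                     reasoning_score=0.8, consistency_score=1.0)
print(round(r, 3))
```

Shifting the weights is how a team would trade trajectory accuracy against explanation quality; a consistency term guards against a policy that learns fluent explanations unrelated to what it actually does.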

This is important because autonomous vehicle (AV) development is highly domain-specific. Driving behavior, sensor setups, road design, and operational constraints differ widely across programs. A foundation model is useful only if it can be specialized.

Visual question answering for scene analysis

Alpamayo 1.5 can also answer scene specific questions. That opens up use cases beyond direct planning. For example, developers can query whether the model detected a hazard, understood an interaction, or noticed an unusual traffic agent. In data workflows, that can support automated labeling or triage for interesting clips.
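A triage loop built on scene questions might look like the sketch below: ask a fixed set of questions about each clip and keep the clips where any answer is affirmative. The `ask` callable is a hypothetical placeholder for a model call, and `fake_ask` returns canned answers purely so the example runs. The naive "starts with yes" check is a simplification; a real pipeline would want more structured answers.

```python
from typing import Callable, Dict, List


def triage_clips(clip_ids: List[str],
                 ask: Callable[[str, str], str],
                 questions: List[str]) -> Dict[str, List[str]]:
    """Return, per clip, the questions answered affirmatively.
    Clips with no affirmative answers are left out."""
    flagged: Dict[str, List[str]] = {}
    for clip in clip_ids:
        # Naive affirmative check, for illustration only.
        hits = [q for q in questions
                if ask(clip, q).strip().lower().startswith("yes")]
        if hits:
            flagged[clip] = hits
    return flagged


# Hypothetical canned answers standing in for a real model call.
CANNED = {
    ("clip_001", "Is there a pedestrian near the ego path?"): "Yes, at the crosswalk.",
    ("clip_002", "Is there an emergency vehicle?"): "Yes, approaching from the right.",
}


def fake_ask(clip: str, question: str) -> str:
    return CANNED.get((clip, question), "No.")


questions = ["Is there a pedestrian near the ego path?",
             "Is there an emergency vehicle?"]
flagged = triage_clips(["clip_001", "clip_002", "clip_003"], fake_ask, questions)
print(flagged)
```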

The open platform matters as much as the model

Alpamayo 1.5 is easiest to understand as one part of an open development stack. NVIDIA is not only releasing a model. It is building a workflow around open weights, open datasets, and open simulation.

That matters because autonomous driving models are hard to compare in isolation. A planning model without data, evaluation tools, and closed-loop testing only tells part of the story. Real progress depends on whether a team can move from training to simulation to analysis without rebuilding everything from scratch.

Physical AI datasets

The associated physical AI autonomous vehicle dataset is large and geographically diverse. NVIDIA cites 1,727 hours of driving data collected across 25 countries and roughly 100 TB of synchronized sensor recordings, including 360-degree coverage from seven cameras, lidar, and up to ten radars.
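Dividing the two cited figures gives a rough sense of the average data rate. This back-of-envelope estimate assumes decimal units (1 TB = 10^12 bytes) and treats "roughly 100 TB" as exact, so it is an approximation rather than a figure NVIDIA reports:

```latex
\frac{100\ \text{TB}}{1{,}727\ \text{h}} \approx 57.9\ \text{GB/h}
\qquad\Rightarrow\qquad
\frac{57.9\ \text{GB}}{3{,}600\ \text{s}} \approx 16\ \text{MB/s}
```

That is on the order of 16 MB of synchronized multimodal sensor data per second of driving, which explains why storage and curation tools matter as much as the model.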

That scale matters for two reasons. First, reasoning-based driving models need varied examples of complex situations, not just clean highway clips. Second, multimodal data helps teams study how language-grounded reasoning relates to richer sensor input.

NVIDIA is also adding manually verified reasoning labels for part of the dataset, along with a chain-of-causation auto-labeling pipeline. If those tools prove reliable, they could ease one of the main bottlenecks in this area: the cost of producing high-quality reasoning annotations at scale.

AlpaSim for closed-loop evaluation

Open-loop metrics only go so far in driving. A predicted trajectory can look plausible offline and still fail once the environment reacts to the vehicle. That is why AlpaSim is a central part of the platform.

AlpaSim is an open-source simulation environment for testing AV policies in closed loop. The model’s decisions affect vehicle dynamics and future sensor input, which creates a more realistic feedback loop. That setup is essential for evaluating whether a reasoning model remains stable, safe, and robust as a scenario unfolds.
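One reason open-loop scores can mislead is error compounding. The toy example below, which is not AlpaSim code, uses a 1-D policy with a small per-step bias. Open-loop evaluation restarts every prediction from the logged state, so the error stays at 0.05 m. Closed-loop rollout feeds the policy its own previous output, so the same bias grows to 0.5 m after ten steps. The toy leaves out other agents reacting to the ego vehicle, which real closed-loop simulation also captures.

```python
from typing import List


def expert_positions(steps: int, speed: float = 1.0) -> List[float]:
    """Logged 1-D positions of an expert driving at constant speed."""
    return [speed * t for t in range(steps + 1)]


def policy(position: float) -> float:
    """Toy policy with a small systematic bias: it advances 1.05 m per
    step instead of the expert's 1.0 m."""
    return position + 1.05


def open_loop_errors(log: List[float]) -> List[float]:
    """Every prediction starts from the logged state, so errors never accumulate."""
    return [abs(policy(log[t]) - log[t + 1]) for t in range(len(log) - 1)]


def closed_loop_errors(log: List[float]) -> List[float]:
    """Every prediction starts from the policy's own previous state,
    so the small per-step bias compounds over the rollout."""
    errors, pos = [], log[0]
    for t in range(len(log) - 1):
        pos = policy(pos)
        errors.append(abs(pos - log[t + 1]))
    return errors


log = expert_positions(10)
print(max(open_loop_errors(log)), closed_loop_errors(log)[-1])
```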

According to the available material, AlpaSim is becoming more flexible through a plugin-based microservice system and integration with scenarios reconstructed via NuRec. For research teams, that means broader scenario coverage and more freedom to combine drivers, renderers, and data sources.

Where Alpamayo 1.5 could be genuinely useful

Not every team will deploy a 10B-parameter model directly in a production vehicle. NVIDIA itself frames Alpamayo 1.5 more broadly, and that is where the model may be most valuable.
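A quick estimate shows why. The sketch below computes the memory needed for the weights alone, using the 8.2B-parameter backbone and 2.3B-parameter action expert cited earlier and standard byte widths per numeric format. It ignores activations, caches, and runtime overhead, and it does not describe any specific quantized release.

```python
# Standard storage size per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights alone, in decimal gigabytes. Activations,
    caches, and runtime overhead come on top of this."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Backbone plus action expert, as cited above.
total_params = 8.2e9 + 2.3e9

for dtype in ("fp32", "bf16", "int4"):
    print(dtype, weight_memory_gb(total_params, dtype))
```

Even at 16-bit precision the weights alone need about 21 GB, which is why the offline roles below (teacher, labeler, reference model) are the more natural fit.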

As a teacher model for distillation

A large reasoning model can act as an offline teacher for smaller runtime models. It can generate trajectories, explanations, and supervisory signals that help train compact models suited for onboard inference. This is one of the most practical paths from research model to deployable system.
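A common way to use a teacher's trajectories is to regress the student onto them, often blended with the logged human trajectory. The sketch below shows that standard trajectory-level distillation loss as an illustration. The source does not specify how Alpamayo 1.5 is distilled, `alpha` is an assumed mixing weight, and distilling the reasoning text itself would need a separate loss.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def mse(a: List[Point], b: List[Point]) -> float:
    """Mean squared Euclidean distance between matched waypoints."""
    if len(a) != len(b) or not a:
        raise ValueError("trajectories must be non-empty and equal length")
    return sum((ax - bx) ** 2 + (ay - by) ** 2
               for (ax, ay), (bx, by) in zip(a, b)) / len(a)


def distillation_loss(student: List[Point], teacher: List[Point],
                      logged: List[Point], alpha: float = 0.5) -> float:
    """Blend imitation of the teacher's trajectory with imitation of the
    logged human trajectory; alpha=1.0 trusts only the teacher."""
    return alpha * mse(student, teacher) + (1 - alpha) * mse(student, logged)


student = [(0.0, 0.0), (1.0, 0.0)]
teacher = [(0.0, 0.0), (1.0, 1.0)]
logged = [(0.0, 0.0), (3.0, 0.0)]
print(distillation_loss(student, teacher, logged))
```

The teacher's advantage over the logged data alone is coverage: it can label any clip, including ones replayed in simulation, and supply a trajectory where no human demonstration exists.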

For dataset labeling and curation

Interesting driving clips are expensive to find and annotate. A model that can describe causality, identify hazards, and suggest plausible future motion can help prioritize and label the data that matters most.

For debugging smaller edge models

When a smaller edge-deployed model behaves oddly, Alpamayo 1.5 can serve as a point of comparison. Engineers can ask whether the larger reasoning model saw the scene differently, planned a different path, or identified a different causal chain.
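In practice, that comparison can start with a simple filter: find clips where the edge model's plan ends far from the larger model's plan, then read the larger model's reasoning trace for those clips first. The function names, the endpoint-distance metric, and the threshold below are illustrative assumptions. A large gap marks a clip worth inspecting; it does not by itself show which model is wrong.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def final_displacement(a: List[Point], b: List[Point]) -> float:
    """Distance between the last waypoints of two trajectories."""
    return math.dist(a[-1], b[-1])


def flag_divergent_clips(edge: Dict[str, List[Point]],
                         reference: Dict[str, List[Point]],
                         threshold_m: float) -> List[str]:
    """Return clip IDs where the edge model's endpoint lands more than
    threshold_m metres from the reference model's endpoint, worst first."""
    gaps = {cid: final_displacement(edge[cid], reference[cid])
            for cid in edge if cid in reference}
    flagged = [cid for cid, gap in gaps.items() if gap > threshold_m]
    return sorted(flagged, key=lambda cid: gaps[cid], reverse=True)


edge = {"a": [(0.0, 0.0), (10.0, 0.0)],
        "b": [(0.0, 0.0), (10.0, 3.0)],
        "c": [(0.0, 0.0), (10.0, 6.0)]}
reference = {"a": [(0.0, 0.0), (10.0, 0.5)],
             "b": [(0.0, 0.0), (10.0, 0.0)],
             "c": [(0.0, 0.0), (10.0, 0.0)]}
print(flag_divergent_clips(edge, reference, threshold_m=1.0))
```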

For evaluating long-tail scenarios

The long tail is where AV systems earn or lose trust. Rare agent behavior, bad weather, unusual traffic geometry, sensor noise, and semantically strange scenes all stress generalization. A reasoning model paired with closed loop simulation is useful here because it offers both behavior and explanation.

What the performance claims suggest and what they do not

NVIDIA describes Alpamayo 1.5 as state of the art across reasoning quality, trajectory accuracy, and alignment, with gains from reinforcement learning post-training. Those claims are promising, but they should be read as part of an early research and platform story, not as proof that general-purpose autonomous driving is solved.

There are still hard questions that any reasoning-based AV model needs to answer:

- How faithful are the reasoning traces to the model’s actual decision process?

- How robust is the model under sensor failures, missing cameras, or distribution shift?

- Can strong offline reasoning quality translate into safe closed-loop driving across broad conditions?

- How well can a large open model be distilled without losing the very judgment that makes it useful?

These questions do not undermine the value of Alpamayo 1.5. They define the work that comes next.

Why open models are important in this part of AI

The autonomous driving world has often been split between highly proprietary stacks and academic prototypes that are difficult to reproduce. An open platform changes that balance.

When model weights, code, datasets, and simulation tools are available, researchers can test claims more directly. They can compare methods on shared benchmarks, adapt tools to their own vehicle platforms, and inspect failure modes in greater detail. For an area as safety-critical as autonomous driving, that openness is not just convenient. It improves the quality of the conversation.

It also fits a broader trend in physical AI. Rather than treat robotics and autonomy as isolated products, companies are building reusable model families, simulation systems, and data pipelines that can be specialized across domains. In that sense, Alpamayo 1.5 is part of a larger move toward foundation-style tooling for machines that have to perceive, reason, and act in the world.

What to watch next

The most interesting part of Alpamayo 1.5 may not be the release itself, but what developers do with it over the next year.

A few things are worth watching closely:

- Community validation of the model’s reasoning quality in independent tests

- Distillation results into smaller models that can run closer to the edge

- Benchmark design for out-of-distribution and safety-critical scenarios

- Use of reasoning labels in improving generalization rather than just generating nicer explanations

- Closed-loop evidence showing where reasoning traces actually help reduce failure rates

If progress shows up mainly in demos and offline examples, the impact will be limited. If it shows up in better closed-loop behavior, easier debugging, and more reproducible evaluation, then Alpamayo 1.5 could become a meaningful reference point for reasoning-based AV research.

A sharper way to think about Alpamayo 1.5

Alpamayo 1.5 is best seen as infrastructure for a different style of autonomous driving development. Instead of training a model that silently outputs motion, the platform pushes toward systems that can be steered, inspected, adapted, and tested in realistic loops. That is a more demanding standard, but probably the right one for any technology expected to handle rare and messy traffic situations.

The real significance of Alpamayo 1.5 is not that it makes cars think like humans. That phrase is too loose to be useful. Its significance is that it gives developers a concrete open framework for linking perception, language-grounded reasoning, and action in a way that can actually be studied. In autonomous driving, that may be more valuable than bold claims about full autonomy, because it turns a hard problem into something teams can measure, challenge, and improve.