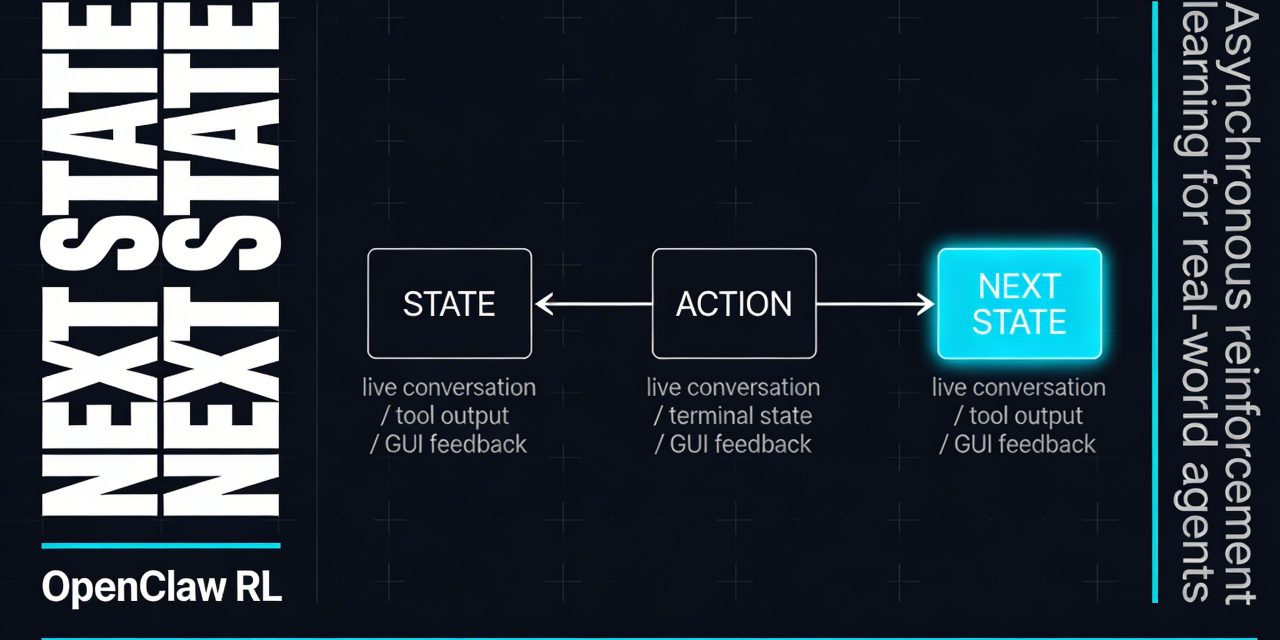

OpenClaw RL starts from a simple but useful idea

OpenClaw RL is built around a practical observation that many agent systems have ignored for too long. Every action an agent takes is followed by a next state. That next state can be a user reply, a tool result, a terminal output, a GUI change, a test verdict, or an error trace. In most systems, those signals are treated as runtime feedback only. In OpenClaw RL, they become training data.

That shift matters because it turns normal usage into a live reinforcement learning loop. Instead of collecting a static dataset, labeling it, and training in batches later, OpenClaw RL keeps the agent running while it learns in the background. If you correct the agent, ask it to try again, give a thumbs up or thumbs down, or let it interact with software tools, the framework can convert that sequence into optimization signals.

This is the core promise behind OpenClaw RL. It aims to train a personalized or general purpose agent simply through use, without forcing you into a separate annotation pipeline.

What OpenClaw RL actually is

At a technical level, OpenClaw RL is a fully asynchronous reinforcement learning framework for agentic systems. It can be used for personal conversational agents, but it also extends to more operational settings such as terminal agents, GUI agents, software engineering agents, and tool-calling agents.

The framework wraps a self-hosted model behind an OpenAI-compatible API. As the model serves real requests, the system collects trajectories from live multi-turn interaction. It then evaluates those interactions and updates the policy without interrupting the agent’s availability.

That architecture is notable for two reasons.

- It is online rather than batch first. The learning signal comes from ongoing interactions rather than a prebuilt reward dataset.

- It is asynchronous rather than sequential. Serving, judging, rollout collection, and training happen in separate loops.

In practice, this means the model does not need to stop working in order to improve. That is a meaningful design choice for real world agents, where uptime and responsiveness matter as much as model quality.
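Because the model sits behind an OpenAI-compatible API, an existing client should be able to talk to it the same way it talks to any chat completions endpoint. The sketch below only builds the request and does not send it. The address `http://localhost:8000`, the model name `my-policy-model`, and the helper `build_chat_request` are placeholders for illustration, and the `/v1/chat/completions` path is the conventional OpenAI route, assumed here rather than confirmed for OpenClaw RL.

```python
import json
import urllib.request

def build_chat_request(base_url, model, user_message):
    """Build (but do not send) a POST to an OpenAI-compatible chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Hypothetical local deployment: the address and model name are placeholders.
request = build_chat_request(
    "http://localhost:8000", "my-policy-model", "Summarize today's notes."
)
# To actually send it against a running server: urllib.request.urlopen(request)
```

From the client's point of view nothing about this call reveals that training is happening. The collection and learning described above take place on the server side of the endpoint.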

The four part asynchronous loop

OpenClaw RL separates the system into four independent components.

- Agent serving handles live requests from users or applications.

- Rollout collection turns those interactions into usable trajectories.

- Judge or process reward model (PRM) evaluation scores actions based on what happened next.

- Policy training updates the model continuously as samples are ready.

Because those loops are decoupled, none of them has to block the others. The agent can keep serving requests while the PRM judges prior turns and the trainer applies updates in parallel.
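One way to picture the decoupling is four coroutines connected by queues, each running at its own pace. This is a toy sketch and not the framework's implementation: the function names, the dictionary fields, and the rule that odd-numbered turns produce an error are all invented for illustration, and the "model call" and "gradient step" are stand-ins.

```python
import asyncio

async def serve(requests, rollout_q):
    """Agent serving: answer each request and hand the turn onward."""
    for turn_id, prompt in enumerate(requests):
        response = f"reply to {prompt}"  # stand-in for a real model call
        await rollout_q.put({"turn": turn_id, "prompt": prompt, "response": response})
        await asyncio.sleep(0)  # yield so the other loops can run
    await rollout_q.put(None)  # sentinel: no more traffic

async def collect(rollout_q, judge_q):
    """Rollout collection: pair each action with its next state."""
    while (turn := await rollout_q.get()) is not None:
        # Invented rule: odd turns fail, so the toy pipeline sees both outcomes.
        turn["next_state"] = "ok" if turn["turn"] % 2 == 0 else "error"
        await judge_q.put(turn)
    await judge_q.put(None)

async def judge(judge_q, train_q):
    """Judge / PRM: score the action from what happened next."""
    while (turn := await judge_q.get()) is not None:
        turn["reward"] = 1.0 if turn["next_state"] == "ok" else -1.0
        await train_q.put(turn)
    await train_q.put(None)

async def train(train_q, log):
    """Policy training: consume scored samples as they become ready."""
    while (sample := await train_q.get()) is not None:
        log.append((sample["turn"], sample["reward"]))  # stand-in for an update

async def run_pipeline(requests):
    rollout_q, judge_q, train_q = asyncio.Queue(), asyncio.Queue(), asyncio.Queue()
    log = []
    await asyncio.gather(
        serve(requests, rollout_q),
        collect(rollout_q, judge_q),
        judge(judge_q, train_q),
        train(train_q, log),
    )
    return log

training_log = asyncio.run(run_pipeline(["a", "b", "c"]))
```

The queues are the only coupling between stages. A slow judge delays when samples reach the trainer, but it never stops the serving loop from answering the next request.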

This setup is a better fit for agentic AI than traditional reinforcement learning pipelines that assume neatly packaged episodes. Real agents do not live inside tidy benchmark loops. They are interrupted, corrected, redirected, and exposed to tools and environments that generate messy but valuable signals. OpenClaw RL is designed around that messiness instead of trying to abstract it away.

Why next state signals are such a big deal

The idea of a next state is basic in reinforcement learning, but OpenClaw RL applies it in a broader and more operational way. In an agent system, the next state can reveal two different things.

- Evaluative information tells you whether the previous action worked well. A user saying "that answer is wrong", a failed unit test, or an error code from a tool can all serve as evaluative signals.

- Directive information tells you how the action should have changed. A correction like "you should have checked the file first" is more than a reward signal. It contains direction.

This distinction is central to the framework’s design. Scalar reward alone often throws away the most useful part of feedback. A reward can say "bad answer", but not why it was bad or how to repair the reasoning. OpenClaw RL tries to recover both the score and the instruction hidden in the next state.
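A small data structure makes the loss concrete. The `NextStateSignal` class and `to_scalar_only` helper below are hypothetical names chosen for this illustration. They show that once a correction is collapsed to a bare reward, it becomes indistinguishable from a failure that carried no guidance at all.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class NextStateSignal:
    evaluative: Optional[float]  # how well the action worked, e.g. -1.0 or +1.0
    directive: Optional[str]     # how the action should have changed, if stated

def to_scalar_only(signal):
    """Collapse a signal to a bare reward, discarding any directive content."""
    return NextStateSignal(evaluative=signal.evaluative, directive=None)

# A failed unit test says the action was bad, but not how to fix it.
failed_test = NextStateSignal(evaluative=-1.0, directive=None)

# A user correction says the action was bad and points toward the repair.
correction = NextStateSignal(
    evaluative=-1.0, directive="check the file before editing it"
)

# After scalar collapse the two signals look identical.
same_after_collapse = to_scalar_only(correction) == failed_test
```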

The three optimization modes in OpenClaw RL

One of the more interesting parts of OpenClaw RL is that it does not rely on a single learning method. It supports three optimization approaches that can be used separately or together.

Binary RL

Binary RL uses a process reward model to score each turn from next state feedback. Those scores are then used with a GRPO-style advantage estimate and a PPO-like clipped objective. This is the more familiar reinforcement learning route. It turns the next state into a scalar value and trains the policy accordingly.
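To make those two ingredients concrete, here is a minimal sketch in plain Python. It assumes the common formulations: rewards standardized within a group of samples for the advantage, and the standard clipped surrogate for the objective. How OpenClaw RL forms its groups, and which clip range it uses, are not stated here, so `group_advantages`, `clipped_objective`, and the default `clip=0.2` are illustrative assumptions.

```python
import math
from statistics import mean, pstdev

def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantage: standardize each reward against its group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

def clipped_objective(logp_new, logp_old, advantage, clip=0.2):
    """PPO-style clipped surrogate for one sample (a quantity to maximize)."""
    ratio = math.exp(logp_new - logp_old)  # pi_new(a|s) / pi_old(a|s)
    clipped = max(1.0 - clip, min(1.0 + clip, ratio))
    # Taking the minimum removes the incentive to push the ratio past the clip range.
    return min(ratio * advantage, clipped * advantage)

# Four turns scored +1 or -1 by the PRM.
advantages = group_advantages([1.0, -1.0, 1.0, -1.0])

# The policy has raised this action's probability by 1.5x and the advantage is positive,
# so the objective is capped at (1 + clip) * advantage.
capped = clipped_objective(math.log(1.5), 0.0, advantage=1.0)
```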

The advantage is simplicity and broad applicability. If you can tell whether an action helped or hurt, you can create a reward signal.

On-policy distillation

On-policy distillation, or OPD, tries to extract richer supervision. When hindsight becomes available in the next state, a judge model generates a textual hint. That hint is used to build an enhanced teacher context. The system then computes a token-level log-probability gap between teacher and student and uses that gap as a directional advantage signal.
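The token-level gap can be sketched in a few lines. The version below assumes that the teacher and the student score the same response tokens, with the teacher seeing the hint in its context and the student not. The hint template in `build_teacher_context` and both function names are invented for illustration, and the log-probabilities are made-up numbers rather than model outputs.

```python
def build_teacher_context(prompt, hint):
    """Append the judge's hindsight hint to the original prompt (hypothetical template)."""
    return f"{prompt}\n\n[Hint from hindsight] {hint}"

def token_advantages(teacher_logps, student_logps):
    """Per-token directional signal: log p_teacher(token) - log p_student(token)."""
    if len(teacher_logps) != len(student_logps):
        raise ValueError("teacher and student must score the same tokens")
    return [t - s for t, s in zip(teacher_logps, student_logps)]

teacher_prompt = build_teacher_context(
    "Fix the failing test.", "Read the config file before editing it."
)

# Made-up log-probabilities for a two-token response.
gaps = token_advantages(teacher_logps=[-0.5, -1.0], student_logps=[-1.5, -1.0])
```

A positive gap marks a token the hint-aware teacher finds more likely than the student does, so the update pushes the student toward it. A gap of zero leaves that token alone.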

This gives the model more than a thumbs down. It gives it a shaped direction toward a better answer or action sequence. For complex agent behavior, that can be more useful than scalar reward alone.

The combination method

The combined method merges Binary RL and OPD. OpenClaw RL uses scalar supervision where that is helpful, while also injecting token level directional feedback when hindsight can explain what the agent should have done differently.

According to the project materials, this combined recipe is currently the strongest and most robust option in the framework. That makes sense. In many real world tasks, you want both signals. You want to know whether the action succeeded, and you want to know what a better action would have looked like.
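The project materials do not spell out here how the two signals are merged, so the following is only one plausible reading: add the turn-level scalar advantage to each token's directional gap, with weights on each term. The function name `combined_advantages` and the weights `w_rl` and `w_opd` are assumptions, not documented parameters.

```python
def combined_advantages(turn_advantage, n_tokens, token_gaps=None, w_rl=1.0, w_opd=1.0):
    """Blend a turn-level scalar advantage with optional per-token OPD gaps."""
    # When no hindsight hint exists, fall back to the scalar signal alone.
    gaps = token_gaps if token_gaps is not None else [0.0] * n_tokens
    if len(gaps) != n_tokens:
        raise ValueError("token_gaps must have one entry per response token")
    return [w_rl * turn_advantage + w_opd * g for g in gaps]

# A failed turn (advantage -1.0) where the hint favors the first token.
with_hint = combined_advantages(-1.0, 3, token_gaps=[1.0, 0.0, -0.5])

# The same failed turn with no hint: every token shares the scalar penalty.
without_hint = combined_advantages(-1.0, 3)
```

In this reading the scalar term answers whether the turn worked, and the per-token term redistributes credit inside the turn when a hint is available.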

Why this matters for personalized agents

A lot of reinforcement learning for language models is still built around centralized training and curated preference sets. That works for foundation model development, but it is less suitable for personal agents that adapt to one user, one team, or one workflow over time.

OpenClaw RL is more interesting in that setting because it learns from natural interaction. If your agent repeatedly misreads your intent, forgets your preferences, or uses the wrong tools in a recurring workflow, your own corrections become part of the optimization loop.

The project describes this as turning everyday conversations into training signals for personalized AI agents. That is a compelling direction because personalization rarely comes from benchmark scale data. It comes from repeated use, local context, and preference formation over time.

The fact that the stack is self hosted also matters here. Keeping the policy model, judge, and training loop on your own infrastructure reduces privacy exposure and gives organizations more control over adaptation.

Beyond chatbots to terminal, GUI, SWE, and tool calling agents

What separates OpenClaw RL from many RL-for-LLM systems is that it is not limited to conversational feedback. The same framework can train agents operating in more grounded environments.

- Terminal agents can learn from stdout, stderr, exit codes, and shell side effects.

- GUI agents can learn from screen state, accessibility trees, and visual changes.

- SWE agents can learn from repository diffs, lint output, and unit test verdicts.

- Tool calling agents can learn from return values, traces, and execution errors.

That broader framing is important. It suggests that agentic reinforcement learning should not be split into isolated niches. From the perspective of policy improvement, these are all action followed by next state transitions. The environment changes, but the learning logic can stay unified.
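The unified view can be expressed as a single record type that every environment fills in differently. The `Transition` class, its field names, and the pass/fail rules in `binary_outcome` are hypothetical and chosen only to illustrate the idea. A real system would likely hand the next state to a judge model rather than apply fixed rules.

```python
from dataclasses import dataclass, field
from typing import Any, Dict

@dataclass
class Transition:
    env: str                      # e.g. "terminal", "swe", "tool"
    action: str                   # what the agent did
    next_state: Dict[str, Any] = field(default_factory=dict)  # what happened next

def binary_outcome(t):
    """Map an environment-specific next state to +1.0 or -1.0 (toy rules)."""
    s = t.next_state
    if t.env == "terminal":
        return 1.0 if s["exit_code"] == 0 else -1.0
    if t.env == "swe":
        return 1.0 if s["tests_failed"] == 0 else -1.0
    if t.env == "tool":
        return 1.0 if s.get("error") is None else -1.0
    raise ValueError(f"no outcome rule for env {t.env!r}")

transitions = [
    Transition("terminal", "ls /missing", {"exit_code": 2, "stderr": "No such file"}),
    Transition("swe", "apply patch", {"tests_passed": 12, "tests_failed": 0}),
    Transition("tool", "get_weather('Oslo')", {"result": {"temp_c": 4}}),
]
outcomes = [binary_outcome(t) for t in transitions]
```

Only `binary_outcome` knows anything about a specific environment. Everything downstream of it can treat the three transitions identically.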

This is a useful conceptual contribution even if the framework itself continues to evolve. It pushes agent training away from benchmark specific recipes and toward a general infrastructure for online adaptation.

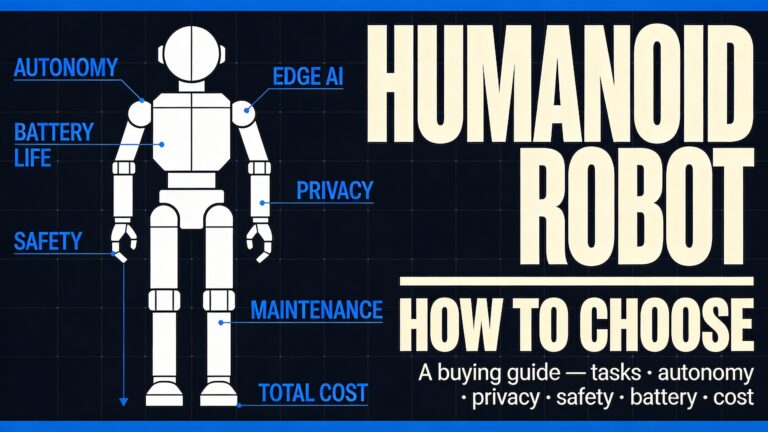

How OpenClaw RL fits into robotics and embodied AI

The source material around OpenClaw also points to a wider question. What happens when agent frameworks move from software tasks into robotics and embodied systems?

There is a temptation to treat agent frameworks as if they directly create robot intelligence. That is usually too simplistic. In many practical deployments, an agent layer acts more like an orchestration and coordination interface than a replacement for perception, planning, and control. It can manage task sequencing, tool invocation, or project workflows, but it does not automatically solve dexterous manipulation or autonomous motion.

That distinction matters.

In robotics, real capability still depends heavily on perception, control, environment modeling, and reinforcement learning at the embodiment level. Research on sim-to-real RL for humanoid dexterous manipulation shows how hard that remains. Training robust bimanual and vision-based manipulation policies requires careful reward design, policy distillation, object representation, and tuning between simulation and reality.

OpenClaw RL belongs on a different but complementary layer. It is best understood as an agent training and orchestration framework that can learn from interactions across software and tool based environments. In robotics, its strongest role may be to coordinate workflows, call capabilities, recover from errors, and adapt how high level tasks are handled. It is not a replacement for the low level control stack.

That is also why lightweight, modular, and low cost robotic platforms remain relevant. Open source hardware such as dexterous robot hands lowers the barrier for reinforcement learning research. Meanwhile, frameworks like OpenClaw RL can help connect higher level decision making and user feedback to those systems. The two trends meet, but they are not the same thing.

Infrastructure choices that make the project practical

OpenClaw RL also pays attention to deployment realities. The project supports local GPU setups and cloud deployment, and it now includes LoRA training support. That matters because full fine-tuning is often too costly for smaller teams, while parameter-efficient adaptation opens the door to more experimentation.
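A quick calculation shows why LoRA changes the cost picture. For one weight matrix of shape `d_out x d_in`, full fine-tuning trains every entry, while LoRA trains two low-rank factors of shapes `d_out x rank` and `rank x d_in`. The layer size and rank below are illustrative values, not settings taken from the project.

```python
def lora_param_counts(d_in, d_out, rank):
    """Trainable parameters for one weight matrix: full fine-tuning vs. LoRA."""
    full = d_in * d_out            # every entry of W is trainable
    lora = rank * (d_in + d_out)   # factors B (d_out x rank) and A (rank x d_in)
    return full, lora

full, lora = lora_param_counts(d_in=4096, d_out=4096, rank=16)
fraction = lora / full  # share of the full parameter count that LoRA trains
```

For this 4096 by 4096 layer at rank 16, LoRA trains 131,072 parameters instead of 16,777,216, which is 1/128 of the full count.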

The framework is also structured to encourage method level extension rather than constant changes to the core. New methods, deployment targets, or model variants can plug in through clear interfaces for custom loss functions, rollout logic, generation, and reward modeling.
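As a rough picture of what method-level extension can look like, the sketch below uses a small registry so that a new reward function is added without touching the code that looks it up. None of these names come from OpenClaw RL. `register_reward`, `get_reward_fn`, and the `(action, next_state) -> float` signature are assumptions made for this example, and the project's real interfaces may look quite different.

```python
from typing import Callable, Dict

# Assumed signature for illustration: (action, next_state) -> reward.
RewardFn = Callable[[str, str], float]

_REWARD_FNS: Dict[str, RewardFn] = {}

def register_reward(name):
    """Decorator that adds a reward function to the registry under `name`."""
    def decorator(fn):
        _REWARD_FNS[name] = fn
        return fn
    return decorator

def get_reward_fn(name):
    """Look up a reward function by name; raises KeyError if it is unknown."""
    return _REWARD_FNS[name]

@register_reward("exit_code")
def exit_code_reward(action, next_state):
    """Toy reward: +1.0 when the next state is the exit code 0, else -1.0."""
    return 1.0 if next_state.strip() == "0" else -1.0

reward = get_reward_fn("exit_code")("ls", "0\n")
```

The same pattern would extend to loss functions or rollout logic: the core selects a component by name, and new methods register themselves alongside the existing ones.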

Over time, that kind of modularity tends to matter more than flashy demos. If the ecosystem grows, it will be because researchers and engineers can add new optimization recipes, model families, and hardware configurations without breaking the rest of the stack.

OpenClaw RL is promising, but there are real caveats

The most interesting AI infrastructure projects are usually the ones that make strong ideas operational. OpenClaw RL does that well. Still, it comes with limits and risks that are worth stating clearly.

Live feedback is noisy

User replies and tool outputs are informative, but they are not always reliable ground truth. Some users are inconsistent. Some tools fail for unrelated reasons. Some next states only partially reflect action quality. Any system built on live signals must deal with noise and ambiguity.

Asynchrony helps throughput, but it is not magic

A fully asynchronous loop improves utilization and online learning, but it does not remove the usual reinforcement learning difficulties. Credit assignment, reward shaping, policy stability, and sample quality still matter.

Privacy and security remain operational concerns

Self hosting improves control, but does not solve everything. If an agent can access files, tools, or physical devices, the surrounding system design matters just as much as the model. Prompt leaks, unsafe tool use, poisoned extensions, and insecure logs are still real risks.

Robotics needs more than an agent layer

For embodied AI, an orchestration framework can improve workflow and usability, but dexterous manipulation, spatial memory, and reliable autonomy still depend on deeper control and representation problems. It is better to see OpenClaw RL as one layer in a stack rather than the whole stack.

What makes OpenClaw RL worth watching

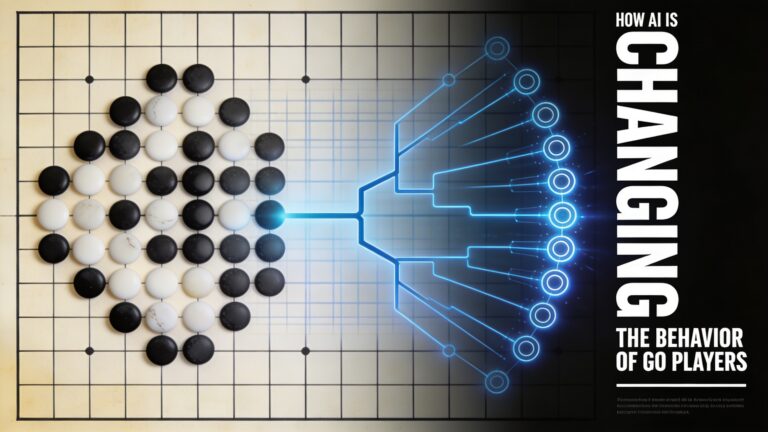

The strongest idea in OpenClaw RL is not just that it trains from conversation. It is that it treats the next state as a universal interface for agent improvement. A user correction, a failed test, a changed screen, and a tool error are all different forms of the same thing. They are consequences of action, and therefore opportunities to learn.

If that perspective holds up, it could shape how future agents are trained in production-like settings. Instead of separating personal assistants, coding agents, GUI agents, and tool-using systems into different training worlds, developers may increasingly build one online learning backbone that can absorb feedback from all of them.

That does not mean every deployed agent should be learning all the time. In many contexts, stable behavior is more valuable than constant adaptation. But for personal agents and evolving workflows, the ability to improve from ordinary use is hard to ignore.