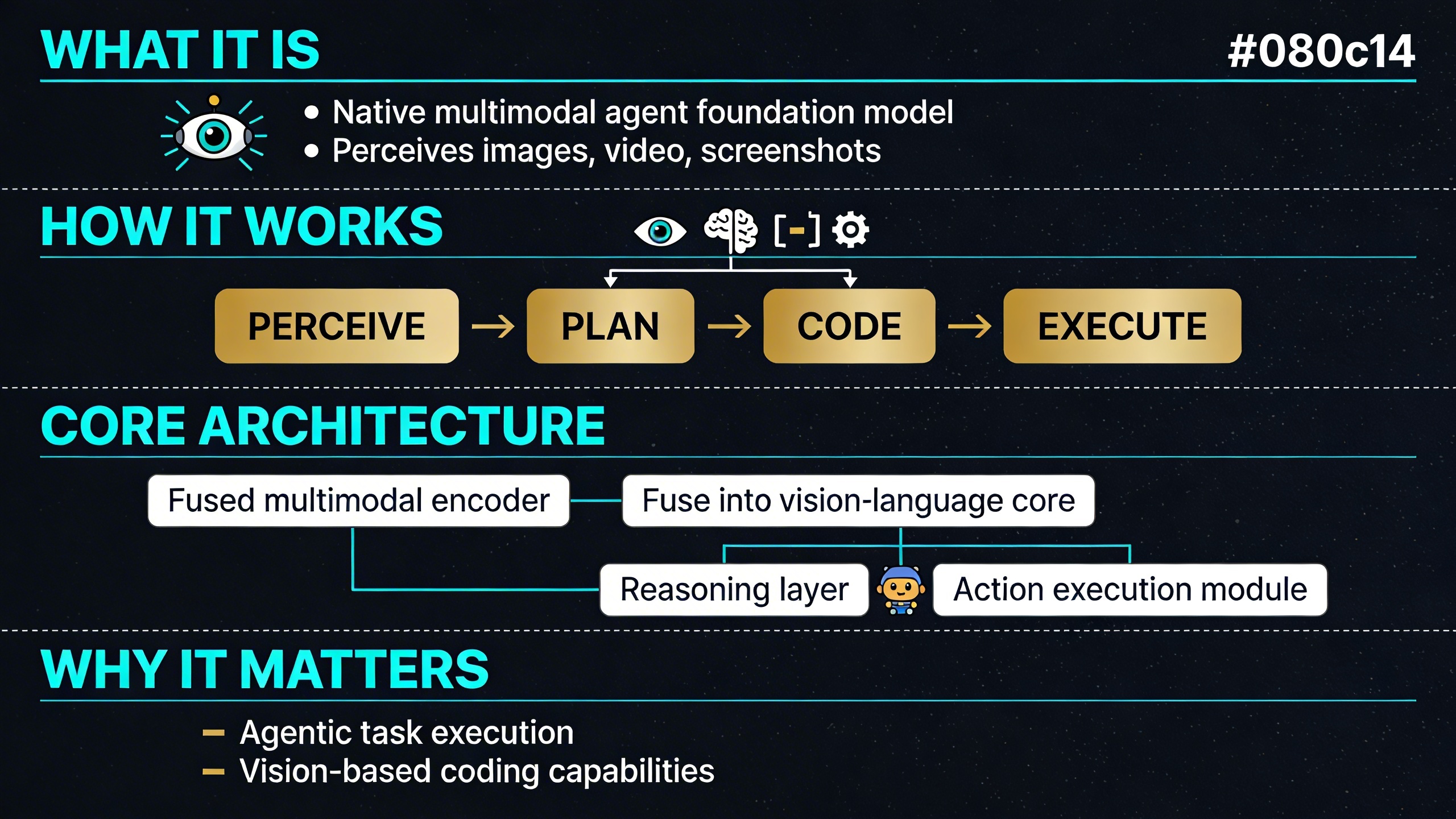

GLM-5V-Turbo is Z.ai’s first native multimodal agent foundation model, designed from the ground up to close the loop between seeing, reasoning and acting. Where many vision-language models treat image understanding as an add-on to a text-first architecture, GLM-5V-Turbo fuses visual and textual reasoning at every stage of training. That architectural choice shapes what the model can do in practice, from turning design mockups into code to operating graphical interfaces.

What is GLM-5V-Turbo?

GLM-5V-Turbo is built by Zhipu AI, the Chinese AI lab also known as Z.ai, and sits at the intersection of two rapidly maturing AI capabilities: multimodal understanding and agentic execution. The model natively handles image, video and text inputs, and is optimised for tasks that require sustained, multi-step reasoning rather than single-shot responses. It was designed specifically for vision-based coding and agent-driven workflows, with support for the complete perceive–plan–execute pipeline.

The model is available via API on platforms including OpenRouter, where it is routed to providers based on prompt size and parameter constraints. Its context window supports both short and long-horizon tasks, and it is compatible with reasoning-enabled API calls that expose the model’s step-by-step internal thinking before a final answer is produced.
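As a rough illustration of a reasoning-enabled call, the sketch below only builds the JSON payload for an OpenAI-style chat completions request and does not send it. The model slug `z-ai/glm-5v-turbo` and the `reasoning` request field are assumptions rather than details taken from this article, so check both against the provider's documentation before relying on them.

```python
import json

# Assumed endpoint and model slug; verify both against the provider's model list.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "z-ai/glm-5v-turbo"


def build_reasoning_request(prompt: str, image_url: str) -> dict:
    """Build a chat payload with one text part, one image part and reasoning switched on."""
    return {
        "model": MODEL_SLUG,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
        # Assumed field asking the router to return reasoning tokens with the answer.
        "reasoning": {"enabled": True},
    }


payload = build_reasoning_request(
    "Describe the layout of this screenshot.",
    "https://example.com/screenshot.png",
)
print(json.dumps(payload, indent=2))
```

Sending the payload would be an authenticated POST to `OPENROUTER_URL`; that step is left out so the sketch runs without credentials.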

The architecture behind the model

GLM-5V-Turbo introduces technical upgrades at four levels of its design: model architecture, reinforcement learning, data construction and tooling.

Native multimodal fusion

Rather than treating visual-text alignment as a late add-on, GLM-5V-Turbo strengthens it throughout both pretraining and post-training. It uses a new vision encoder called CogViT, paired with an inference-friendly multi-token prediction (MTP) architecture. The combination is intended to improve multimodal understanding while keeping inference efficient, two goals that usually pull against each other.

Reinforcement learning across 30+ task types

During the RL phase, the model is jointly optimised across more than 30 different task types. These span STEM reasoning, spatial grounding, video understanding, GUI agents and coding agents. Training across such a diverse task distribution is intended to produce more robust generalisation rather than narrow specialisation on a small benchmark set. The result is a model with stronger perception, reasoning and agentic execution capabilities across varied domains.

Agentic data and task construction

One of the harder problems in training capable agents is the scarcity of high-quality agent interaction data and the difficulty of verifying whether an agent action was correct. Z.ai addresses this with a multi-level, controllable and verifiable data system. Critically, agentic meta-capabilities are injected during pretraining itself, not just during fine-tuning. This means the model learns action prediction and execution patterns at a foundational level rather than as surface-level behaviours layered on top of a general-purpose LLM.

Expanded multimodal toolchain

GLM-5V-Turbo extends agent capabilities beyond pure text interactions by adding tools such as box drawing, screenshots and webpage reading with integrated image understanding. An agent built on this model can perceive a visual environment, plan a sequence of actions and execute those actions using visual tools, all within a single coherent loop. This is a meaningful step beyond text-only agent frameworks that rely on external vision modules stitched together at the application layer.

What can it actually do?

The practical capabilities of GLM-5V-Turbo reflect its architectural priorities. Several concrete task categories are supported out of the box.

Multimodal coding and UI replication

The model can take design mockups as image inputs and generate functional mobile page code from them. Given a welcome page and homepage mockup, for example, it can produce implementations for the visible pages and extrapolate to additional screens. This positions it as a direct tool for frontend development workflows where translating visual designs into code is a significant source of friction.
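A minimal sketch of how mockups might be packaged for such a request follows, assuming the provider accepts OpenAI-style `image_url` content parts with base64 data URLs. The helper names and the placeholder image bytes are hypothetical; in practice the bytes would come from the exported mockup files.

```python
import base64


def to_data_url(image_bytes: bytes, mime_type: str = "image/png") -> str:
    """Encode raw image bytes as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_mockup_message(mockups: list, instruction: str) -> dict:
    """Build one user message holding the instruction followed by every mockup image."""
    content = [{"type": "text", "text": instruction}]
    for image_bytes in mockups:
        content.append(
            {"type": "image_url", "image_url": {"url": to_data_url(image_bytes)}}
        )
    return {"role": "user", "content": content}


# Placeholder bytes stand in for real PNG files read from disk.
welcome_png = b"\x89PNG-welcome"
home_png = b"\x89PNG-home"

message = build_mockup_message(
    [welcome_png, home_png],
    "Implement these two mobile screens as HTML and CSS, then propose a settings screen in the same style.",
)
print(len(message["content"]))
```

Keeping the instruction and all mockups in a single message lets the model see the screens together, which matters when it is asked to extrapolate a consistent style to screens that were not drawn.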

Document comprehension and writing

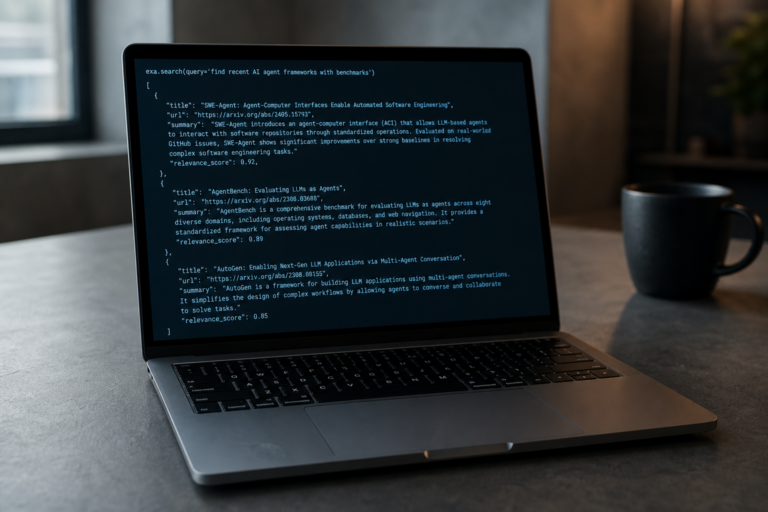

GLM-5V-Turbo can ingest academic papers or multi-page documents and produce grounded summaries, key argument extractions or even full written outputs based on the document content. This is supported by its long-context capacity and its ability to maintain coherence across extended inputs.

Video object tracking

The model supports structured output from video inputs. In one documented example, it tracks a specific object across a video sequence and returns the results in a structured format such as JSON. This kind of temporally grounded output requires sustained attention across frames rather than single-frame visual question answering.
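Structured tracking output is only useful if the consuming code checks it. The sketch below parses and validates a track under an assumed schema (a `frames` list with a timestamp `t` and a `box` of `[x1, y1, x2, y2]`); the article does not specify the real output format, so treat the field names as hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class TrackedBox:
    time_s: float
    x1: float
    y1: float
    x2: float
    y2: float


def parse_track(raw: str) -> list:
    """Parse a JSON track and reject degenerate boxes or out-of-order timestamps."""
    data = json.loads(raw)
    boxes = []
    for frame in data["frames"]:
        x1, y1, x2, y2 = frame["box"]
        if x2 <= x1 or y2 <= y1:
            raise ValueError(f"degenerate box at t={frame['t']}")
        boxes.append(TrackedBox(float(frame["t"]), x1, y1, x2, y2))
    # A single object track should move forward in time.
    for prev, cur in zip(boxes, boxes[1:]):
        if cur.time_s <= prev.time_s:
            raise ValueError("timestamps are not strictly increasing")
    return boxes


# Hand-written sample in the assumed schema, not real model output.
sample = (
    '{"object": "red car", "frames": ['
    '{"t": 0.0, "box": [10, 20, 110, 80]}, '
    '{"t": 0.5, "box": [18, 22, 118, 82]}]}'
)
track = parse_track(sample)
print(len(track), track[-1].x1)
```

Validating at the boundary means a malformed response fails loudly instead of propagating bad coordinates into downstream steps.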

GUI autonomous exploration

A particularly interesting capability is autonomous GUI interaction. The model can explore graphical user interfaces, understand their structure visually and take actions within them. This is relevant for building automated testing agents, browser-based task execution and RPA-adjacent workflows where traditional scripting approaches are brittle.
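The perceive, plan and execute cycle behind this kind of exploration can be sketched as a small loop. Everything here is a stand-in: `FakeGui` replaces a real interface, and `scripted_policy` replaces the model call that would map a screenshot to the next action. None of these names come from Z.ai's tooling.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    kind: str  # "click" or "done" in this sketch
    target: str = ""


@dataclass
class FakeGui:
    """Tiny in-memory stand-in for a real GUI with two screens."""
    screen: str = "login"
    log: list = field(default_factory=list)

    def screenshot(self) -> str:
        # A real agent would capture pixels; here the screen name is the observation.
        return self.screen

    def apply(self, action: Action) -> None:
        self.log.append(action)
        if self.screen == "login" and action.kind == "click" and action.target == "sign_in":
            self.screen = "home"


def scripted_policy(observation: str) -> Action:
    """Placeholder for the model: choose the next action from the observation."""
    if observation == "login":
        return Action("click", target="sign_in")
    return Action("done")


def run_agent(gui: FakeGui, policy: Callable[[str], Action], max_steps: int = 10) -> int:
    """Run perceive -> plan -> execute until the policy says done or the step budget runs out."""
    for step in range(max_steps):
        observation = gui.screenshot()  # perceive
        action = policy(observation)    # plan
        if action.kind == "done":
            return step
        gui.apply(action)               # execute
    return max_steps


gui = FakeGui()
steps = run_agent(gui, scripted_policy)
print(steps, gui.screen)  # prints "1 home"
```

The step budget is a deliberate design choice: an exploring agent can loop on an unfamiliar screen, so a hard cap keeps a stuck run from continuing indefinitely.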

GLM-5V-Turbo in the context of Z.ai’s model family

GLM-5V-Turbo sits within a broader ecosystem of models from Z.ai. The company’s recent GLM-4.6V series, released in late 2025, introduced native function calling in a vision-language model and demonstrated strong benchmark performance across more than 20 evaluations, including MathVista, WebVoyager and ChartQA. The GLM-4.5 series, released earlier that year, focused on reasoning modes and agentic behaviour in text-heavy contexts.

GLM-5V-Turbo is positioned as the agent-first multimodal model in this lineup. While GLM-4.6V extended the family’s visual reasoning and tool use, GLM-5V-Turbo is built around the premise that the model itself is an agent, not just a model that can be used inside an agent pipeline. The distinction matters because it affects how the model is trained, how it handles uncertainty across action sequences and how it interprets visual input as part of an ongoing task rather than as an isolated query.

Who is this model for?

GLM-5V-Turbo is most relevant for developers and teams building systems that require visual grounding combined with multi-step task execution. This includes applications in automated frontend development, document intelligence, visual QA systems, browser agents and any pipeline where a model needs to both perceive a visual environment and take meaningful action based on what it sees.

It is available via API with support for reasoning token output, making it straightforward to integrate into applications that benefit from transparency about how the model arrived at a given response or action. Providers on OpenRouter offer access with standard API compatibility, and the model supports continuation of reasoning across multi-turn conversations when reasoning context is preserved.
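Preserving reasoning context across turns usually means passing the assistant's reasoning blocks back unchanged in the next request. The sketch below assumes the response message exposes them under a `reasoning_details` field; that field name and the mocked response shape are assumptions to verify against the provider's documentation.

```python
def append_assistant_turn(history: list, response: dict) -> list:
    """Return a new history with the assistant reply added and its reasoning blocks kept."""
    message = response["choices"][0]["message"]
    turn = {"role": "assistant", "content": message.get("content") or ""}
    # Assumed field name; copy the blocks through unmodified when present.
    if message.get("reasoning_details"):
        turn["reasoning_details"] = message["reasoning_details"]
    return history + [turn]


# Mocked response; the reasoning block shape is illustrative only.
mock_response = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "content": "Click the Sign in button.",
                "reasoning_details": [
                    {"type": "reasoning.text", "text": "The form is already filled in."}
                ],
            }
        }
    ]
}

history = [{"role": "user", "content": "What should I do next?"}]
history = append_assistant_turn(history, mock_response)
history.append({"role": "user", "content": "Done. What now?"})
print([m["role"] for m in history])
```

Returning a new list rather than mutating the input keeps earlier snapshots of the conversation intact, which helps when replaying or branching an agent run.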

A model built around the full agent loop

What sets GLM-5V-Turbo apart is not any single feature but the consistency of its design philosophy. Native multimodal fusion, diverse RL training, agentic pretraining and an expanded visual toolchain all point toward the same goal: a model that can operate as a coherent agent in visually rich environments rather than a vision-augmented text model. As agentic AI systems become more central to practical deployments, models designed with this loop in mind from the start — rather than retrofitted — are likely to hold a structural advantage in reliability and generalisation.