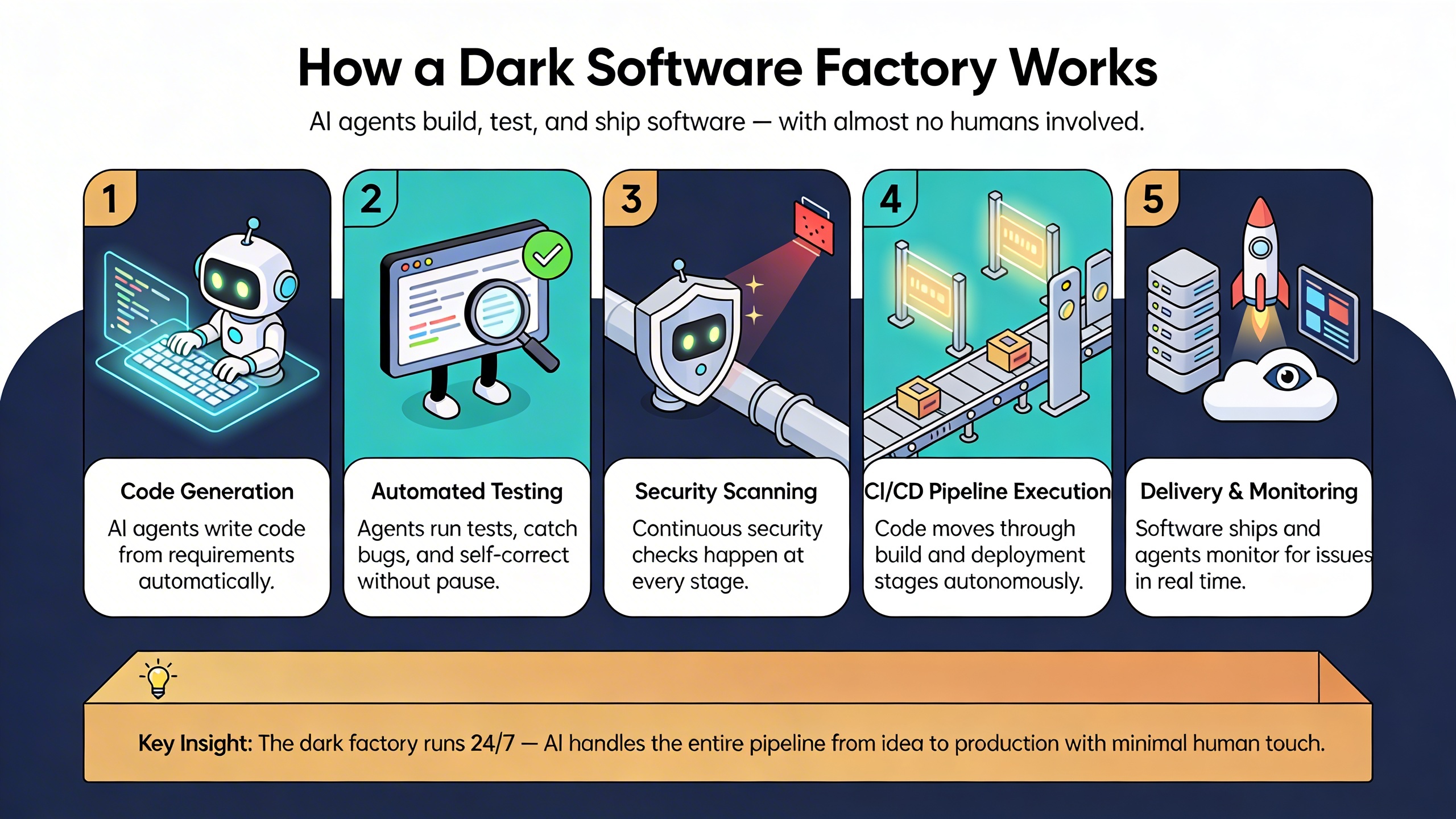

Dark software factories describe a new model of software delivery in which AI agents handle most of the implementation work. They break down tasks, generate code, run tests, validate outcomes and prepare releases with minimal direct coding by humans. The idea comes from automated manufacturing, where production continues without people on the factory floor. In software, the lights are not literally off, but the workflow changes in a similar way. Humans define intent, constraints and quality thresholds, while agents execute the bulk of the delivery process.

This is not just a more capable version of coding assistants. Earlier tools helped developers autocomplete functions, draft boilerplate and speed up repetitive work. Dark software factories go further. They turn software delivery into an orchestrated system of planning agents, coding agents, evaluation agents, test harnesses, policy checks and deployment automation. The real shift is not that AI writes more code. The shift is that software creation becomes a managed production system.

What a dark software factory actually is

A dark software factory is an autonomous software delivery environment where AI agents build and refine software from specifications and scenarios instead of relying on manual coding and line-by-line human review. Humans stay in the loop, but their role moves upstream and outward. They define business intent, approve stage gates, curate knowledge, set policies and review outcomes rather than touching every implementation detail.

That model depends on one core principle. The factory must be engineered, not improvised. A team does not become autonomous because it buys a coding tool. It becomes autonomous by designing a system where agents can work safely, repeatably and auditably across planning, development, testing and operations.

Several recent examples point in this direction. Enterprises report major time savings in large migrations, agent-driven pull request generation at scale and meaningful productivity gains in legacy modernization. Experimental teams have also shown that serious applications can be built through non-interactive workflows where specifications and scenarios drive autonomous iterations. Whether every claim will generalize is still open, but the direction is clear. Autonomous delivery is moving from novelty to operating model.

Why now

Three trends have converged.

- Models improved. Large language models have become more reliable at following long-horizon instructions and handling multi-step coding tasks.

- Inference costs fell. Running agents continuously is becoming more economically viable.

- Harness design matured. Teams now understand that orchestration, memory, scenario testing and independent evaluation matter more than raw model access.

The result is a practical threshold. It is now possible to build systems where agents do more than assist. They operate as coordinated workers inside a software production environment.

The operating model shifts from coding to intent

The most important concept in dark software factories is not prompting. It is intent. If agents are going to implement software autonomously, teams need to express what they want in precise, testable and operationally useful terms.

This includes:

- business goals

- functional requirements

- constraints and trade-offs

- edge cases

- security expectations

- acceptance criteria

- deployment and rollback rules

That is why intent thinking becomes a critical capability. In a traditional setup, ambiguity can be resolved by developers during implementation. In a dark factory, ambiguity spreads quickly because agents will fill gaps with assumptions. If the specification is weak, the output may still look polished while being wrong in important ways.
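One way to make intent testable is to capture it as structured data and flag empty sections before any agent starts work. The sketch below is a minimal illustration, not a standard format: `IntentSpec`, its field names and `missing_sections` are hypothetical names chosen to mirror the elements listed above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class IntentSpec:
    """Hypothetical container mirroring the intent elements listed above."""
    business_goal: str = ""
    functional_requirements: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    security_expectations: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)
    rollback_rules: list[str] = field(default_factory=list)


def missing_sections(spec: IntentSpec) -> list[str]:
    """Return the names of empty sections, i.e. gaps an agent would fill with assumptions."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name)]


# Illustrative spec with several sections left blank.
spec = IntentSpec(
    business_goal="Let customers download invoices as PDF",
    functional_requirements=["Export a single invoice as PDF"],
    acceptance_criteria=["Exported total matches the invoice total"],
)
gaps = missing_sections(spec)
print(gaps)
# ['constraints', 'edge_cases', 'security_expectations', 'rollback_rules']
```

A harness could refuse to start a work unit while `gaps` is non-empty, which turns ambiguity into an explicit question for a human instead of a silent assumption.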

This changes the software lifecycle. Instead of long cycles centered on human implementation, delivery compresses into shorter autonomous units. Some teams describe these units as bolts rather than sprints. Humans set direction, clarify when needed and approve stage transitions. Agents do the construction work between those gates.

Harness engineering is the real platform layer

If intent is the input, harness engineering is the production system. The harness is the combination of rules, workflows, memory, tools, tests and evaluation logic that tells agents how to behave. It is the factory manual written for machines.

A strong harness usually includes:

- planning agents that turn goals into structured specs and tasks

- generation agents that write code, infrastructure changes and tests

- evaluation agents that independently assess behavior and quality

- tool hooks for repositories, CI pipelines, package managers and deployment systems

- memory layers that preserve lessons, patterns and prior decisions across sessions

- policy gates for security, architecture and compliance checks

One important lesson from early autonomous coding systems is that the generator should not be the judge. Agents are often overconfident about their own output. Separating planning, implementation and evaluation reduces this failure mode. Another lesson is that fresh context often beats long compressed conversations. When tasks run for hours or days, reliable progress depends on externalized state in documents, repositories and memory stores rather than in a model session alone.
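The separation of generator and judge can be sketched as a small loop. This is an illustrative outline under stated assumptions: `run_task`, `Verdict`, `toy_generate` and `toy_evaluate` are hypothetical names, and the two toy functions stand in for model-backed agents. The `log` list represents externalized state that would normally live in a repository or memory store rather than in a model session.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    passed: bool
    feedback: str


def run_task(
    task: str,
    generate: Callable[[str, Optional[str]], str],
    evaluate: Callable[[str, str], Verdict],
    max_attempts: int = 3,
):
    """Pass generator output to an independent evaluator until it is accepted."""
    log = []          # externalized state, kept outside any single agent session
    feedback = None
    for attempt in range(1, max_attempts + 1):
        artifact = generate(task, feedback)   # the generator never grades itself
        verdict = evaluate(task, artifact)    # a separate component decides
        log.append({"attempt": attempt, "passed": verdict.passed,
                    "feedback": verdict.feedback})
        if verdict.passed:
            return artifact, log
        feedback = verdict.feedback
    return None, log


# Toy stand-ins for model-backed agents (assumptions for illustration only).
def toy_generate(task: str, feedback: Optional[str]) -> str:
    # The first attempt omits error handling; the retry reacts to feedback.
    return "parse(x) with error handling" if feedback else "parse(x)"


def toy_evaluate(task: str, artifact: str) -> Verdict:
    ok = "error handling" in artifact
    return Verdict(ok, "" if ok else "missing error handling")


artifact, log = run_task("write parser", toy_generate, toy_evaluate)
print(artifact, len(log))
```

Because the evaluator only sees the task and the artifact, it cannot inherit the generator's confidence in its own work.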

Scenario testing replaces line-by-line review

The hardest question around dark software factories is simple. If humans do not inspect every line of code, how do they know the system works?

The most promising answer is scenario-based validation. Instead of trusting unit tests generated by the same agent that wrote the code, teams define realistic end-to-end scenarios based on user goals and business outcomes. These scenarios can be kept outside the codebase visible to coding agents, acting like holdout sets in model evaluation.

This changes validation from syntax-level confidence to behavior-level evidence. The question becomes whether the software satisfies real user trajectories under expected and unexpected conditions.

That approach is especially useful when the product itself has agentic or probabilistic behavior. In those cases, a binary pass or fail test suite is not always enough. Teams may need satisfaction metrics, behavioral scoring and repeated scenario execution across many states and edge cases.
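A satisfaction metric of this kind can be as simple as the fraction of repeated runs in which a scenario's outcome check holds. The following is a rough sketch: `satisfaction_rate`, `flaky_assistant`, `refund_scenario` and the 0.8 release threshold are all hypothetical, and the simulated 90 percent success rate is an assumption made purely for illustration.

```python
import random
from typing import Callable


def satisfaction_rate(
    scenario: Callable[[str], bool],
    system: Callable[[random.Random], str],
    runs: int,
    seed: int = 0,
) -> float:
    """Run the system repeatedly and return the share of runs that satisfy the scenario."""
    rng = random.Random(seed)  # seeded so a result can be reproduced
    passed = sum(1 for _ in range(runs) if scenario(system(rng)))
    return passed / runs


def flaky_assistant(rng: random.Random) -> str:
    # Hypothetical probabilistic system: helpful in roughly 90% of runs.
    return "refund issued" if rng.random() < 0.9 else "sorry, I cannot help"


def refund_scenario(reply: str) -> bool:
    # Outcome check based on the user's goal, not on implementation details.
    return "refund" in reply


rate = satisfaction_rate(refund_scenario, flaky_assistant, runs=200)
release_ok = rate >= 0.8  # hypothetical quality threshold set by humans
print(f"satisfaction={rate:.2f} release_ok={release_ok}")
```

The threshold is where human intent re-enters: people decide what level of satisfaction is acceptable, and the harness measures against it.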

Digital twins make high-volume testing practical

One of the more important ideas emerging around dark software factories is the use of digital twin environments. These are behavioral replicas of external services that software depends on. Instead of testing directly against live SaaS products or partner systems, teams create simulated clones of APIs and common edge cases.

This allows them to:

- test at scale without rate limits

- simulate rare failure modes safely

- avoid unnecessary API costs

- run thousands of scenarios continuously

- reproduce behavior consistently

For autonomous delivery, this matters because agents need rich feedback loops. If every validation step depends on fragile external services, the factory becomes slow and noisy. High-fidelity digital twins give the harness a controlled environment for stress testing, regression testing and exploratory validation.
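At its simplest, a digital twin is an in-memory stand-in that mimics a service's responses and can be told to fail on demand. The sketch below assumes a hypothetical payments API: `PaymentTwin`, its response shape and `charge_with_retry` are invented for illustration and do not describe any real provider.

```python
class PaymentTwin:
    """Hypothetical in-memory stand-in for an external payments API."""

    def __init__(self, fail_on_calls=()):
        self.fail_on_calls = set(fail_on_calls)  # call numbers that should time out
        self.calls = 0
        self.charges = {}

    def charge(self, customer_id: str, amount_cents: int) -> dict:
        self.calls += 1
        if self.calls in self.fail_on_calls:
            # Rare failure mode, reproduced deterministically and at no cost.
            raise TimeoutError("simulated upstream timeout")
        if amount_cents <= 0:
            return {"status": 400, "error": "invalid_amount"}
        charge_id = f"ch_{len(self.charges) + 1}"
        self.charges[charge_id] = (customer_id, amount_cents)
        return {"status": 200, "id": charge_id}


def charge_with_retry(api, customer_id: str, amount_cents: int, attempts: int = 2) -> dict:
    """Code under test: retry on timeout, re-raise after the last attempt."""
    for attempt in range(attempts):
        try:
            return api.charge(customer_id, amount_cents)
        except TimeoutError:
            if attempt == attempts - 1:
                raise


# Scenario: the first call times out, the retry succeeds.
twin = PaymentTwin(fail_on_calls={1})
result = charge_with_retry(twin, "cust_42", 1500)
print(result, twin.calls)
# {'status': 200, 'id': 'ch_1'} 2
```

Because the twin has no rate limits or network dependency, the same scenario can be replayed thousands of times with identical results.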

Why enterprises care

Dark software factories matter because they can change software economics, not only developer workflows.

Legacy modernization becomes more viable

Many organizations are constrained by old systems that are expensive to maintain and difficult to replace. If autonomous agents can accelerate migrations, code translation and system decomposition, programs that were delayed for cost reasons become more realistic.

Custom software becomes more attractive

When delivery cost and time fall, the balance between building and buying shifts. Some capabilities that were once too expensive to develop in-house may become practical again, especially when they create operational or competitive differentiation.

Competitive cycles compress

If one company can ship validated changes in days instead of quarters, rivals will feel pressure quickly. Autonomous delivery increases the cost of delay across the market.

Advantage moves away from raw coding capacity

As code generation becomes more accessible, the differentiators change. Domain knowledge, proprietary data, high-quality specifications, reliable governance and strong distribution matter more than the ability to manually produce code faster.

The security problem gets bigger before it gets smaller

Dark software factories increase speed, but they also increase risk if they are poorly governed. AI-generated code can reproduce familiar vulnerabilities and introduce newer ones tied to agent behavior and software supply chains.

Common risks include:

- missing input validation and injection flaws

- broken authentication and access control

- hard-coded secrets

- dependency sprawl from unnecessary packages

- outdated libraries with known vulnerabilities

- hallucinated dependencies that create supply chain exposure

- architectural drift where code looks correct but violates security assumptions

This is a key point for any serious discussion of autonomous software delivery. AI does not magically produce secure systems by default. It often reproduces the statistical habits of its training data. If the harness does not enforce security constraints, the factory may automate insecure patterns at scale.
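Two of the risks listed above, hallucinated dependencies and hard-coded secrets, lend themselves to simple automated checks inside the harness. The sketch below is deliberately naive and is not a substitute for real scanners: the `APPROVED` allowlist, the package name `fastjsonx` and the regular expression are assumptions made for illustration.

```python
import re

# Hypothetical allowlist of packages the organization has vetted.
APPROVED = {"requests", "pydantic", "sqlalchemy"}


def unapproved_dependencies(requirements_text: str, approved: set) -> list:
    """Return declared package names that are not on the allowlist."""
    names = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Keep only the package name, cutting at version specifiers or extras.
        name = re.split(r"[<>=!~\[; ]", line, maxsplit=1)[0].lower()
        if name not in approved:
            names.append(name)
    return names


# Very rough pattern for string literals assigned to secret-looking names.
SECRET_PATTERN = re.compile(
    r"""(?i)(api_key|password|secret|token)\s*=\s*["'][^"']+["']"""
)


def find_hardcoded_secrets(source: str) -> list:
    """Return the variable names that appear to hold literal secrets."""
    return [m.group(1) for m in SECRET_PATTERN.finditer(source)]


reqs = "requests==2.31.0\nfastjsonx>=1.0  # suggested by agent\n\npydantic\n"
flagged = unapproved_dependencies(reqs, APPROVED)
secrets = find_hardcoded_secrets('API_KEY = "sk-live-123"\nname = "x"')
print(flagged, secrets)
# ['fastjsonx'] ['API_KEY']
```

A flagged package is not necessarily malicious, but routing it to a review step keeps an agent from silently pulling an unvetted or nonexistent dependency into a build.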

Governance becomes part of the factory, not an afterthought

For dark software factories to work in enterprise settings, governance must be built into the platform itself. That means the system should make compliant behavior the default path.

Useful governance patterns include:

- role-based access control for sensitive actions and resources

- policy as code for deployment, architecture and security rules

- service scorecards that track quality and compliance signals

- approval workflows for exceptions and high-risk releases

- audit logs for all agent actions, decisions and tool use

- golden path templates that start projects with approved defaults

This is where internal developer portals and platform engineering become highly relevant. A dark software factory needs a central control plane where specifications, standards, documentation, policies and workflow state come together. Without that, teams risk creating autonomous chaos instead of autonomous delivery.
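Policy as code means that release rules are expressed as executable checks rather than wiki pages. The sketch below shows the idea in plain Python under stated assumptions: the `Change` fields, the two rules in `POLICIES` and the `evaluate_change` function are hypothetical, and a production setup would more likely use a dedicated policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    service: str
    risk: str                 # "low" or "high" (hypothetical classification)
    has_rollback_plan: bool
    human_approved: bool


# Each policy is a name plus a predicate that must hold for the change.
POLICIES = [
    ("rollback plan required", lambda c: c.has_rollback_plan),
    ("high-risk changes need human approval",
     lambda c: c.risk != "high" or c.human_approved),
]


def evaluate_change(change: Change) -> dict:
    """Check a change against every policy and report all violations."""
    violations = [name for name, rule in POLICIES if not rule(change)]
    return {"allowed": not violations, "violations": violations}


decision = evaluate_change(
    Change(service="billing", risk="high", has_rollback_plan=True, human_approved=False)
)
print(decision)
# {'allowed': False, 'violations': ['high-risk changes need human approval']}
```

Keeping the rules in version control alongside the code means that changes to policy are themselves reviewed, logged and reversible.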

Trust is engineered through layered verification

The strongest version of this model is not trust by optimism. It is trust through instrumentation and layered checks.

A robust dark software factory should combine:

- scenario-based testing

- static analysis

- architecture conformance checks

- security scanning

- behavioral regression suites

- red team agents probing edge cases

- staged rollouts and rollback mechanisms

- production telemetry feeding back into the harness

In other words, human code review is not replaced by blind faith. It is replaced by a broader evidence system. Every action should be traceable, every quality gate explicit and every failure turned into a learning signal that updates the harness.
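The idea of explicit gates with a traceable record can be sketched as a runner that executes checks in order, stops at the first failure and keeps an audit trail. The names here (`run_gates`, the gate labels and the canned results) are hypothetical, and each lambda stands in for a real tool such as a static analyzer or scenario suite.

```python
import json
from datetime import datetime, timezone


def run_gates(release_id: str, gates: list):
    """Run quality gates in order, stop at the first failure, return (approved, trail)."""
    trail = []
    for name, check in gates:
        passed, detail = check()
        trail.append({
            "release": release_id,
            "gate": name,
            "passed": passed,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not passed:
            break  # later gates are not run once evidence of failure exists
    # Approved only if every gate ran and every gate passed.
    approved = len(trail) == len(gates) and all(e["passed"] for e in trail)
    return approved, trail


# Canned gate results for illustration; real gates would invoke tools.
gates = [
    ("static analysis", lambda: (True, "0 findings")),
    ("scenario suite", lambda: (False, "checkout scenario failed")),
    ("staged rollout", lambda: (True, "not reached in this run")),
]
approved, trail = run_gates("rel-1", gates)
for entry in trail:
    print(json.dumps(entry))
print("approved:", approved)
```

The failed entry in the trail is the learning signal: it can be fed back into the harness as a new scenario or rule so that the same defect is caught earlier next time.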

Roles will change across software teams

Dark software factories do not eliminate engineering work. They reorganize it.

The most valuable roles are likely to shift toward:

- intent designers who can translate business goals into rigorous specifications

- harness engineers who build and refine autonomous workflows

- platform engineers who manage the delivery environment and policy layers

- security specialists who adapt controls for AI-generated systems

- domain experts who define what correctness means in real business contexts

That means reskilling is not optional. Teams will need stronger capabilities in systems thinking, architecture, testing, governance and knowledge codification. The less institutional knowledge lives only in chat threads and human memory, the better the factory performs.

What will hold companies back

The barriers are usually not model access. They are organizational readiness and system quality.

The most common blockers are:

- poorly documented systems

- messy repositories and unreliable CI pipelines

- unclear ownership and approval structures

- weak architecture standards

- insufficient test coverage and observability

- cultural resistance to changing engineering roles

Autonomy amplifies whatever already exists. In a disciplined engineering environment, it can accelerate output. In a chaotic one, it can scale defects, inconsistency and security debt much faster.

What the near future looks like

Dark software factories are unlikely to replace all software development overnight. Critical systems, regulated domains and complex product work will still require substantial human judgment. But the center of gravity is moving. More software will be specified in natural language and structured documents, assembled by agents, validated through scenarios and governed through platform controls.

The teams that move first will not win because they have exclusive model access. They will win because they build better harnesses, codify more knowledge, define clearer intent and learn faster from each delivery cycle.

That is the real significance of dark software factories. They turn software delivery into a continuously improving system. Code becomes one output of that system, not the main place where engineering value lives.

The practical takeaway

Dark software factories are not science fiction and not a universal template. They are an emerging operating model for AI-driven software engineering. The strongest implementations combine autonomous coding with scenario testing, digital twins, policy enforcement, secure platform design and precise business intent.

Organizations that want to explore this model should start with one realistic question. Can we express what we want clearly enough, and can we verify outcomes rigorously enough, to let agents do the rest? If the answer becomes yes, software delivery will look very different from the process most teams still use today.